yi-01-ai committed · Commit 4623fa6 · 1 Parent(s): 757055b

Auto Sync from git://github.com/01-ai/Yi.git/commit/ddfb3e80b169e48bd700177f4f896fe23e587f5a

README.md CHANGED

|

@@ -82,7 +82,7 @@ pipeline_tag: text-generation

|

|

| 82 |

- [Quick start](#quick-start)

|

| 83 |

- [Choose your path](#choose-your-parth)

|

| 84 |

- [pip](#pip)

|

| 85 |

-

- [llama.cpp](

|

| 86 |

- [Web demo](#web-demo)

|

| 87 |

- [Fine tune](#fine-tune)

|

| 88 |

- [Quantization](#quantization)

|

|

@@ -265,12 +265,12 @@ sequence length and can be extended to 32K during inference time.

|

|

| 265 |

- [Quick start](#quick-start)

|

| 266 |

- [Choose your path](#choose-your-parth)

|

| 267 |

- [pip](#pip)

|

| 268 |

-

- [llama.cpp](

|

| 269 |

- [Web demo](#web-demo)

|

| 270 |

- [Fine tune](#fine-tune)

|

| 271 |

- [Quantization](#quantization)

|

| 272 |

-

- [Deployment](

|

| 273 |

-

- [Learning hub](

|

| 274 |

|

| 275 |

## Quick start

|

| 276 |

|

|

@@ -280,7 +280,7 @@ Getting up and running with Yi models is simple with multiple choices available.

|

|

| 280 |

|

| 281 |

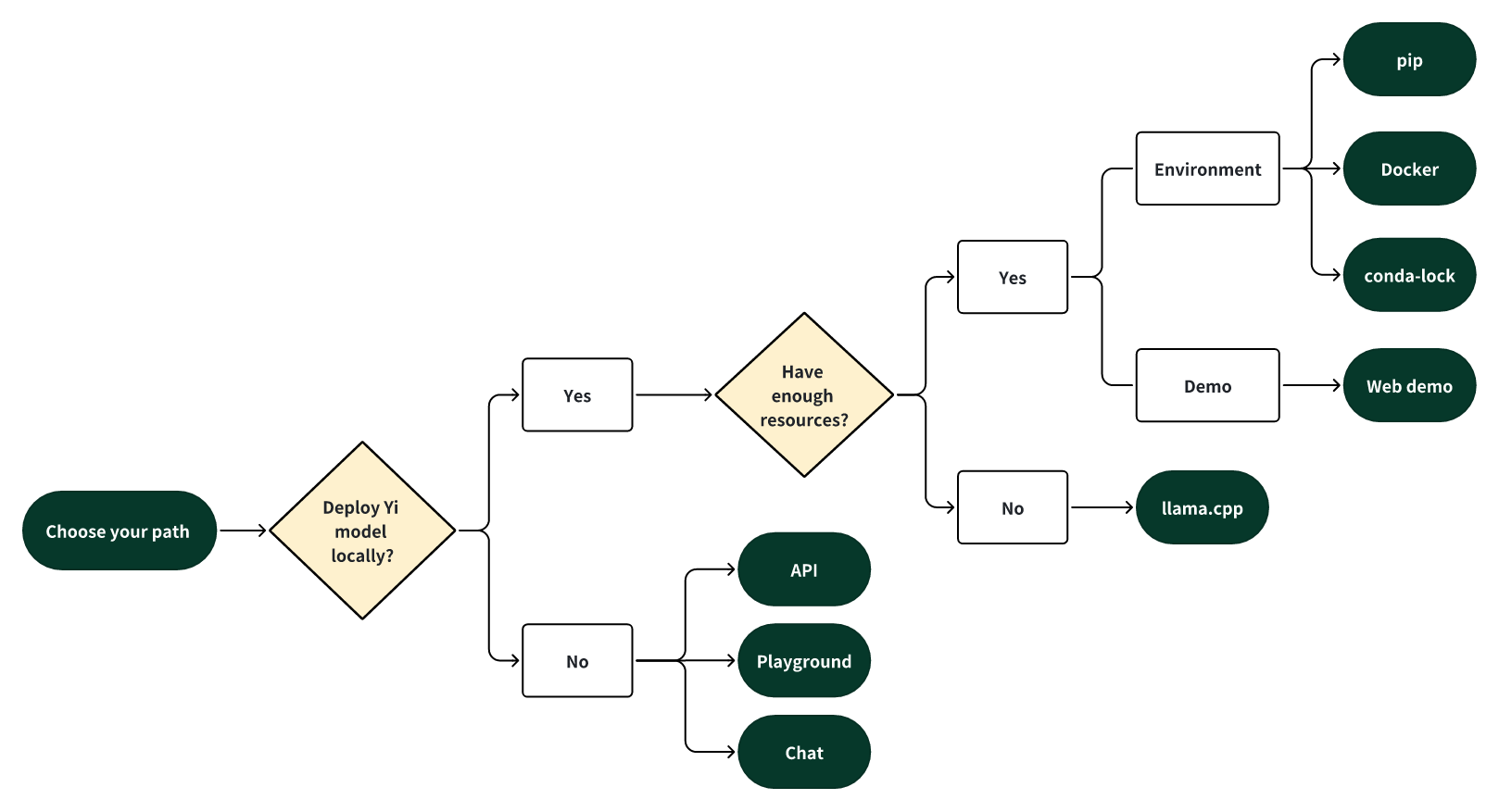

Select one of the following paths to begin your journey with Yi!

|

| 282 |

|

| 283 |

-

|

| 284 |

|

| 285 |

#### 🎯 Deploy Yi locally

|

| 286 |

|

|

@@ -288,7 +288,7 @@ If you prefer to deploy Yi models locally,

|

|

| 288 |

|

| 289 |

- 🙋♀️ and you have **sufficient** resources (for example, NVIDIA A800 80GB), you can choose one of the following methods:

|

| 290 |

- [pip](#pip)

|

| 291 |

-

- [Docker](

|

| 292 |

- [conda-lock](https://github.com/01-ai/Yi/blob/main/docs/README_legacy.md#12-local-development-environment)

|

| 293 |

|

| 294 |

- 🙋♀️ and you have **limited** resources (for example, a MacBook Pro), you can use [llama.cpp](#quick-start---llamacpp)

|

|

@@ -427,7 +427,7 @@ Then you can see an output similar to the one below. 🥳

|

|

| 427 |

### Quick start - Docker

|

| 428 |

<details>

|

| 429 |

<summary> Run Yi-34B-chat locally with Docker: a step-by-step guide. ⬇️</summary>

|

| 430 |

-

<br>This tutorial guides you through every step of running <strong>Yi-34B-Chat on an A800 GPU</strong> locally and then performing inference.

|

| 431 |

<h4>Step 0: Prerequisites</h4>

|

| 432 |

<p>Make sure you've installed <a href="https://docs.docker.com/engine/install/?open_in_browser=true">Docker</a> and <a href="https://docs.nvidia.com/datacenter/cloud-native/container-toolkit/latest/install-guide.html">nvidia-container-toolkit</a>.</p>

|

| 433 |

|

|

@@ -536,9 +536,10 @@ Now you have successfully asked a question to the Yi model and got an answer!

|

|

| 536 |

|

| 537 |

##### Method 2: Perform inference in web

|

| 538 |

|

| 539 |

-

1. To initialize a lightweight and swift chatbot,

|

| 540 |

|

| 541 |

```bash

|

|

|

|

| 542 |

./server --ctx-size 2048 --host 0.0.0.0 --n-gpu-layers 64 --model /Users/yu/yi-chat-6B-GGUF/yi-chat-6b.Q2_K.gguf

|

| 543 |

```

|

| 544 |

|

|

@@ -576,12 +577,12 @@ Now you have successfully asked a question to the Yi model and got an answer!

|

|

| 576 |

|

| 577 |

2. To access the chatbot interface, open your web browser and enter `http://0.0.0.0:8080` into the address bar.

|

| 578 |

|

| 579 |

-

|

| 580 |

|

| 581 |

|

| 582 |

3. Enter a question, such as "How do you feed your pet fox? Please answer this question in 6 simple steps" into the prompt window, and you will receive a corresponding answer.

|

| 583 |

|

| 584 |

-

|

| 585 |

|

| 586 |

</ul>

|

| 587 |

</details>

|

|

@@ -602,7 +603,7 @@ python demo/web_demo.py -c <your-model-path>

|

|

| 602 |

|

| 603 |

You can access the web UI by entering the address provided in the console into your browser.

|

| 604 |

|

| 605 |

-

|

| 606 |

|

| 607 |

### Finetuning

|

| 608 |

|

|

@@ -1010,7 +1011,7 @@ If you're seeking to explore the diverse capabilities within Yi's thriving famil

|

|

| 1010 |

|

| 1011 |

Yi-34B-Chat model demonstrates exceptional performance, ranking first among all existing open-source models in the benchmarks including MMLU, CMMLU, BBH, GSM8k, and more.

|

| 1012 |

|

| 1013 |

-

|

| 1031 |

|

| 1032 |

<details>

|

| 1033 |

<summary> Evaluation methods. ⬇️</summary>

|

|

|

|

| 82 |

- [Quick start](#quick-start)

|

| 83 |

- [Choose your path](#choose-your-parth)

|

| 84 |

- [pip](#pip)

|

| 85 |

+

- [llama.cpp](#quick-start---llamacpp)

|

| 86 |

- [Web demo](#web-demo)

|

| 87 |

- [Fine tune](#fine-tune)

|

| 88 |

- [Quantization](#quantization)

|

|

|

|

| 265 |

- [Quick start](#quick-start)

|

| 266 |

- [Choose your path](#choose-your-parth)

|

| 267 |

- [pip](#pip)

|

| 268 |

+

- [llama.cpp](#quick-start---llamacpp)

|

| 269 |

- [Web demo](#web-demo)

|

| 270 |

- [Fine tune](#fine-tune)

|

| 271 |

- [Quantization](#quantization)

|

| 272 |

+

- [Deployment](#deployment)

|

| 273 |

+

- [Learning hub](#learning-hub)

|

| 274 |

|

| 275 |

## Quick start

|

| 276 |

|

|

|

|

| 280 |

|

| 281 |

Select one of the following paths to begin your journey with Yi!

|

| 282 |

|

| 283 |

+

|

| 284 |

|

| 285 |

#### 🎯 Deploy Yi locally

|

| 286 |

|

|

|

|

| 288 |

|

| 289 |

- 🙋♀️ and you have **sufficient** resources (for example, NVIDIA A800 80GB), you can choose one of the following methods:

|

| 290 |

- [pip](#pip)

|

| 291 |

+

- [Docker](#quick-start---docker)

|

| 292 |

- [conda-lock](https://github.com/01-ai/Yi/blob/main/docs/README_legacy.md#12-local-development-environment)

|

| 293 |

|

| 294 |

- 🙋♀️ and you have **limited** resources (for example, a MacBook Pro), you can use [llama.cpp](#quick-start---llamacpp)

|

|

|

|

| 427 |

### Quick start - Docker

|

| 428 |

<details>

|

| 429 |

<summary> Run Yi-34B-chat locally with Docker: a step-by-step guide. ⬇️</summary>

|

| 430 |

+

<br>This tutorial guides you through every step of running <strong>Yi-34B-Chat on an A800 GPU</strong> or <strong>4*4090</strong> locally and then performing inference.

|

| 431 |

<h4>Step 0: Prerequisites</h4>

|

| 432 |

<p>Make sure you've installed <a href="https://docs.docker.com/engine/install/?open_in_browser=true">Docker</a> and <a href="https://docs.nvidia.com/datacenter/cloud-native/container-toolkit/latest/install-guide.html">nvidia-container-toolkit</a>.</p>

|

| 433 |

|

|

|

|

| 536 |

|

| 537 |

##### Method 2: Perform inference in web

|

| 538 |

|

| 539 |

+

1. To initialize a lightweight and swift chatbot, run the following command.

|

| 540 |

|

| 541 |

```bash

|

| 542 |

+

cd llama.cpp

|

| 543 |

./server --ctx-size 2048 --host 0.0.0.0 --n-gpu-layers 64 --model /Users/yu/yi-chat-6B-GGUF/yi-chat-6b.Q2_K.gguf

|

| 544 |

```

|

| 545 |

|

|

|

|

| 577 |

|

| 578 |

2. To access the chatbot interface, open your web browser and enter `http://0.0.0.0:8080` into the address bar.

|

| 579 |

|

| 580 |

+

|

| 581 |

|

| 582 |

|

| 583 |

3. Enter a question, such as "How do you feed your pet fox? Please answer this question in 6 simple steps" into the prompt window, and you will receive a corresponding answer.

|

| 584 |

|

| 585 |

+

|

| 586 |

|

| 587 |

</ul>

|

| 588 |

</details>

|

|

|

|

| 603 |

|

| 604 |

You can access the web UI by entering the address provided in the console into your browser.

|

| 605 |

|

| 606 |

+

|

| 607 |

|

| 608 |

### Finetuning

|

| 609 |

|

|

|

|

| 1011 |

|

| 1012 |

Yi-34B-Chat model demonstrates exceptional performance, ranking first among all existing open-source models in the benchmarks including MMLU, CMMLU, BBH, GSM8k, and more.

|

| 1013 |

|

| 1014 |

+

|

| 1015 |

|

| 1016 |

<details>

|

| 1017 |

<summary> Evaluation methods and challenges. ⬇️ </summary>

|

|

|

|

| 1028 |

|

| 1029 |

The Yi-34B and Yi-34B-200K models stand out as the top performers among open-source models, especially excelling in MMLU, CMML, common-sense reasoning, reading comprehension, and more.

|

| 1030 |

|

| 1031 |

+

|

| 1032 |

|

| 1033 |

<details>

|

| 1034 |

<summary> Evaluation methods. ⬇️</summary>

|
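Most hunks in this commit repair in-page links in the README table of contents, for example `[Docker](#quick-start---docker)` and `[llama.cpp](#quick-start---llamacpp)`. A check along the following lines could catch such links before they are synced. This is a minimal sketch, not part of the Yi repository: `slugify` and `broken_anchor_links` are hypothetical helpers, the slug rule only approximates GitHub-style heading anchors (lowercase, punctuation dropped, spaces turned into hyphens), and the sample text assumes headings named "Quick start - llama.cpp" and "Choose your path" exist in the README.

```python
import re


def slugify(heading: str) -> str:
    """Approximate a GitHub-style heading anchor (assumed rule, not an official API)."""
    text = heading.strip().lower()
    # Drop everything except word characters, hyphens, and spaces.
    text = re.sub(r"[^\w\- ]", "", text)
    # Each space becomes one hyphen, so " - " turns into "---".
    return text.replace(" ", "-")


def broken_anchor_links(markdown: str) -> list[str]:
    """Return in-page link targets that match no heading in the same text."""
    headings = re.findall(r"^#{1,6}\s+(.+?)\s*$", markdown, flags=re.MULTILINE)
    anchors = {slugify(h) for h in headings}
    targets = re.findall(r"\[[^\]]*\]\(#([^)]*)\)", markdown)
    return [t for t in targets if t not in anchors]


# Illustrative sample: links are taken from the diff, headings are assumed.
sample = """
- [Docker](#quick-start---docker)
- [llama.cpp](#quick-start---llamacpp)
- [Choose your path](#choose-your-parth)

### Quick start - Docker
### Quick start - llama.cpp
### Choose your path
"""

if __name__ == "__main__":
    for target in broken_anchor_links(sample):
        print(f"unresolved anchor: #{target}")
```

Under these assumptions, the two links added by this commit resolve, while `#choose-your-parth`, which the commit leaves unchanged, would still be reported.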