---
license: apache-2.0
datasets:
- AIDC-AI/Ovis-dataset
library_name: transformers
tags:
- MLLM
pipeline_tag: image-text-to-text
---

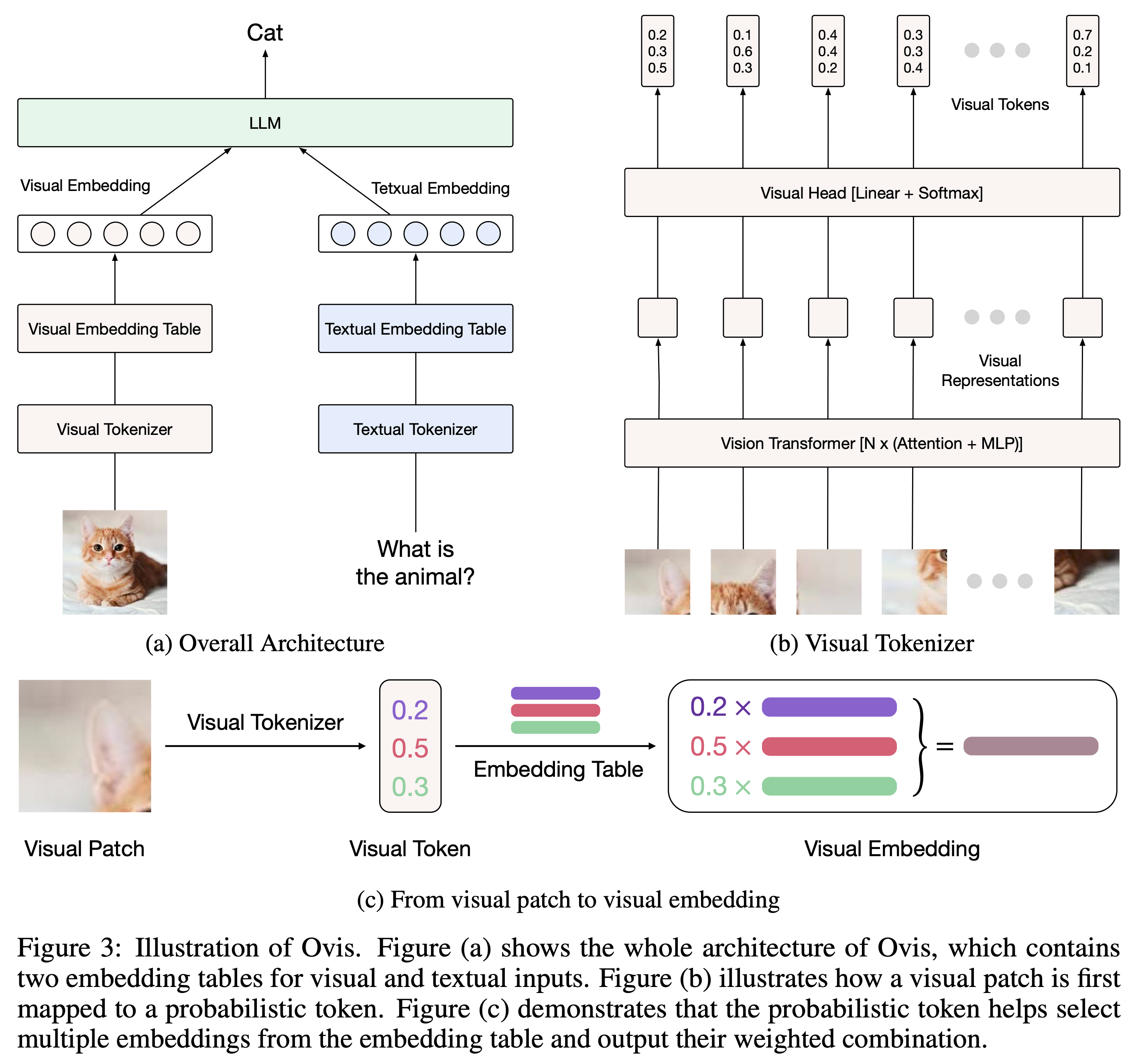

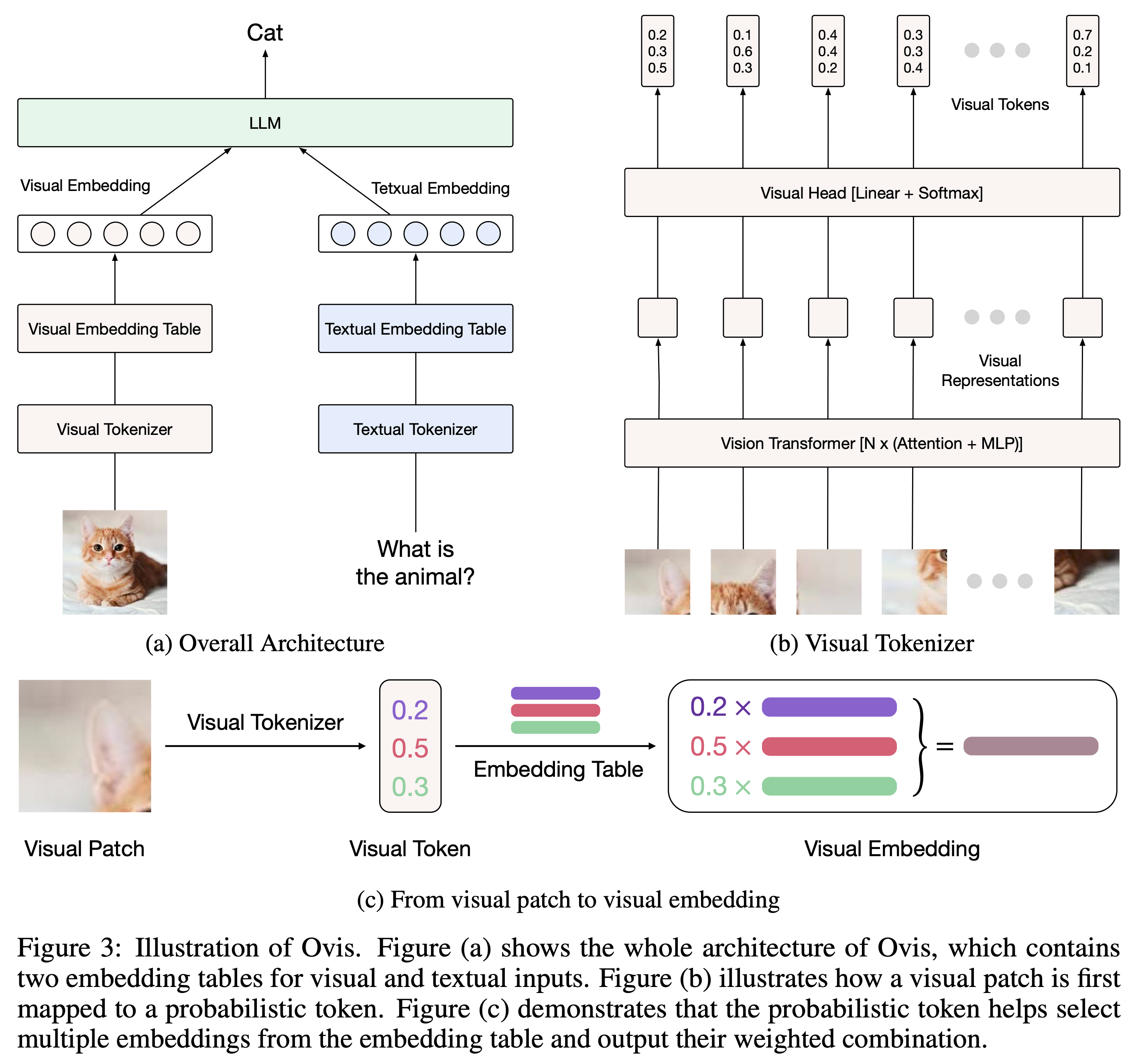

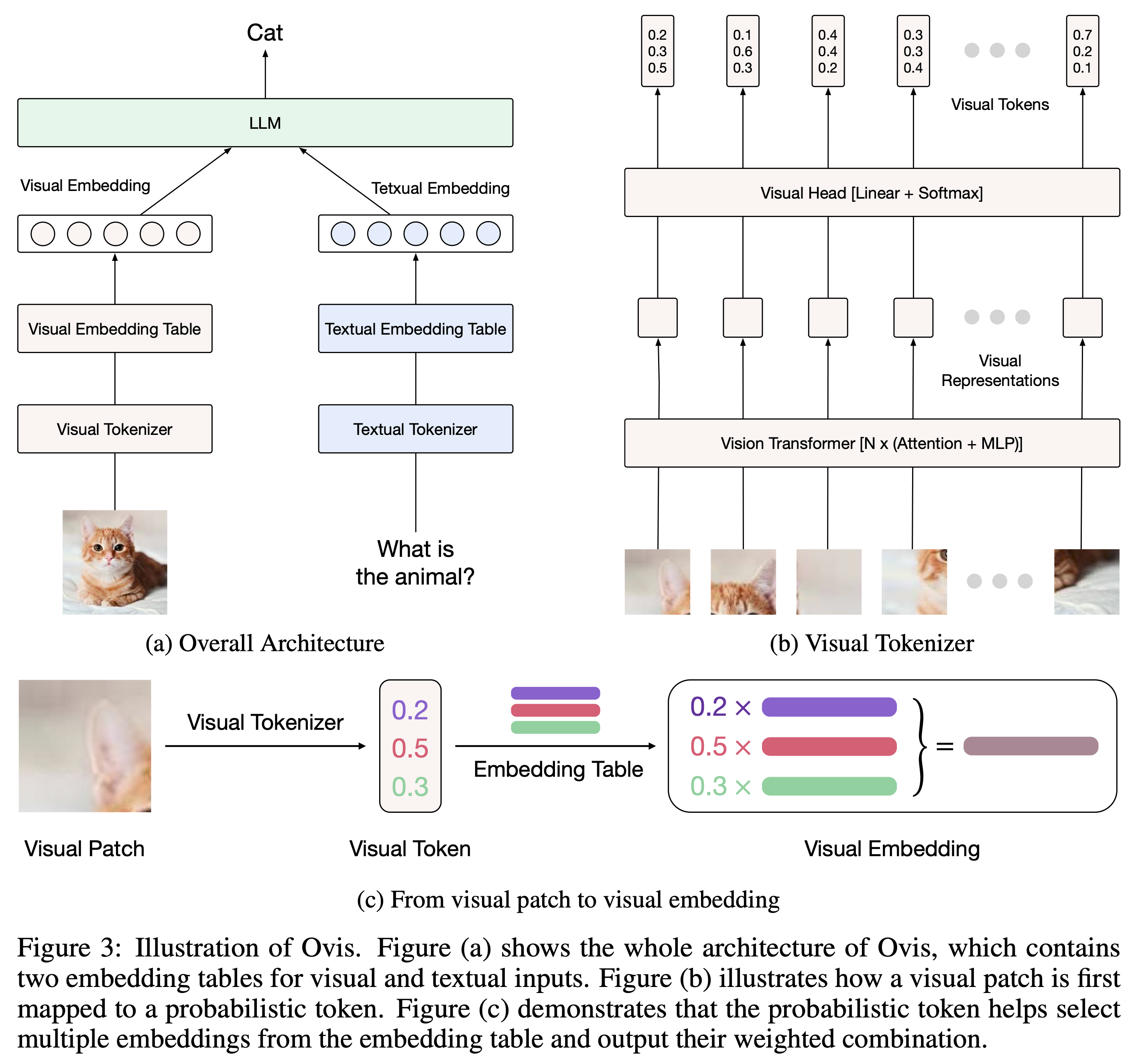

## Introduction

Ovis is a novel Multimodal Large Language Model (MLLM) architecture designed to structurally align visual and textual embeddings. For a comprehensive introduction, please refer to the [Ovis paper](https://arxiv.org/abs/2405.20797) and the [Ovis GitHub](https://github.com/AIDC-AI/Ovis) repository.

## Model

As always, Ovis1.5 remains fully open-source: we release the training datasets, the training and inference code, and the model weights for **reproducible transparency** and community collaboration.

| Ovis MLLMs | ViT | LLM | Training Datasets | Code | Model Weights |
|:-------------------------|:-----------:|:------------------:|:-------------------------------------------------------------------:|:-------------------------------------------:|:----------------------------------------------------------------:|
| Ovis1.5-Llama3-8B | Siglip-400M | Llama3-8B-Instruct | [Hugging Face](https://huggingface.co/datasets/AIDC-AI/Ovis-dataset) | [GitHub](https://github.com/AIDC-AI/Ovis) | [Hugging Face](https://huggingface.co/AIDC-AI/Ovis1.5-Llama3-8B) |
| Ovis1.5-Gemma2-9B | Siglip-400M | Gemma2-9B-It | [Hugging Face](https://huggingface.co/datasets/AIDC-AI/Ovis-dataset) | [GitHub](https://github.com/AIDC-AI/Ovis) | [Hugging Face](https://huggingface.co/AIDC-AI/Ovis1.5-Gemma2-9B) |

## Performance

We evaluate Ovis1.5 on a range of multimodal benchmarks using [VLMEvalKit](https://github.com/open-compass/VLMEvalKit) and compare it with leading MLLMs of similar parameter scale. The best score in each row is shown in bold.

| | MiniCPM-Llama3-V2.5 | GLM-4V-9B | Ovis1.5-Llama3-8B | Ovis1.5-Gemma2-9B |
|:------------------|--------------------:|----------:|------------------:|------------------:|
| Open Weights | ✅ | ✅ | ✅ | ✅ |
| Open Datasets | ❌ | ❌ | ✅ | ✅ |
| MMTBench-VAL | 57.6 | 48.8 | 60.7 | **62.7** |
| MMBench-EN-V1.1 | 74 | 68.7 | **78.2** | 78.0 |
| MMBench-CN-V1.1 | 70.1 | 67.1 | **75.2** | 75.1 |
| MMStar | 51.8 | 54.8 | 57.2 | **58.7** |
| MMMU-Val | 45.8 | 46.9 | 48.6 | **49.8** |
| MathVista-Mini | 54.3 | 51.1 | 62.4 | **65.7** |
| HallusionBenchAvg | 42.4 | 45 | 44.5 | **48.0** |
| AI2D | 78.4 | 71.2 | 82.5 | **84.7** |
| OCRBench | 725 | **776** | 743 | 756 |
| MMVet | 52.8 | **58** | 52.2 | 56.5 |
| RealWorldQA | 63.5 | 66 | 64.6 | **66.9** |
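To make the comparison easier to summarize, the sketch below tallies how many of the eleven benchmark rows each model leads. The scores are transcribed from the table; the names `MODELS`, `SCORES`, and `count_wins` are illustrative and not part of the Ovis codebase, and the tally assumes a higher score is better on every row, which matches the bolding in the table.

```python
# Column order matches the table: one score per model in each row.
MODELS = ["MiniCPM-Llama3-V2.5", "GLM-4V-9B", "Ovis1.5-Llama3-8B", "Ovis1.5-Gemma2-9B"]

# Benchmark scores transcribed from the table (hypothetical container, not an Ovis API).
SCORES = {
    "MMTBench-VAL": [57.6, 48.8, 60.7, 62.7],
    "MMBench-EN-V1.1": [74, 68.7, 78.2, 78.0],
    "MMBench-CN-V1.1": [70.1, 67.1, 75.2, 75.1],
    "MMStar": [51.8, 54.8, 57.2, 58.7],
    "MMMU-Val": [45.8, 46.9, 48.6, 49.8],
    "MathVista-Mini": [54.3, 51.1, 62.4, 65.7],
    "HallusionBenchAvg": [42.4, 45, 44.5, 48.0],
    "AI2D": [78.4, 71.2, 82.5, 84.7],
    "OCRBench": [725, 776, 743, 756],
    "MMVet": [52.8, 58, 52.2, 56.5],
    "RealWorldQA": [63.5, 66, 64.6, 66.9],
}


def count_wins(models, scores):
    """Count, per model, the benchmarks on which it has the highest score."""
    wins = {model: 0 for model in models}
    for row in scores.values():
        best = max(row)
        for model, value in zip(models, row):
            if value == best:  # ties, if any, would credit every tied model
                wins[model] += 1
    return wins


if __name__ == "__main__":
    for model, n in count_wins(MODELS, SCORES).items():
        print(f"{model}: best on {n} of {len(SCORES)} benchmarks")
```

A win count ignores margins, so it complements the table rather than replacing it: several rows are decided by a few tenths of a point.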

## Usage

Below is a code snippet for running Ovis with multimodal inputs. For additional usage instructions, including the inference wrapper and the Gradio UI, please refer to [Ovis GitHub](https://github.com/AIDC-AI/Ovis?tab=readme-ov-file#inference).

```bash
pip install torch==2.1.2 transformers==4.43.2 pillow==10.3.0
```
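Because the install command pins exact versions, it can help to confirm what is actually installed before loading the model. The following is a minimal sketch using only the standard library; `PINNED`, `installed_version`, and `find_mismatches` are hypothetical helper names, not part of Ovis or `transformers`, and the check assumes that local build tags such as `+cu121` can be ignored when comparing versions.

```python
from importlib import metadata

# Pins copied from the install command above.
PINNED = {"torch": "2.1.2", "transformers": "4.43.2", "pillow": "10.3.0"}


def installed_version(package):
    """Return the installed version string, or None if the package is absent."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


def find_mismatches(pinned, lookup=installed_version):
    """Map each package that deviates from its pin to (expected, found)."""
    mismatches = {}
    for package, expected in pinned.items():
        found = lookup(package)
        # Compare only the public version, dropping local tags such as "+cu121".
        if found is None or found.split("+")[0] != expected:
            mismatches[package] = (expected, found)
    return mismatches


if __name__ == "__main__":
    for package, (expected, found) in find_mismatches(PINNED).items():
        print(f"{package}: expected {expected}, found {found or 'not installed'}")
```

The `lookup` parameter exists only so the comparison can be exercised without the packages installed; in normal use the default is sufficient. A mismatch is a hint to investigate, not proof that the model will fail to load.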

```python
import torch
from PIL import Image
from transformers import AutoModelForCausalLM

# load model (trust_remote_code=True runs the modeling code shipped with the checkpoint)
model = AutoModelForCausalLM.from_pretrained("AIDC-AI/Ovis1.5-Gemma2-9B",
                                             torch_dtype=torch.bfloat16,
                                             multimodal_max_length=8192,
                                             trust_remote_code=True).cuda()
text_tokenizer = model.get_text_tokenizer()
visual_tokenizer = model.get_visual_tokenizer()
conversation_formatter = model.get_conversation_formatter()

# enter image path and prompt
image_path = input("Enter image path: ")
image = Image.open(image_path)
text = input("Enter prompt: ")
# the <image> placeholder marks where the image is inserted into the query
query = f'<image>\n{text}'
prompt, input_ids = conversation_formatter.format_query(query)
# add a batch dimension and mask out padding tokens
input_ids = torch.unsqueeze(input_ids, dim=0).to(device=model.device)
attention_mask = torch.ne(input_ids, text_tokenizer.pad_token_id).to(device=model.device)
pixel_values = [visual_tokenizer.preprocess_image(image).to(
    dtype=visual_tokenizer.dtype, device=visual_tokenizer.device)]

# generate output (greedy decoding: sampling parameters are left unset)
with torch.inference_mode():
    gen_kwargs = dict(
        max_new_tokens=1024,
        do_sample=False,
        top_p=None,
        top_k=None,
        temperature=None,
        repetition_penalty=None,
        eos_token_id=model.generation_config.eos_token_id,
        pad_token_id=text_tokenizer.pad_token_id,
        use_cache=True
    )
    output_ids = model.generate(input_ids, pixel_values=pixel_values, attention_mask=attention_mask, **gen_kwargs)[0]
    output = text_tokenizer.decode(output_ids, skip_special_tokens=True)
    print(f'Output: {output}')
```

## Citation

If you find Ovis useful, please cite the paper:

```
@article{lu2024ovis,
  title={Ovis: Structural Embedding Alignment for Multimodal Large Language Model},
  author={Shiyin Lu and Yang Li and Qing-Guo Chen and Zhao Xu and Weihua Luo and Kaifu Zhang and Han-Jia Ye},
  year={2024},
  journal={arXiv:2405.20797}
}
```

## License

The project is licensed under the Apache 2.0 License and is restricted to uses that comply with the license agreements of Qwen, Llama3, Clip, and Siglip.