We present MedMT5, the first open-source text-to-text multilingual model for the medical domain. MedMT5 is an encoder-decoder model developed by continuing the training of publicly available mT5 checkpoints on medical domain data for English, Spanish, French, and Italian.
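A minimal loading sketch. It assumes the checkpoints are published on the Hugging Face Hub under the identifiers listed in this card (`HiTZ/MedMT5-large`, `HiTZ/MedMT5-xl`) and that, like the mT5 checkpoints they start from, they load through the standard `transformers` sequence-to-sequence classes. The helper names `load_medmt5` and `generate_text` are hypothetical and not part of any released code.

```python
# Illustrative sketch: helper names are hypothetical; only the model IDs come from this card.
MODEL_IDS = {
    "large": "HiTZ/MedMT5-large",
    "xl": "HiTZ/MedMT5-xl",
}


def load_medmt5(size: str = "large"):
    """Load the tokenizer and encoder-decoder model for the given size."""
    # Imported lazily so this module can be read or imported without transformers installed.
    from transformers import AutoModelForSeq2SeqLM, AutoTokenizer

    model_id = MODEL_IDS[size]
    tokenizer = AutoTokenizer.from_pretrained(model_id)
    model = AutoModelForSeq2SeqLM.from_pretrained(model_id)
    return tokenizer, model


def generate_text(text: str, tokenizer, model, max_new_tokens: int = 32) -> str:
    """Encode the input text, run generation, and decode the first output sequence."""
    inputs = tokenizer(text, return_tensors="pt")
    output_ids = model.generate(**inputs, max_new_tokens=max_new_tokens)
    return tokenizer.decode(output_ids[0], skip_special_tokens=True)
```

Calling `tokenizer, model = load_medmt5("large")` downloads the weights on first use. Since this card describes continued pre-training rather than task-specific fine-tuning, the raw generations are probably best treated as a starting point for fine-tuning on a downstream task.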

- 📖 Paper: **Coming soon**

| | MedMT5-Large (HiTZ/MedMT5-large) | MedMT5-XL (HiTZ/MedMT5-xl) |
|---|---|---|
| Param. no. | 738M | 3B |
| Sequence Length | 1024 | 480 |
| Tokens/step | 65536 | 30720 |
| Epochs | 1 | 1 |
| Total Tokens | 4.5B | 4.5B |
| Optimizer | Adafactor | Adafactor |
| LR | 0.001 | 0.001 |
| Scheduler | Constant | Constant |
| Hardware | 4xA100 | 4xA100 |
| Time (h) | 10.5 | 20.5 |
| CO2eq (kg) | 2.9 | 5.6 |
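The training figures above imply a few derived quantities. The sketch below works them out under two assumptions that this card does not state: that tokens per step equals sequence length times the number of sequences per step, and that "4.5B" means exactly 4.5 × 10⁹ tokens (it is a rounded figure, so the step counts are approximate). The `PretrainConfig` class is a hypothetical container for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PretrainConfig:
    """Hypothetical container for the values reported in the table above."""
    name: str
    seq_len: int
    tokens_per_step: int
    total_tokens: int
    hours: float
    co2_kg: float

    @property
    def sequences_per_step(self) -> int:
        # Assumes every sequence in a step is filled to seq_len tokens.
        return self.tokens_per_step // self.seq_len

    @property
    def optimizer_steps(self) -> int:
        # Approximate: total_tokens is a rounded figure in the card.
        return round(self.total_tokens / self.tokens_per_step)

    @property
    def co2_kg_per_hour(self) -> float:
        return self.co2_kg / self.hours


LARGE = PretrainConfig("MedMT5-Large", 1024, 65536, 4_500_000_000, 10.5, 2.9)
XL = PretrainConfig("MedMT5-XL", 480, 30720, 4_500_000_000, 20.5, 5.6)

for cfg in (LARGE, XL):
    print(
        f"{cfg.name}: {cfg.sequences_per_step} sequences/step, "
        f"~{cfg.optimizer_steps} steps, "
        f"{cfg.co2_kg_per_hour:.2f} kg CO2eq/h"
    )
```

Under these assumptions both models use 64 sequences per step; the XL model takes roughly twice as many steps because each step covers fewer tokens.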

### MedMT5 for Sequence Labelling

To use MedMT5 for sequence labelling, we recommend this code: https://github.com/ikergarcia1996/Sequence-Labeling-LLMs

## Training Data

| Language | Source | Words |
|---|---|---|
| English | ClinicalTrials | 127.4M |
| | EMEA | 12M |
| | PubMed | 968.4M |
| Spanish | EMEA | 13.6M |
| | PubMed | 8.4M |
| | Medical Crawler | 918M |
| | SPACC | 350K |
| | UFAL | 10.5M |
| | WikiMed | 5.2M |
| French | PubMed | 1.4M |
| | Science Direct | 15.2M |
| | Wikipedia - Médecine | 5M |
| | EDP | 48K |
| | Google Patents | 654M |
| Italian | Medical Commoncrawl - IT | 67M |
| | Drug instructions | 30.5M |
| | Wikipedia - Medicina | 13.3M |
| | E3C Corpus - IT | 11.6M |
| | Medicine descriptions | 6.3M |
| | Medical theses | 5.8M |
| | Medical websites | 4M |
| | PubMed | 2.3M |
| | Supplement description | 1.3M |
| | Medical notes | 975K |
| | Pathologies | 157K |
| | Medical test simulations | 26K |
| | Clinical cases | 20K |
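To compare corpus sizes across languages, the per-source word counts above can be summed. The sketch below transcribes the table into a dictionary (values in millions of words, with "K" entries converted) and totals each language. The variable and function names are illustrative, and the totals inherit the rounding of the per-source figures.

```python
# Word counts in millions, transcribed from the training data table above.
WORDS_M = {
    "English": {"ClinicalTrials": 127.4, "EMEA": 12.0, "PubMed": 968.4},
    "Spanish": {
        "EMEA": 13.6, "PubMed": 8.4, "Medical Crawler": 918.0,
        "SPACC": 0.350, "UFAL": 10.5, "WikiMed": 5.2,
    },
    "French": {
        "PubMed": 1.4, "Science Direct": 15.2, "Wikipedia - Médecine": 5.0,
        "EDP": 0.048, "Google Patents": 654.0,
    },
    "Italian": {
        "Medical Commoncrawl - IT": 67.0, "Drug instructions": 30.5,
        "Wikipedia - Medicina": 13.3, "E3C Corpus - IT": 11.6,
        "Medicine descriptions": 6.3, "Medical theses": 5.8,
        "Medical websites": 4.0, "PubMed": 2.3,
        "Supplement description": 1.3, "Medical notes": 0.975,
        "Pathologies": 0.157, "Medical test simulations": 0.026,
        "Clinical cases": 0.020,
    },
}


def words_per_language(corpus: dict) -> dict:
    """Sum the per-source word counts (in millions) for each language."""
    return {lang: sum(sources.values()) for lang, sources in corpus.items()}


TOTALS_M = words_per_language(WORDS_M)
for lang, total in TOTALS_M.items():
    print(f"{lang}: {total:.1f}M words")
```

On these figures, English and Spanish each contribute on the order of a billion words, French several hundred million, and Italian well under two hundred million.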

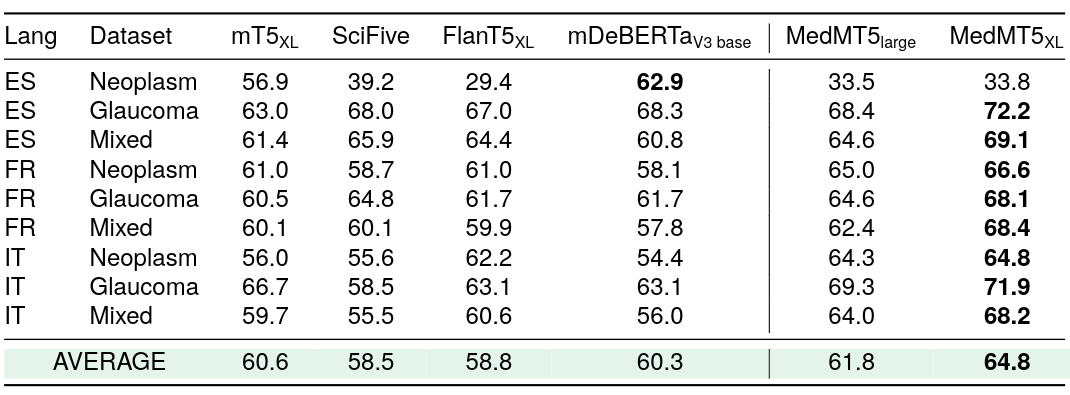

### Multi-task supervised F1 scores for Sequence Labelling

### Zero-shot F1 scores for Argument Mining

Models have been trained in English and evaluated in Spanish, French, and Italian.

## Ethical Statement

Developing MedMT5, a multilingual text-to-text model for the medical domain, has ethical implications that we acknowledge. The broader impact of this work lies in its potential to improve medical communication and understanding across languages, which can enhance healthcare access and quality for diverse linguistic communities. However, it also raises ethical considerations related to privacy and data security. To create our multilingual corpus, we took measures to anonymize and protect sensitive patient information, either adhering to the data protection regulations of each language's jurisdiction or deriving our data from sources that explicitly address this issue in line with privacy and safety regulations and guidelines. Furthermore, we are committed to transparency and fairness in our model's development and evaluation. We have worked to ensure that our benchmarks are representative and unbiased, and we will continue to monitor and address any potential biases in the future. Finally, we emphasize our commitment to open source by making our data, code, and models publicly available, with the aim of promoting collaboration within the research community.