---

metrics:

- accuracy

model-index:

- name: dear-jarvis-monolith-xed-en

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# dear-jarvis-monolith-xed-en

This model was trained from scratch on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 1.7655

- Accuracy: 0.4678

## Model description

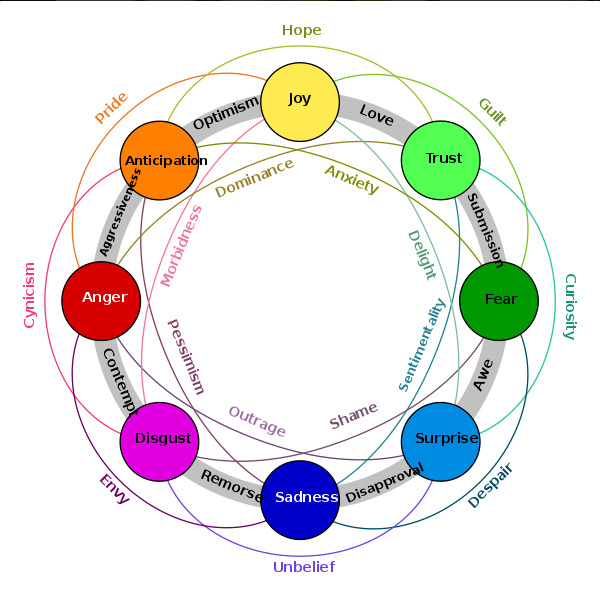

- LABEL_0 = neutral

- LABEL_1 = anger

- LABEL_2 = anticipation

- LABEL_3 = disgust

- LABEL_4 = fear

- LABEL_5 = joy

- LABEL_6 = sadness

- LABEL_7 = surprise

- LABEL_8 = trust

Labels are based on Plutchik's model of emotions and may be combined.
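The label list above can be turned into a small lookup table so that raw predictions are easier to read. The following is a minimal sketch, assuming predictions arrive as strings of the form `LABEL_n`, as in the list; the names `ID2LABEL` and `readable_label` are hypothetical helpers and not part of the model or of any library.

```python
# Hypothetical lookup table built from the label list in this model card.
ID2LABEL = {
    0: "neutral",
    1: "anger",
    2: "anticipation",
    3: "disgust",
    4: "fear",
    5: "joy",
    6: "sadness",
    7: "surprise",
    8: "trust",
}


def readable_label(raw_label: str) -> str:
    """Translate a raw name such as 'LABEL_5' into its emotion name.

    Assumes the raw name ends with an underscore followed by the class index.
    """
    index = int(raw_label.rsplit("_", 1)[1])
    return ID2LABEL[index]


print(readable_label("LABEL_5"))  # joy
```

If the model configuration is under your control, storing the same mapping in the configuration would likely remove the need for this translation step.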

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3
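To illustrate what a linear scheduler implies for the learning rate, here is a minimal sketch in plain Python. It assumes zero warmup steps and a decay to zero over 6009 total steps (the final step count reported in the training results); neither assumption is confirmed by this card, and `linear_lr` is a hypothetical helper rather than a library function.

```python
def linear_lr(step: int,
              base_lr: float = 5e-05,
              total_steps: int = 6009,
              warmup_steps: int = 0) -> float:
    """Learning rate at a given optimizer step under linear warmup and decay.

    Assumption: no warmup and decay to zero at total_steps.
    """
    if step < warmup_steps:
        # Linear ramp from 0 up to base_lr during warmup.
        return base_lr * step / max(1, warmup_steps)
    # Linear decay from base_lr down to 0 after warmup.
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


# Learning rate at the end of each epoch (2003 steps per epoch).
for epoch_end in (2003, 4006, 6009):
    print(epoch_end, linear_lr(epoch_end))
```

Under these assumptions the rate falls to two thirds of its initial value after the first epoch and reaches zero at the last step.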

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |
|:-------------:|:-----:|:----:|:---------------:|:--------:|
| 1.5876 | 1.0 | 2003 | 1.5386 | 0.4596 |
| 1.1935 | 2.0 | 4006 | 1.5512 | 0.4803 |
| 0.7676 | 3.0 | 6009 | 1.7655 | 0.4678 |
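The results table can be read programmatically to see which epoch scored best on each metric. The sketch below copies the numbers from the table; the estimate of the training set size additionally assumes a single device and no gradient accumulation, which this card does not state.

```python
# Rows copied from the training results table:
# (epoch, step, training_loss, validation_loss, accuracy)
rows = [
    (1, 2003, 1.5876, 1.5386, 0.4596),
    (2, 4006, 1.1935, 1.5512, 0.4803),
    (3, 6009, 0.7676, 1.7655, 0.4678),
]

# Epoch with the lowest validation loss and epoch with the highest accuracy.
best_by_loss = min(rows, key=lambda r: r[3])
best_by_accuracy = max(rows, key=lambda r: r[4])

# Rough upper bound on the number of training examples:
# steps per epoch times train_batch_size (8), under the assumptions above.
steps_per_epoch = rows[0][1]
approx_train_examples = steps_per_epoch * 8

print("lowest validation loss at epoch", best_by_loss[0])
print("highest accuracy at epoch", best_by_accuracy[0])
print("approximate training examples:", approx_train_examples)
```

Training loss keeps falling while validation loss rises after the first epoch, which suggests overfitting; the reported final metrics come from the third epoch, not from the best-scoring one.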

### Framework versions

- Transformers 4.6.1

- PyTorch 1.8.1+cu101

- Datasets 1.8.0

- Tokenizers 0.10.3
