metadata

license: llama3.2

language:

- en

datasets:

- PJMixers-Dev/HailMary-v0.1-KTO

base_model:

- PJMixers-Dev/LLaMa-3.2-Instruct-JankMix-v0.2-SFT-3B

model-index:

- name: PJMixers-Dev/LLaMa-3.2-Instruct-JankMix-v0.2-SFT-HailMary-v0.1-KTO-3B

results:

- task:

type: text-generation

name: Text Generation

dataset:

name: IFEval (0-Shot)

type: HuggingFaceH4/ifeval

args:

num_few_shot: 0

metrics:

- type: inst_level_strict_acc and prompt_level_strict_acc

value: 65.04

name: strict accuracy

source:

url: >-

https://huggingface.co/datasets/open-llm-leaderboard/PJMixers-Dev__LLaMa-3.2-Instruct-JankMix-v0.2-SFT-HailMary-v0.1-KTO-3B-details

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: BBH (3-Shot)

type: BBH

args:

num_few_shot: 3

metrics:

- type: acc_norm

value: 22.29

name: normalized accuracy

source:

url: >-

https://huggingface.co/datasets/open-llm-leaderboard/PJMixers-Dev__LLaMa-3.2-Instruct-JankMix-v0.2-SFT-HailMary-v0.1-KTO-3B-details

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: MATH Lvl 5 (4-Shot)

type: hendrycks/competition_math

args:

num_few_shot: 4

metrics:

- type: exact_match

value: 11.78

name: exact match

source:

url: >-

https://huggingface.co/datasets/open-llm-leaderboard/PJMixers-Dev__LLaMa-3.2-Instruct-JankMix-v0.2-SFT-HailMary-v0.1-KTO-3B-details

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: GPQA (0-shot)

type: Idavidrein/gpqa

args:

num_few_shot: 0

metrics:

- type: acc_norm

value: 2.91

name: acc_norm

source:

url: >-

https://huggingface.co/datasets/open-llm-leaderboard/PJMixers-Dev__LLaMa-3.2-Instruct-JankMix-v0.2-SFT-HailMary-v0.1-KTO-3B-details

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: MuSR (0-shot)

type: TAUR-Lab/MuSR

args:

num_few_shot: 0

metrics:

- type: acc_norm

value: 4.69

name: acc_norm

source:

url: >-

https://huggingface.co/datasets/open-llm-leaderboard/PJMixers-Dev__LLaMa-3.2-Instruct-JankMix-v0.2-SFT-HailMary-v0.1-KTO-3B-details

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: MMLU-PRO (5-shot)

type: TIGER-Lab/MMLU-Pro

config: main

split: test

args:

num_few_shot: 5

metrics:

- type: acc

value: 23.42

name: accuracy

source:

url: >-

https://huggingface.co/datasets/open-llm-leaderboard/PJMixers-Dev__LLaMa-3.2-Instruct-JankMix-v0.2-SFT-HailMary-v0.1-KTO-3B-details

name: Open LLM Leaderboard

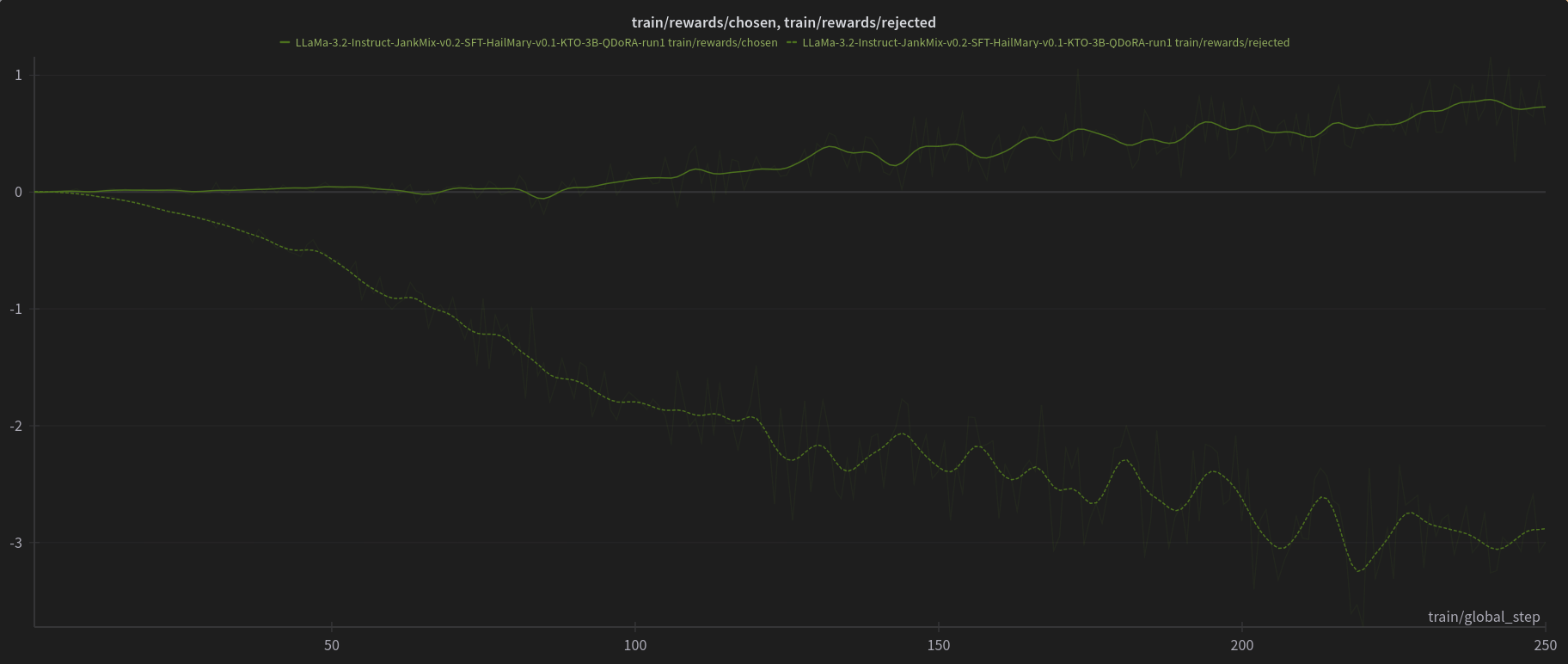

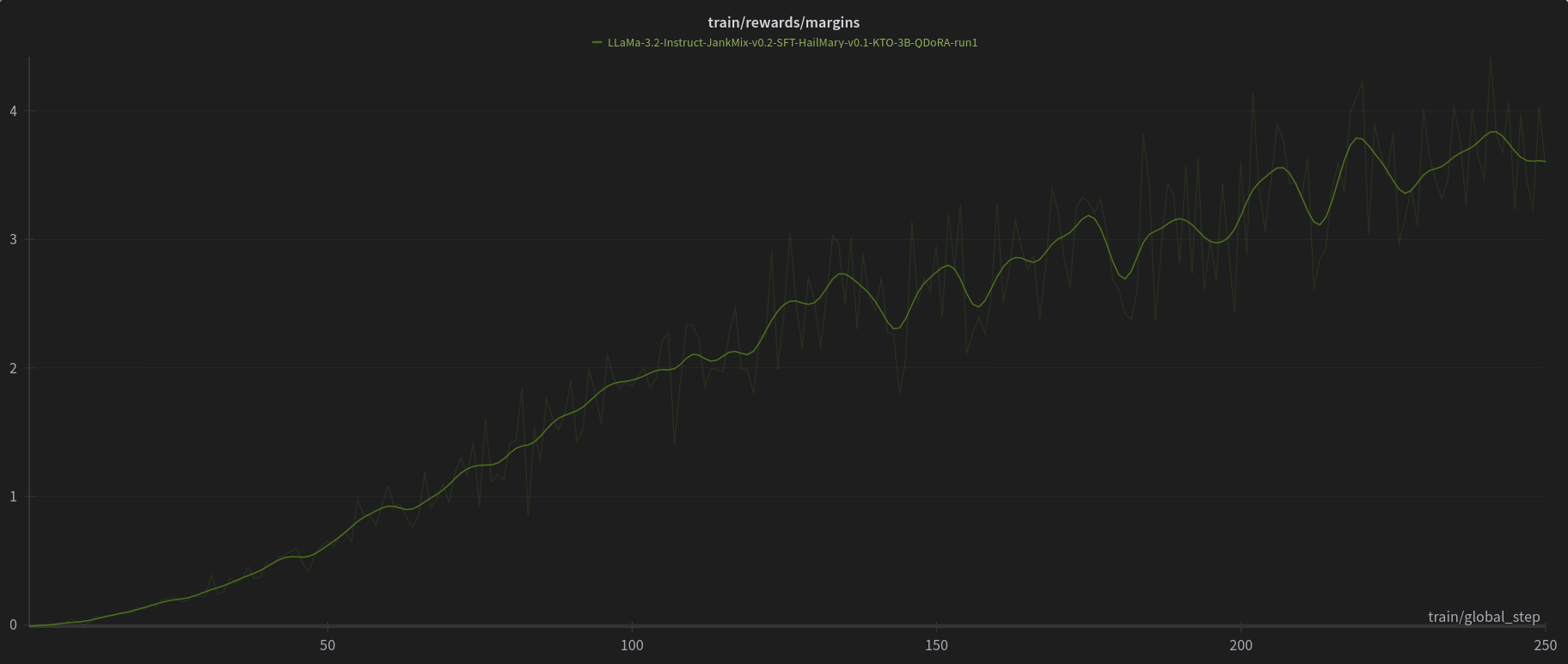

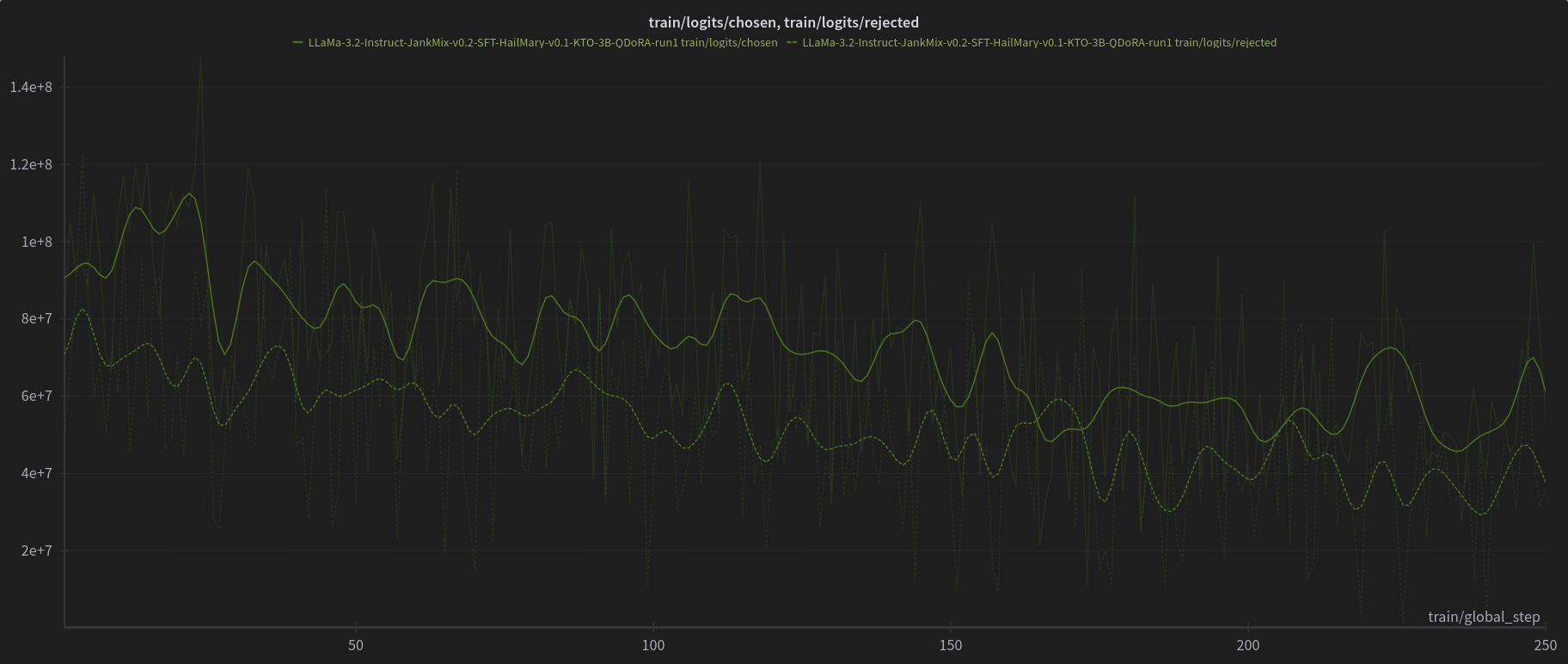

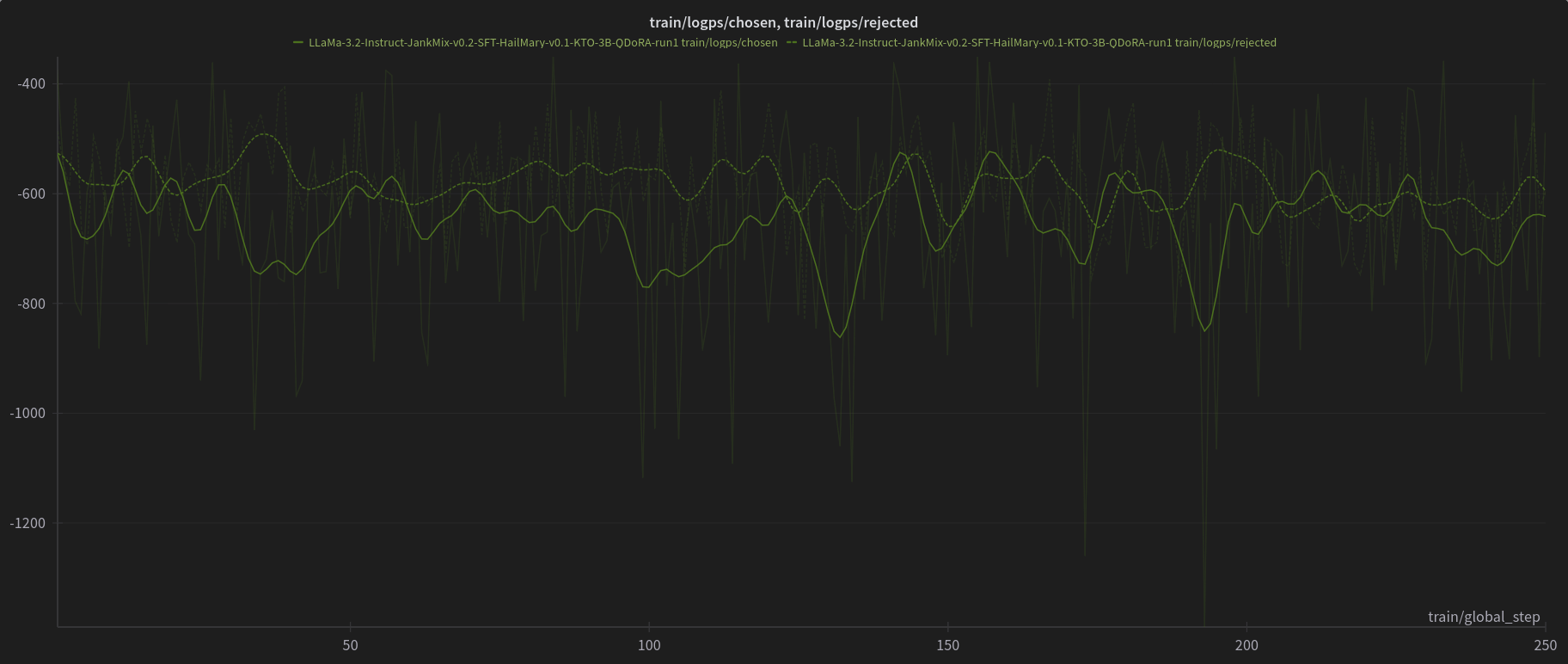

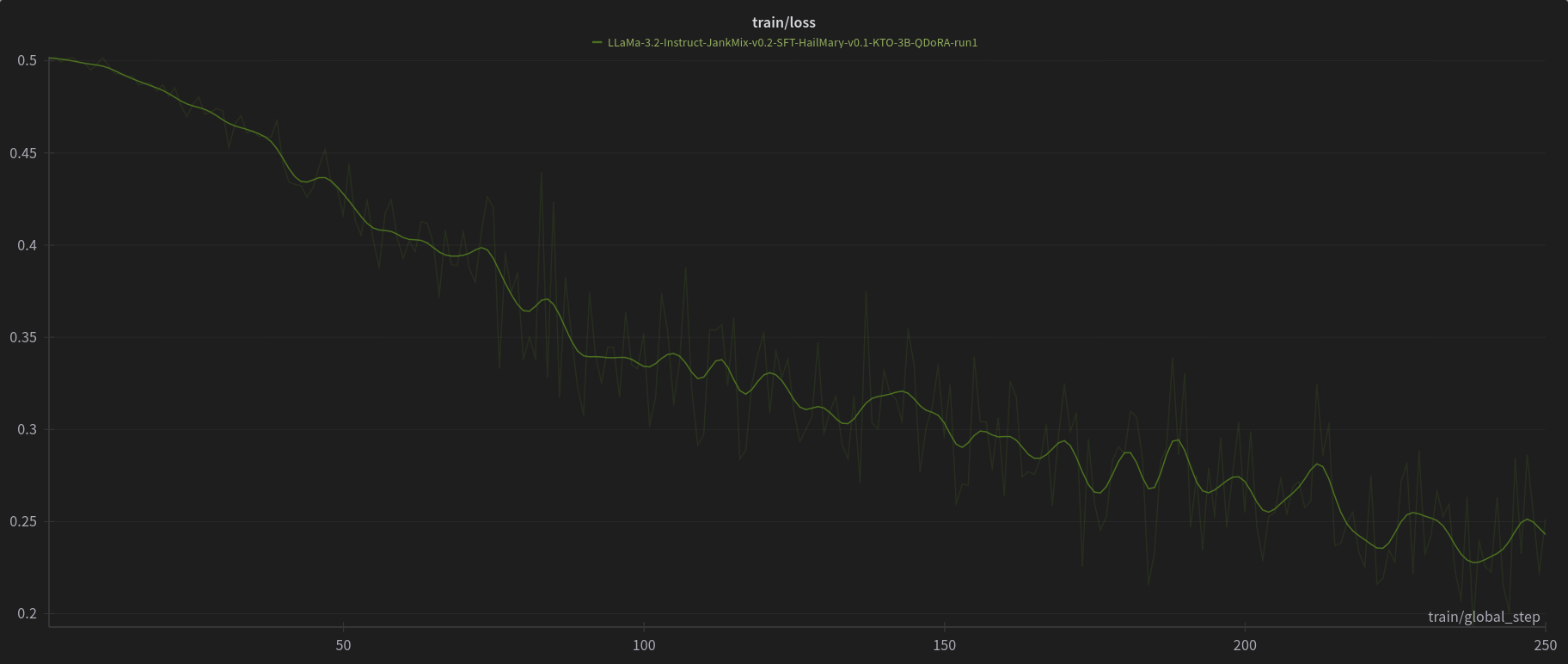

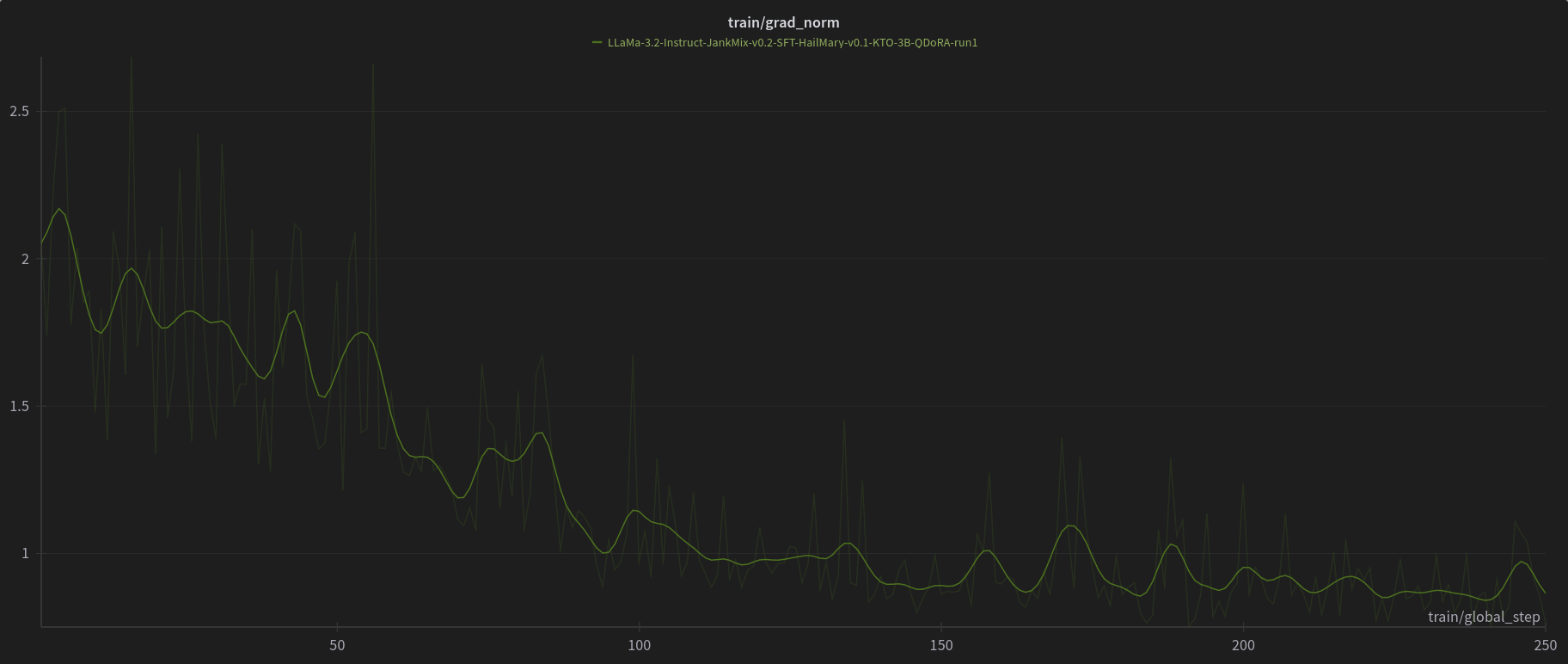

PJMixers-Dev/LLaMa-3.2-Instruct-JankMix-v0.2-SFT-3B was further trained using KTO (with apo_zero_unpaired loss type) using a mix of instruct, RP, and storygen datasets. I created rejected samples by using the SFT with bad settings (including logit bias) for every model turn.

The model was only trained at max_length=6144, and is nowhere near a full epoch as it eventually crashed. So think of this like a test of a test.

W&B Training Logs

Open LLM Leaderboard Evaluation Results

Detailed results can be found here

| Metric | Value |

|---|---|

| Avg. | 21.69 |

| IFEval (0-Shot) | 65.04 |

| BBH (3-Shot) | 22.29 |

| MATH Lvl 5 (4-Shot) | 11.78 |

| GPQA (0-shot) | 2.91 |

| MuSR (0-shot) | 4.69 |

| MMLU-PRO (5-shot) | 23.42 |