Upload folder using huggingface_hub (#1)

- 8c636dbd8512b756625d3c722904ebc237732ff11dc7c60fd7ebf21a1d091335 (fa3e26c20813e16a087d58f2dafd2bbefdb63f18)

- 2e11cbeb4c9cf97474f762ef7f915dcb05fb0a0471ba43963edf2ddc8383e162 (083ffdf111ff60b2184024fc23c3ed55e36fa482)

- README.md +84 -0

- config.json +51 -0

- generation_config.json +6 -0

- model.safetensors +3 -0

- modeling_gritlm7b.py +1422 -0

- plots.png +0 -0

- smash_config.json +27 -0

README.md

ADDED

@@ -0,0 +1,84 @@

| 1 |

+

---

|

| 2 |

+

thumbnail: "https://assets-global.website-files.com/646b351987a8d8ce158d1940/64ec9e96b4334c0e1ac41504_Logo%20with%20white%20text.svg"

|

| 3 |

+

metrics:

|

| 4 |

+

- memory_disk

|

| 5 |

+

- memory_inference

|

| 6 |

+

- inference_latency

|

| 7 |

+

- inference_throughput

|

| 8 |

+

- inference_CO2_emissions

|

| 9 |

+

- inference_energy_consumption

|

| 10 |

+

tags:

|

| 11 |

+

- pruna-ai

|

| 12 |

+

---

|

| 13 |

+

<!-- header start -->

|

| 14 |

+

<!-- 200823 -->

|

| 15 |

+

<div style="width: auto; margin-left: auto; margin-right: auto">

|

| 16 |

+

<a href="https://www.pruna.ai/" target="_blank" rel="noopener noreferrer">

|

| 17 |

+

<img src="https://i.imgur.com/eDAlcgk.png" alt="PrunaAI" style="width: 100%; min-width: 400px; display: block; margin: auto;">

|

| 18 |

+

</a>

|

| 19 |

+

</div>

|

| 20 |

+

<!-- header end -->

|

| 21 |

+

|

| 22 |

+

[](https://twitter.com/PrunaAI)

|

| 23 |

+

[](https://github.com/PrunaAI)

|

| 24 |

+

[](https://www.linkedin.com/company/93832878/admin/feed/posts/?feedType=following)

|

| 25 |

+

[](https://discord.gg/CP4VSgck)

|

| 26 |

+

|

| 27 |

+

# Simply make AI models cheaper, smaller, faster, and greener!

|

| 28 |

+

|

| 29 |

+

- Give a thumbs up if you like this model!

|

| 30 |

+

- Contact us and tell us which model to compress next [here](https://www.pruna.ai/contact).

|

| 31 |

+

- Request access to easily compress your *own* AI models [here](https://z0halsaff74.typeform.com/pruna-access?typeform-source=www.pruna.ai).

|

| 32 |

+

- Read the documentations to know more [here](https://pruna-ai-pruna.readthedocs-hosted.com/en/latest/)

|

| 33 |

+

- Join Pruna AI community on Discord [here](https://discord.gg/CP4VSgck) to share feedback/suggestions or get help.

|

| 34 |

+

|

| 35 |

+

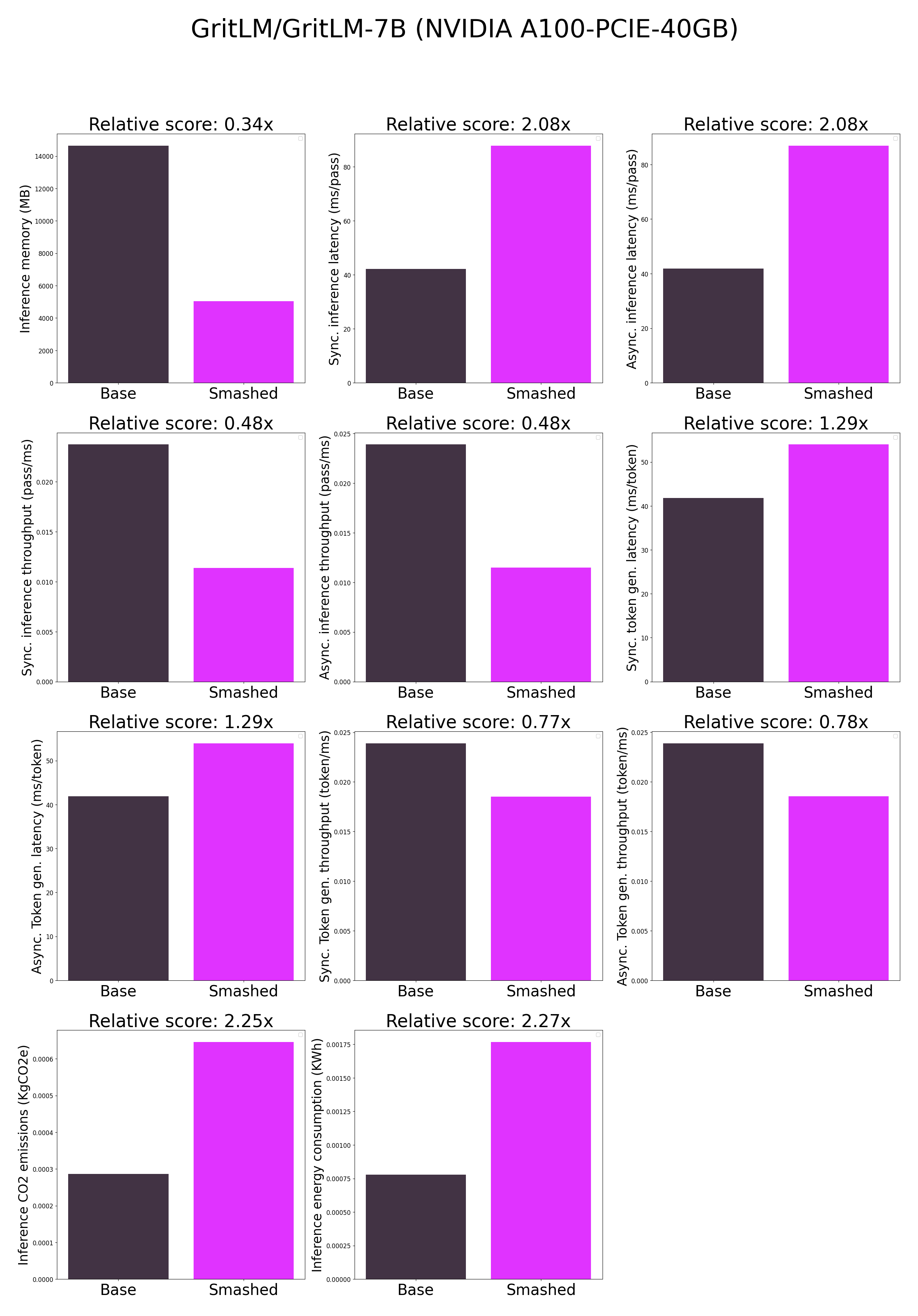

## Results

|

| 36 |

+

|

| 37 |

+

|

| 38 |

+

|

| 39 |

+

**Frequently Asked Questions**
- ***How does the compression work?*** The model is compressed with llm-int8.
- ***How does the model quality change?*** The quality of the model output might vary compared to the base model.
- ***How is the model efficiency evaluated?*** These results were obtained on an NVIDIA A100-PCIE-40GB with the configuration described in `model/smash_config.json`, after a hardware warmup. The smashed model is compared directly to the original base model. Efficiency results may vary in other settings (e.g. other hardware, image size, batch size, ...). We recommend running the benchmarks directly under your use-case conditions to find out whether the smashed model can benefit you.
- ***What is the model format?*** We use safetensors.
- ***What calibration data has been used?*** If needed by the compression method, we used WikiText as the calibration data.
- ***What is the naming convention for Pruna Huggingface models?*** We take the original model name and append "turbo", "tiny", or "green" if the smashed model has a measured inference speed, inference memory, or inference energy consumption which is less than 90% of the original base model.
- ***How to compress my own models?*** You can request premium access to more compression methods and tech support for your specific use-cases [here](https://z0halsaff74.typeform.com/pruna-access?typeform-source=www.pruna.ai).
- ***What are "first" metrics?*** Results mentioning "first" are obtained after the first run of the model. The first run might take more memory or be slower than the subsequent runs due to CUDA overheads.
- ***What are "Sync" and "Async" metrics?*** "Sync" metrics are obtained by syncing all GPU processes and stopping the measurement once all of them have executed. "Async" metrics are obtained without syncing all GPU processes; the measurement stops when the model output can be used by the CPU. We provide both metrics since both could be relevant depending on the use-case. We recommend testing the efficiency gains directly in your use-cases.
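The naming convention in the FAQ can be read as a simple threshold rule. The sketch below is one possible reading, not Pruna's actual code. It assumes that "turbo", "tiny", and "green" pair with latency, inference memory, and energy consumption respectively, that every metric is lower-is-better, and that the helper name `suffixes_for`, the metric keys, and the numbers are all made up for illustration.

```python
# Hypothetical helper: names, metric keys, and numbers are illustrative assumptions.
THRESHOLD = 0.90  # "less than 90% of the original base model"

# Assumed pairing of suffix -> metric (all treated as lower-is-better).
SUFFIX_METRICS = {
    "turbo": "inference_latency",
    "tiny": "memory_inference",
    "green": "inference_energy_consumption",
}


def suffixes_for(base: dict, smashed: dict) -> list:
    """Return the suffixes whose smashed metric is below 90% of the base metric."""
    earned = []
    for suffix, metric in SUFFIX_METRICS.items():
        if smashed[metric] < THRESHOLD * base[metric]:
            earned.append(suffix)
    return earned


# Made-up measurements, not results for this model.
base = {"inference_latency": 100.0, "memory_inference": 16.0, "inference_energy_consumption": 5.0}
smashed = {"inference_latency": 95.0, "memory_inference": 6.0, "inference_energy_consumption": 4.0}
print(suffixes_for(base, smashed))
```

With these toy numbers the latency gain is too small to qualify, while memory and energy both fall under the 90% threshold.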

## Setup

You can run the smashed model with these steps:

0. Check that the requirements from the original repo GritLM/GritLM-7B are installed. In particular, check python, cuda, and transformers versions.
1. Make sure that you have installed quantization related packages.
```bash
pip install transformers accelerate "bitsandbytes>0.37.0"
```
2. Load & run the model.
```python
from transformers import AutoModelForCausalLM, AutoTokenizer

model = AutoModelForCausalLM.from_pretrained("PrunaAI/GritLM-GritLM-7B-bnb-4bit-smashed",
                                             trust_remote_code=True)
tokenizer = AutoTokenizer.from_pretrained("GritLM/GritLM-7B")

input_ids = tokenizer("What is the color of prunes?,", return_tensors='pt').to(model.device)["input_ids"]

outputs = model.generate(input_ids, max_new_tokens=216)
tokenizer.decode(outputs[0])
```
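Step 0 asks for a version check before loading the model. A minimal sketch of such a check, using only the Python standard library, could look like the following. The helper name `installed_versions` is hypothetical, and the package list is an assumption based on the `pip install` line in step 1.

```python
import platform
from importlib import metadata


def installed_versions(packages):
    """Map each package name to its installed version, or None if it is missing."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None
    return versions


print("python", platform.python_version())
for name, version in installed_versions(["transformers", "accelerate", "bitsandbytes", "torch"]).items():
    print(name, version or "NOT INSTALLED")
```

Once PyTorch is installed, the CUDA version it was built against can additionally be read from `torch.version.cuda`.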

## Configurations

The configuration info is in `smash_config.json`.

## Credits & License

The license of the smashed model follows the license of the original model. Please check the license of the original model GritLM/GritLM-7B, which provided the base model, before using this model. The license of the `pruna-engine` is [here](https://pypi.org/project/pruna-engine/) on PyPI.

## Want to compress other models?

- Contact us and tell us which model to compress next [here](https://www.pruna.ai/contact).
- Request access to easily compress your own AI models [here](https://z0halsaff74.typeform.com/pruna-access?typeform-source=www.pruna.ai).

config.json

ADDED

@@ -0,0 +1,51 @@

{
  "_name_or_path": "/tmp/tmp2wct6ej9",
  "architectures": [
    "MistralForCausalLM"
  ],
  "attention_dropout": 0.0,
  "auto_map": {
    "AutoModel": "GritLM/GritLM-7B--modeling_gritlm7b.MistralModel",
    "AutoModelForCausalLM": "modeling_gritlm7b.MistralForCausalLM",
    "AutoModelForSequenceClassification": "GritLM/GritLM-7B--modeling_gritlm7b.MistralForSequenceClassification"
  },
  "bos_token_id": 1,
  "eos_token_id": 2,
  "hidden_act": "silu",
  "hidden_size": 4096,
  "id2label": {
    "0": "LABEL_0"
  },
  "initializer_range": 0.02,
  "intermediate_size": 14336,
  "label2id": {
    "LABEL_0": 0
  },
  "max_position_embeddings": 32768,
  "model_type": "mistral",
  "num_attention_heads": 32,
  "num_hidden_layers": 32,
  "num_key_value_heads": 8,
  "quantization_config": {
    "bnb_4bit_compute_dtype": "bfloat16",
    "bnb_4bit_quant_type": "fp4",
    "bnb_4bit_use_double_quant": true,
    "llm_int8_enable_fp32_cpu_offload": false,
    "llm_int8_has_fp16_weight": false,
    "llm_int8_skip_modules": [
      "lm_head"
    ],
    "llm_int8_threshold": 6.0,
    "load_in_4bit": true,
    "load_in_8bit": false,
    "quant_method": "bitsandbytes"
  },
  "rms_norm_eps": 1e-05,
  "rope_theta": 10000.0,
  "sliding_window": 4096,
  "tie_word_embeddings": false,
  "torch_dtype": "float16",
  "transformers_version": "4.37.1",
  "use_cache": true,
  "vocab_size": 32000
}
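A few quantities that the modeling code relies on follow directly from the `config.json` fields above. The sketch below parses an excerpt of that file with the standard `json` module and derives the per-head dimension, the number of query heads that share one key/value head, and the quantization bit width. The fallback of 16 bits for an unquantized model is an assumption taken from `"torch_dtype": "float16"`.

```python
import json

# Excerpt of the fields shown in config.json (values copied from the diff).
config = json.loads("""
{
  "hidden_size": 4096,
  "num_attention_heads": 32,
  "num_key_value_heads": 8,
  "quantization_config": {
    "bnb_4bit_compute_dtype": "bfloat16",
    "bnb_4bit_quant_type": "fp4",
    "bnb_4bit_use_double_quant": true,
    "load_in_4bit": true,
    "load_in_8bit": false,
    "quant_method": "bitsandbytes"
  }
}
""")

# Dimension of each attention head.
head_dim = config["hidden_size"] // config["num_attention_heads"]
# Grouped-query attention: how many query heads share one key/value head.
kv_groups = config["num_attention_heads"] // config["num_key_value_heads"]

quant = config["quantization_config"]
# Assumption: 16 bits when neither flag is set (float16 weights).
bits = 4 if quant["load_in_4bit"] else 8 if quant["load_in_8bit"] else 16

print(f"head_dim={head_dim}, query heads per KV head={kv_groups}")
print(f"{quant['quant_method']} {bits}-bit ({quant['bnb_4bit_quant_type']}), "
      f"double quant={quant['bnb_4bit_use_double_quant']}, "
      f"compute dtype={quant['bnb_4bit_compute_dtype']}")
```

The ratio of query heads to key/value heads is the `n_rep` factor that `repeat_kv` in `modeling_gritlm7b.py` uses to expand the key and value tensors.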

generation_config.json

ADDED

@@ -0,0 +1,6 @@

{
  "_from_model_config": true,
  "bos_token_id": 1,
  "eos_token_id": 2,
  "transformers_version": "4.37.1"
}

model.safetensors

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:a674cb4bb36dde94f1487bf15187bc75a007263f44090c66d9f409e068db231b
size 4125687624
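The three lines added for `model.safetensors` are a Git LFS pointer rather than the weights themselves: each line is a key followed by a space and a value. A minimal sketch of reading such a pointer and converting the size to GiB is shown below. The helper name `parse_lfs_pointer` is hypothetical.

```python
def parse_lfs_pointer(text: str) -> dict:
    """Split each 'key value' line of a Git LFS pointer into a dict entry."""
    fields = {}
    for line in text.strip().splitlines():
        key, _, value = line.partition(" ")
        fields[key] = value
    return fields


pointer = parse_lfs_pointer("""\
version https://git-lfs.github.com/spec/v1
oid sha256:a674cb4bb36dde94f1487bf15187bc75a007263f44090c66d9f409e068db231b
size 4125687624
""")

# The size field is the byte count of the real file stored in LFS.
size_gib = int(pointer["size"]) / 2**30
hash_algo = pointer["oid"].split(":", 1)[0]
print(hash_algo, f"{size_gib:.2f} GiB")
```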

modeling_gritlm7b.py

ADDED

@@ -0,0 +1,1422 @@

# coding=utf-8
# Copyright 2023 Mistral AI and the HuggingFace Inc. team. All rights reserved.
#
# This code is based on EleutherAI's GPT-NeoX library and the GPT-NeoX
# and OPT implementations in this library. It has been modified from its
# original forms to accommodate minor architectural differences compared
# to GPT-NeoX and OPT used by the Meta AI team that trained the model.
#
# Licensed under the Apache License, Version 2.0 (the "License");
# you may not use this file except in compliance with the License.
# You may obtain a copy of the License at
#
# http://www.apache.org/licenses/LICENSE-2.0
#
# Unless required by applicable law or agreed to in writing, software
# distributed under the License is distributed on an "AS IS" BASIS,
# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
# See the License for the specific language governing permissions and
# limitations under the License.
""" PyTorch Mistral model."""
import inspect
import math
import os
import warnings
from typing import List, Optional, Tuple, Union

import torch
import torch.nn.functional as F
import torch.utils.checkpoint
from torch import nn
from torch.nn import BCEWithLogitsLoss, CrossEntropyLoss, MSELoss

from transformers.activations import ACT2FN
from transformers.cache_utils import Cache, DynamicCache
from transformers.modeling_attn_mask_utils import _prepare_4d_causal_attention_mask, _prepare_4d_causal_attention_mask_for_sdpa, _prepare_4d_attention_mask, _prepare_4d_attention_mask_for_sdpa
from transformers.modeling_outputs import BaseModelOutputWithPast, CausalLMOutputWithPast, SequenceClassifierOutputWithPast
from transformers.modeling_utils import PreTrainedModel
from transformers.utils import (
    add_start_docstrings,
    add_start_docstrings_to_model_forward,
    is_flash_attn_2_available,
    is_flash_attn_greater_or_equal_2_10,
    logging,
    replace_return_docstrings,
)
from transformers import MistralConfig


# transformers has a bug where it will try to import everything from a custom model file unless there's try/except
try:
    if is_flash_attn_2_available():
        from flash_attn import flash_attn_func, flash_attn_varlen_func
        from flash_attn.bert_padding import index_first_axis, pad_input, unpad_input  # noqa

        _flash_supports_window_size = "window_size" in list(inspect.signature(flash_attn_func).parameters)
except:
    pass

logger = logging.get_logger(__name__)

_CONFIG_FOR_DOC = "MistralConfig"


# Copied from transformers.models.llama.modeling_llama._get_unpad_data
def _get_unpad_data(attention_mask):
    seqlens_in_batch = attention_mask.sum(dim=-1, dtype=torch.int32)
    indices = torch.nonzero(attention_mask.flatten(), as_tuple=False).flatten()
    max_seqlen_in_batch = seqlens_in_batch.max().item()
    cu_seqlens = F.pad(torch.cumsum(seqlens_in_batch, dim=0, dtype=torch.torch.int32), (1, 0))
    return (
        indices,
        cu_seqlens,
        max_seqlen_in_batch,
    )
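To make the three return values of `_get_unpad_data` concrete, here is a list-based analogue in plain Python. It is an illustrative sketch rather than the code the model runs: the function name `get_unpad_data` and the toy mask are assumptions, and nested lists stand in for tensors.

```python
from itertools import accumulate


def get_unpad_data(attention_mask):
    """List-based analogue of _get_unpad_data for a 0/1 mask of shape [batch, seq_len]."""
    # Number of real (non-padding) tokens in each sequence.
    seqlens = [sum(row) for row in attention_mask]
    # Row-major flatten, then keep the positions of the real tokens.
    flat = [v for row in attention_mask for v in row]
    indices = [i for i, v in enumerate(flat) if v != 0]
    # Cumulative sequence lengths, left-padded with a single 0.
    cu_seqlens = [0] + list(accumulate(seqlens))
    return indices, cu_seqlens, max(seqlens)


# Toy batch: two sequences padded to length 4.
mask = [[1, 1, 1, 0],
        [1, 1, 0, 0]]
indices, cu_seqlens, max_len = get_unpad_data(mask)
print(indices, cu_seqlens, max_len)
```

Consecutive entries of `cu_seqlens` mark where each sequence starts and ends once the padding has been removed and the batch is packed into one flat sequence.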


# Copied from transformers.models.llama.modeling_llama.LlamaRMSNorm with Llama->Mistral
class MistralRMSNorm(nn.Module):
    def __init__(self, hidden_size, eps=1e-6):
        """
        MistralRMSNorm is equivalent to T5LayerNorm
        """
        super().__init__()
        self.weight = nn.Parameter(torch.ones(hidden_size))
        self.variance_epsilon = eps

    def forward(self, hidden_states):
        input_dtype = hidden_states.dtype
        hidden_states = hidden_states.to(torch.float32)
        variance = hidden_states.pow(2).mean(-1, keepdim=True)
        hidden_states = hidden_states * torch.rsqrt(variance + self.variance_epsilon)
        return self.weight * hidden_states.to(input_dtype)
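The arithmetic in `MistralRMSNorm.forward` can be checked by hand on a short vector. The sketch below redoes it with plain Python floats: the function name `rms_norm` is an assumption, and the float32 cast and dtype round trip of the real module are deliberately left out.

```python
import math


def rms_norm(x, weight, eps=1e-6):
    """Scale x by the reciprocal root-mean-square of its entries, then by weight."""
    # Mean of squares over the last dimension (no mean subtraction, unlike LayerNorm).
    variance = sum(v * v for v in x) / len(x)
    inv_rms = 1.0 / math.sqrt(variance + eps)
    return [w * v * inv_rms for w, v in zip(weight, x)]


out = rms_norm([3.0, 4.0], weight=[1.0, 1.0])
print(out)
```

With unit weights, the mean of the squared outputs is approximately 1, which is the defining property of RMS normalization.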


# Copied from transformers.models.llama.modeling_llama.LlamaRotaryEmbedding with Llama->Mistral
class MistralRotaryEmbedding(nn.Module):
    def __init__(self, dim, max_position_embeddings=2048, base=10000, device=None):
        super().__init__()

        self.dim = dim
        self.max_position_embeddings = max_position_embeddings
        self.base = base
        inv_freq = 1.0 / (self.base ** (torch.arange(0, self.dim, 2).float().to(device) / self.dim))
        self.register_buffer("inv_freq", inv_freq, persistent=False)

        # Build here to make `torch.jit.trace` work.
        self._set_cos_sin_cache(
            seq_len=max_position_embeddings, device=self.inv_freq.device, dtype=torch.get_default_dtype()
        )

    def _set_cos_sin_cache(self, seq_len, device, dtype):
        self.max_seq_len_cached = seq_len
        t = torch.arange(self.max_seq_len_cached, device=device, dtype=self.inv_freq.dtype)

        freqs = torch.outer(t, self.inv_freq)
        # Different from paper, but it uses a different permutation in order to obtain the same calculation
        emb = torch.cat((freqs, freqs), dim=-1)
        self.register_buffer("cos_cached", emb.cos().to(dtype), persistent=False)
        self.register_buffer("sin_cached", emb.sin().to(dtype), persistent=False)

    def forward(self, x, seq_len=None):
        # x: [bs, num_attention_heads, seq_len, head_size]
        if seq_len > self.max_seq_len_cached:
            self._set_cos_sin_cache(seq_len=seq_len, device=x.device, dtype=x.dtype)

        return (
            self.cos_cached[:seq_len].to(dtype=x.dtype),
            self.sin_cached[:seq_len].to(dtype=x.dtype),
        )
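The cache built by `_set_cos_sin_cache` is small enough to reproduce by hand for a toy head size. The following plain-Python sketch mirrors the same steps (inverse frequencies, outer product with positions, concatenation of the two halves). The function name `cos_sin_cache` and the toy sizes are assumptions, and nested lists stand in for tensors.

```python
import math


def cos_sin_cache(dim, seq_len, base=10000.0):
    """Return (cos, sin) tables of shape [seq_len][dim], mirroring _set_cos_sin_cache."""
    # One inverse frequency per pair of dimensions: base^(-i/dim) for i = 0, 2, 4, ...
    inv_freq = [1.0 / (base ** (i / dim)) for i in range(0, dim, 2)]
    cos, sin = [], []
    for t in range(seq_len):
        freqs = [t * f for f in inv_freq]  # row t of outer(t, inv_freq)
        emb = freqs + freqs                # concatenate (freqs, freqs) along the last dim
        cos.append([math.cos(a) for a in emb])
        sin.append([math.sin(a) for a in emb])
    return cos, sin


cos, sin = cos_sin_cache(dim=4, seq_len=3)
print(cos[1], sin[1])
```

Position 0 always yields cosine 1 and sine 0, so the first token is left unrotated. The duplicated halves are what allow `rotate_half` to pair dimension `i` with dimension `i + dim/2`.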

|

| 130 |

+

|

| 131 |

+

|

| 132 |

+

# Copied from transformers.models.llama.modeling_llama.rotate_half

|

| 133 |

+

def rotate_half(x):

|

| 134 |

+

"""Rotates half the hidden dims of the input."""

|

| 135 |

+

x1 = x[..., : x.shape[-1] // 2]

|

| 136 |

+

x2 = x[..., x.shape[-1] // 2 :]

|

| 137 |

+

return torch.cat((-x2, x1), dim=-1)

|

| 138 |

+

|

| 139 |

+

|

| 140 |

+

# Copied from transformers.models.llama.modeling_llama.apply_rotary_pos_emb

|

| 141 |

+

def apply_rotary_pos_emb(q, k, cos, sin, position_ids, unsqueeze_dim=1):

|

| 142 |

+

"""Applies Rotary Position Embedding to the query and key tensors.

|

| 143 |

+

|

| 144 |

+

Args:

|

| 145 |

+

q (`torch.Tensor`): The query tensor.

|

| 146 |

+

k (`torch.Tensor`): The key tensor.

|

| 147 |

+

cos (`torch.Tensor`): The cosine part of the rotary embedding.

|

| 148 |

+

sin (`torch.Tensor`): The sine part of the rotary embedding.

|

| 149 |

+

position_ids (`torch.Tensor`):

|

| 150 |

+

The position indices of the tokens corresponding to the query and key tensors. For example, this can be

|

| 151 |

+

used to pass offsetted position ids when working with a KV-cache.

|

| 152 |

+

unsqueeze_dim (`int`, *optional*, defaults to 1):

|

| 153 |

+

The 'unsqueeze_dim' argument specifies the dimension along which to unsqueeze cos[position_ids] and

|

| 154 |

+

sin[position_ids] so that they can be properly broadcasted to the dimensions of q and k. For example, note

|

| 155 |

+

that cos[position_ids] and sin[position_ids] have the shape [batch_size, seq_len, head_dim]. Then, if q and

|

| 156 |

+

k have the shape [batch_size, heads, seq_len, head_dim], then setting unsqueeze_dim=1 makes

|

| 157 |

+

cos[position_ids] and sin[position_ids] broadcastable to the shapes of q and k. Similarly, if q and k have

|

| 158 |

+

the shape [batch_size, seq_len, heads, head_dim], then set unsqueeze_dim=2.

|

| 159 |

+

Returns:

|

| 160 |

+

`tuple(torch.Tensor)` comprising the query and key tensors rotated using the Rotary Position Embedding.

|

| 161 |

+

"""

|

| 162 |

+

cos = cos[position_ids].unsqueeze(unsqueeze_dim)

|

| 163 |

+

sin = sin[position_ids].unsqueeze(unsqueeze_dim)

|

| 164 |

+

q_embed = (q * cos) + (rotate_half(q) * sin)

|

| 165 |

+

k_embed = (k * cos) + (rotate_half(k) * sin)

|

| 166 |

+

return q_embed, k_embed

|

| 167 |

+

|

| 168 |

+

|
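To illustrate what `rotate_half` and `apply_rotary_pos_emb` compute, here is a small sketch that uses plain Python lists instead of tensors. It assumes the usual RoPE frequencies `base ** (-2i / head_dim)` with `base=10000.0`; the helper names `rope_angles`, `apply_rope`, and `dot` are hypothetical and not part of this file. Under those assumptions it shows that the rotation preserves vector norms and that the query-key score depends only on the relative position.

```python
import math


def rotate_half(x):
    """List analogue of rotate_half: (x1, x2) -> (-x2, x1)."""
    half = len(x) // 2
    return [-v for v in x[half:]] + x[:half]


def rope_angles(position, head_dim, base=10000.0):
    """cos/sin rows for one position (assumed frequency schedule)."""
    half = head_dim // 2
    inv_freq = [base ** (-2.0 * i / head_dim) for i in range(half)]
    # The half-length angle list is duplicated so it lines up with rotate_half.
    angles = [position * f for f in inv_freq] * 2
    return [math.cos(a) for a in angles], [math.sin(a) for a in angles]


def apply_rope(x, position, base=10000.0):
    """Same formula as in the file: (x * cos) + (rotate_half(x) * sin)."""
    cos, sin = rope_angles(position, len(x), base)
    rotated = rotate_half(x)
    return [xi * c + ri * s for xi, c, ri, s in zip(x, cos, rotated, sin)]


def dot(a, b):
    return sum(u * v for u, v in zip(a, b))


# Hypothetical query/key vectors with head_dim = 4.
q = [0.5, -1.0, 2.0, 0.25]
k = [1.5, 0.3, -0.7, 1.0]

# Both pairs of positions are 4 apart, so the scores should agree.
score_a = dot(apply_rope(q, 7), apply_rope(k, 3))
score_b = dot(apply_rope(q, 104), apply_rope(k, 100))

# The rotation should leave the squared norm unchanged.
norm_before = dot(q, q)
norm_after = dot(apply_rope(q, 7), apply_rope(q, 7))
```

Because each pair `(x[i], x[i + head_dim // 2])` is rotated by an angle proportional to the position, the dot product of a rotated query and key only sees the difference between their angles.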

| 169 |

+

class MistralMLP(nn.Module):

|

| 170 |

+

def __init__(self, config):

|

| 171 |

+

super().__init__()

|

| 172 |

+

self.config = config

|

| 173 |

+

self.hidden_size = config.hidden_size

|

| 174 |

+

self.intermediate_size = config.intermediate_size

|

| 175 |

+

self.gate_proj = nn.Linear(self.hidden_size, self.intermediate_size, bias=False)

|

| 176 |

+

self.up_proj = nn.Linear(self.hidden_size, self.intermediate_size, bias=False)

|

| 177 |

+

self.down_proj = nn.Linear(self.intermediate_size, self.hidden_size, bias=False)

|

| 178 |

+

self.act_fn = ACT2FN[config.hidden_act]

|

| 179 |

+

|

| 180 |

+

def forward(self, x):

|

| 181 |

+

return self.down_proj(self.act_fn(self.gate_proj(x)) * self.up_proj(x))

|

| 182 |

+

|

| 183 |

+

|

| 184 |

+

# Copied from transformers.models.llama.modeling_llama.repeat_kv

|

| 185 |

+

def repeat_kv(hidden_states: torch.Tensor, n_rep: int) -> torch.Tensor:

|

| 186 |

+

"""

|

| 187 |

+

This is the equivalent of torch.repeat_interleave(x, dim=1, repeats=n_rep). The hidden states go from (batch,

|

| 188 |

+

num_key_value_heads, seqlen, head_dim) to (batch, num_attention_heads, seqlen, head_dim)

|

| 189 |

+

"""

|

| 190 |

+

batch, num_key_value_heads, slen, head_dim = hidden_states.shape

|

| 191 |

+

if n_rep == 1:

|

| 192 |

+

return hidden_states

|

| 193 |

+

hidden_states = hidden_states[:, :, None, :, :].expand(batch, num_key_value_heads, n_rep, slen, head_dim)

|

| 194 |

+

return hidden_states.reshape(batch, num_key_value_heads * n_rep, slen, head_dim)

|

| 195 |

+

|

| 196 |

+

|
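The head ordering produced by `repeat_kv` can be sketched with plain lists. This is only an illustration of the head axis, with hypothetical head counts and a hypothetical helper name `repeat_kv_heads`; it is not the tensor code above. Each key/value head is repeated `n_rep` times in a row, so query head `i` ends up paired with key/value head `i // n_rep`.

```python
def repeat_kv_heads(kv_heads, n_rep):
    """List analogue of repeat_kv along the head axis."""
    # expand(...) followed by reshape(...) places the copies of each head
    # next to each other, rather than tiling the whole list of heads.
    return [head for head in kv_heads for _ in range(n_rep)]


kv_heads = ["kv0", "kv1"]  # hypothetical: 2 key/value heads
n_rep = 3                  # hypothetical: 6 query heads // 2 key/value heads

expanded = repeat_kv_heads(kv_heads, n_rep)

# Query head i reads from key/value head i // n_rep.
shared = [expanded[i] == kv_heads[i // n_rep] for i in range(len(expanded))]
```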

| 197 |

+

class MistralAttention(nn.Module):

|

| 198 |

+

"""

|

| 199 |

+

Multi-headed attention from 'Attention Is All You Need' paper. Modified to use sliding window attention: Longformer

|

| 200 |

+

and "Generating Long Sequences with Sparse Transformers".

|

| 201 |

+

"""

|

| 202 |

+

|

| 203 |

+

def __init__(self, config: MistralConfig, layer_idx: Optional[int] = None):

|

| 204 |

+

super().__init__()

|

| 205 |

+

self.config = config

|

| 206 |

+

self.layer_idx = layer_idx

|

| 207 |

+

if layer_idx is None:

|

| 208 |

+

logger.warning_once(

|

| 209 |

+

f"Instantiating {self.__class__.__name__} without passing `layer_idx` is not recommended and will lead "

|

| 210 |

+

"to errors during the forward call, if caching is used. Please make sure to provide a `layer_idx` "

|

| 211 |

+

"when creating this class."

|

| 212 |

+

)

|

| 213 |

+

|

| 214 |

+

self.hidden_size = config.hidden_size

|

| 215 |

+

self.num_heads = config.num_attention_heads

|

| 216 |

+

self.head_dim = self.hidden_size // self.num_heads

|

| 217 |

+

self.num_key_value_heads = config.num_key_value_heads

|

| 218 |

+

self.num_key_value_groups = self.num_heads // self.num_key_value_heads

|

| 219 |

+

self.max_position_embeddings = config.max_position_embeddings

|

| 220 |

+

self.rope_theta = config.rope_theta

|

| 221 |

+

self.attention_dropout = config.attention_dropout

|

| 222 |

+

|

| 223 |

+

if (self.head_dim * self.num_heads) != self.hidden_size:

|

| 224 |

+

raise ValueError(

|

| 225 |

+

f"hidden_size must be divisible by num_heads (got `hidden_size`: {self.hidden_size}"

|

| 226 |

+

f" and `num_heads`: {self.num_heads})."

|

| 227 |

+

)

|

| 228 |

+

self.q_proj = nn.Linear(self.hidden_size, self.num_heads * self.head_dim, bias=False)

|

| 229 |

+

self.k_proj = nn.Linear(self.hidden_size, self.num_key_value_heads * self.head_dim, bias=False)

|

| 230 |

+

self.v_proj = nn.Linear(self.hidden_size, self.num_key_value_heads * self.head_dim, bias=False)

|

| 231 |

+

self.o_proj = nn.Linear(self.num_heads * self.head_dim, self.hidden_size, bias=False)

|

| 232 |

+

|

| 233 |

+

self.rotary_emb = MistralRotaryEmbedding(

|

| 234 |

+

self.head_dim,

|

| 235 |

+

max_position_embeddings=self.max_position_embeddings,

|

| 236 |

+

base=self.rope_theta,

|

| 237 |

+

)

|

| 238 |

+

|

| 239 |

+

def _shape(self, tensor: torch.Tensor, seq_len: int, bsz: int):

|

| 240 |

+

return tensor.view(bsz, seq_len, self.num_heads, self.head_dim).transpose(1, 2).contiguous()

|

| 241 |

+

|

| 242 |

+

def forward(

|

| 243 |

+

self,

|

| 244 |

+

hidden_states: torch.Tensor,

|

| 245 |

+

attention_mask: Optional[torch.Tensor] = None,

|

| 246 |

+

position_ids: Optional[torch.LongTensor] = None,

|

| 247 |

+

past_key_value: Optional[Cache] = None,

|

| 248 |

+

output_attentions: bool = False,

|

| 249 |

+

use_cache: bool = False,

|

| 250 |

+

**kwargs,

|

| 251 |

+

) -> Tuple[torch.Tensor, Optional[torch.Tensor], Optional[Tuple[torch.Tensor]]]:

|

| 252 |

+

if "padding_mask" in kwargs:

|

| 253 |

+

warnings.warn(

|

| 254 |

+

"Passing `padding_mask` is deprecated and will be removed in v4.37. Please make sure to use `attention_mask` instead."

|

| 255 |

+

)

|

| 256 |

+

bsz, q_len, _ = hidden_states.size()

|

| 257 |

+

|

| 258 |

+

query_states = self.q_proj(hidden_states)

|

| 259 |

+

key_states = self.k_proj(hidden_states)

|

| 260 |

+

value_states = self.v_proj(hidden_states)

|

| 261 |

+

|

| 262 |

+

query_states = query_states.view(bsz, q_len, self.num_heads, self.head_dim).transpose(1, 2)

|

| 263 |

+

key_states = key_states.view(bsz, q_len, self.num_key_value_heads, self.head_dim).transpose(1, 2)

|

| 264 |

+

value_states = value_states.view(bsz, q_len, self.num_key_value_heads, self.head_dim).transpose(1, 2)

|

| 265 |

+

|

| 266 |

+

kv_seq_len = key_states.shape[-2]

|

| 267 |

+

if past_key_value is not None:

|

| 268 |

+

if self.layer_idx is None:

|

| 269 |

+

raise ValueError(

|

| 270 |

+

f"The cache structure has changed since version v4.36. If you are using {self.__class__.__name__} "

|

| 271 |

+

"for auto-regressive decoding with k/v caching, please make sure to initialize the attention class "

|

| 272 |

+

"with a layer index."

|

| 273 |

+

)

|

| 274 |

+

kv_seq_len += past_key_value.get_usable_length(kv_seq_len, self.layer_idx)

|

| 275 |

+

cos, sin = self.rotary_emb(value_states, seq_len=kv_seq_len)

|

| 276 |

+

query_states, key_states = apply_rotary_pos_emb(query_states, key_states, cos, sin, position_ids)

|

| 277 |

+

|

| 278 |

+

if past_key_value is not None:

|

| 279 |

+

cache_kwargs = {"sin": sin, "cos": cos} # Specific to RoPE models

|

| 280 |

+

key_states, value_states = past_key_value.update(key_states, value_states, self.layer_idx, cache_kwargs)

|

| 281 |

+

|

| 282 |

+

# repeat k/v heads if n_kv_heads < n_heads

|

| 283 |

+

key_states = repeat_kv(key_states, self.num_key_value_groups)

|

| 284 |

+

value_states = repeat_kv(value_states, self.num_key_value_groups)

|

| 285 |

+

|

| 286 |

+

attn_weights = torch.matmul(query_states, key_states.transpose(2, 3)) / math.sqrt(self.head_dim)

|

| 287 |

+

|

| 288 |

+

if attn_weights.size() != (bsz, self.num_heads, q_len, kv_seq_len):

|

| 289 |

+

raise ValueError(

|

| 290 |

+

f"Attention weights should be of size {(bsz, self.num_heads, q_len, kv_seq_len)}, but is"

|

| 291 |

+

f" {attn_weights.size()}"

|

| 292 |

+

)

|

| 293 |

+

|

| 294 |

+

if attention_mask is not None:

|

| 295 |

+

if attention_mask.size() != (bsz, 1, q_len, kv_seq_len):

|

| 296 |

+

raise ValueError(

|

| 297 |

+

f"Attention mask should be of size {(bsz, 1, q_len, kv_seq_len)}, but is {attention_mask.size()}"

|

| 298 |

+

)

|

| 299 |

+

|

| 300 |

+

attn_weights = attn_weights + attention_mask

|

| 301 |

+

|

| 302 |

+

# upcast attention to fp32

|

| 303 |

+

attn_weights = nn.functional.softmax(attn_weights, dim=-1, dtype=torch.float32).to(query_states.dtype)

|

| 304 |

+

attn_weights = nn.functional.dropout(attn_weights, p=self.attention_dropout, training=self.training)

|

| 305 |

+

attn_output = torch.matmul(attn_weights, value_states)

|

| 306 |

+

|

| 307 |

+

if attn_output.size() != (bsz, self.num_heads, q_len, self.head_dim):

|

| 308 |

+

raise ValueError(

|

| 309 |

+

f"`attn_output` should be of size {(bsz, self.num_heads, q_len, self.head_dim)}, but is"

|

| 310 |

+

f" {attn_output.size()}"

|

| 311 |

+

)

|

| 312 |

+

|

| 313 |

+

attn_output = attn_output.transpose(1, 2).contiguous()

|

| 314 |

+

attn_output = attn_output.reshape(bsz, q_len, self.hidden_size)

|

| 315 |

+

|

| 316 |

+

attn_output = self.o_proj(attn_output)

|

| 317 |

+

|

| 318 |

+

if not output_attentions:

|

| 319 |

+

attn_weights = None

|

| 320 |

+

|

| 321 |

+

return attn_output, attn_weights, past_key_value

|

| 322 |

+

|

| 323 |

+

|
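The core of the eager forward pass above (scaled scores, additive mask, softmax, weighted sum of values) can be sketched for a single query row with plain Python lists. This is a simplified illustration under stated assumptions: one head, no dropout, no cache, and a large negative constant `NEG` standing in for the mask value; the names `softmax`, `attention_row`, and `NEG` are hypothetical.

```python
import math

NEG = -1e9  # hypothetical stand-in for the large negative value of a masked position


def softmax(row):
    """Numerically stable softmax over one list of scores."""
    peak = max(row)
    exps = [math.exp(v - peak) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def attention_row(q, keys, values, mask_row):
    """Attention output for one query vector against all keys."""
    scale = math.sqrt(len(q))  # plays the role of math.sqrt(self.head_dim)
    scores = [
        sum(a * b for a, b in zip(q, key)) / scale + m  # additive mask
        for key, m in zip(keys, mask_row)
    ]
    weights = softmax(scores)
    head_dim = len(values[0])
    out = [sum(w * v[d] for w, v in zip(weights, values)) for d in range(head_dim)]
    return weights, out


# Hypothetical data: 3 key/value positions, head_dim = 2, last position masked.
q = [1.0, 0.0]
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
values = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
mask = [0.0, 0.0, NEG]

weights, out = attention_row(q, keys, values, mask)
```

Adding the mask before the softmax, rather than zeroing weights afterwards, keeps the remaining weights normalized to sum to one.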

| 324 |

+

class MistralFlashAttention2(MistralAttention):

|

| 325 |

+

"""

|

| 326 |

+

Mistral flash attention module. This module inherits from `MistralAttention` as the weights of the module stay

|

| 327 |

+

untouched. The only required change would be on the forward pass where it needs to correctly call the public API of

|

| 328 |

+