
---
license: mit
datasets:
- TIGER-Lab/VideoFeedback
language:
- en
metrics:
- accuracy/spcc
library_name: transformers
pipeline_tag: video-text-to-text
---

[📃Paper](https://arxiv.org/abs/2406.15252) | [🌐Website](https://tiger-ai-lab.github.io/VideoScore/) | [💻Github](https://github.com/TIGER-AI-Lab/VideoScore) | [🛢️Datasets](https://huggingface.co/datasets/TIGER-Lab/VideoFeedback) | [🤗Model (VideoScore)](https://huggingface.co/TIGER-Lab/VideoScore) | [🤗Model (VideoScore-anno-only)](https://huggingface.co/TIGER-Lab/VideoScore-anno-only) | [🤗Model (VideoScore-v1.1)](https://huggingface.co/TIGER-Lab/VideoScore-v1.1)| [🤗Demo](https://huggingface.co/spaces/TIGER-Lab/VideoScore)

## Introduction

- 🤯🤯 Try the new version, [VideoScore-v1.1](https://huggingface.co/TIGER-Lab/VideoScore-v1.1), a variant of [VideoScore](https://huggingface.co/TIGER-Lab/VideoScore) with better performance on the **"text-to-video alignment"** subscore and support for **48 frames** at inference time!

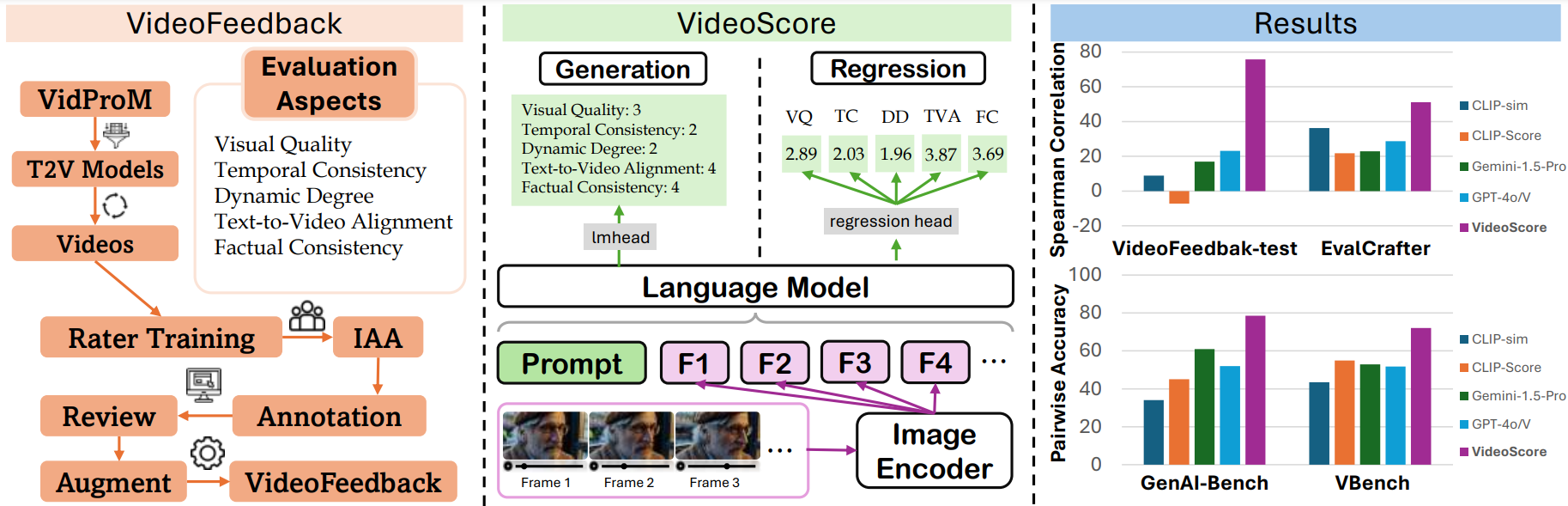

- The [VideoScore](https://huggingface.co/TIGER-Lab/VideoScore) series consists of video quality evaluation models that use [Mantis-8B-Idefics2](https://huggingface.co/TIGER-Lab/Mantis-8B-Idefics2) as the base model and are trained on [VideoFeedback](https://huggingface.co/datasets/TIGER-Lab/VideoFeedback), a large video evaluation dataset with multi-aspect human scores.

- Like VideoScore, VideoScore-v1.1 reaches a Spearman correlation of about 74 with human ratings on VideoFeedback-test, surpassing all MLLM-prompting methods and feature-based metrics.
  VideoScore-v1.1 also beats the best baselines on two other benchmarks, GenAI-Bench and VBench, showing high alignment with human evaluations.
  For details on the data in these benchmarks, please refer to [VideoScore-Bench](https://huggingface.co/datasets/TIGER-Lab/VideoScore-Bench).

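- VideoScore-v1.1 is a **regression version** model: it outputs one float score per evaluation aspect directly instead of generating the scores as text.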
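Because the regression head emits one float per aspect, the raw outputs can be paired with aspect names directly. A minimal sketch, assuming the aspect order printed at the end of the inference example below (visual quality, temporal consistency, dynamic degree, text-to-video alignment, factual consistency); `label_scores` is a hypothetical helper, not part of the VideoScore or Mantis packages.

```python
from typing import Dict, Sequence

# Aspect order as listed in the model card's inference example (assumed fixed).
ASPECTS = (
    "visual quality",
    "temporal consistency",
    "dynamic degree",
    "text-to-video alignment",
    "factual consistency",
)


def label_scores(raw_scores: Sequence[float], round_digit: int = 3) -> Dict[str, float]:
    """Pair each regression output with its aspect name (hypothetical helper)."""
    if len(raw_scores) != len(ASPECTS):
        raise ValueError(f"expected {len(ASPECTS)} scores, got {len(raw_scores)}")
    return {name: round(float(s), round_digit) for name, s in zip(ASPECTS, raw_scores)}


# Example using the VideoScore-v1.1 output reported in this model card.
print(label_scores([2.328, 2.484, 2.562, 1.969, 2.594]))
```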

## Evaluation Results

We evaluate VideoScore-v1.1 on VideoFeedback-test, using as the indicator the Spearman correlation between the model's outputs and the human ratings, averaged over all evaluation aspects.

The evaluation results are shown below:

| Metric | VideoFeedback-test (avg. SPCC) |
|:-----------------:|:------------------:|
| VideoScore-v1.1 | **74.0** |
| Gemini-1.5-Pro | 22.1 |
| Gemini-1.5-Flash | 20.8 |
| GPT-4o | <u>23.1</u> |
| CLIP-sim | 8.9 |
| DINO-sim | 7.5 |
| SSIM-sim | 13.4 |
| CLIP-Score | -7.2 |
| LLaVA-1.5-7B | 8.5 |
| LLaVA-1.6-7B | -3.1 |
| X-CLIP-Score | -1.9 |
| PIQE | -10.1 |
| BRISQUE | -20.3 |
| Idefics2 | 6.5 |
| MSE-dyn | -5.5 |
| SSIM-dyn | -12.9 |

The best result in the VideoScore series is in **bold**, and the best baseline is <u>underlined</u>.

## Usage

### Installation

```
pip install git+https://github.com/TIGER-AI-Lab/VideoScore.git
# or
# pip install mantis-vl
```

### Inference

```

cd VideoScore/examples

```

```python
import av
import numpy as np
from PIL import Image
import torch
from transformers import AutoProcessor
from mantis.models.idefics2 import Idefics2ForSequenceClassification


def _read_video_pyav(container, indices: np.ndarray) -> np.ndarray:
    """Decode the frames at `indices` from an open PyAV container as RGB arrays."""
    frames = []
    container.seek(0)
    start_index = indices[0]
    end_index = indices[-1]
    for i, frame in enumerate(container.decode(video=0)):
        if i > end_index:
            break
        if i >= start_index and i in indices:
            frames.append(frame)
    return np.stack([x.to_ndarray(format="rgb24") for x in frames])


ROUND_DIGIT = 3
# Prompt text is kept verbatim: it is the query format the model expects.
REGRESSION_QUERY_PROMPT = """
Suppose you are an expert in judging and evaluating the quality of AI-generated videos,
please watch the following frames of a given video and see the text prompt for generating the video,
then give scores from 5 different dimensions:
(1) visual quality: the quality of the video in terms of clearness, resolution, brightness, and color
(2) temporal consistency, both the consistency of objects or humans and the smoothness of motion or movements
(3) dynamic degree, the degree of dynamic changes
(4) text-to-video alignment, the alignment between the text prompt and the video content
(5) factual consistency, the consistency of the video content with the common-sense and factual knowledge
for each dimension, output a float number from 1.0 to 4.0,
the higher the number is, the better the video performs in that sub-score,
the lowest 1.0 means Bad, the highest 4.0 means Perfect/Real (the video is like a real video)
Here is an output example:
visual quality: 3.2
temporal consistency: 2.7
dynamic degree: 4.0
text-to-video alignment: 2.3
factual consistency: 1.8
For this video, the text prompt is "{text_prompt}",
all the frames of video are as follows:
"""

# For the original VideoScore, use:
# MAX_NUM_FRAMES = 16
# model_name = "TIGER-Lab/VideoScore"

# =======================================
# we support 48 frames in VideoScore-v1.1
# =======================================
MAX_NUM_FRAMES = 48
model_name = "TIGER-Lab/VideoScore-v1.1"

video_path = "video1.mp4"
video_prompt = "Near the Elephant Gate village, they approach the haunted house at night. Rajiv feels anxious, but Bhavesh encourages him. As they reach the house, a mysterious sound in the air adds to the suspense."

processor = AutoProcessor.from_pretrained(model_name)
model = Idefics2ForSequenceClassification.from_pretrained(model_name, torch_dtype=torch.bfloat16).eval()
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
model.to(device)

# Uniformly sample up to MAX_NUM_FRAMES frames from the video
container = av.open(video_path)
total_frames = container.streams.video[0].frames
if total_frames > MAX_NUM_FRAMES:
    # linspace(endpoint=False) yields exactly MAX_NUM_FRAMES indices;
    # arange with a float step can return one extra index due to rounding
    indices = np.linspace(0, total_frames, MAX_NUM_FRAMES, endpoint=False).astype(int)
else:
    indices = np.arange(total_frames)
frames = [Image.fromarray(x) for x in _read_video_pyav(container, indices)]

# Fill in the text prompt and append one <image> placeholder per sampled frame
eval_prompt = REGRESSION_QUERY_PROMPT.format(text_prompt=video_prompt)
num_image_token = eval_prompt.count("<image>")
if num_image_token < len(frames):
    eval_prompt += "<image> " * (len(frames) - num_image_token)

inputs = processor(text=eval_prompt, images=frames, return_tensors="pt")
inputs = {k: v.to(model.device) for k, v in inputs.items()}

with torch.no_grad():
    outputs = model(**inputs)

# The regression head outputs one logit per aspect; each logit is that aspect's score
logits = outputs.logits
num_aspects = logits.shape[-1]
aspect_scores = [round(logits[0, i].item(), ROUND_DIGIT) for i in range(num_aspects)]
print(aspect_scores)

"""
model output on visual quality, temporal consistency, dynamic degree,
text-to-video alignment, factual consistency, respectively
VideoScore:
[2.297, 2.469, 2.906, 2.766, 2.516]
VideoScore-v1.1:
[2.328, 2.484, 2.562, 1.969, 2.594]
"""
```
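The two preprocessing steps in the inference example (uniform frame sampling and appending one `<image>` placeholder per frame) can be isolated into small helpers for reuse or testing. This is a sketch under the same conventions as the example, with `max_frames` set to 48 for VideoScore-v1.1 or 16 for VideoScore; `sample_frame_indices` and `add_image_tokens` are hypothetical names, not part of the VideoScore or Mantis packages.

```python
import numpy as np


def sample_frame_indices(total_frames: int, max_frames: int) -> np.ndarray:
    """Uniformly pick at most `max_frames` frame indices from [0, total_frames)."""
    if total_frames <= max_frames:
        return np.arange(total_frames)
    # linspace with endpoint=False always yields exactly max_frames values.
    return np.linspace(0, total_frames, max_frames, endpoint=False).astype(int)


def add_image_tokens(prompt: str, num_frames: int) -> str:
    """Append one "<image>" placeholder per frame not already referenced in the prompt."""
    missing = num_frames - prompt.count("<image>")
    return prompt + "<image> " * max(missing, 0)
```

Using `np.linspace(..., endpoint=False)` rather than `np.arange` with a float step guarantees exactly `max_frames` indices, since floating-point rounding can make `arange` return one extra value.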

### Training

See [VideoScore/training](https://github.com/TIGER-AI-Lab/VideoScore/tree/main/training) for details.

### Evaluation

See [VideoScore/benchmark](https://github.com/TIGER-AI-Lab/VideoScore/tree/main/benchmark) for details.

## Citation

```bibtex
@article{he2024videoscore,
  title = {VideoScore: Building Automatic Metrics to Simulate Fine-grained Human Feedback for Video Generation},
  author = {He, Xuan and Jiang, Dongfu and Zhang, Ge and Ku, Max and Soni, Achint and Siu, Sherman and Chen, Haonan and Chandra, Abhranil and Jiang, Ziyan and Arulraj, Aaran and Wang, Kai and Do, Quy Duc and Ni, Yuansheng and Lyu, Bohan and Narsupalli, Yaswanth and Fan, Rongqi and Lyu, Zhiheng and Lin, Yuchen and Chen, Wenhu},
  journal = {ArXiv},
  year = {2024},
  volume = {abs/2406.15252},
  url = {https://arxiv.org/abs/2406.15252},
}
```