Add files using upload-large-folder tool

- .gitattributes +1 -0

- LICENSE.md +51 -0

- README.md +242 -0

- SD3.5L_example_workflow.json +688 -0

- mmdit.png +0 -0

- model_index.json +40 -0

- scheduler/scheduler_config.json +6 -0

- sd3.5_large.safetensors +3 -0

- sd3.5_large_demo.png +3 -0

- text_encoder/config.json +24 -0

- text_encoder/model.fp16.safetensors +3 -0

- text_encoder/model.safetensors +3 -0

- text_encoder_2/config.json +24 -0

- text_encoder_2/model.fp16.safetensors +3 -0

- text_encoder_2/model.safetensors +3 -0

- text_encoder_3/config.json +31 -0

- text_encoder_3/model-00001-of-00002.safetensors +3 -0

- text_encoder_3/model-00002-of-00002.safetensors +3 -0

- text_encoder_3/model.fp16-00001-of-00002.safetensors +3 -0

- text_encoder_3/model.fp16-00002-of-00002.safetensors +3 -0

- text_encoder_3/model.safetensors.index.fp16.json +226 -0

- text_encoder_3/model.safetensors.index.json +226 -0

- text_encoders/README.md +11 -0

- text_encoders/clip_g.safetensors +3 -0

- text_encoders/clip_l.safetensors +3 -0

- text_encoders/t5xxl_fp16.safetensors +3 -0

- text_encoders/t5xxl_fp8_e4m3fn.safetensors +3 -0

- tokenizer/merges.txt +0 -0

- tokenizer/special_tokens_map.json +30 -0

- tokenizer/tokenizer_config.json +30 -0

- tokenizer/vocab.json +0 -0

- tokenizer_2/merges.txt +0 -0

- tokenizer_2/special_tokens_map.json +30 -0

- tokenizer_2/tokenizer_config.json +38 -0

- tokenizer_2/vocab.json +0 -0

- tokenizer_3/special_tokens_map.json +125 -0

- tokenizer_3/spiece.model +3 -0

- tokenizer_3/tokenizer.json +0 -0

- tokenizer_3/tokenizer_config.json +940 -0

- transformer/config.json +16 -0

- transformer/diffusion_pytorch_model-00001-of-00002.safetensors +3 -0

- transformer/diffusion_pytorch_model-00002-of-00002.safetensors +3 -0

- transformer/diffusion_pytorch_model.safetensors.index.json +0 -0

- vae/config.json +38 -0

- vae/diffusion_pytorch_model.safetensors +3 -0

.gitattributes

CHANGED

|

@@ -33,3 +33,4 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text

|

|

| 33 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

| 36 |

+

sd3.5_large_demo.png filter=lfs diff=lfs merge=lfs -text
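
The added rule routes `sd3.5_large_demo.png` through Git LFS. As a rough illustration of how such rules are read, the sketch below checks a file name against the four rules visible in this hunk. It is a simplification under stated assumptions: it only handles slash-free patterns, uses `fnmatch` as a stand-in for Git's own pattern matching, and ignores the rest of the real `.gitattributes` file. The function names are hypothetical.

```python
from fnmatch import fnmatchcase
from pathlib import PurePosixPath

# Only the rules visible in the hunk above; the real file has more lines.
GITATTRIBUTES = """\
*.zip filter=lfs diff=lfs merge=lfs -text
*.zst filter=lfs diff=lfs merge=lfs -text
*tfevents* filter=lfs diff=lfs merge=lfs -text
sd3.5_large_demo.png filter=lfs diff=lfs merge=lfs -text
"""


def parse_rules(text):
    """Split each non-empty, non-comment line into (pattern, [attributes])."""
    rules = []
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        pattern, *attrs = line.split()
        rules.append((pattern, attrs))
    return rules


def is_lfs_tracked(path, rules):
    """Approximate check: does a matching rule set filter=lfs for this path?

    ASSUMPTION: patterns without a slash are matched against the file name
    only, and patterns containing a slash are skipped by this sketch.
    """
    name = PurePosixPath(path).name
    tracked = False
    for pattern, attrs in rules:
        if "/" in pattern:
            continue  # directory-aware patterns are out of scope here
        if fnmatchcase(name, pattern):
            # Later matching lines override earlier ones.
            tracked = "filter=lfs" in attrs
    return tracked


rules = parse_rules(GITATTRIBUTES)
print(is_lfs_tracked("sd3.5_large_demo.png", rules))
print(is_lfs_tracked("mmdit.png", rules))
```

With only these four rules, the demo image matches and `mmdit.png` does not; the full file may contain other rules that change that answer.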

|

LICENSE.md

ADDED

|

@@ -0,0 +1,51 @@

| 1 |

+

STABILITY AI COMMUNITY LICENSE AGREEMENT

|

| 2 |

+

Last Updated: July 5, 2024

|

| 3 |

+

|

| 4 |

+

|

| 5 |

+

I. INTRODUCTION

|

| 6 |

+

|

| 7 |

+

This Agreement applies to any individual person or entity ("You", "Your" or "Licensee") that uses or distributes any portion or element of the Stability AI Materials or Derivative Works thereof for any Research & Non-Commercial or Commercial purpose. Capitalized terms not otherwise defined herein are defined in Section V below.

|

| 8 |

+

|

| 9 |

+

|

| 10 |

+

This Agreement is intended to allow research, non-commercial, and limited commercial uses of the Models free of charge. In order to ensure that certain limited commercial uses of the Models continue to be allowed, this Agreement preserves free access to the Models for people or organizations generating annual revenue of less than US $1,000,000 (or local currency equivalent).

|

| 11 |

+

|

| 12 |

+

|

| 13 |

+

By clicking "I Accept" or by using or distributing or using any portion or element of the Stability Materials or Derivative Works, You agree that You have read, understood and are bound by the terms of this Agreement. If You are acting on behalf of a company, organization or other entity, then "You" includes you and that entity, and You agree that You: (i) are an authorized representative of such entity with the authority to bind such entity to this Agreement, and (ii) You agree to the terms of this Agreement on that entity's behalf.

|

| 14 |

+

|

| 15 |

+

II. RESEARCH & NON-COMMERCIAL USE LICENSE

|

| 16 |

+

|

| 17 |

+

Subject to the terms of this Agreement, Stability AI grants You a non-exclusive, worldwide, non-transferable, non-sublicensable, revocable and royalty-free limited license under Stability AI's intellectual property or other rights owned by Stability AI embodied in the Stability AI Materials to use, reproduce, distribute, and create Derivative Works of, and make modifications to, the Stability AI Materials for any Research or Non-Commercial Purpose. "Research Purpose" means academic or scientific advancement, and in each case, is not primarily intended for commercial advantage or monetary compensation to You or others. "Non-Commercial Purpose" means any purpose other than a Research Purpose that is not primarily intended for commercial advantage or monetary compensation to You or others, such as personal use (i.e., hobbyist) or evaluation and testing.

|

| 18 |

+

|

| 19 |

+

III. COMMERCIAL USE LICENSE

|

| 20 |

+

|

| 21 |

+

Subject to the terms of this Agreement (including the remainder of this Section III), Stability AI grants You a non-exclusive, worldwide, non-transferable, non-sublicensable, revocable and royalty-free limited license under Stability AI's intellectual property or other rights owned by Stability AI embodied in the Stability AI Materials to use, reproduce, distribute, and create Derivative Works of, and make modifications to, the Stability AI Materials for any Commercial Purpose. "Commercial Purpose" means any purpose other than a Research Purpose or Non-Commercial Purpose that is primarily intended for commercial advantage or monetary compensation to You or others, including but not limited to, (i) creating, modifying, or distributing Your product or service, including via a hosted service or application programming interface, and (ii) for Your business's or organization's internal operations.

|

| 22 |

+

If You are using or distributing the Stability AI Materials for a Commercial Purpose, You must register with Stability AI at (https://stability.ai/community-license). If at any time You or Your Affiliate(s), either individually or in aggregate, generate more than USD $1,000,000 in annual revenue (or the equivalent thereof in Your local currency), regardless of whether that revenue is generated directly or indirectly from the Stability AI Materials or Derivative Works, any licenses granted to You under this Agreement shall terminate as of such date. You must request a license from Stability AI at (https://stability.ai/enterprise) , which Stability AI may grant to You in its sole discretion. If you receive Stability AI Materials, or any Derivative Works thereof, from a Licensee as part of an integrated end user product, then Section III of this Agreement will not apply to you.

|

| 23 |

+

|

| 24 |

+

IV. GENERAL TERMS

|

| 25 |

+

|

| 26 |

+

Your Research, Non-Commercial, and Commercial License(s) under this Agreement are subject to the following terms.

|

| 27 |

+

a. Distribution & Attribution. If You distribute or make available the Stability AI Materials or a Derivative Work to a third party, or a product or service that uses any portion of them, You shall: (i) provide a copy of this Agreement to that third party, (ii) retain the following attribution notice within a "Notice" text file distributed as a part of such copies: "This Stability AI Model is licensed under the Stability AI Community License, Copyright © Stability AI Ltd. All Rights Reserved", and (iii) prominently display "Powered by Stability AI" on a related website, user interface, blogpost, about page, or product documentation. If You create a Derivative Work, You may add your own attribution notice(s) to the "Notice" text file included with that Derivative Work, provided that You clearly indicate which attributions apply to the Stability AI Materials and state in the "Notice" text file that You changed the Stability AI Materials and how it was modified.

|

| 28 |

+

b. Use Restrictions. Your use of the Stability AI Materials and Derivative Works, including any output or results of the Stability AI Materials or Derivative Works, must comply with applicable laws and regulations (including Trade Control Laws and equivalent regulations) and adhere to the Documentation and Stability AI's AUP, which is hereby incorporated by reference. Furthermore, You will not use the Stability AI Materials or Derivative Works, or any output or results of the Stability AI Materials or Derivative Works, to create or improve any foundational generative AI model (excluding the Models or Derivative Works).

|

| 29 |

+

c. Intellectual Property.

|

| 30 |

+

(i) Trademark License. No trademark licenses are granted under this Agreement, and in connection with the Stability AI Materials or Derivative Works, You may not use any name or mark owned by or associated with Stability AI or any of its Affiliates, except as required under Section IV(a) herein.

|

| 31 |

+

(ii) Ownership of Derivative Works. As between You and Stability AI, You are the owner of Derivative Works You create, subject to Stability AI's ownership of the Stability AI Materials and any Derivative Works made by or for Stability AI.

|

| 32 |

+

(iii) Ownership of Outputs. As between You and Stability AI, You own any outputs generated from the Models or Derivative Works to the extent permitted by applicable law.

|

| 33 |

+

(iv) Disputes. If You or Your Affiliate(s) institute litigation or other proceedings against Stability AI (including a cross-claim or counterclaim in a lawsuit) alleging that the Stability AI Materials, Derivative Works or associated outputs or results, or any portion of any of the foregoing, constitutes infringement of intellectual property or other rights owned or licensable by You, then any licenses granted to You under this Agreement shall terminate as of the date such litigation or claim is filed or instituted. You will indemnify and hold harmless Stability AI from and against any claim by any third party arising out of or related to Your use or distribution of the Stability AI Materials or Derivative Works in violation of this Agreement.

|

| 34 |

+

(v) Feedback. From time to time, You may provide Stability AI with verbal and/or written suggestions, comments or other feedback related to Stability AI's existing or prospective technology, products or services (collectively, "Feedback"). You are not obligated to provide Stability AI with Feedback, but to the extent that You do, You hereby grant Stability AI a perpetual, irrevocable, royalty-free, fully-paid, sub-licensable, transferable, non-exclusive, worldwide right and license to exploit the Feedback in any manner without restriction. Your Feedback is provided "AS IS" and You make no warranties whatsoever about any Feedback.

|

| 35 |

+

d. Disclaimer Of Warranty. UNLESS REQUIRED BY APPLICABLE LAW, THE STABILITY AI MATERIALS AND ANY OUTPUT AND RESULTS THEREFROM ARE PROVIDED ON AN "AS IS" BASIS, WITHOUT WARRANTIES OF ANY KIND, EITHER EXPRESS OR IMPLIED, INCLUDING, WITHOUT LIMITATION, ANY WARRANTIES OF TITLE, NON-INFRINGEMENT, MERCHANTABILITY, OR FITNESS FOR A PARTICULAR PURPOSE. YOU ARE SOLELY RESPONSIBLE FOR DETERMINING THE APPROPRIATENESS OR LAWFULNESS OF USING OR REDISTRIBUTING THE STABILITY AI MATERIALS, DERIVATIVE WORKS OR ANY OUTPUT OR RESULTS AND ASSUME ANY RISKS ASSOCIATED WITH YOUR USE OF THE STABILITY AI MATERIALS, DERIVATIVE WORKS AND ANY OUTPUT AND RESULTS.

|

| 36 |

+

e. Limitation Of Liability. IN NO EVENT WILL STABILITY AI OR ITS AFFILIATES BE LIABLE UNDER ANY THEORY OF LIABILITY, WHETHER IN CONTRACT, TORT, NEGLIGENCE, PRODUCTS LIABILITY, OR OTHERWISE, ARISING OUT OF THIS AGREEMENT, FOR ANY LOST PROFITS OR ANY DIRECT, INDIRECT, SPECIAL, CONSEQUENTIAL, INCIDENTAL, EXEMPLARY OR PUNITIVE DAMAGES, EVEN IF STABILITY AI OR ITS AFFILIATES HAVE BEEN ADVISED OF THE POSSIBILITY OF ANY OF THE FOREGOING.

|

| 37 |

+

f. Term And Termination. The term of this Agreement will commence upon Your acceptance of this Agreement or access to the Stability AI Materials and will continue in full force and effect until terminated in accordance with the terms and conditions herein. Stability AI may terminate this Agreement if You are in breach of any term or condition of this Agreement. Upon termination of this Agreement, You shall delete and cease use of any Stability AI Materials or Derivative Works. Section IV(d), (e), and (g) shall survive the termination of this Agreement.

|

| 38 |

+

g. Governing Law. This Agreement will be governed by and constructed in accordance with the laws of the United States and the State of California without regard to choice of law principles, and the UN Convention on Contracts for International Sale of Goods does not apply to this Agreement.

|

| 39 |

+

|

| 40 |

+

V. DEFINITIONS

|

| 41 |

+

|

| 42 |

+

"Affiliate(s)" means any entity that directly or indirectly controls, is controlled by, or is under common control with the subject entity; for purposes of this definition, "control" means direct or indirect ownership or control of more than 50% of the voting interests of the subject entity.

|

| 43 |

+

"Agreement" means this Stability AI Community License Agreement.

|

| 44 |

+

"AUP" means the Stability AI Acceptable Use Policy available at https://stability.ai/use-policy, as may be updated from time to time.

|

| 45 |

+

"Derivative Work(s)" means (a) any derivative work of the Stability AI Materials as recognized by U.S. copyright laws and (b) any modifications to a Model, and any other model created which is based on or derived from the Model or the Model's output, including"fine tune" and "low-rank adaptation" models derived from a Model or a Model's output, but do not include the output of any Model.

|

| 46 |

+

"Documentation" means any specifications, manuals, documentation, and other written information provided by Stability AI related to the Software or Models.

|

| 47 |

+

"Model(s)" means, collectively, Stability AI's proprietary models and algorithms, including machine-learning models, trained model weights and other elements of the foregoing listed on Stability's Core Models Webpage available at, https://stability.ai/core-models, as may be updated from time to time.

|

| 48 |

+

"Stability AI" or "we" means Stability AI Ltd. and its Affiliates.

|

| 49 |

+

"Software" means Stability AI's proprietary software made available under this Agreement now or in the future.

|

| 50 |

+

"Stability AI Materials" means, collectively, Stability's proprietary Models, Software and Documentation (and any portion or combination thereof) made available under this Agreement.

|

| 51 |

+

"Trade Control Laws" means any applicable U.S. and non-U.S. export control and trade sanctions laws and regulations.

|

README.md

ADDED

|

@@ -0,0 +1,242 @@

| 1 |

+

---

|

| 2 |

+

license: other

|

| 3 |

+

license_name: stabilityai-ai-community

|

| 4 |

+

license_link: LICENSE.md

|

| 5 |

+

tags:

|

| 6 |

+

- text-to-image

|

| 7 |

+

- stable-diffusion

|

| 8 |

+

- diffusers

|

| 9 |

+

inference: true

|

| 10 |

+

extra_gated_prompt: >-

|

| 11 |

+

By clicking "Agree", you agree to the [License

|

| 12 |

+

Agreement](https://huggingface.co/stabilityai/stable-diffusion-3.5-large/blob/main/LICENSE.md)

|

| 13 |

+

and acknowledge Stability AI's [Privacy

|

| 14 |

+

Policy](https://stability.ai/privacy-policy).

|

| 15 |

+

extra_gated_fields:

|

| 16 |

+

Name: text

|

| 17 |

+

Email: text

|

| 18 |

+

Country: country

|

| 19 |

+

Organization or Affiliation: text

|

| 20 |

+

Receive email updates and promotions on Stability AI products, services, and research?:

|

| 21 |

+

type: select

|

| 22 |

+

options:

|

| 23 |

+

- 'Yes'

|

| 24 |

+

- 'No'

|

| 25 |

+

What do you intend to use the model for?:

|

| 26 |

+

type: select

|

| 27 |

+

options:

|

| 28 |

+

- Research

|

| 29 |

+

- Personal use

|

| 30 |

+

- Creative Professional

|

| 31 |

+

- Startup

|

| 32 |

+

- Enterprise

|

| 33 |

+

I agree to the License Agreement and acknowledge Stability AI's Privacy Policy: checkbox

|

| 34 |

+

|

| 35 |

+

language:

|

| 36 |

+

- en

|

| 37 |

+

pipeline_tag: text-to-image

|

| 38 |

+

---

|

| 39 |

+

|

| 40 |

+

# Stable Diffusion 3.5 Large

|

| 41 |

+

|

| 42 |

+

|

| 43 |

+

## Model

|

| 44 |

+

|

| 45 |

+

|

| 46 |

+

|

| 47 |

+

|

| 48 |

+

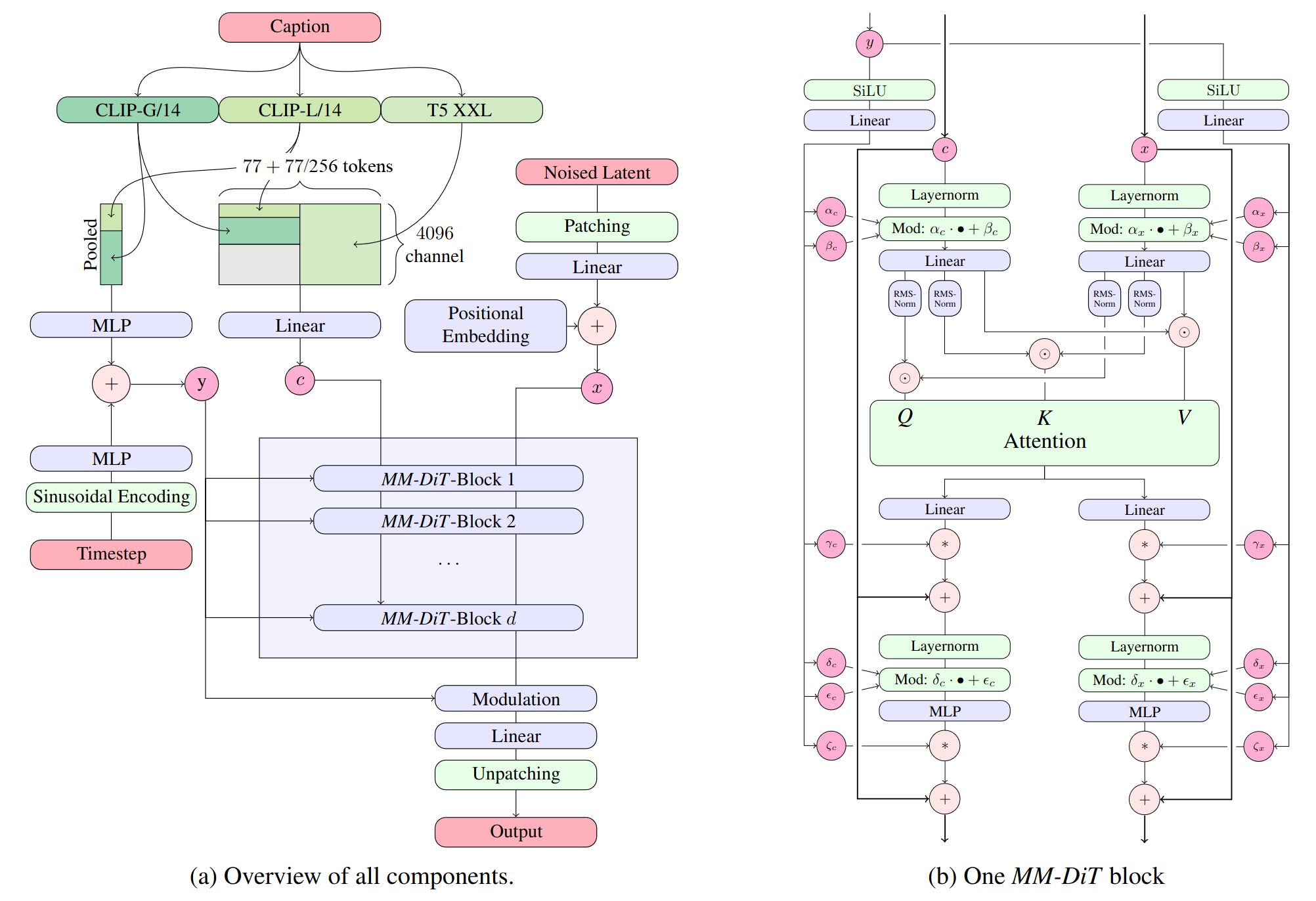

[Stable Diffusion 3.5 Large](https://stability.ai/news/introducing-stable-diffusion-3-5) is a Multimodal Diffusion Transformer (MMDiT) text-to-image model that features improved performance in image quality, typography, complex prompt understanding, and resource-efficiency.

|

| 49 |

+

|

| 50 |

+

Please note: This model is released under the [Stability Community License](https://stability.ai/community-license-agreement). Visit [Stability AI](https://stability.ai/license) to learn more, or [contact us](https://stability.ai/enterprise) for commercial licensing details.

|

| 51 |

+

|

| 52 |

+

|

| 53 |

+

### Model Description

|

| 54 |

+

|

| 55 |

+

- **Developed by:** Stability AI

|

| 56 |

+

- **Model type:** MMDiT text-to-image generative model

|

| 57 |

+

- **Model Description:** This model generates images based on text prompts. It is a [Multimodal Diffusion Transformer](https://arxiv.org/abs/2403.03206) that uses three fixed, pretrained text encoders and QK-normalization to improve training stability.

|

| 58 |

+

|

| 59 |

+

### License

|

| 60 |

+

|

| 61 |

+

- **Community License:** Free for research, non-commercial, and commercial use for organizations or individuals with less than $1M in total annual revenue. More details can be found in the [Community License Agreement](https://stability.ai/community-license-agreement). Read more at https://stability.ai/license.

|

| 62 |

+

- **For individuals and organizations with annual revenue above $1M**: please [contact us](https://stability.ai/enterprise) to get an Enterprise License.

|

| 63 |

+

|

| 64 |

+

### Model Sources

|

| 65 |

+

|

| 66 |

+

For local or self-hosted use, we recommend [ComfyUI](https://github.com/comfyanonymous/ComfyUI) for node-based UI inference, or [diffusers](https://github.com/huggingface/diffusers) or [GitHub](https://github.com/Stability-AI/sd3.5) for programmatic use.

|

| 67 |

+

|

| 68 |

+

- **ComfyUI:** [GitHub](https://github.com/comfyanonymous/ComfyUI), [Example Workflow](https://comfyanonymous.github.io/ComfyUI_examples/sd3/)

|

| 69 |

+

- **Hugging Face Space:** [Space](https://huggingface.co/spaces/stabilityai/stable-diffusion-3.5-large)

|

| 70 |

+

- **Diffusers**: [See below](#using-with-diffusers).

|

| 71 |

+

- **GitHub**: [GitHub](https://github.com/Stability-AI/sd3.5).

|

| 72 |

+

|

| 73 |

+

- **API Endpoints:**

|

| 74 |

+

- [Stability AI API](https://platform.stability.ai/docs/api-reference#tag/Generate/paths/~1v2beta~1stable-image~1generate~1sd3/post)

|

| 75 |

+

- [Replicate](https://replicate.com/stability-ai/stable-diffusion-3.5-large)

|

| 76 |

+

- [Deepinfra](https://deepinfra.com/stabilityai/sd3.5)

|

| 77 |

+

|

| 78 |

+

|

| 79 |

+

### Implementation Details

|

| 80 |

+

|

| 81 |

+

- **QK Normalization:** Implements the QK normalization technique to improve training stability.

|

| 82 |

+

|

| 83 |

+

- **Text Encoders:**

|

| 84 |

+

- CLIPs: [OpenCLIP-ViT/G](https://github.com/mlfoundations/open_clip), [CLIP-ViT/L](https://github.com/openai/CLIP/tree/main), context length 77 tokens

|

| 85 |

+

- T5: [T5-xxl](https://huggingface.co/google/t5-v1_1-xxl), context length 77/256 tokens at different stages of training

|

| 86 |

+

|

| 87 |

+

- **Training Data and Strategy:**

|

| 88 |

+

|

| 89 |

+

This model was trained on a wide variety of data, including synthetic data and filtered publicly available data.

|

| 90 |

+

|

| 91 |

+

For more technical details of the original MMDiT architecture, please refer to the [Research paper](https://stability.ai/news/stable-diffusion-3-research-paper).

|

| 92 |

+

|

| 93 |

+

|

| 94 |

+

### Model Performance

|

| 95 |

+

|

| 96 |

+

See the [blog post](https://stability.ai/news/introducing-stable-diffusion-3-5) for our comparative study of prompt adherence and aesthetic quality.

|

| 97 |

+

|

| 98 |

+

|

| 99 |

+

## File Structure

|

| 100 |

+

|

| 101 |

+

Click here to access the [Files and versions tab](https://huggingface.co/stabilityai/stable-diffusion-3.5-large/tree/main)

|

| 102 |

+

|

| 103 |

+

```
│

|

| 104 |

+

├── text_encoders/

|

| 105 |

+

│ ├── README.md

|

| 106 |

+

│ ├── clip_g.safetensors

|

| 107 |

+

│ ├── clip_l.safetensors

|

| 108 |

+

│ ├── t5xxl_fp16.safetensors

|

| 109 |

+

│ └── t5xxl_fp8_e4m3fn.safetensors

|

| 110 |

+

│

|

| 111 |

+

├── README.md

|

| 112 |

+

├── LICENSE.md

|

| 113 |

+

├── sd3.5_large.safetensors

|

| 114 |

+

├── SD3.5L_example_workflow.json

|

| 115 |

+

└── sd3.5_large_demo.png

|

| 116 |

+

|

| 117 |

+

** File structure below is for diffusers integration**

|

| 118 |

+

├── scheduler/

|

| 119 |

+

├── text_encoder/

|

| 120 |

+

├── text_encoder_2/

|

| 121 |

+

├── text_encoder_3/

|

| 122 |

+

├── tokenizer/

|

| 123 |

+

├── tokenizer_2/

|

| 124 |

+

├── tokenizer_3/

|

| 125 |

+

├── transformer/

|

| 126 |

+

├── vae/

|

| 127 |

+

└── model_index.json

|

| 128 |

+

```
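
Before loading a local copy with diffusers, it can help to confirm that the folders from the tree above are actually present. The following is a minimal sketch, not part of any library: `missing_entries` is a hypothetical helper, and the expected names are copied from the diffusers part of the tree. The demo builds a throwaway directory so it runs without downloading anything.

```python
import tempfile
from pathlib import Path

# Folder and file names copied from the diffusers part of the tree above.
EXPECTED_DIRS = [
    "scheduler",
    "text_encoder",
    "text_encoder_2",
    "text_encoder_3",
    "tokenizer",
    "tokenizer_2",
    "tokenizer_3",
    "transformer",
    "vae",
]
EXPECTED_FILES = ["model_index.json"]


def missing_entries(root):
    """Return the expected folders and files that are absent under `root`."""
    root = Path(root)
    missing = [f"{name}/" for name in EXPECTED_DIRS if not (root / name).is_dir()]
    missing += [name for name in EXPECTED_FILES if not (root / name).is_file()]
    return missing


# Demo on a temporary directory that mimics the layout.
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    for name in EXPECTED_DIRS:
        (root / name).mkdir()
    (root / "model_index.json").write_text("{}")
    complete_report = missing_entries(root)

    # Remove one folder to show what an incomplete download looks like.
    (root / "vae").rmdir()
    partial_report = missing_entries(root)

print(complete_report)  # []
print(partial_report)   # ['vae/']
```

The check only looks at names; it does not verify that the weight files inside each folder are complete.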

|

| 129 |

+

|

| 130 |

+

## Using with Diffusers

|

| 131 |

+

Upgrade to the latest version of the [🧨 diffusers library](https://github.com/huggingface/diffusers)

|

| 132 |

+

```

|

| 133 |

+

pip install -U diffusers

|

| 134 |

+

```

|

| 135 |

+

|

| 136 |

+

and then you can run

|

| 137 |

+

```py

|

| 138 |

+

import torch

|

| 139 |

+

from diffusers import StableDiffusion3Pipeline

|

| 140 |

+

|

| 141 |

+

pipe = StableDiffusion3Pipeline.from_pretrained("stabilityai/stable-diffusion-3.5-large", torch_dtype=torch.bfloat16)

|

| 142 |

+

pipe = pipe.to("cuda")

|

| 143 |

+

|

| 144 |

+

image = pipe(

|

| 145 |

+

"A capybara holding a sign that reads Hello World",

|

| 146 |

+

num_inference_steps=28,

|

| 147 |

+

guidance_scale=3.5,

|

| 148 |

+

).images[0]

|

| 149 |

+

image.save("capybara.png")

|

| 150 |

+

```

|

| 151 |

+

|

| 152 |

+

### Quantizing the model with diffusers

|

| 153 |

+

|

| 154 |

+

Reduce your VRAM usage so that the model fits on GPUs with less VRAM.

|

| 155 |

+

|

| 156 |

+

```

|

| 157 |

+

pip install bitsandbytes

|

| 158 |

+

```

|

| 159 |

+

|

| 160 |

+

```py

|

| 161 |

+

from diffusers import BitsAndBytesConfig, SD3Transformer2DModel

|

| 162 |

+

from diffusers import StableDiffusion3Pipeline

|

| 163 |

+

import torch

|

| 164 |

+

|

| 165 |

+

model_id = "stabilityai/stable-diffusion-3.5-large"

|

| 166 |

+

|

| 167 |

+

nf4_config = BitsAndBytesConfig(

|

| 168 |

+

load_in_4bit=True,

|

| 169 |

+

bnb_4bit_quant_type="nf4",

|

| 170 |

+

bnb_4bit_compute_dtype=torch.bfloat16

|

| 171 |

+

)

|

| 172 |

+

model_nf4 = SD3Transformer2DModel.from_pretrained(

|

| 173 |

+

model_id,

|

| 174 |

+

subfolder="transformer",

|

| 175 |

+

quantization_config=nf4_config,

|

| 176 |

+

torch_dtype=torch.bfloat16

|

| 177 |

+

)

|

| 178 |

+

|

| 179 |

+

pipeline = StableDiffusion3Pipeline.from_pretrained(

|

| 180 |

+

model_id,

|

| 181 |

+

transformer=model_nf4,

|

| 182 |

+

torch_dtype=torch.bfloat16

|

| 183 |

+

)

|

| 184 |

+

pipeline.enable_model_cpu_offload()

|

| 185 |

+

|

| 186 |

+

prompt = "A whimsical and creative image depicting a hybrid creature that is a mix of a waffle and a hippopotamus, basking in a river of melted butter amidst a breakfast-themed landscape. It features the distinctive, bulky body shape of a hippo. However, instead of the usual grey skin, the creature's body resembles a golden-brown, crispy waffle fresh off the griddle. The skin is textured with the familiar grid pattern of a waffle, each square filled with a glistening sheen of syrup. The environment combines the natural habitat of a hippo with elements of a breakfast table setting, a river of warm, melted butter, with oversized utensils or plates peeking out from the lush, pancake-like foliage in the background, a towering pepper mill standing in for a tree. As the sun rises in this fantastical world, it casts a warm, buttery glow over the scene. The creature, content in its butter river, lets out a yawn. Nearby, a flock of birds take flight"

|

| 187 |

+

|

| 188 |

+

image = pipeline(

|

| 189 |

+

prompt=prompt,

|

| 190 |

+

num_inference_steps=28,

|

| 191 |

+

guidance_scale=4.5,

|

| 192 |

+

max_sequence_length=512,

|

| 193 |

+

).images[0]

|

| 194 |

+

image.save("whimsical.png")

|

| 195 |

+

```
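
To get a feel for why 4-bit loading helps, the sketch below estimates the storage needed for the transformer weights alone at two precisions. It is back-of-the-envelope arithmetic under stated assumptions: the parameter count is an illustrative assumption that does not appear in this README, and the estimate ignores the text encoders, the VAE, activations, and the extra constants that bitsandbytes stores alongside quantized weights. `weight_memory_gib` is a hypothetical helper.

```python
def weight_memory_gib(num_params, bits_per_param):
    """Approximate storage for the weights alone, in GiB."""
    return num_params * bits_per_param / 8 / 2**30


# ASSUMPTION: illustrative parameter count for the transformer; substitute
# the real figure for the checkpoint you are loading.
ASSUMED_TRANSFORMER_PARAMS = 8_100_000_000

for label, bits in [("bfloat16", 16), ("nf4", 4)]:
    gib = weight_memory_gib(ASSUMED_TRANSFORMER_PARAMS, bits)
    print(f"{label:>8}: ~{gib:.1f} GiB")
```

Whatever the exact count, going from 16-bit to 4-bit weights cuts this part of the footprint to roughly a quarter, which is what `load_in_4bit=True` is meant to achieve. Actual peak VRAM also depends on offloading and on the other pipeline components.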

|

| 196 |

+

|

| 197 |

+

### Fine-tuning

|

| 198 |

+

|

| 199 |

+

Please see the fine-tuning guide [here](https://stabilityai.notion.site/Stable-Diffusion-3-5-Large-Fine-tuning-Tutorial-11a61cdcd1968027a15bdbd7c40be8c6).

|

| 200 |

+

|

| 201 |

+

|

| 202 |

+

## Uses

|

| 203 |

+

|

| 204 |

+

### Intended Uses

|

| 205 |

+

|

| 206 |

+

Intended uses include the following:

|

| 207 |

+

* Generation of artworks and use in design and other artistic processes.

|

| 208 |

+

* Applications in educational or creative tools.

|

| 209 |

+

* Research on generative models, including understanding the limitations of generative models.

|

| 210 |

+

|

| 211 |

+

All uses of the model must be in accordance with our [Acceptable Use Policy](https://stability.ai/use-policy).

|

| 212 |

+

|

| 213 |

+

### Out-of-Scope Uses

|

| 214 |

+

|

| 215 |

+

The model was not trained to produce factual or true representations of people or events. As such, using the model to generate such content is out of scope for this model.

|

| 216 |

+

|

| 217 |

+

## Safety

|

| 218 |

+

|

| 219 |

+

As part of our safety-by-design and responsible AI deployment approach, we take deliberate measures to ensure Integrity starts at the early stages of development. We implement safety measures throughout the development of our models. We have implemented safety mitigations that are intended to reduce the risk of certain harms; however, we recommend that developers conduct their own testing and apply additional mitigations based on their specific use cases.

|

| 220 |

+

For more about our approach to Safety, please visit our [Safety page](https://stability.ai/safety).

|

| 221 |

+

|

| 222 |

+

### Integrity Evaluation

|

| 223 |

+

|

| 224 |

+

Our integrity evaluation methods include structured evaluations and red-teaming testing for certain harms. Testing was conducted primarily in English and may not cover all possible harms.

|

| 225 |

+

|

| 226 |

+

### Risks identified and mitigations:

|

| 227 |

+

|

| 228 |

+

* Harmful content: We have used filtered data sets when training our models and implemented safeguards that attempt to strike the right balance between usefulness and preventing harm. However, this does not guarantee that all possible harmful content has been removed. All developers and deployers should exercise caution and implement content safety guardrails based on their specific product policies and application use cases.

|

| 229 |

+

* Misuse: Technical limitations and developer and end-user education can help mitigate malicious applications of models. All users are required to adhere to our [Acceptable Use Policy](https://stability.ai/use-policy), including when applying fine-tuning and prompt engineering mechanisms. Please reference the Stability AI Acceptable Use Policy for information on violative uses of our products.

|

| 230 |

+

* Privacy violations: Developers and deployers are encouraged to adhere to privacy regulations with techniques that respect data privacy.

|

| 231 |

+

|

| 232 |

+

### Contact

|

| 233 |

+

|

| 234 |

+

Please report any issues with the model or contact us:

|

| 235 |

+

|

| 236 |

+

* Safety issues: safety@stability.ai

|

| 237 |

+

* Security issues: security@stability.ai

|

| 238 |

+

* Privacy issues: privacy@stability.ai

|

| 239 |

+

* License and general: https://stability.ai/license

|

| 240 |

+

* Enterprise license: https://stability.ai/enterprise

|

| 241 |

+

|

| 242 |

+

|

SD3.5L_example_workflow.json

ADDED

|

@@ -0,0 +1,688 @@

|

| 1 |

+

{

|

| 2 |

+

"last_node_id": 300,

|

| 3 |

+

"last_link_id": 605,

|

| 4 |

+

"nodes": [

|

| 5 |

+

{

|

| 6 |

+

"id": 70,

|

| 7 |

+

"type": "ConditioningSetTimestepRange",

|

| 8 |

+

"pos": [

|

| 9 |

+

126,

|

| 10 |

+

252

|

| 11 |

+

],

|

| 12 |

+

"size": {

|

| 13 |

+

"0": 317.4000244140625,

|

| 14 |

+

"1": 82

|

| 15 |

+

},

|

| 16 |

+

"flags": {},

|

| 17 |

+

"order": 7,

|

| 18 |

+

"mode": 0,

|

| 19 |

+

"inputs": [

|

| 20 |

+

{

|

| 21 |

+

"name": "conditioning",

|

| 22 |

+

"type": "CONDITIONING",

|

| 23 |

+

"link": 93,

|

| 24 |

+

"slot_index": 0

|

| 25 |

+

}

|

| 26 |

+

],

|

| 27 |

+

"outputs": [

|

| 28 |

+

{

|

| 29 |

+

"name": "CONDITIONING",

|

| 30 |

+

"type": "CONDITIONING",

|

| 31 |

+

"links": [

|

| 32 |

+

92

|

| 33 |

+

],

|

| 34 |

+

"shape": 3,

|

| 35 |

+

"slot_index": 0

|

| 36 |

+

}

|

| 37 |

+

],

|

| 38 |

+

"properties": {

|

| 39 |

+

"Node name for S&R": "ConditioningSetTimestepRange"

|

| 40 |

+

},

|

| 41 |

+

"widgets_values": [

|

| 42 |

+

0,

|

| 43 |

+

0.1

|

| 44 |

+

]

|

| 45 |

+

},

|

| 46 |

+

{

|

| 47 |

+

"id": 68,

|

| 48 |

+

"type": "ConditioningSetTimestepRange",

|

| 49 |

+

"pos": [

|

| 50 |

+

126,

|

| 51 |

+

126

|

| 52 |

+

],

|

| 53 |

+

"size": {

|

| 54 |

+

"0": 317.4000244140625,

|

| 55 |

+

"1": 82

|

| 56 |

+

},

|

| 57 |

+

"flags": {},

|

| 58 |

+

"order": 9,

|

| 59 |

+

"mode": 0,

|

| 60 |

+

"inputs": [

|

| 61 |

+

{

|

| 62 |

+

"name": "conditioning",

|

| 63 |

+

"type": "CONDITIONING",

|

| 64 |

+

"link": 90

|

| 65 |

+

}

|

| 66 |

+

],

|

| 67 |

+

"outputs": [

|

| 68 |

+

{

|

| 69 |

+

"name": "CONDITIONING",

|

| 70 |

+

"type": "CONDITIONING",

|

| 71 |

+

"links": [

|

| 72 |

+

91

|

| 73 |

+

],

|

| 74 |

+

"shape": 3,

|

| 75 |

+

"slot_index": 0

|

| 76 |

+

}

|

| 77 |

+

],

|

| 78 |

+

"properties": {

|

| 79 |

+

"Node name for S&R": "ConditioningSetTimestepRange"

|

| 80 |

+

},

|

| 81 |

+

"widgets_values": [

|

| 82 |

+

0.1,

|

| 83 |

+

1

|

| 84 |

+

]

|

| 85 |

+

},

|

| 86 |

+

{

|

| 87 |

+

"id": 67,

|

| 88 |

+

"type": "ConditioningZeroOut",

|

| 89 |

+

"pos": [

|

| 90 |

+

-126,

|

| 91 |

+

126

|

| 92 |

+

],

|

| 93 |

+

"size": {

|

| 94 |

+

"0": 211.60000610351562,

|

| 95 |

+

"1": 26

|

| 96 |

+

},

|

| 97 |

+

"flags": {},

|

| 98 |

+

"order": 8,

|

| 99 |

+

"mode": 0,

|

| 100 |

+

"inputs": [

|

| 101 |

+

{

|

| 102 |

+

"name": "conditioning",

|

| 103 |

+

"type": "CONDITIONING",

|

| 104 |

+

"link": 597

|

| 105 |

+

}

|

| 106 |

+

],

|

| 107 |

+

"outputs": [

|

| 108 |

+

{

|

| 109 |

+

"name": "CONDITIONING",

|

| 110 |

+

"type": "CONDITIONING",

|

| 111 |

+

"links": [

|

| 112 |

+

90

|

| 113 |

+

],

|

| 114 |

+

"shape": 3,

|

| 115 |

+

"slot_index": 0

|

| 116 |

+

}

|

| 117 |

+

],

|

| 118 |

+

"properties": {

|

| 119 |

+

"Node name for S&R": "ConditioningZeroOut"

|

| 120 |

+

}

|

| 121 |

+

},

|

| 122 |

+

{

|

| 123 |

+

"id": 71,

|

| 124 |

+

"type": "CLIPTextEncode",

|

| 125 |

+

"pos": [

|

| 126 |

+

-1010,

|

| 127 |

+

252

|

| 128 |

+

],

|

| 129 |

+

"size": [

|

| 130 |

+

351.8130934034689,

|

| 131 |

+

195.5754530459866

|

| 132 |

+

],

|

| 133 |

+

"flags": {},

|

| 134 |

+

"order": 6,

|

| 135 |

+

"mode": 0,

|

| 136 |

+

"inputs": [

|

| 137 |

+

{

|

| 138 |

+

"name": "clip",

|

| 139 |

+

"type": "CLIP",

|

| 140 |

+

"link": 94

|

| 141 |

+

}

|

| 142 |

+

],

|

| 143 |

+

"outputs": [

|

| 144 |

+

{

|

| 145 |

+

"name": "CONDITIONING",

|

| 146 |

+

"type": "CONDITIONING",

|

| 147 |

+

"links": [

|

| 148 |

+

93,

|

| 149 |

+

597

|

| 150 |

+

],

|

| 151 |

+

"shape": 3,

|

| 152 |

+

"slot_index": 0

|

| 153 |

+

}

|

| 154 |

+

],

|

| 155 |

+

"properties": {

|

| 156 |

+

"Node name for S&R": "CLIPTextEncode"

|

| 157 |

+

},

|

| 158 |

+

"widgets_values": [

|

| 159 |

+

""

|

| 160 |

+

],

|

| 161 |

+

"color": "#322",

|

| 162 |

+

"bgcolor": "#533"

|

| 163 |

+

},

|

| 164 |

+

{

|

| 165 |

+

"id": 6,

|

| 166 |

+

"type": "CLIPTextEncode",

|

| 167 |

+

"pos": [

|

| 168 |

+

-1008,

|

| 169 |

+

2

|

| 170 |

+

],

|

| 171 |

+

"size": [

|

| 172 |

+

342.83352734520565,

|

| 173 |

+

177.20867231021555

|

| 174 |

+

],

|

| 175 |

+

"flags": {},

|

| 176 |

+

"order": 5,

|

| 177 |

+

"mode": 0,

|

| 178 |

+

"inputs": [

|

| 179 |

+

{

|

| 180 |

+

"name": "clip",

|

| 181 |

+

"type": "CLIP",

|

| 182 |

+

"link": 5

|

| 183 |

+

}

|

| 184 |

+

],

|

| 185 |

+

"outputs": [

|

| 186 |

+

{

|

| 187 |

+

"name": "CONDITIONING",

|

| 188 |

+

"type": "CONDITIONING",

|

| 189 |

+

"links": [

|

| 190 |

+

569

|

| 191 |

+

],

|

| 192 |

+

"shape": 3,

|

| 193 |

+

"slot_index": 0

|

| 194 |

+

}

|

| 195 |

+

],

|

| 196 |

+

"properties": {

|

| 197 |

+

"Node name for S&R": "CLIPTextEncode"

|

| 198 |

+

},

|

| 199 |

+

"widgets_values": [

|

| 200 |

+

"beautiful scenery nature glass bottle landscape, purple galaxy bottle,"

|

| 201 |

+

],

|

| 202 |

+

"color": "#232",

|

| 203 |

+

"bgcolor": "#353"

|

| 204 |

+

},

|

| 205 |

+

{

|

| 206 |

+

"id": 294,

|

| 207 |

+

"type": "KSampler",

|

| 208 |

+

"pos": [

|

| 209 |

+

882,

|

| 210 |

+

-504

|

| 211 |

+

],

|

| 212 |

+

"size": [

|

| 213 |

+

378,

|

| 214 |

+

504

|

| 215 |

+

],

|

| 216 |

+

"flags": {},

|

| 217 |

+

"order": 11,

|

| 218 |

+

"mode": 0,

|

| 219 |

+

"inputs": [

|

| 220 |

+

{

|

| 221 |

+

"name": "model",

|

| 222 |

+

"type": "MODEL",

|

| 223 |

+

"link": 568

|

| 224 |

+

},

|

| 225 |

+

{

|

| 226 |

+

"name": "positive",

|

| 227 |

+

"type": "CONDITIONING",

|

| 228 |

+

"link": 569

|

| 229 |

+

},

|

| 230 |

+

{

|

| 231 |

+

"name": "negative",

|

| 232 |

+

"type": "CONDITIONING",

|

| 233 |

+

"link": 604

|

| 234 |

+

},

|

| 235 |

+

{

|

| 236 |

+

"name": "latent_image",

|

| 237 |

+

"type": "LATENT",

|

| 238 |

+

"link": 598

|

| 239 |

+

}

|

| 240 |

+

],

|

| 241 |

+

"outputs": [

|

| 242 |

+

{

|

| 243 |

+

"name": "LATENT",

|

| 244 |

+

"type": "LATENT",

|

| 245 |

+

"links": [

|

| 246 |

+

572

|

| 247 |

+

],

|

| 248 |

+

"shape": 3,

|

| 249 |

+

"slot_index": 0

|

| 250 |

+

}

|

| 251 |

+

],

|

| 252 |

+

"properties": {

|

| 253 |

+

"Node name for S&R": "KSampler"

|

| 254 |

+

},

|

| 255 |

+

"widgets_values": [

|

| 256 |

+

66155038679131,

|

| 257 |

+

"randomize",

|

| 258 |

+

40,

|

| 259 |

+

4.5,

|

| 260 |

+

"dpmpp_2m",

|

| 261 |

+

"sgm_uniform",

|

| 262 |

+

1

|

| 263 |

+

]

|

| 264 |

+

},

|

| 265 |

+

{

|

| 266 |

+

"id": 13,

|

| 267 |

+

"type": "ModelSamplingSD3",

|

| 268 |

+

"pos": [

|

| 269 |

+

126,

|

| 270 |

+

-504

|

| 271 |

+

],

|

| 272 |

+

"size": {

|

| 273 |

+

"0": 315,

|

| 274 |

+

"1": 58

|

| 275 |

+

},

|

| 276 |

+

"flags": {

|

| 277 |

+

"collapsed": false

|

| 278 |

+

},

|

| 279 |

+

"order": 4,

|

| 280 |

+

"mode": 0,

|

| 281 |

+

"inputs": [

|

| 282 |

+

{

|

| 283 |

+

"name": "model",

|

| 284 |

+

"type": "MODEL",

|

| 285 |

+

"link": 445

|

| 286 |

+

}

|

| 287 |

+

],

|

| 288 |

+

"outputs": [

|

| 289 |

+

{

|

| 290 |

+

"name": "MODEL",

|

| 291 |

+

"type": "MODEL",

|

| 292 |

+

"links": [

|

| 293 |

+

568

|

| 294 |

+

],

|

| 295 |

+

"shape": 3,

|

| 296 |

+

"slot_index": 0

|

| 297 |

+

}

|

| 298 |

+

],

|

| 299 |

+

"properties": {

|

| 300 |

+

"Node name for S&R": "ModelSamplingSD3"

|

| 301 |

+

},

|

| 302 |

+

"widgets_values": [

|

| 303 |

+

3

|

| 304 |

+

]

|

| 305 |

+

},

|

| 306 |

+

{

|

| 307 |

+

"id": 4,

|

| 308 |

+

"type": "CheckpointLoaderSimple",

|

| 309 |

+

"pos": [

|

| 310 |

+

-2016,

|

| 311 |

+

-504

|

| 312 |

+

],

|

| 313 |

+

"size": {

|

| 314 |

+

"0": 632.6060180664062,

|

| 315 |

+

"1": 98

|

| 316 |

+

},

|

| 317 |

+

"flags": {},

|

| 318 |

+

"order": 0,

|

| 319 |

+

"mode": 0,

|

| 320 |

+

"outputs": [

|

| 321 |

+

{

|

| 322 |

+

"name": "MODEL",

|

| 323 |

+

"type": "MODEL",

|

| 324 |

+

"links": [

|

| 325 |

+

445

|

| 326 |

+

],

|

| 327 |

+

"shape": 3,

|

| 328 |

+

"slot_index": 0

|

| 329 |

+

},

|

| 330 |

+

{

|

| 331 |

+

"name": "CLIP",

|

| 332 |

+

"type": "CLIP",

|

| 333 |

+

"links": null,

|

| 334 |

+

"shape": 3

|

| 335 |

+

},

|

| 336 |

+

{

|

| 337 |

+

"name": "VAE",

|

| 338 |

+

"type": "VAE",

|

| 339 |

+

"links": [

|

| 340 |

+

605

|

| 341 |

+

],

|

| 342 |

+

"shape": 3,

|

| 343 |

+

"slot_index": 2

|

| 344 |

+

}

|

| 345 |

+

],

|

| 346 |

+

"properties": {

|

| 347 |

+

"Node name for S&R": "CheckpointLoaderSimple"

|

| 348 |

+

},

|

| 349 |

+

"widgets_values": [

|

| 350 |

+

"sd3.5_large.safetensors"

|

| 351 |

+

]

|

| 352 |

+

},

|

| 353 |

+

{

|

| 354 |

+

"id": 69,

|

| 355 |

+

"type": "ConditioningCombine",

|

| 356 |

+

"pos": [

|

| 357 |

+

504,

|

| 358 |

+

126

|

| 359 |

+

],

|

| 360 |

+

"size": {

|

| 361 |

+

"0": 228.39999389648438,

|

| 362 |

+

"1": 46

|

| 363 |

+

},

|

| 364 |

+

"flags": {},

|

| 365 |

+

"order": 10,

|

| 366 |

+

"mode": 0,

|

| 367 |

+

"inputs": [

|

| 368 |

+

{

|

| 369 |

+

"name": "conditioning_1",

|