Model description | Example output | Banchmark results | How to use | Training and finetuning

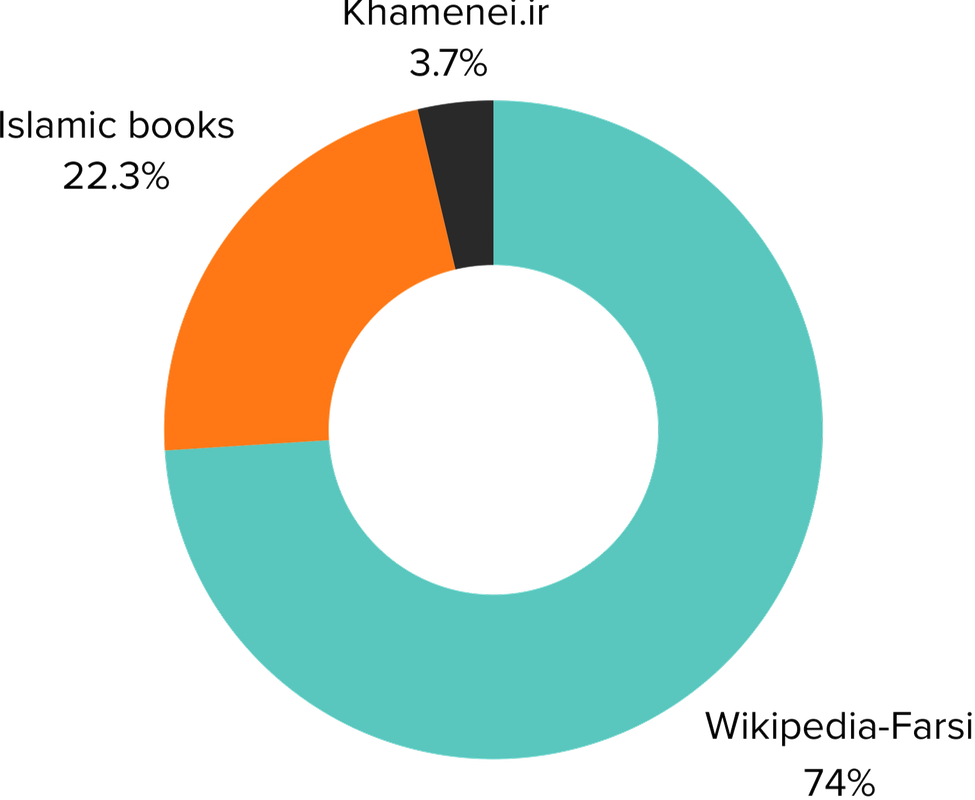

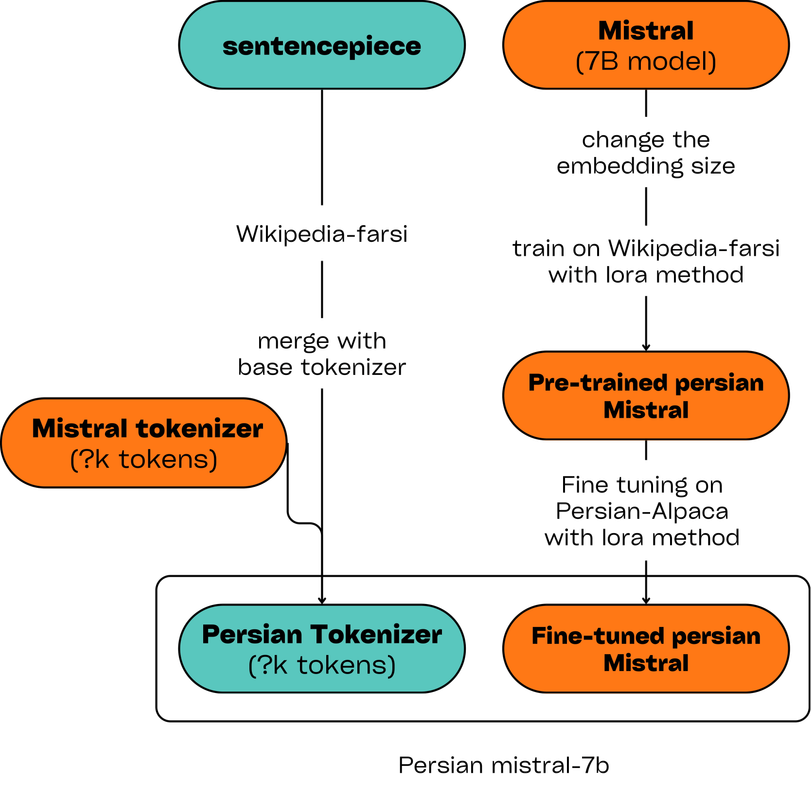

---- # Model description >Persian-mistral is the fintuned version of mistral-7b that design for persian QA and nlp tasks ---- # Example output: **Example 1:** - Input: "سلام، خوبی؟" - Output: "سلام، خوشحالم که با شما صحبت می کنم. چطور می توانم به شما کمک کنم؟" **Example 2:** - Input: "سلام، خوبی؟" - Output: "سلام، خوشحالم که با شما صحبت می کنم. چطور می توانم به شما کمک کنم؟" ---- # Banchmark results | model | dataset | score | |---------------|-------------------|--------| | base-model-7b | ARC-easy |41.92% | | base-model-7b | ARC-easy |39.12% | | fa-model-7b | ARC-easy |37.89% | | base-model-7b | ARC-challenge |37.12% | | fa-model-7b | ARC-challenge |39.29% | ---- # How to use ```python from transformers import AutoTokenizer, AutoModelForCausalLM tokenizer = AutoTokenizer.from_pretrained("aidal/Persian-Mistral-7B") model = AutoModelForCausalLM.from_pretrained("aidal/Persian-Mistral-7B") input_text = "پایتخت ایران کجاست؟" input_ids = tokenizer(input_text, return_tensors="pt") outputs = model.generate(**input_ids) print(tokenizer.decode(outputs[0])) ``` ---- # Training and finetuning - **Extend tokenzer:** The base Mistral tokenizer does not support Persian. As an initial step, we trained a SentencePiece tokenizer on the Farsi Wikipedia corpus and subsequently integrated it with the Mistral tokenizer. - **Pre-training:** In the following step, we expanded the embedding layer of the base model to match the size of the Persian tokenizer. We then employed the LoRA method to train the model on three distinct datasets: Wikipedia-Farsi, an Islamic book collection, and content from Khamenei.ir.