bubbliiiing committed on

Commit · f2649d2

1 Parent(s): d6d9c1b

Update Readme

- README.md +412 -3
- README_en.md +384 -0

README.md

CHANGED

|

@@ -1,3 +1,412 @@

|

|

| 1 |

-

---

|

| 2 |

-

|

| 3 |

-


---
frameworks:
- Pytorch
license: other
tasks:
- text-to-video-synthesis

#model-type:
## e.g. gpt, phi, llama, chatglm, baichuan
#- gpt

#domain:
## e.g. nlp, cv, audio, multi-modal
#- nlp

#language:
## Language code list: https://help.aliyun.com/document_detail/215387.html?spm=a2c4g.11186623.0.0.9f8d7467kni6Aa
#- cn

#metrics:
## e.g. CIDEr, BLEU, ROUGE
#- CIDEr

#tags:
## Custom tags, including training methods such as pretrained, fine-tuned, instruction-tuned, RL-tuned, and others
#- pretrained

#tools:
## e.g. vllm, fastchat, llamacpp, AdaSeq
#- vllm
---

[arXiv](https://arxiv.org/abs/2405.18991)
[Project Page](https://easyanimate.github.io/)
[ModelScope Studio](https://modelscope.cn/studios/PAI/EasyAnimate/summary)
[Hugging Face Space](https://huggingface.co/spaces/alibaba-pai/EasyAnimate)
[Discord](https://discord.gg/UzkpB4Bn)

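# Introduction
EasyAnimate is a transformer-based pipeline for generating AI images and videos and for training baseline and LoRA models for Diffusion Transformers. It supports direct prediction from pretrained EasyAnimate models, producing videos at various resolutions, about 6 seconds long at 8 fps (EasyAnimateV5.1, 1 to 49 frames). Users can also train their own baseline and LoRA models for style transformation.

[English](./README_en.md) | [简体中文](./README.md)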

# Model zoo
EasyAnimateV5.1:

12B:
| Name | Type | Storage Space | Hugging Face | Model Scope | Description |
|--|--|--|--|--|--|
| EasyAnimateV5.1-12b-zh-InP | EasyAnimateV5.1 | 39 GB | [🤗Link](https://huggingface.co/alibaba-pai/EasyAnimateV5.1-12b-zh-InP) | [😄Link](https://modelscope.cn/models/PAI/EasyAnimateV5.1-12b-zh-InP) | Official image-to-video weights. Supports video prediction at multiple resolutions (512, 768, 1024), trained with 49 frames at 8 frames per second, and supports multilingual prediction. |
| EasyAnimateV5.1-12b-zh-Control | EasyAnimateV5.1 | 39 GB | [🤗Link](https://huggingface.co/alibaba-pai/EasyAnimateV5.1-12b-zh-Control) | [😄Link](https://modelscope.cn/models/PAI/EasyAnimateV5.1-12b-zh-Control) | Official video control weights, supporting control conditions such as Canny, Depth, Pose, and MLSD, as well as trajectory control. Supports video prediction at multiple resolutions (512, 768, 1024), trained with 49 frames at 8 frames per second, and supports multilingual prediction. |
| EasyAnimateV5.1-12b-zh-Control-Camera | EasyAnimateV5.1 | 39 GB | [🤗Link](https://huggingface.co/alibaba-pai/EasyAnimateV5.1-12b-zh-Control-Camera) | [😄Link](https://modelscope.cn/models/PAI/EasyAnimateV5.1-12b-zh-Control-Camera) | Official camera control weights, supporting control of the generation direction through input camera motion trajectories. Supports video prediction at multiple resolutions (512, 768, 1024), trained with 49 frames at 8 frames per second, and supports multilingual prediction. |
| EasyAnimateV5.1-12b-zh | EasyAnimateV5.1 | 39 GB | [🤗Link](https://huggingface.co/alibaba-pai/EasyAnimateV5.1-12b-zh) | [😄Link](https://modelscope.cn/models/PAI/EasyAnimateV5.1-12b-zh) | Official text-to-video weights. Supports video prediction at multiple resolutions (512, 768, 1024), trained with 49 frames at 8 frames per second, and supports multilingual prediction. |


# Video Result

### Image to Video with EasyAnimateV5.1-12b-zh-InP
<table border="0" style="width: 100%; text-align: left; margin-top: 20px;">
<tr>
<td>
<video src="https://github.com/user-attachments/assets/74a23109-f555-4026-a3d8-1ac27bb3884c" width="100%" controls autoplay loop></video>
</td>
<td>
<video src="https://github.com/user-attachments/assets/ab5aab27-fbd7-4f55-add9-29644125bde7" width="100%" controls autoplay loop></video>
</td>
<td>
<video src="https://github.com/user-attachments/assets/238043c2-cdbd-4288-9857-a273d96f021f" width="100%" controls autoplay loop></video>
</td>
<td>
<video src="https://github.com/user-attachments/assets/48881a0e-5513-4482-ae49-13a0ad7a2557" width="100%" controls autoplay loop></video>
</td>
</tr>
</table>


<table border="0" style="width: 100%; text-align: left; margin-top: 20px;">
<tr>
<td>
<video src="https://github.com/user-attachments/assets/3e7aba7f-6232-4f39-80a8-6cfae968f38c" width="100%" controls autoplay loop></video>
</td>
<td>
<video src="https://github.com/user-attachments/assets/986d9f77-8dc3-45fa-bc9d-8b26023fffbc" width="100%" controls autoplay loop></video>
</td>
<td>
<video src="https://github.com/user-attachments/assets/7f62795a-2b3b-4c14-aeb1-1230cb818067" width="100%" controls autoplay loop></video>
</td>
<td>
<video src="https://github.com/user-attachments/assets/b581df84-ade1-4605-a7a8-fd735ce3e222" width="100%" controls autoplay loop></video>
</td>
</tr>
</table>

<table border="0" style="width: 100%; text-align: left; margin-top: 20px;">
<tr>
<td>
<video src="https://github.com/user-attachments/assets/eab1db91-1082-4de2-bb0a-d97fd25ceea1" width="100%" controls autoplay loop></video>
</td>
<td>
<video src="https://github.com/user-attachments/assets/3fda0e96-c1a8-4186-9c4c-043e11420f05" width="100%" controls autoplay loop></video>
</td>
<td>
<video src="https://github.com/user-attachments/assets/4b53145d-7e98-493a-83c9-4ea4f5b58289" width="100%" controls autoplay loop></video>
</td>
<td>
<video src="https://github.com/user-attachments/assets/75f7935f-17a8-4e20-b24c-b61479cf07fc" width="100%" controls autoplay loop></video>
</td>
</tr>
</table>

### Text to Video with EasyAnimateV5.1-12b-zh
<table border="0" style="width: 100%; text-align: left; margin-top: 20px;">
<tr>
<td>
<video src="https://github.com/user-attachments/assets/8818dae8-e329-4b08-94fa-00d923f38fd2" width="100%" controls autoplay loop></video>
</td>
<td>
<video src="https://github.com/user-attachments/assets/d3e483c3-c710-47d2-9fac-89f732f2260a" width="100%" controls autoplay loop></video>
</td>
<td>
<video src="https://github.com/user-attachments/assets/4dfa2067-d5d4-4741-a52c-97483de1050d" width="100%" controls autoplay loop></video>
</td>
<td>
<video src="https://github.com/user-attachments/assets/fb44c2db-82c6-427e-9297-97dcce9a4948" width="100%" controls autoplay loop></video>
</td>
</tr>
</table>

<table border="0" style="width: 100%; text-align: left; margin-top: 20px;">
<tr>
<td>
<video src="https://github.com/user-attachments/assets/dc6b8eaf-f21b-4576-a139-0e10438f20e4" width="100%" controls autoplay loop></video>
</td>
<td>
<video src="https://github.com/user-attachments/assets/b3f8fd5b-c5c8-44ee-9b27-49105a08fbff" width="100%" controls autoplay loop></video>
</td>
<td>
<video src="https://github.com/user-attachments/assets/a68ed61b-eed3-41d2-b208-5f039bf2788e" width="100%" controls autoplay loop></video>
</td>
<td>
<video src="https://github.com/user-attachments/assets/4e33f512-0126-4412-9ae8-236ff08bcd21" width="100%" controls autoplay loop></video>
</td>
</tr>
</table>

### Control Video with EasyAnimateV5.1-12b-zh-Control

Trajectory Control
<table border="0" style="width: 100%; text-align: left; margin-top: 20px;">
<tr>
<td>
<video src="https://github.com/user-attachments/assets/bf3b8970-ca7b-447f-8301-72dfe028055b" width="100%" controls autoplay loop></video>
</td>
<td>
<video src="https://github.com/user-attachments/assets/63a7057b-573e-4f73-9d7b-8f8001245af4" width="100%" controls autoplay loop></video>
</td>
<td>
<video src="https://github.com/user-attachments/assets/090ac2f3-1a76-45cf-abe5-4e326113389b" width="100%" controls autoplay loop></video>
</td>
</tr>
</table>

Generic Control Video (Canny, Pose, Depth, etc.)
<table border="0" style="width: 100%; text-align: left; margin-top: 20px;">
<tr>
<td>
<video src="https://github.com/user-attachments/assets/53002ce2-dd18-4d4f-8135-b6f68364cabd" width="100%" controls autoplay loop></video>
</td>
<td>
<video src="https://github.com/user-attachments/assets/fce43c0b-81fa-4ab2-9ca7-78d786f520e6" width="100%" controls autoplay loop></video>
</td>
<td>
<video src="https://github.com/user-attachments/assets/b208b92c-5add-4ece-a200-3dbbe47b93c3" width="100%" controls autoplay loop></video>
</td>
</tr>
<tr>
<td>
<video src="https://github.com/user-attachments/assets/3aec95d5-d240-49fb-a9e9-914446c7a4cf" width="100%" controls autoplay loop></video>
</td>
<td>
<video src="https://github.com/user-attachments/assets/60fa063b-5c1f-485f-b663-09bd6669de3f" width="100%" controls autoplay loop></video>
</td>
<td>
<video src="https://github.com/user-attachments/assets/4adde728-8397-42f3-8a2a-23f7b39e9a1e" width="100%" controls autoplay loop></video>
</td>
</tr>
</table>

### Camera Control with EasyAnimateV5.1-12b-zh-Control-Camera

<table border="0" style="width: 100%; text-align: left; margin-top: 20px;">
<tr>
<td>
Pan Up
</td>
<td>
Pan Left
</td>
<td>
Pan Right
</td>
</tr>
<tr>
<td>
<video src="https://github.com/user-attachments/assets/a88f81da-e263-4038-a5b3-77b26f79719e" width="100%" controls autoplay loop></video>
</td>
<td>
<video src="https://github.com/user-attachments/assets/e346c59d-7bca-4253-97fb-8cbabc484afb" width="100%" controls autoplay loop></video>
</td>
<td>
<video src="https://github.com/user-attachments/assets/4de470d4-47b7-46e3-82d3-b714a2f6aef6" width="100%" controls autoplay loop></video>
</td>
</tr>
<tr>
<td>
Pan Down
</td>
<td>
Pan Up + Pan Left
</td>
<td>
Pan Up + Pan Right
</td>
</tr>
<tr>
<td>
<video src="https://github.com/user-attachments/assets/7a3fecc2-d41a-4de3-86cd-5e19aea34a0d" width="100%" controls autoplay loop></video>
</td>
<td>
<video src="https://github.com/user-attachments/assets/cb281259-28b6-448e-a76f-643c3465672e" width="100%" controls autoplay loop></video>
</td>
<td>
<video src="https://github.com/user-attachments/assets/44faf5b6-d83c-4646-9436-971b2b9c7216" width="100%" controls autoplay loop></video>
</td>
</tr>
</table>

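# How to use

#### a. Memory-saving options
Because EasyAnimateV5 and V5.1 have very large parameter counts, a memory-saving scheme is needed to fit consumer-grade GPUs. Every prediction file provides a GPU_memory_mode setting, which can be set to model_cpu_offload, model_cpu_offload_and_qfloat8, or sequential_cpu_offload.

- model_cpu_offload: the whole model is moved to the CPU after use, which saves some GPU memory.
- model_cpu_offload_and_qfloat8: the whole model is moved to the CPU after use and the transformer model is quantized to float8, which saves more GPU memory.
- sequential_cpu_offload: each layer of the model is moved to the CPU after use. This is slower but saves a large amount of GPU memory.

qfloat8 lowers model performance but saves more GPU memory. If you have enough GPU memory, model_cpu_offload is recommended.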
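
The three modes can be read as a small dispatch over offloading calls. The sketch below is illustrative only and is not taken from the EasyAnimate prediction files: it assumes a diffusers-style pipeline object that exposes `enable_model_cpu_offload()` and `enable_sequential_cpu_offload()`, and the `quantize_transformer` callback is a hypothetical stand-in for whatever float8 quantization routine the project uses.

```python
from typing import Any, Callable, Optional

VALID_MODES = (
    "model_cpu_offload",
    "model_cpu_offload_and_qfloat8",
    "sequential_cpu_offload",
)


def apply_memory_mode(
    pipeline: Any,
    mode: str,
    quantize_transformer: Optional[Callable[[Any], None]] = None,
) -> None:
    """Apply one of the three GPU_memory_mode values to a pipeline.

    `quantize_transformer` is a hypothetical callback that quantizes the
    transformer to float8 in place; it is only needed for the qfloat8 mode.
    """
    if mode not in VALID_MODES:
        raise ValueError(f"unknown GPU_memory_mode: {mode!r}")

    if mode == "sequential_cpu_offload":
        # Layer-by-layer offload: slowest, but the lowest GPU memory use.
        pipeline.enable_sequential_cpu_offload()
        return

    if mode == "model_cpu_offload_and_qfloat8":
        if quantize_transformer is None:
            raise ValueError("the qfloat8 mode needs a quantization callback")
        # Quantize first so the smaller transformer is what gets offloaded.
        quantize_transformer(pipeline.transformer)

    # Whole-model offload: each sub-model returns to the CPU after use.
    pipeline.enable_model_cpu_offload()
```

Keeping the mode a plain string mirrors how the README describes the setting, so the same value can be pasted into a prediction file without translation.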

|

| 245 |

+

|

| 246 |

+

#### b、通过comfyui

|

| 247 |

+

具体查看[ComfyUI README](https://github.com/aigc-apps/EasyAnimate/blob/main/comfyui/README.md)。

|

| 248 |

+

|

| 249 |

+

#### c、运行python文件

|

| 250 |

+

- 步骤1:下载对应[权重](#model-zoo)放入models文件夹。

|

| 251 |

+

- 步骤2:根据不同的权重与预测目标使用不同的文件进行预测。

|

| 252 |

+

- 文生视频:

|

| 253 |

+

- 使用predict_t2v.py文件中修改prompt、neg_prompt、guidance_scale和seed。

|

| 254 |

+

- 而后运行predict_t2v.py文件,等待生成结果,结果保存在samples/easyanimate-videos文件夹中。

|

| 255 |

+

- 图生视频:

|

| 256 |

+

- 使用predict_i2v.py文件中修改validation_image_start、validation_image_end、prompt、neg_prompt、guidance_scale和seed。

|

| 257 |

+

- validation_image_start是视频的开始图片,validation_image_end是视频的结尾图片。

|

| 258 |

+

- 而后运行predict_i2v.py文件,等待生成结果,结果保存在samples/easyanimate-videos_i2v文件夹中。

|

| 259 |

+

- 视频生视频:

|

| 260 |

+

- 使用predict_v2v.py文件中修改validation_video、validation_image_end、prompt、neg_prompt、guidance_scale和seed。

|

| 261 |

+

- validation_video是视频生视频的参考视频。您可以使用以下视频运行演示:[演示视频](https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/cogvideox_fun/asset/v1/play_guitar.mp4)

|

| 262 |

+

- 而后运行predict_v2v.py文件,等待生成结果,结果保存在samples/easyanimate-videos_v2v文件夹中。

|

| 263 |

+

- 普通控制生视频(Canny、Pose、Depth等):

|

| 264 |

+

- 使用predict_v2v_control.py文件中修改control_video、validation_image_end、prompt、neg_prompt、guidance_scale和seed。

|

| 265 |

+

- control_video是控制生视频的控制视频,是使用Canny、Pose、Depth等算子提取后的视频。您可以使用以下视频运行演示:[演示视频](https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/cogvideox_fun/asset/v1.1/pose.mp4)

|

| 266 |

+

- 而后运行predict_v2v_control.py文件,等待生成结果,结果保存在samples/easyanimate-videos_v2v_control文件夹中。

|

| 267 |

+

- 轨迹控制视频:

|

| 268 |

+

- 使用predict_v2v_control.py文件中修改control_video、ref_image、validation_image_end、prompt、neg_prompt、guidance_scale和seed。

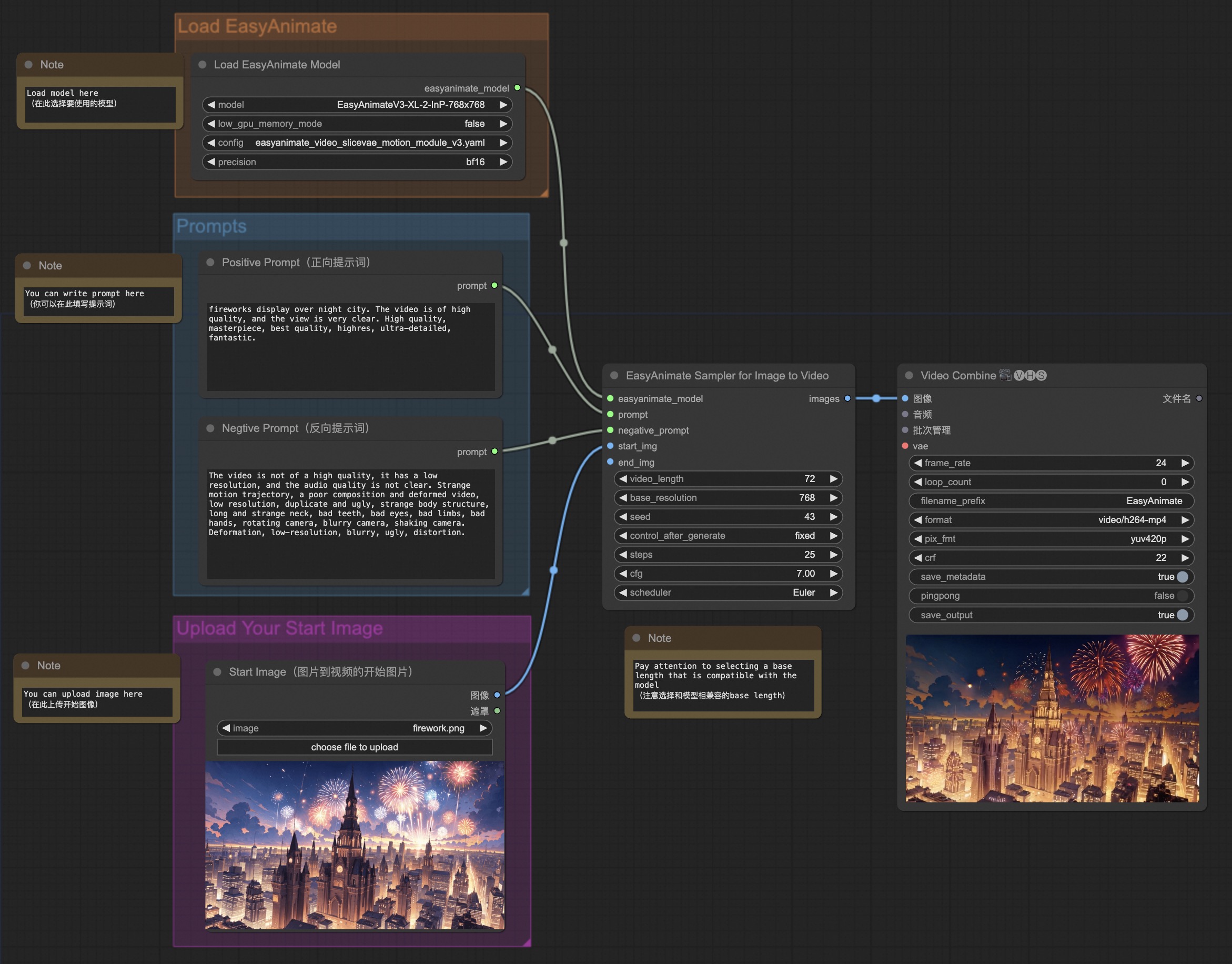

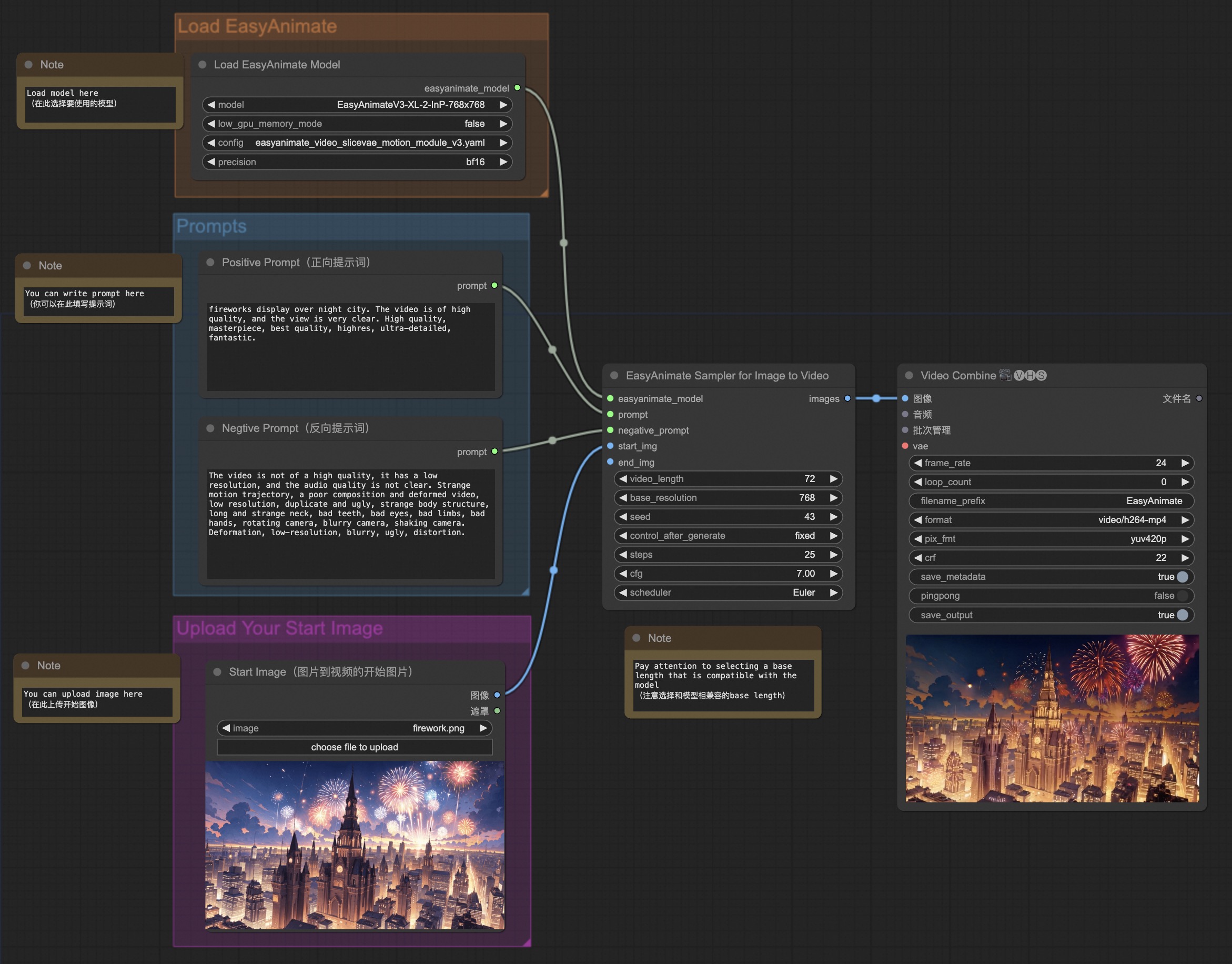

|

| 269 |

+

- control_video是轨迹控制视频的控制视频,ref_image是参考的首帧图片。您可以使用以下图片和控制视频运行演示:[演示图像](https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/easyanimate/asset/v5.1/dog.png),[演示视频](https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/easyanimate/asset/v5.1/trajectory_demo.mp4)

|

| 270 |

+

- 而后运行predict_v2v_control.py文件,等待生成结果,结果保存在samples/easyanimate-videos_v2v_control文件夹中。

|

| 271 |

+

- 推荐使用ComfyUI进行交互。

|

| 272 |

+

- 相机控制视频:

|

| 273 |

+

- 使用predict_v2v_control.py文件中修改control_video、ref_image、validation_image_end、prompt、neg_prompt、guidance_scale和seed。

|

| 274 |

+

- control_camera_txt是相机控制视频的控制文件,ref_image是参考的首帧图片。您可以使用以下图片和控制视频运行演示:[演示图像](https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/cogvideox_fun/asset/v1/firework.png),[演示文件(来自于CameraCtrl)](https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/easyanimate/asset/v5.1/0a3b5fb184936a83.txt)

|

| 275 |

+

- 而后运行predict_v2v_control.py文件,等待生成结果,结果保存在samples/easyanimate-videos_v2v_control文件夹中。

|

| 276 |

+

- 推荐使用ComfyUI进行交互。

|

| 277 |

+

- 步骤3:如果想结合自己训练的其他backbone与Lora,则看情况修改predict_t2v.py中的predict_t2v.py和lora_path。
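
The task-to-file mapping in Step 2 can be summarized as a lookup table. This is a minimal sketch for orientation: the file names, variable names, and output folders come from the list above, while the short task keys (`t2v`, `i2v`, `v2v`, `control`) and the `PredictTask` type are labels invented here.

```python
from dataclasses import dataclass
from typing import Tuple

# Variables that every prediction file asks you to edit.
COMMON_SETTINGS = ("prompt", "neg_prompt", "guidance_scale", "seed")


@dataclass(frozen=True)
class PredictTask:
    script: str                      # file to edit and run
    output_dir: str                  # where results are saved
    extra_settings: Tuple[str, ...]  # task-specific variables to edit


TASKS = {
    "t2v": PredictTask("predict_t2v.py", "samples/easyanimate-videos", ()),
    "i2v": PredictTask(
        "predict_i2v.py",
        "samples/easyanimate-videos_i2v",
        ("validation_image_start", "validation_image_end"),
    ),
    "v2v": PredictTask(
        "predict_v2v.py",
        "samples/easyanimate-videos_v2v",
        ("validation_video", "validation_image_end"),
    ),
    "control": PredictTask(
        "predict_v2v_control.py",
        "samples/easyanimate-videos_v2v_control",
        ("control_video", "validation_image_end"),
    ),
}


def settings_to_edit(task: str) -> Tuple[str, ...]:
    """Return every variable the README says to modify for a task."""
    return TASKS[task].extra_settings + COMMON_SETTINGS
```

For example, `settings_to_edit("i2v")` lists the two image variables followed by the four shared ones, which matches the image-to-video bullet.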

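#### d. Via the web UI

The web UI supports text-to-video, image-to-video, video-to-video, and generic control video generation (Canny, Pose, Depth, etc.).

- Step 1: Download the corresponding [weights](#model-zoo) and place them in the models folder.
- Step 2: Run app.py to open the Gradio page.
- Step 3: Select the generation model on the page, fill in prompt, neg_prompt, guidance_scale, seed, and so on, then click Generate and wait for the result, which is saved in the sample folder.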

# Quick Start
### 1. Cloud usage: AliyunDSW/Docker
#### a. From AliyunDSW
DSW offers free GPU time. Each user can apply once, and the allowance is valid for 3 months after applying.

Aliyun provides free GPU time through [Freetier](https://free.aliyun.com/?product=9602825&crowd=enterprise&spm=5176.28055625.J_5831864660.1.e939154aRgha4e&scm=20140722.M_9974135.P_110.MO_1806-ID_9974135-MID_9974135-CID_30683-ST_8512-V_1). Claim it and use it in Aliyun PAI-DSW to start EasyAnimate within 5 minutes.

[DSW Notebook](https://gallery.pai-ml.com/#/preview/deepLearning/cv/easyanimate_v5)

#### b. From ComfyUI
For our ComfyUI interface, see the [ComfyUI README](https://github.com/aigc-apps/EasyAnimate/blob/main/comfyui/README.md).


#### c. From Docker
If you use Docker, make sure the GPU driver and CUDA environment are correctly installed on your machine, then run the following commands in order:
```
# pull the image
docker pull mybigpai-public-registry.cn-beijing.cr.aliyuncs.com/easycv/torch_cuda:easyanimate

# start a container from the image
docker run -it -p 7860:7860 --network host --gpus all --security-opt seccomp:unconfined --shm-size 200g mybigpai-public-registry.cn-beijing.cr.aliyuncs.com/easycv/torch_cuda:easyanimate

# clone the code
git clone https://github.com/aigc-apps/EasyAnimate.git

# enter EasyAnimate's dir
cd EasyAnimate

# create the weight folders (-p also creates models/ if it is missing)
mkdir -p models/Diffusion_Transformer
mkdir -p models/Motion_Module
mkdir -p models/Personalized_Model

# Please use the Hugging Face link or ModelScope link to download the EasyAnimateV5.1 model.
# https://huggingface.co/alibaba-pai/EasyAnimateV5.1-12b-zh-InP
# https://modelscope.cn/models/PAI/EasyAnimateV5.1-12b-zh-InP
```
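
The block above leaves the weight download as a manual step. One possible way to script it is sketched below, assuming the `huggingface_hub` package is installed and that its `snapshot_download` function is used; the helper names `target_dir` and `download_weights` are invented for this example, and the README itself does not prescribe a download tool.

```python
from pathlib import Path

HF_ORG = "alibaba-pai"  # organization used in the Hugging Face links of this README


def target_dir(model_name: str, models_root: str = "models") -> Path:
    """Folder where the README expects a Diffusion Transformer checkpoint."""
    return Path(models_root) / "Diffusion_Transformer" / model_name


def download_weights(model_name: str, models_root: str = "models") -> Path:
    """Download one checkpoint (about 39 GB per the model table) into place."""
    # Imported lazily so the path helper works without huggingface_hub installed.
    from huggingface_hub import snapshot_download

    local_dir = target_dir(model_name, models_root)
    local_dir.mkdir(parents=True, exist_ok=True)
    snapshot_download(repo_id=f"{HF_ORG}/{model_name}", local_dir=str(local_dir))
    return local_dir
```

Calling `download_weights("EasyAnimateV5.1-12b-zh-InP")` from the EasyAnimate directory would fetch the image-to-video weights; check free disk space first, since each checkpoint is large.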

### 2. Local install: Environment Check/Downloading/Installation
#### a. Environment Check
We have verified that EasyAnimate runs in the following environments:

Details for Windows:
- OS: Windows 10
- python: python3.10 & python3.11
- pytorch: torch2.2.0
- CUDA: 11.8 & 12.1
- CUDNN: 8+
- GPU: Nvidia-3060 12G

Details for Linux:
- OS: Ubuntu 20.04, CentOS
- python: python3.10 & python3.11
- pytorch: torch2.2.0
- CUDA: 11.8 & 12.1
- CUDNN: 8+
- GPU: Nvidia-V100 16G & Nvidia-A10 24G & Nvidia-A100 40G & Nvidia-A100 80G

About 60 GB of free disk space is required, so please check before installing.

EasyAnimateV5.1-12B can generate the following video sizes with different amounts of GPU memory:
| GPU memory |384x672x72|384x672x49|576x1008x25|576x1008x49|768x1344x25|768x1344x49|
|----------|----------|----------|----------|----------|----------|----------|
| 16GB | 🧡 | 🧡 | ❌ | ❌ | ❌ | ❌ |
| 24GB | 🧡 | 🧡 | 🧡 | 🧡 | ❌ | ❌ |
| 40GB | ✅ | ✅ | ✅ | ✅ | ❌ | ❌ |
| 80GB | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ |

EasyAnimateV5.1-7B can generate the following video sizes with different amounts of GPU memory:
| GPU memory |384x672x72|384x672x49|576x1008x25|576x1008x49|768x1344x25|768x1344x49|
|----------|----------|----------|----------|----------|----------|----------|
| 16GB | 🧡 | 🧡 | ❌ | ❌ | ❌ | ❌ |
| 24GB | ✅ | ✅ | 🧡 | 🧡 | ❌ | ❌ |
| 40GB | ✅ | ✅ | ✅ | ✅ | ❌ | ❌ |
| 80GB | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ |

✅ means it can run under "model_cpu_offload", 🧡 means it can run under "model_cpu_offload_and_qfloat8", ⭕️ means it can run under "sequential_cpu_offload", and ❌ means it cannot run. Note that running with sequential_cpu_offload is slower.
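
The 12B table can be encoded as a lookup that returns the least aggressive mode listed for a given card and video size. This is only a sketch: the cell values are transcribed from the 12B table above, while treating a card between two tiers as the next lower tier is a conservative assumption made here, not something the README states.

```python
from typing import Optional

SIZES = [
    "384x672x72", "384x672x49", "576x1008x25",
    "576x1008x49", "768x1344x25", "768x1344x49",
]

OFFLOAD = "model_cpu_offload"               # shown as a check mark in the table
QFLOAT8 = "model_cpu_offload_and_qfloat8"   # shown as an orange heart
NO = None                                   # shown as a cross: cannot run

# Rows of the EasyAnimateV5.1-12B table, keyed by GPU memory in GB.
TABLE_12B = {
    16: [QFLOAT8, QFLOAT8, NO, NO, NO, NO],
    24: [QFLOAT8, QFLOAT8, QFLOAT8, QFLOAT8, NO, NO],
    40: [OFFLOAD, OFFLOAD, OFFLOAD, OFFLOAD, NO, NO],
    80: [OFFLOAD, OFFLOAD, OFFLOAD, OFFLOAD, OFFLOAD, OFFLOAD],
}


def recommended_mode(vram_gb: float, size: str) -> Optional[str]:
    """Return the table's GPU_memory_mode for this card and size, or None."""
    column = SIZES.index(size)  # raises ValueError for sizes not in the table
    # Assumption: use the largest documented tier that fits in the card.
    tiers = [tier for tier in sorted(TABLE_12B) if tier <= vram_gb]
    if not tiers:
        return None
    return TABLE_12B[tiers[-1]][column]
```

Under that assumption a 48 GB card is treated like the 40 GB row, so the two 768x1344 sizes are reported as not runnable even though they might fit in practice.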

Some GPUs, such as the 2080ti and V100, do not support torch.bfloat16. For these cards, change weight_dtype in app.py and the predict files to torch.float16 before running.
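
The choice of dtype can be made from the CUDA compute capability. The sketch below assumes that native bfloat16 support starts with compute capability 8.0 (Ampere), which is consistent with the 2080ti (7.5) and V100 (7.0) being named as unsupported; the function name is invented for this example, and it returns the dtype name as a string so that it runs without PyTorch installed.

```python
def choose_weight_dtype(compute_capability_major: int) -> str:
    """Pick the weight_dtype name for a GPU from its major compute capability.

    Assumption: bfloat16 is used on compute capability 8.x and newer,
    and float16 on older cards such as the 2080ti (7.5) and V100 (7.0).
    """
    return "bfloat16" if compute_capability_major >= 8 else "float16"
```

With PyTorch available, the major version can be read from `torch.cuda.get_device_capability()` and the result resolved with `getattr(torch, choose_weight_dtype(major))` before assigning it to weight_dtype.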

Generation time for EasyAnimateV5.1-12B with 25 steps on different GPUs:
| GPU |384x672x72|384x672x49|576x1008x25|576x1008x49|768x1344x25|768x1344x49|
|----------|----------|----------|----------|----------|----------|----------|
| A10 24GB |~120s (4.8s/it)|~240s (9.6s/it)|~320s (12.7s/it)| ~750s (29.8s/it)| ❌ | ❌ |
| A100 80GB |~45s (1.75s/it)|~90s (3.7s/it)|~120s (4.7s/it)|~300s (11.4s/it)|~265s (10.6s/it)| ~710s (28.3s/it)|
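
The totals in this table follow from the per-iteration figures: time is roughly the seconds per iteration multiplied by the 25 sampling steps. A minimal sketch of that arithmetic, with a function name chosen here for illustration:

```python
def estimated_seconds(seconds_per_iteration: float, steps: int = 25) -> float:
    """Estimate the denoising time as seconds-per-iteration times step count."""
    return seconds_per_iteration * steps
```

For the A10 at 4.8 s/it this gives about 120 seconds, matching the first cell. Some cells are rounded more loosely (11.4 s/it gives about 285 seconds against a listed ~300), so treat the table as approximate and expect some overhead outside the sampling loop.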

#### b. Weights
We recommend placing the [weights](#model-zoo) along the specified paths:

EasyAnimateV5.1:
```
📦 models/
├── 📂 Diffusion_Transformer/
│   ├── 📂 EasyAnimateV5.1-12b-zh-InP/
│   ├── 📂 EasyAnimateV5.1-12b-zh-Control/
│   ├── 📂 EasyAnimateV5.1-12b-zh-Control-Camera/
│   └── 📂 EasyAnimateV5.1-12b-zh/
├── 📂 Personalized_Model/
│   └── your trained transformer model / your trained lora model (for UI load)
```
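
Before running a prediction file it can help to confirm that the folders match this layout. The following is a small sketch using only the standard library; the folder names are taken from the tree above, and `missing_weights` is a helper invented for this example.

```python
from pathlib import Path
from typing import List

EXPECTED_MODELS = [
    "EasyAnimateV5.1-12b-zh-InP",
    "EasyAnimateV5.1-12b-zh-Control",
    "EasyAnimateV5.1-12b-zh-Control-Camera",
    "EasyAnimateV5.1-12b-zh",
]


def missing_weights(models_root: str = "models") -> List[str]:
    """List the expected checkpoint folders that are not present yet."""
    base = Path(models_root) / "Diffusion_Transformer"
    return [name for name in EXPECTED_MODELS if not (base / name).is_dir()]
```

You only need the checkpoint for the task you plan to run, so a non-empty result is not an error by itself; it simply shows which folders are still absent.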

# Contact Us
1. Scan the QR code below or search for group number 77450006752 to join the DingTalk group.
2. Scan the QR code below to join the WeChat group (if the QR code has expired, scan the WeChat QR code of the person on the far right, who will invite you to the group).
<img src="https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/easyanimate/asset/group/dd.png" alt="ding group" width="30%"/>
<img src="https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/easyanimate/asset/group/wechat.jpg" alt="Wechat group" width="30%"/>
<img src="https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/easyanimate/asset/group/person.jpg" alt="Person" width="30%"/>

# Reference
- CogVideo: https://github.com/THUDM/CogVideo/
- Flux: https://github.com/black-forest-labs/flux
- magvit: https://github.com/google-research/magvit
- PixArt: https://github.com/PixArt-alpha/PixArt-alpha
- Open-Sora-Plan: https://github.com/PKU-YuanGroup/Open-Sora-Plan
- Open-Sora: https://github.com/hpcaitech/Open-Sora
- Animatediff: https://github.com/guoyww/AnimateDiff
- HunYuan DiT: https://github.com/tencent/HunyuanDiT
- ComfyUI-KJNodes: https://github.com/kijai/ComfyUI-KJNodes
- ComfyUI-EasyAnimateWrapper: https://github.com/kijai/ComfyUI-EasyAnimateWrapper
- ComfyUI-CameraCtrl-Wrapper: https://github.com/chaojie/ComfyUI-CameraCtrl-Wrapper
- CameraCtrl: https://github.com/hehao13/CameraCtrl
- DragAnything: https://github.com/showlab/DragAnything

# License
This project is licensed under the [Apache License (Version 2.0)](https://github.com/modelscope/modelscope/blob/master/LICENSE).

|

README_en.md

ADDED

|

@@ -0,0 +1,384 @@


[arXiv](https://arxiv.org/abs/2405.18991)
[Project Page](https://easyanimate.github.io/)
[ModelScope Studio](https://modelscope.cn/studios/PAI/EasyAnimate/summary)
[Hugging Face Space](https://huggingface.co/spaces/alibaba-pai/EasyAnimate)
[Discord](https://discord.gg/UzkpB4Bn)

# Introduction

EasyAnimate is a pipeline based on the transformer architecture, designed for generating AI images and videos and for training baseline and LoRA models for Diffusion Transformers. It supports direct prediction from pretrained EasyAnimate models, generating videos at various resolutions, approximately 6 seconds long, at 8 fps (EasyAnimateV5.1, 1 to 49 frames). Users can also train their own baseline and LoRA models for specific style transformations.

[English](./README_en.md) | [简体中文](./README.md)

# Model zoo

|

| 15 |

+

|

| 16 |

+

EasyAnimateV5.1:

|

| 17 |

+

|

| 18 |

+

12B:

|

| 19 |

+

| Name | Type | Storage Space | Hugging Face | Model Scope | Description |

|

| 20 |

+

|--|--|--|--|--|--|

|

| 21 |

+

| EasyAnimateV5.1-12b-zh-InP | EasyAnimateV5.1 | 39 GB | [🤗Link](https://huggingface.co/alibaba-pai/EasyAnimateV5.1-12b-zh-InP) | [😄Link](https://modelscope.cn/models/PAI/EasyAnimateV5.1-12b-zh-InP) | Official image-to-video weights. Supports video prediction at multiple resolutions (512, 768, 1024), trained with 49 frames at 8 frames per second, and supports for multilingual prediction. |

|

| 22 |

+

| EasyAnimateV5.1-12b-zh-Control | EasyAnimateV5.1 | 39 GB | [🤗Link](https://huggingface.co/alibaba-pai/EasyAnimateV5.1-12b-zh-Control) | [😄Link](https://modelscope.cn/models/PAI/EasyAnimateV5.1-12b-zh-Control) | Official video control weights, supporting control conditions such as Canny, Depth, Pose, MLSD, and trajectory control. Supports video prediction at multiple resolutions (512, 768, 1024), trained with 49 frames at 8 frames per second, and supports multilingual prediction. |

|

| 23 |

+

| EasyAnimateV5.1-12b-zh-Control-Camera | EasyAnimateV5.1 | 39 GB | [🤗Link](https://huggingface.co/alibaba-pai/EasyAnimateV5.1-12b-zh-Control-Camera) | [😄Link](https://modelscope.cn/models/PAI/EasyAnimateV5.1-12b-zh-Control-Camera) | Official video camera control weights, supporting control of the generation direction by inputting camera motion trajectories. Supports video prediction at multiple resolutions (512, 768, 1024), trained with 49 frames at 8 frames per second, and supports multilingual prediction. |

|

| 24 |

+

| EasyAnimateV5.1-12b-zh | EasyAnimateV5.1 | 39 GB | [🤗Link](https://huggingface.co/alibaba-pai/EasyAnimateV5.1-12b-zh) | [😄Link](https://modelscope.cn/models/PAI/EasyAnimateV5.1-12b-zh) | Official text-to-video weights. Supports video prediction at multiple resolutions (512, 768, 1024), trained with 49 frames at 8 frames per second, and supports multilingual prediction. |
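
One possible way to fetch any of the weights listed above is `snapshot_download` from the `huggingface_hub` package. The sketch below is not taken from the EasyAnimate repository: the helper names are hypothetical, and the `models/Diffusion_Transformer` target folder is an assumption based on the directory created in the Docker instructions. Each checkpoint is about 39 GB, so check disk space first.

```python
from pathlib import Path

HF_ORG = "alibaba-pai"  # organisation used in the Hugging Face links above
MODELS = [
    "EasyAnimateV5.1-12b-zh-InP",
    "EasyAnimateV5.1-12b-zh-Control",
    "EasyAnimateV5.1-12b-zh-Control-Camera",
    "EasyAnimateV5.1-12b-zh",
]


def local_dir_for(name: str, root: str = "models/Diffusion_Transformer") -> Path:
    """Folder where the weights would be stored (assumed layout)."""
    return Path(root) / name


def download(name: str, root: str = "models/Diffusion_Transformer") -> Path:
    """Download one checkpoint from the Hugging Face Hub into local_dir_for(name)."""
    if name not in MODELS:
        raise ValueError(f"unknown model: {name}")
    # Imported lazily so the path helper works without huggingface_hub installed.
    from huggingface_hub import snapshot_download

    target = local_dir_for(name, root)
    snapshot_download(repo_id=f"{HF_ORG}/{name}", local_dir=str(target))
    return target
```

For example, `download("EasyAnimateV5.1-12b-zh-InP")` would place the image-to-video weights under `models/Diffusion_Transformer/EasyAnimateV5.1-12b-zh-InP`.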

|

| 25 |

+

|

| 26 |

+

# Video Result

|

| 27 |

+

|

| 28 |

+

### Image to Video with EasyAnimateV5.1-12b-zh-InP

|

| 29 |

+

<table border="0" style="width: 100%; text-align: left; margin-top: 20px;">

|

| 30 |

+

<tr>

|

| 31 |

+

<td>

|

| 32 |

+

<video src="https://github.com/user-attachments/assets/74a23109-f555-4026-a3d8-1ac27bb3884c" width="100%" controls autoplay loop></video>

|

| 33 |

+

</td>

|

| 34 |

+

<td>

|

| 35 |

+

<video src="https://github.com/user-attachments/assets/ab5aab27-fbd7-4f55-add9-29644125bde7" width="100%" controls autoplay loop></video>

|

| 36 |

+

</td>

|

| 37 |

+

<td>

|

| 38 |

+

<video src="https://github.com/user-attachments/assets/238043c2-cdbd-4288-9857-a273d96f021f" width="100%" controls autoplay loop></video>

|

| 39 |

+

</td>

|

| 40 |

+

<td>

|

| 41 |

+

<video src="https://github.com/user-attachments/assets/48881a0e-5513-4482-ae49-13a0ad7a2557" width="100%" controls autoplay loop></video>

|

| 42 |

+

</td>

|

| 43 |

+

</tr>

|

| 44 |

+

</table>

|

| 45 |

+

|

| 46 |

+

|

| 47 |

+

<table border="0" style="width: 100%; text-align: left; margin-top: 20px;">

|

| 48 |

+

<tr>

|

| 49 |

+

<td>

|

| 50 |

+

<video src="https://github.com/user-attachments/assets/3e7aba7f-6232-4f39-80a8-6cfae968f38c" width="100%" controls autoplay loop></video>

|

| 51 |

+

</td>

|

| 52 |

+

<td>

|

| 53 |

+

<video src="https://github.com/user-attachments/assets/986d9f77-8dc3-45fa-bc9d-8b26023fffbc" width="100%" controls autoplay loop></video>

|

| 54 |

+

</td>

|

| 55 |

+

<td>

|

| 56 |

+

<video src="https://github.com/user-attachments/assets/7f62795a-2b3b-4c14-aeb1-1230cb818067" width="100%" controls autoplay loop></video>

|

| 57 |

+

</td>

|

| 58 |

+

<td>

|

| 59 |

+

<video src="https://github.com/user-attachments/assets/b581df84-ade1-4605-a7a8-fd735ce3e222" width="100%" controls autoplay loop></video>

|

| 61 |

+

</td>

|

| 62 |

+

</tr>

|

| 63 |

+

</table>

|

| 64 |

+

|

| 65 |

+

<table border="0" style="width: 100%; text-align: left; margin-top: 20px;">

|

| 66 |

+

<tr>

|

| 67 |

+

<td>

|

| 68 |

+

<video src="https://github.com/user-attachments/assets/eab1db91-1082-4de2-bb0a-d97fd25ceea1" width="100%" controls autoplay loop></video>

|

| 69 |

+

</td>

|

| 70 |

+

<td>

|

| 71 |

+

<video src="https://github.com/user-attachments/assets/3fda0e96-c1a8-4186-9c4c-043e11420f05" width="100%" controls autoplay loop></video>

|

| 72 |

+

</td>

|

| 73 |

+

<td>

|

| 74 |

+

<video src="https://github.com/user-attachments/assets/4b53145d-7e98-493a-83c9-4ea4f5b58289" width="100%" controls autoplay loop></video>

|

| 75 |

+

</td>

|

| 76 |

+

<td>

|

| 77 |

+

<video src="https://github.com/user-attachments/assets/75f7935f-17a8-4e20-b24c-b61479cf07fc" width="100%" controls autoplay loop></video>

|

| 78 |

+

</td>

|

| 79 |

+

</tr>

|

| 80 |

+

</table>

|

| 81 |

+

|

| 82 |

+

### Text to Video with EasyAnimateV5.1-12b-zh

|

| 83 |

+

|

| 84 |

+

<table border="0" style="width: 100%; text-align: left; margin-top: 20px;">

|

| 85 |

+

<tr>

|

| 86 |

+

<td>

|

| 87 |

+

<video src="https://github.com/user-attachments/assets/8818dae8-e329-4b08-94fa-00d923f38fd2" width="100%" controls autoplay loop></video>

|

| 88 |

+

</td>

|

| 89 |

+

<td>

|

| 90 |

+

<video src="https://github.com/user-attachments/assets/d3e483c3-c710-47d2-9fac-89f732f2260a" width="100%" controls autoplay loop></video>

|

| 91 |

+

</td>

|

| 92 |

+

<td>

|

| 93 |

+

<video src="https://github.com/user-attachments/assets/4dfa2067-d5d4-4741-a52c-97483de1050d" width="100%" controls autoplay loop></video>

|

| 94 |

+

</td>

|

| 95 |

+

<td>

|

| 96 |

+

<video src="https://github.com/user-attachments/assets/fb44c2db-82c6-427e-9297-97dcce9a4948" width="100%" controls autoplay loop></video>

|

| 97 |

+

</td>

|

| 98 |

+

</tr>

|

| 99 |

+

</table>

|

| 100 |

+

|

| 101 |

+

<table border="0" style="width: 100%; text-align: left; margin-top: 20px;">

|

| 102 |

+

<tr>

|

| 103 |

+

<td>

|

| 104 |

+

<video src="https://github.com/user-attachments/assets/dc6b8eaf-f21b-4576-a139-0e10438f20e4" width="100%" controls autoplay loop></video>

|

| 105 |

+

</td>

|

| 106 |

+

<td>

|

| 107 |

+

<video src="https://github.com/user-attachments/assets/b3f8fd5b-c5c8-44ee-9b27-49105a08fbff" width="100%" controls autoplay loop></video>

|

| 108 |

+

</td>

|

| 109 |

+

<td>

|

| 110 |

+

<video src="https://github.com/user-attachments/assets/a68ed61b-eed3-41d2-b208-5f039bf2788e" width="100%" controls autoplay loop></video>

|

| 111 |

+

</td>

|

| 112 |

+

<td>

|

| 113 |

+

<video src="https://github.com/user-attachments/assets/4e33f512-0126-4412-9ae8-236ff08bcd21" width="100%" controls autoplay loop></video>

|

| 114 |

+

</td>

|

| 115 |

+

</tr>

|

| 116 |

+

</table>

|

| 117 |

+

|

| 118 |

+

### Control Video with EasyAnimateV5.1-12b-zh-Control

|

| 119 |

+

|

| 120 |

+

Trajectory Control:

|

| 121 |

+

<table border="0" style="width: 100%; text-align: left; margin-top: 20px;">

|

| 122 |

+

<tr>

|

| 123 |

+

<td>

|

| 124 |

+

<video src="https://github.com/user-attachments/assets/bf3b8970-ca7b-447f-8301-72dfe028055b" width="100%" controls autoplay loop></video>

|

| 125 |

+

</td>

|

| 126 |

+

<td>

|

| 127 |

+

<video src="https://github.com/user-attachments/assets/63a7057b-573e-4f73-9d7b-8f8001245af4" width="100%" controls autoplay loop></video>

|

| 128 |

+

</td>

|

| 129 |

+

<td>

|

| 130 |

+

<video src="https://github.com/user-attachments/assets/090ac2f3-1a76-45cf-abe5-4e326113389b" width="100%" controls autoplay loop></video>

|

| 131 |

+

</td>

|

| 132 |

+

</tr>

|

| 133 |

+

</table>

|

| 134 |

+

|

| 135 |

+

Generic Control Video (Canny, Pose, Depth, etc.):

|

| 136 |

+

<table border="0" style="width: 100%; text-align: left; margin-top: 20px;">

|

| 137 |

+

<tr>

|

| 138 |

+

<td>

|

| 139 |

+

<video src="https://github.com/user-attachments/assets/53002ce2-dd18-4d4f-8135-b6f68364cabd" width="100%" controls autoplay loop></video>

|

| 140 |

+

</td>

|

| 141 |

+

<td>

|

| 142 |

+

<video src="https://github.com/user-attachments/assets/fce43c0b-81fa-4ab2-9ca7-78d786f520e6" width="100%" controls autoplay loop></video>

|

| 143 |

+

</td>

|

| 144 |

+

<td>

|

| 145 |

+

<video src="https://github.com/user-attachments/assets/b208b92c-5add-4ece-a200-3dbbe47b93c3" width="100%" controls autoplay loop></video>

|

| 146 |

+

</td>

|

| 147 |

+

</tr>
<tr>

|

| 148 |

+

<td>

|

| 149 |

+

<video src="https://github.com/user-attachments/assets/3aec95d5-d240-49fb-a9e9-914446c7a4cf" width="100%" controls autoplay loop></video>

|

| 150 |

+

</td>

|

| 151 |

+

<td>

|

| 152 |

+

<video src="https://github.com/user-attachments/assets/60fa063b-5c1f-485f-b663-09bd6669de3f" width="100%" controls autoplay loop></video>

|

| 153 |

+

</td>

|

| 154 |

+

<td>

|

| 155 |

+

<video src="https://github.com/user-attachments/assets/4adde728-8397-42f3-8a2a-23f7b39e9a1e" width="100%" controls autoplay loop></video>

|

| 156 |

+

</td>

|

| 157 |

+

</tr>

|

| 158 |

+

</table>

|

| 159 |

+

|

| 160 |

+

### Camera Control with EasyAnimateV5.1-12b-zh-Control-Camera

|

| 161 |

+

|

| 162 |

+

<table border="0" style="width: 100%; text-align: left; margin-top: 20px;">

|

| 163 |

+

<tr>

|

| 164 |

+

<td>

|

| 165 |

+

Pan Up

|

| 166 |

+

</td>

|

| 167 |

+

<td>

|

| 168 |

+

Pan Left

|

| 169 |

+

</td>

|

| 170 |

+

<td>

|

| 171 |

+

Pan Right

|

| 172 |

+

</td>

|

| 173 |

+

</tr>
<tr>

|

| 174 |

+

<td>

|

| 175 |

+

<video src="https://github.com/user-attachments/assets/a88f81da-e263-4038-a5b3-77b26f79719e" width="100%" controls autoplay loop></video>

|

| 176 |

+

</td>

|

| 177 |

+

<td>

|

| 178 |

+

<video src="https://github.com/user-attachments/assets/e346c59d-7bca-4253-97fb-8cbabc484afb" width="100%" controls autoplay loop></video>

|

| 179 |

+

</td>

|

| 180 |

+

<td>

|

| 181 |

+

<video src="https://github.com/user-attachments/assets/4de470d4-47b7-46e3-82d3-b714a2f6aef6" width="100%" controls autoplay loop></video>

|

| 182 |

+

</td>

|

| 183 |

+

</tr>
<tr>

|

| 184 |

+

<td>

|

| 185 |

+

Pan Down

|

| 186 |

+

</td>

|

| 187 |

+

<td>

|

| 188 |

+

Pan Up + Pan Left

|

| 189 |

+

</td>

|

| 190 |

+

<td>

|

| 191 |

+

Pan Up + Pan Right

|

| 192 |

+

</td>

|

| 193 |

+

</tr>
<tr>

|

| 194 |

+

<td>

|

| 195 |

+

<video src="https://github.com/user-attachments/assets/7a3fecc2-d41a-4de3-86cd-5e19aea34a0d" width="100%" controls autoplay loop></video>

|

| 196 |

+

</td>

|

| 197 |

+

<td>

|

| 198 |

+

<video src="https://github.com/user-attachments/assets/cb281259-28b6-448e-a76f-643c3465672e" width="100%" controls autoplay loop></video>

|

| 199 |

+

</td>

|

| 200 |

+

<td>

|

| 201 |

+

<video src="https://github.com/user-attachments/assets/44faf5b6-d83c-4646-9436-971b2b9c7216" width="100%" controls autoplay loop></video>

|

| 202 |

+

</td>

|

| 203 |

+

</tr>

|

| 204 |

+

</table>

|

| 205 |

+

|

| 206 |

+

# How to use

|

| 207 |

+

|

| 208 |

+

#### a. Memory-Saving Options

|

| 209 |

+

Because EasyAnimateV5 and V5.1 have a very large number of parameters, memory-saving options are needed to run them on consumer-grade graphics cards. Each prediction file provides a GPU_memory_mode setting, which can be model_cpu_offload, model_cpu_offload_and_qfloat8, or sequential_cpu_offload.

|

| 210 |

+

|

| 211 |

+

- model_cpu_offload means the entire model will move to the CPU after use, saving some memory.

|

| 212 |

+

- model_cpu_offload_and_qfloat8 means the entire model will move to the CPU after use and applies float8 quantization to the transformer model, saving more memory.

|

| 213 |

+

- sequential_cpu_offload means each layer of the model moves to CPU after use, which is slower but saves a lot of memory.

|

| 214 |

+

|

| 215 |

+

qfloat8 may reduce model performance but saves more memory. If memory is sufficient, model_cpu_offload is recommended.
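
The three modes can be pictured as a small dispatch over the pipeline's offload hooks. The sketch below is an illustration, not the code in the prediction files: it assumes a diffusers-style pipeline object that exposes `enable_model_cpu_offload()` and `enable_sequential_cpu_offload()`, and `quantize_transformer` is a hypothetical callback standing in for whatever float8 quantization routine is used.

```python
from typing import Callable, Optional

VALID_MODES = (
    "model_cpu_offload",
    "model_cpu_offload_and_qfloat8",
    "sequential_cpu_offload",
)


def apply_memory_mode(pipe, mode: str,
                      quantize_transformer: Optional[Callable] = None):
    """Configure `pipe` for one of the GPU_memory_mode values described above."""
    if mode not in VALID_MODES:
        raise ValueError(f"GPU_memory_mode must be one of {VALID_MODES}")
    if mode == "sequential_cpu_offload":
        # Layer-by-layer offload: slowest, but lowest GPU memory use.
        pipe.enable_sequential_cpu_offload()
        return pipe
    if mode == "model_cpu_offload_and_qfloat8":
        if quantize_transformer is None:
            raise ValueError("a quantization callback is required for qfloat8")
        # Hypothetical hook: quantize the transformer weights to float8 first.
        quantize_transformer(pipe.transformer)
    # Whole-model offload: each sub-model moves back to the CPU after use.
    pipe.enable_model_cpu_offload()
    return pipe
```

Keeping the quantization step as an injected callback keeps the sketch independent of any particular float8 implementation.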

|

| 216 |

+

|

| 217 |

+

#### b. Via ComfyUI

|

| 218 |

+

For more details, see the [ComfyUI README](https://github.com/aigc-apps/EasyAnimate/blob/main/comfyui/README.md).

|

| 219 |

+

|

| 220 |

+

#### c. Run Python Files

|

| 221 |

+

- Step 1: Download the corresponding [weights](#model-zoo) and place them in the models folder.

|

| 222 |

+

- Step 2: Use different files for predictions based on the weights and prediction goals.

|

| 223 |

+

- Text-to-Video:

|

| 224 |

+

- Modify the prompt, neg_prompt, guidance_scale, and seed in the predict_t2v.py file.

|

| 225 |

+

- Then run the predict_t2v.py file and wait for the results, which are stored in the samples/easyanimate-videos folder.

|

| 226 |

+

- Image-to-Video:

|

| 227 |

+

- Modify validation_image_start, validation_image_end, prompt, neg_prompt, guidance_scale, and seed in the predict_i2v.py file.

|

| 228 |

+

- validation_image_start is the starting image, and validation_image_end is the ending image of the video.

|

| 229 |

+

- Then run the predict_i2v.py file and wait for the results, which are stored in the samples/easyanimate-videos_i2v folder.

|

| 230 |

+

- Video-to-Video:

|

| 231 |

+

- Modify validation_video, validation_image_end, prompt, neg_prompt, guidance_scale, and seed in the predict_v2v.py file.

|

| 232 |

+

- validation_video is the reference video for video-to-video. You can run a demo with the following video: [Demo Video](https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/cogvideox_fun/asset/v1/play_guitar.mp4)

|

| 233 |

+

- Then run the predict_v2v.py file and wait for the results, which are stored in the samples/easyanimate-videos_v2v folder.

|

| 234 |

+

- Generic Control Video (Canny, Pose, Depth, etc.):

|

| 235 |

+

- Modify control_video, validation_image_end, prompt, neg_prompt, guidance_scale, and seed in the predict_v2v_control.py file.

|

| 236 |

+

- control_video is the control video for video generation, extracted using Canny, Pose, Depth, etc. You can run a demo with the following video: [Demo Video](https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/cogvideox_fun/asset/v1.1/pose.mp4)

|

| 237 |

+

- Then run the predict_v2v_control.py file and wait for the results, which are stored in the samples/easyanimate-videos_v2v_control folder.

|

| 238 |

+

- Trajectory Control Video:

|

| 239 |

+

- Modify control_video, ref_image, validation_image_end, prompt, neg_prompt, guidance_scale, and seed in the predict_v2v_control.py file.

|

| 240 |

+

- control_video is the control video, and ref_image is the reference first frame image. You can run a demo with the following image and video: [Demo Image](https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/easyanimate/asset/v5.1/dog.png), [Demo Video](https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/easyanimate/asset/v5.1/trajectory_demo.mp4)

|

| 241 |

+

- Then run the predict_v2v_control.py file and wait for the results, which are stored in the samples/easyanimate-videos_v2v_control folder.

|

| 242 |

+

- Interaction via ComfyUI is recommended.

|

| 243 |

+

- Camera Control Video:

|

| 244 |

+

- Modify control_camera_txt, ref_image, validation_image_end, prompt, neg_prompt, guidance_scale, and seed in the predict_v2v_control.py file.

|

| 245 |

+

- control_camera_txt is the control file for camera control video, and ref_image is the reference first frame image. You can run a demo with the following image and control file: [Demo Image](https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/cogvideox_fun/asset/v1/firework.png), [Demo File (from CameraCtrl)](https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/easyanimate/asset/v5.1/0a3b5fb184936a83.txt)

|

| 246 |

+

- Then run the predict_v2v_control.py file and wait for the results, which are stored in the samples/easyanimate-videos_v2v_control folder.

|

| 247 |

+

- Interaction via ComfyUI is recommended.

|

| 248 |

+

- Step 3: To combine with other backbones or a LoRA that you trained yourself, edit the predict_t2v.py file and set lora_path accordingly.
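
The script and output-folder pairs from the steps above can be collected in one lookup table. This is a sketch only: the short task keys and the `command_for` helper are hypothetical, and it assumes the scripts are run from the repository root after their variables (prompt, neg_prompt, guidance_scale, seed, and so on) have been edited in the file.

```python
import sys

# task key -> (prediction script, folder where results are stored)
TASKS = {
    "t2v": ("predict_t2v.py", "samples/easyanimate-videos"),
    "i2v": ("predict_i2v.py", "samples/easyanimate-videos_i2v"),
    "v2v": ("predict_v2v.py", "samples/easyanimate-videos_v2v"),
    "control": ("predict_v2v_control.py", "samples/easyanimate-videos_v2v_control"),
}


def command_for(task: str):
    """Return (argv, output_folder) for a task key such as 't2v'."""
    if task not in TASKS:
        raise KeyError(f"unknown task {task!r}; choose from {sorted(TASKS)}")
    script, output_folder = TASKS[task]
    return [sys.executable, script], output_folder


argv, out_dir = command_for("t2v")
print(" ".join(argv[1:]), "->", out_dir)
```

The returned `argv` can be passed to `subprocess.run(argv, check=True)`; trajectory control and camera control both use the `control` entry, since they share predict_v2v_control.py.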

|

| 249 |

+

|

| 250 |

+

#### d. Via WebUI Interface

|

| 251 |

+

|

| 252 |

+

WebUI supports text-to-video, image-to-video, video-to-video, and control-based video generation (such as Canny, Pose, Depth, etc.).

|

| 253 |

+

|

| 254 |

+

- Step 1: Download the corresponding [weights](#model-zoo) and place them in the models folder.

|

| 255 |

+

- Step 2: Run the app.py file to enter the Gradio page.

|

| 256 |

+

- Step 3: Choose the generation model from the page, fill in prompt, neg_prompt, guidance_scale, seed, etc., click generate, and wait for the results, which are stored in the sample folder.

|

| 257 |

+

|

| 258 |

+

# Quick Start

|

| 259 |

+

### 1. Cloud usage: AliyunDSW/Docker

|

| 260 |

+

#### a. From AliyunDSW

|

| 261 |

+

DSW offers free GPU time. Each user can apply for it once, and it is valid for 3 months after the application.

|

| 262 |

+

|

| 263 |

+

Aliyun provides free GPU time through [Freetier](https://free.aliyun.com/?product=9602825&crowd=enterprise&spm=5176.28055625.J_5831864660.1.e939154aRgha4e&scm=20140722.M_9974135.P_110.MO_1806-ID_9974135-MID_9974135-CID_30683-ST_8512-V_1). Claim it and use it in Aliyun PAI-DSW to start EasyAnimate within 5 minutes!

|

| 264 |

+

|

| 265 |

+

[DSW Notebook](https://gallery.pai-ml.com/#/preview/deepLearning/cv/easyanimate_v5)

|

| 266 |

+

|

| 267 |

+

#### b. From ComfyUI

|

| 268 |

+

For details on our ComfyUI interface, please refer to the [ComfyUI README](https://github.com/aigc-apps/EasyAnimate/blob/main/comfyui/README.md).

|

| 269 |

+

|

| 270 |

+

|

| 271 |

+

#### c. From docker

|

| 272 |

+

If you are using Docker, please make sure that the graphics card driver and CUDA environment are installed correctly on your machine.

|

| 273 |

+

|

| 274 |

+

Then run the following commands:

|

| 275 |

+

```

|

| 276 |

+

# pull image

|

| 277 |

+

docker pull mybigpai-public-registry.cn-beijing.cr.aliyuncs.com/easycv/torch_cuda:easyanimate

|

| 278 |

+

|

| 279 |

+

# enter image

|

| 280 |

+

docker run -it -p 7860:7860 --network host --gpus all --security-opt seccomp:unconfined --shm-size 200g mybigpai-public-registry.cn-beijing.cr.aliyuncs.com/easycv/torch_cuda:easyanimate

|

| 281 |

+

|

| 282 |

+

# clone code

|

| 283 |

+

git clone https://github.com/aigc-apps/EasyAnimate.git

|

| 284 |

+

|

| 285 |

+

# enter EasyAnimate's dir

|

| 286 |

+

cd EasyAnimate

|

| 287 |

+

|

| 288 |

+

# download weights

|

| 289 |

+

mkdir models/Diffusion_Transformer

|

| 290 |

+

mkdir models/Motion_Module

|