---
license: apache-2.0
---

# DETR-Resnet50 (semantic segmentation) Core ML Models

See [the Files tab](https://huggingface.co/coreml-projects/detr-resnet50-semantic-segmentation/tree/main) for converted models.

This is a DEtection TRansformer (DETR) model trained end-to-end on COCO 2017 object detection (118k annotated images). It was introduced in the paper [End-to-End Object Detection with Transformers](https://arxiv.org/abs/2005.12872) by Carion et al. and first released in [this repository](https://github.com/facebookresearch/detr).

Disclaimer: The team releasing DETR did not write a model card for this model, so this model card was written by the Hugging Face team.

## Model description

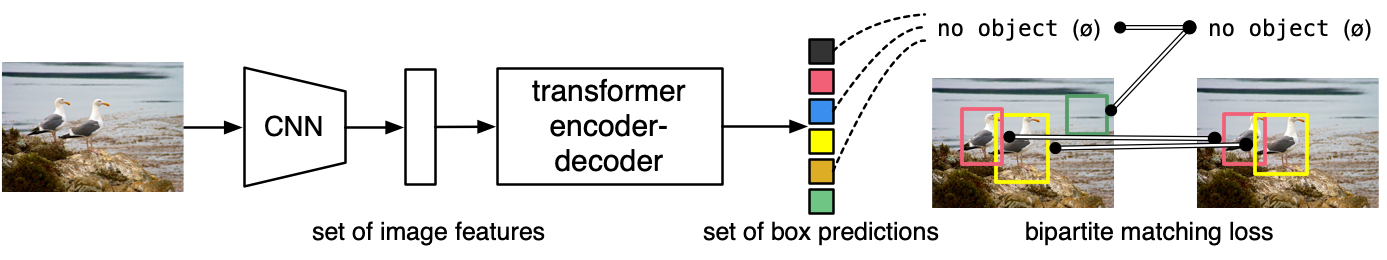

The DETR model is an encoder-decoder transformer with a convolutional backbone. Two heads are added on top of the decoder outputs to perform object detection: a linear layer for the class labels and an MLP (multi-layer perceptron) for the bounding boxes. The model uses so-called object queries to detect objects in an image. Each object query looks for a particular object in the image. For COCO, the number of object queries is set to 100.

The model is trained using a "bipartite matching loss": the predicted classes and bounding boxes of each of the N = 100 object queries are compared to the ground truth annotations, padded up to the same length N (so if an image contains only 4 objects, the remaining 96 annotations have "no object" as class and "no bounding box" as bounding box). The Hungarian matching algorithm is used to create an optimal one-to-one mapping between each of the N queries and each of the N annotations. Next, standard cross-entropy (for the classes) and a linear combination of the L1 and generalized IoU losses (for the bounding boxes) are used to optimize the parameters of the model.
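
The padding and one-to-one matching described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the DETR implementation: a brute-force search over permutations stands in for the Hungarian algorithm (it finds the same optimum but is only feasible for a handful of queries), the pair cost omits the generalized IoU term, and the names `pair_cost`, `match`, and `NO_OBJECT` are hypothetical.

```python
from itertools import permutations

NO_OBJECT = None  # hypothetical marker for a padded "no object" annotation


def l1(box_a, box_b):
    """L1 distance between two (cx, cy, w, h) boxes."""
    return sum(abs(a - b) for a, b in zip(box_a, box_b))


def pair_cost(pred, target):
    """Cost of assigning one query's prediction to one (possibly padded) annotation."""
    if target is NO_OBJECT:
        return 0.0  # assumption: padded slots cost the same for every query
    class_probs, box = pred
    label, gt_box = target
    # A higher probability for the true class lowers the cost.
    return -class_probs[label] + l1(box, gt_box)


def match(preds, targets):
    """Return, per query, the index of its matched annotation or None for "no object"."""
    # Pad the annotations to the number of queries, as described in the text.
    padded = list(targets) + [NO_OBJECT] * (len(preds) - len(targets))
    # Brute force stands in for the Hungarian algorithm (tiny N only).
    best = min(
        permutations(range(len(padded))),
        key=lambda perm: sum(pair_cost(p, padded[j]) for p, j in zip(preds, perm)),
    )
    return [j if j < len(targets) else None for j in best]


# Three queries, two classes (0 and 1), two ground truth objects.
preds = [
    ([0.1, 0.9], (0.5, 0.5, 0.2, 0.2)),
    ([0.8, 0.2], (0.2, 0.3, 0.1, 0.1)),
    ([0.5, 0.5], (0.9, 0.9, 0.1, 0.1)),
]
targets = [(0, (0.2, 0.3, 0.1, 0.1)), (1, (0.5, 0.5, 0.2, 0.2))]
assignment = match(preds, targets)  # [1, 0, None]
```

Here the first query is matched to the second annotation, the second query to the first annotation, and the third query to "no object". The classification and box losses are then computed on these matched pairs.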

## Evaluation - Variants

| Variant | Parameters | Size (MB) | Weight Precision | Activation Precision | IoU | Pixel accuracy |
|---|---:|---:|---|---|---:|---:|
| [facebook/detr-resnet-50-panoptic (PyTorch)](https://huggingface.co/facebook/detr-resnet-50-panoptic) | 43M | 172 | Float32 | Float32 | 0.393 | 0.746 |
| [DETRResnet50SemanticSegmentationF32](DETRResnet50SemanticSegmentationF32.mlpackage) | 43M | 171 | Float32 | Float32 | 0.393 | 0.746 |
| [DETRResnet50SemanticSegmentationF16](DETRResnet50SemanticSegmentationF16.mlpackage) | 43M | 86 | Float16 | Float16 | 0.395 | 0.746 |

IoU and pixel accuracy were measured on 512 images from the COCO dataset. The ground truth labels were extracted from the panoptic segmentation annotations and converted to semantic segmentation masks. Input images were resized so that the shorter edge equals 448 pixels, then center-cropped.
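
For reference, the two metrics can be computed from a predicted and a ground truth label mask roughly as follows. This is a sketch, not the evaluation script used for the table: it assumes masks are nested lists of integer class labels and that IoU is averaged over the classes present in either mask, which the text above does not specify.

```python
def _pairs(pred, truth):
    """Flatten two equally shaped 2D masks into (predicted, true) label pairs."""
    return [(p, t) for pred_row, truth_row in zip(pred, truth)
            for p, t in zip(pred_row, truth_row)]


def pixel_accuracy(pred, truth):
    """Fraction of pixels whose predicted label equals the ground truth label."""
    pairs = _pairs(pred, truth)
    return sum(p == t for p, t in pairs) / len(pairs)


def mean_iou(pred, truth):
    """Per-class intersection over union, averaged over classes (an assumption)."""
    pairs = _pairs(pred, truth)
    classes = {label for pair in pairs for label in pair}
    ious = []
    for c in classes:
        intersection = sum(p == c and t == c for p, t in pairs)
        union = sum(p == c or t == c for p, t in pairs)
        ious.append(intersection / union)
    return sum(ious) / len(ious)


# Toy 2x3 masks with two classes; one pixel is mislabeled.
pred_mask = [[0, 0, 1],
             [0, 1, 1]]
true_mask = [[0, 0, 1],
             [0, 0, 1]]
accuracy = pixel_accuracy(pred_mask, true_mask)  # 5 of 6 pixels agree
iou = mean_iou(pred_mask, true_mask)             # mean of 3/4 and 2/3
```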

## Inference time

The following results refer to DETRResnet50SemanticSegmentationF16. The compute units for the MacBook Pro (M1 Max) were manually set to "CPU and Neural Engine".

| Device               | OS version | Inference time (ms) | Dominant compute unit |
| -------------------- | ---------- | ------------------: | --------------------- |
| iPhone 15 Pro Max    | 17.5       |                  40 | Neural Engine         |
| MacBook Pro (M1 Max) | 14.5       |                  43 | Neural Engine         |
| iPhone 12 Pro Max    | 18.0       |                  52 | Neural Engine         |
| MacBook Pro (M3 Max) | 15.0       |                  29 | Neural Engine         |

## Download

Install `huggingface-hub`:

```bash
pip install huggingface-hub
```

To download one of the `.mlpackage` folders to the `models` directory:

```bash
# The --include pattern uses the F16 variant name from the Variants table.
huggingface-cli download \
  --local-dir models --local-dir-use-symlinks False \
  coreml-projects/detr-resnet50-semantic-segmentation \
  --include "DETRResnet50SemanticSegmentationF16.mlpackage/*"
```

To download everything, omit the `--include` argument.
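
The same download can also be scripted from Python with `snapshot_download` from `huggingface_hub`. The sketch below is one possible arrangement: the helper names `download_kwargs` and `download` are hypothetical, and the variant name is taken from the Variants table rather than verified against the repository contents.

```python
REPO_ID = "coreml-projects/detr-resnet50-semantic-segmentation"


def download_kwargs(variant=None, local_dir="models"):
    """Build keyword arguments for huggingface_hub.snapshot_download."""
    kwargs = {"repo_id": REPO_ID, "local_dir": local_dir}
    if variant is not None:
        # Restrict the download to a single .mlpackage folder.
        kwargs["allow_patterns"] = [f"{variant}.mlpackage/*"]
    return kwargs


def download(variant=None, local_dir="models"):
    """Download one variant, or the whole repository when variant is None."""
    # Imported lazily so the helper above works without the package installed.
    from huggingface_hub import snapshot_download

    return snapshot_download(**download_kwargs(variant, local_dir))
```

Calling `download("DETRResnet50SemanticSegmentationF16")` would fetch only the Float16 package, while `download()` would fetch every file in the repository.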