Upload 42 files

Browse files- feature_extractor/preprocessor_config.json +28 -0

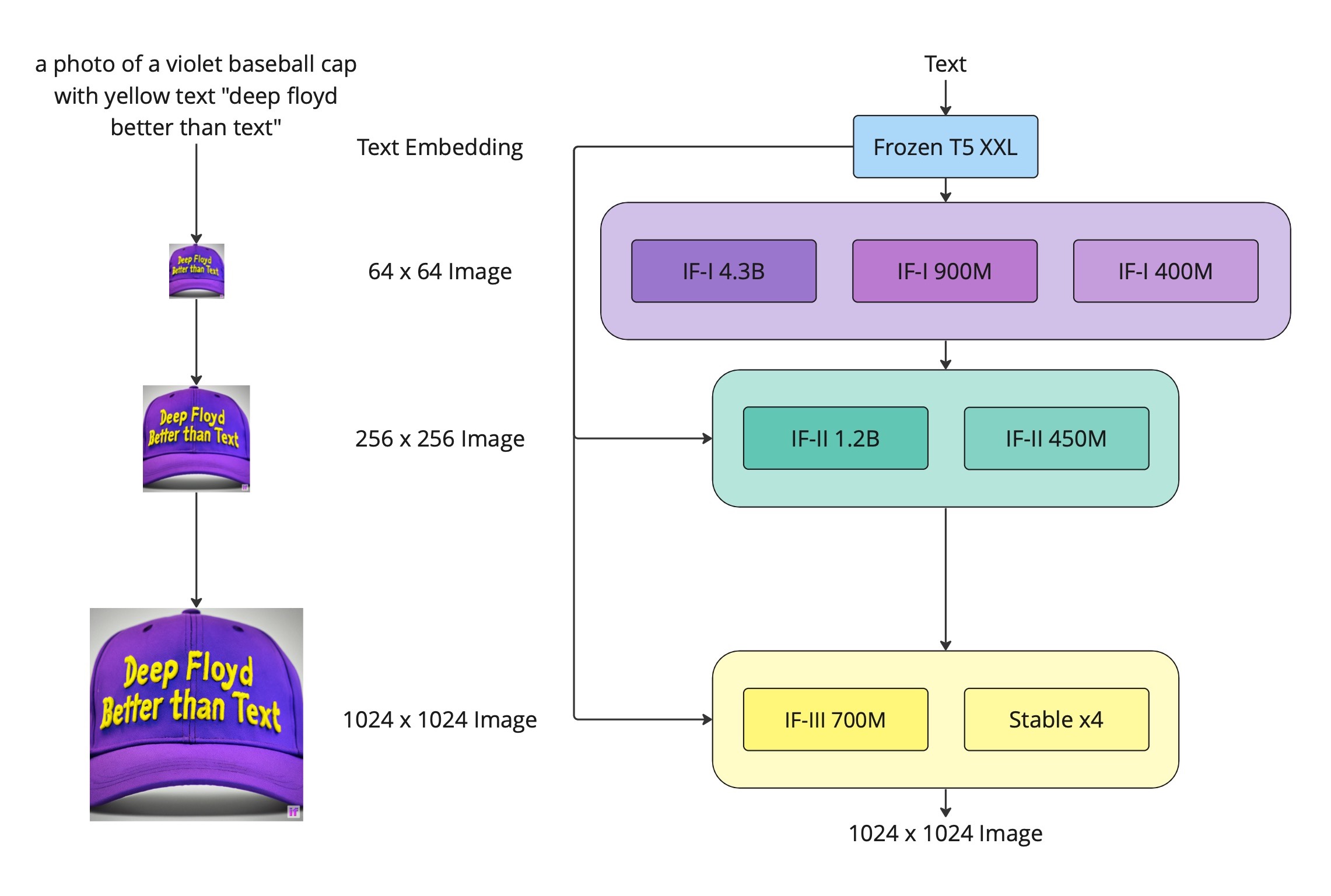

- pics/deepfloyd_if_scheme.jpg +0 -0

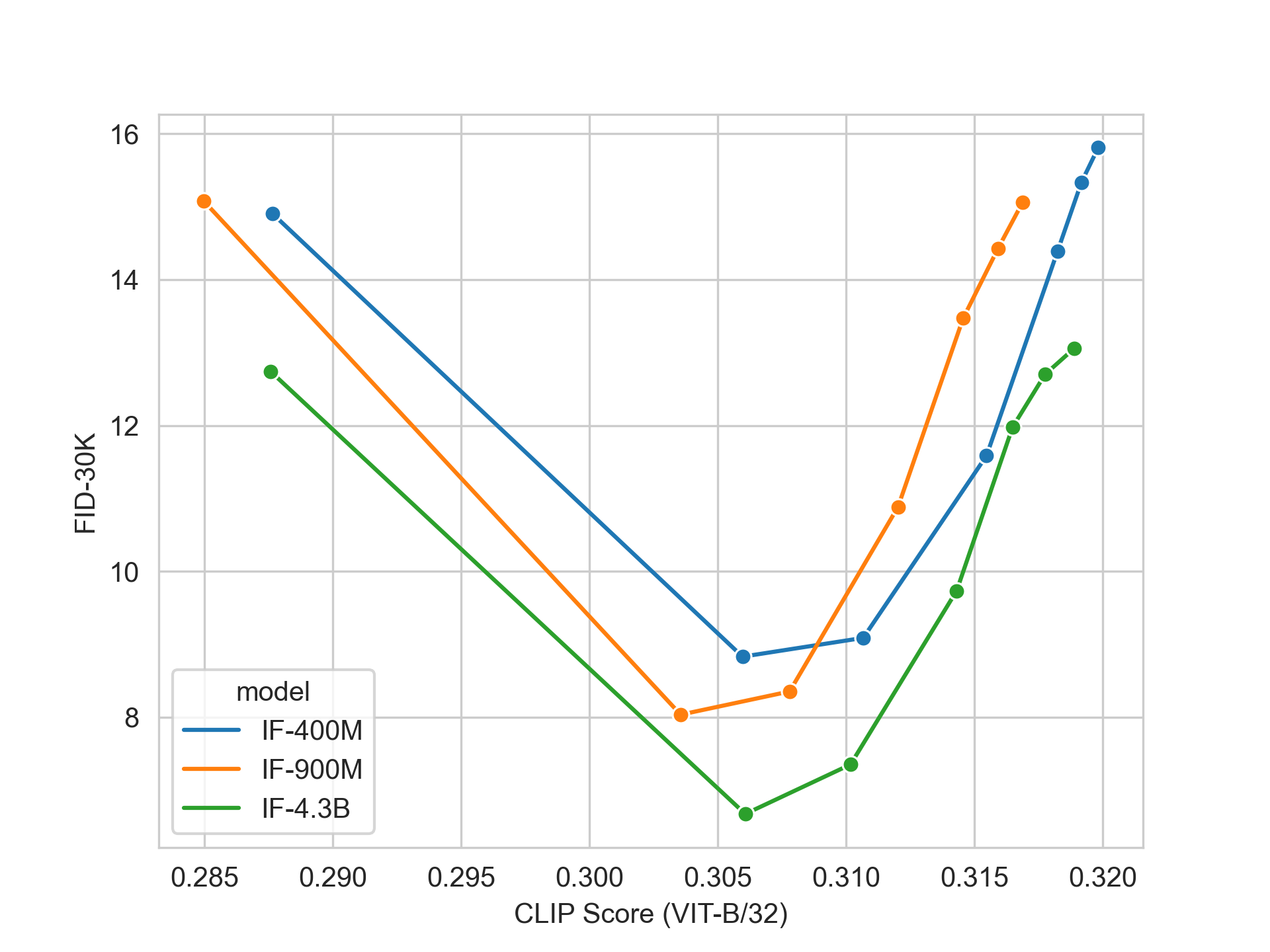

- pics/fid30k_if.jpg +0 -0

- pics/if_architecture.jpg +0 -0

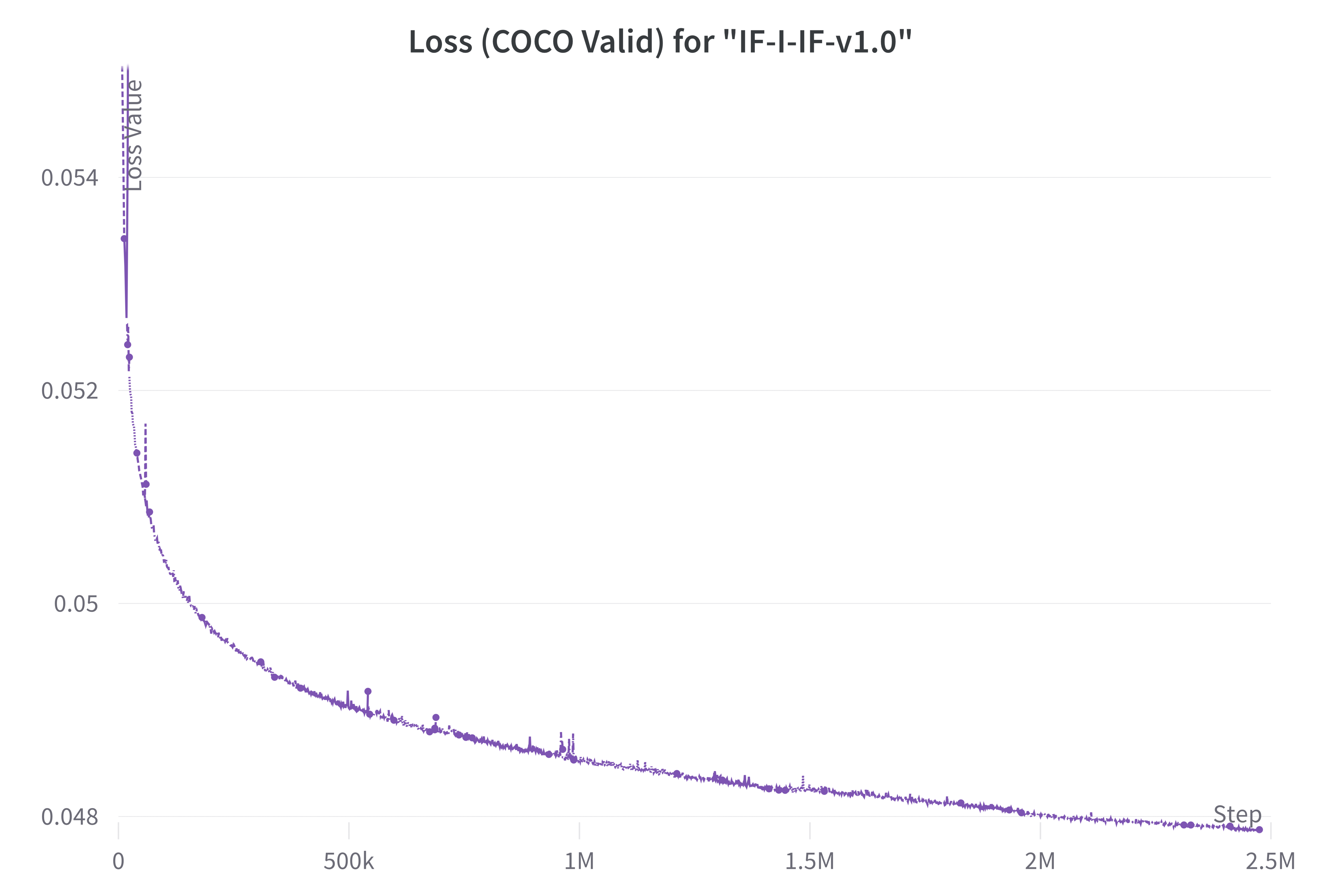

- pics/loss.jpg +0 -0

- pics/lr.jpg +0 -0

- pics/scheme-h.jpg +0 -0

- safety_checker/config.json +168 -0

- safety_checker/model.fp16.safetensors +3 -0

- safety_checker/model.safetensors +3 -0

- safety_checker/pytorch_model.bin +3 -0

- safety_checker/pytorch_model.fp16.bin +3 -0

- scheduler/scheduler_config.json +17 -0

- text_encoder/config.json +31 -0

- text_encoder/model-00001-of-00002.safetensors +3 -0

- text_encoder/model-00002-of-00002.safetensors +3 -0

- text_encoder/model.8bit.safetensors +3 -0

- text_encoder/model.fp16-00001-of-00002.safetensors +3 -0

- text_encoder/model.fp16-00002-of-00002.safetensors +3 -0

- text_encoder/model.safetensors.index.fp16.json +226 -0

- text_encoder/model.safetensors.index.json +226 -0

- text_encoder/pytorch_model-00001-of-00002.bin +3 -0

- text_encoder/pytorch_model-00002-of-00002.bin +3 -0

- text_encoder/pytorch_model.8bit.bin +3 -0

- text_encoder/pytorch_model.bin.index.fp16.json +226 -0

- text_encoder/pytorch_model.bin.index.json +227 -0

- text_encoder/pytorch_model.fp16-00001-of-00002.bin +3 -0

- text_encoder/pytorch_model.fp16-00002-of-00002.bin +3 -0

- text_encoder/special_tokens_map.json +1 -0

- text_encoder/spiece.model +3 -0

- text_encoder/tokenizer_config.json +1 -0

- tokenizer/special_tokens_map.json +107 -0

- tokenizer/spiece.model +3 -0

- tokenizer/tokenizer_config.json +112 -0

- unet/config.json +60 -0

- unet/diffusion_pytorch_model.bin +3 -0

- unet/diffusion_pytorch_model.fp16.bin +3 -0

- unet/diffusion_pytorch_model.fp16.safetensors +3 -0

- unet/diffusion_pytorch_model.safetensors +3 -0

- watermarker/config.json +4 -0

- watermarker/diffusion_pytorch_model.bin +3 -0

- watermarker/diffusion_pytorch_model.safetensors +3 -0

feature_extractor/preprocessor_config.json

ADDED

|

@@ -0,0 +1,28 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"crop_size": {

|

| 3 |

+

"height": 224,

|

| 4 |

+

"width": 224

|

| 5 |

+

},

|

| 6 |

+

"do_center_crop": true,

|

| 7 |

+

"do_convert_rgb": true,

|

| 8 |

+

"do_normalize": true,

|

| 9 |

+

"do_rescale": true,

|

| 10 |

+

"do_resize": true,

|

| 11 |

+

"feature_extractor_type": "CLIPFeatureExtractor",

|

| 12 |

+

"image_mean": [

|

| 13 |

+

0.48145466,

|

| 14 |

+

0.4578275,

|

| 15 |

+

0.40821073

|

| 16 |

+

],

|

| 17 |

+

"image_processor_type": "CLIPImageProcessor",

|

| 18 |

+

"image_std": [

|

| 19 |

+

0.26862954,

|

| 20 |

+

0.26130258,

|

| 21 |

+

0.27577711

|

| 22 |

+

],

|

| 23 |

+

"resample": 3,

|

| 24 |

+

"rescale_factor": 0.00392156862745098,

|

| 25 |

+

"size": {

|

| 26 |

+

"shortest_edge": 224

|

| 27 |

+

}

|

| 28 |

+

}

|

pics/deepfloyd_if_scheme.jpg

ADDED

|

pics/fid30k_if.jpg

ADDED

|

pics/if_architecture.jpg

ADDED

|

pics/loss.jpg

ADDED

|

pics/lr.jpg

ADDED

|

pics/scheme-h.jpg

ADDED

|

safety_checker/config.json

ADDED

|

@@ -0,0 +1,168 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"_commit_hash": null,

|

| 3 |

+

"_name_or_path": ".",

|

| 4 |

+

"architectures": [

|

| 5 |

+

"IFSafetyChecker"

|

| 6 |

+

],

|

| 7 |

+

"initializer_factor": 1.0,

|

| 8 |

+

"logit_scale_init_value": 2.6592,

|

| 9 |

+

"model_type": "clip",

|

| 10 |

+

"projection_dim": 768,

|

| 11 |

+

"text_config": {

|

| 12 |

+

"_name_or_path": "",

|

| 13 |

+

"add_cross_attention": false,

|

| 14 |

+

"architectures": null,

|

| 15 |

+

"attention_dropout": 0.0,

|

| 16 |

+

"bad_words_ids": null,

|

| 17 |

+

"begin_suppress_tokens": null,

|

| 18 |

+

"bos_token_id": 0,

|

| 19 |

+

"chunk_size_feed_forward": 0,

|

| 20 |

+

"cross_attention_hidden_size": null,

|

| 21 |

+

"decoder_start_token_id": null,

|

| 22 |

+

"diversity_penalty": 0.0,

|

| 23 |

+

"do_sample": false,

|

| 24 |

+

"dropout": 0.0,

|

| 25 |

+

"early_stopping": false,

|

| 26 |

+

"encoder_no_repeat_ngram_size": 0,

|

| 27 |

+

"eos_token_id": 2,

|

| 28 |

+

"exponential_decay_length_penalty": null,

|

| 29 |

+

"finetuning_task": null,

|

| 30 |

+

"forced_bos_token_id": null,

|

| 31 |

+

"forced_eos_token_id": null,

|

| 32 |

+

"hidden_act": "quick_gelu",

|

| 33 |

+

"hidden_size": 768,

|

| 34 |

+

"id2label": {

|

| 35 |

+

"0": "LABEL_0",

|

| 36 |

+

"1": "LABEL_1"

|

| 37 |

+

},

|

| 38 |

+

"initializer_factor": 1.0,

|

| 39 |

+

"initializer_range": 0.02,

|

| 40 |

+

"intermediate_size": 3072,

|

| 41 |

+

"is_decoder": false,

|

| 42 |

+

"is_encoder_decoder": false,

|

| 43 |

+

"label2id": {

|

| 44 |

+

"LABEL_0": 0,

|

| 45 |

+

"LABEL_1": 1

|

| 46 |

+

},

|

| 47 |

+

"layer_norm_eps": 1e-05,

|

| 48 |

+

"length_penalty": 1.0,

|

| 49 |

+

"max_length": 20,

|

| 50 |

+

"max_position_embeddings": 77,

|

| 51 |

+

"min_length": 0,

|

| 52 |

+

"model_type": "clip_text_model",

|

| 53 |

+

"no_repeat_ngram_size": 0,

|

| 54 |

+

"num_attention_heads": 12,

|

| 55 |

+

"num_beam_groups": 1,

|

| 56 |

+

"num_beams": 1,

|

| 57 |

+

"num_hidden_layers": 12,

|

| 58 |

+

"num_return_sequences": 1,

|

| 59 |

+

"output_attentions": false,

|

| 60 |

+

"output_hidden_states": false,

|

| 61 |

+

"output_scores": false,

|

| 62 |

+

"pad_token_id": 1,

|

| 63 |

+

"prefix": null,

|

| 64 |

+

"problem_type": null,

|

| 65 |

+

"projection_dim": 768,

|

| 66 |

+

"pruned_heads": {},

|

| 67 |

+

"remove_invalid_values": false,

|

| 68 |

+

"repetition_penalty": 1.0,

|

| 69 |

+

"return_dict": true,

|

| 70 |

+

"return_dict_in_generate": false,

|

| 71 |

+

"sep_token_id": null,

|

| 72 |

+

"suppress_tokens": null,

|

| 73 |

+

"task_specific_params": null,

|

| 74 |

+

"temperature": 1.0,

|

| 75 |

+

"tf_legacy_loss": false,

|

| 76 |

+

"tie_encoder_decoder": false,

|

| 77 |

+

"tie_word_embeddings": true,

|

| 78 |

+

"tokenizer_class": null,

|

| 79 |

+

"top_k": 50,

|

| 80 |

+

"top_p": 1.0,

|

| 81 |

+

"torch_dtype": null,

|

| 82 |

+

"torchscript": false,

|

| 83 |

+

"transformers_version": "4.27.4",

|

| 84 |

+

"typical_p": 1.0,

|

| 85 |

+

"use_bfloat16": false,

|

| 86 |

+

"vocab_size": 49408

|

| 87 |

+

},

|

| 88 |

+

"torch_dtype": "float16",

|

| 89 |

+

"transformers_version": null,

|

| 90 |

+

"vision_config": {

|

| 91 |

+

"_name_or_path": "",

|

| 92 |

+

"add_cross_attention": false,

|

| 93 |

+

"architectures": null,

|

| 94 |

+

"attention_dropout": 0.0,

|

| 95 |

+

"bad_words_ids": null,

|

| 96 |

+

"begin_suppress_tokens": null,

|

| 97 |

+

"bos_token_id": null,

|

| 98 |

+

"chunk_size_feed_forward": 0,

|

| 99 |

+

"cross_attention_hidden_size": null,

|

| 100 |

+

"decoder_start_token_id": null,

|

| 101 |

+

"diversity_penalty": 0.0,

|

| 102 |

+

"do_sample": false,

|

| 103 |

+

"dropout": 0.0,

|

| 104 |

+

"early_stopping": false,

|

| 105 |

+

"encoder_no_repeat_ngram_size": 0,

|

| 106 |

+

"eos_token_id": null,

|

| 107 |

+

"exponential_decay_length_penalty": null,

|

| 108 |

+

"finetuning_task": null,

|

| 109 |

+

"forced_bos_token_id": null,

|

| 110 |

+

"forced_eos_token_id": null,

|

| 111 |

+

"hidden_act": "quick_gelu",

|

| 112 |

+

"hidden_size": 1024,

|

| 113 |

+

"id2label": {

|

| 114 |

+

"0": "LABEL_0",

|

| 115 |

+

"1": "LABEL_1"

|

| 116 |

+

},

|

| 117 |

+

"image_size": 224,

|

| 118 |

+

"initializer_factor": 1.0,

|

| 119 |

+

"initializer_range": 0.02,

|

| 120 |

+

"intermediate_size": 4096,

|

| 121 |

+

"is_decoder": false,

|

| 122 |

+

"is_encoder_decoder": false,

|

| 123 |

+

"label2id": {

|

| 124 |

+

"LABEL_0": 0,

|

| 125 |

+

"LABEL_1": 1

|

| 126 |

+

},

|

| 127 |

+

"layer_norm_eps": 1e-05,

|

| 128 |

+

"length_penalty": 1.0,

|

| 129 |

+

"max_length": 20,

|

| 130 |

+

"min_length": 0,

|

| 131 |

+

"model_type": "clip_vision_model",

|

| 132 |

+

"no_repeat_ngram_size": 0,

|

| 133 |

+

"num_attention_heads": 16,

|

| 134 |

+

"num_beam_groups": 1,

|

| 135 |

+

"num_beams": 1,

|

| 136 |

+

"num_channels": 3,

|

| 137 |

+

"num_hidden_layers": 24,

|

| 138 |

+

"num_return_sequences": 1,

|

| 139 |

+

"output_attentions": false,

|

| 140 |

+

"output_hidden_states": false,

|

| 141 |

+

"output_scores": false,

|

| 142 |

+

"pad_token_id": null,

|

| 143 |

+

"patch_size": 14,

|

| 144 |

+

"prefix": null,

|

| 145 |

+

"problem_type": null,

|

| 146 |

+

"projection_dim": 768,

|

| 147 |

+

"pruned_heads": {},

|

| 148 |

+

"remove_invalid_values": false,

|

| 149 |

+

"repetition_penalty": 1.0,

|

| 150 |

+

"return_dict": true,

|

| 151 |

+

"return_dict_in_generate": false,

|

| 152 |

+

"sep_token_id": null,

|

| 153 |

+

"suppress_tokens": null,

|

| 154 |

+

"task_specific_params": null,

|

| 155 |

+

"temperature": 1.0,

|

| 156 |

+

"tf_legacy_loss": false,

|

| 157 |

+

"tie_encoder_decoder": false,

|

| 158 |

+

"tie_word_embeddings": true,

|

| 159 |

+

"tokenizer_class": null,

|

| 160 |

+

"top_k": 50,

|

| 161 |

+

"top_p": 1.0,

|

| 162 |

+

"torch_dtype": null,

|

| 163 |

+

"torchscript": false,

|

| 164 |

+

"transformers_version": "4.27.4",

|

| 165 |

+

"typical_p": 1.0,

|

| 166 |

+

"use_bfloat16": false

|

| 167 |

+

}

|

| 168 |

+

}

|

safety_checker/model.fp16.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:4c917ec4f9e64a1b45156c7fb81999c0f6e6379ec79cc3a576ad144ffb5bf572

|

| 3 |

+

size 607990743

|

safety_checker/model.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:8ae81b4aeafdbc236594495a5aab48776892b18f996727ba7ad5bd24d6349817

|

| 3 |

+

size 1215926450

|

safety_checker/pytorch_model.bin

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:0a3ad83d248f5eee559ad34ace75f517f6e2560ab42350dcbb8f26fc4519f6e6

|

| 3 |

+

size 1216009409

|

safety_checker/pytorch_model.fp16.bin

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:c637b781b23103fb6dc47d0862bdd717bd81bff84111efc5fe022a285d3b5c6b

|

| 3 |

+

size 608075916

|

scheduler/scheduler_config.json

ADDED

|

@@ -0,0 +1,17 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"_class_name": "DDPMScheduler",

|

| 3 |

+

"_diffusers_version": "0.15.0.dev0",

|

| 4 |

+

"beta_end": 0.02,

|

| 5 |

+

"beta_schedule": "squaredcos_cap_v2",

|

| 6 |

+

"beta_start": 0.0001,

|

| 7 |

+

"clip_sample": true,

|

| 8 |

+

"clip_sample_range": 1.0,

|

| 9 |

+

"dynamic_thresholding_ratio": 0.95,

|

| 10 |

+

"num_train_timesteps": 1000,

|

| 11 |

+

"prediction_type": "epsilon",

|

| 12 |

+

"sample_max_value": 1.5,

|

| 13 |

+

"thresholding": true,

|

| 14 |

+

"trained_betas": null,

|

| 15 |

+

"variance_type": "learned_range",

|

| 16 |

+

"lambda_min_clipped": -5.1

|

| 17 |

+

}

|

text_encoder/config.json

ADDED

|

@@ -0,0 +1,31 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"_name_or_path": "./",

|

| 3 |

+

"architectures": [

|

| 4 |

+

"T5EncoderModel"

|

| 5 |

+

],

|

| 6 |

+

"d_ff": 10240,

|

| 7 |

+

"d_kv": 64,

|

| 8 |

+

"d_model": 4096,

|

| 9 |

+

"decoder_start_token_id": 0,

|

| 10 |

+

"dense_act_fn": "gelu_new",

|

| 11 |

+

"dropout_rate": 0.1,

|

| 12 |

+

"eos_token_id": 1,

|

| 13 |

+

"feed_forward_proj": "gated-gelu",

|

| 14 |

+

"initializer_factor": 1.0,

|

| 15 |

+

"is_encoder_decoder": true,

|

| 16 |

+

"is_gated_act": true,

|

| 17 |

+

"layer_norm_epsilon": 1e-06,

|

| 18 |

+

"model_type": "t5",

|

| 19 |

+

"num_decoder_layers": 24,

|

| 20 |

+

"num_heads": 64,

|

| 21 |

+

"num_layers": 24,

|

| 22 |

+

"output_past": true,

|

| 23 |

+

"pad_token_id": 0,

|

| 24 |

+

"relative_attention_max_distance": 128,

|

| 25 |

+

"relative_attention_num_buckets": 32,

|

| 26 |

+

"tie_word_embeddings": false,

|

| 27 |

+

"torch_dtype": "float16",

|

| 28 |

+

"transformers_version": "4.28.0.dev0",

|

| 29 |

+

"use_cache": true,

|

| 30 |

+

"vocab_size": 32128

|

| 31 |

+

}

|

text_encoder/model-00001-of-00002.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:5dfaaab934ff0359d88bda7e732f95e810ab711cf9df86d97856580564a6d3bf

|

| 3 |

+

size 9989150322

|

text_encoder/model-00002-of-00002.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:41d72c7ea3f3a0026f529f71af16b7e562f1266d5e1dbc09fa3c6413c9a7122e

|

| 3 |

+

size 9060119390

|

text_encoder/model.8bit.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:6a0b8c00b2e7a168f69a77b16c46240ce76f86e473aaebba2cfb1dd55bbc5303

|

| 3 |

+

size 7917591394

|

text_encoder/model.fp16-00001-of-00002.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:82c990c09b55affae9b36b51d4d7ba071dea3b467c71c3cc88e0d77735bb3b59

|

| 3 |

+

size 9960786792

|

text_encoder/model.fp16-00002-of-00002.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:42818a228ad7133141e6b1319faaa7fdc591c7b02873da3622a4f482cd266f9c

|

| 3 |

+

size 1577127548

|

text_encoder/model.safetensors.index.fp16.json

ADDED

|

@@ -0,0 +1,226 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"metadata": {

|

| 3 |

+

"total_size": 11537887232

|

| 4 |

+

},

|

| 5 |

+

"weight_map": {

|

| 6 |

+

"encoder.block.0.layer.0.SelfAttention.k.weight": "model.fp16-00001-of-00002.safetensors",

|

| 7 |

+

"encoder.block.0.layer.0.SelfAttention.o.weight": "model.fp16-00001-of-00002.safetensors",

|

| 8 |

+

"encoder.block.0.layer.0.SelfAttention.q.weight": "model.fp16-00001-of-00002.safetensors",

|

| 9 |

+

"encoder.block.0.layer.0.SelfAttention.relative_attention_bias.weight": "model.fp16-00001-of-00002.safetensors",

|

| 10 |

+

"encoder.block.0.layer.0.SelfAttention.v.weight": "model.fp16-00001-of-00002.safetensors",

|

| 11 |

+

"encoder.block.0.layer.0.layer_norm.weight": "model.fp16-00001-of-00002.safetensors",

|

| 12 |

+

"encoder.block.0.layer.1.DenseReluDense.wi_0.weight": "model.fp16-00001-of-00002.safetensors",

|

| 13 |

+

"encoder.block.0.layer.1.DenseReluDense.wi_1.weight": "model.fp16-00001-of-00002.safetensors",

|

| 14 |

+

"encoder.block.0.layer.1.DenseReluDense.wo.weight": "model.fp16-00001-of-00002.safetensors",

|

| 15 |

+

"encoder.block.0.layer.1.layer_norm.weight": "model.fp16-00001-of-00002.safetensors",

|

| 16 |

+

"encoder.block.1.layer.0.SelfAttention.k.weight": "model.fp16-00001-of-00002.safetensors",

|

| 17 |

+

"encoder.block.1.layer.0.SelfAttention.o.weight": "model.fp16-00001-of-00002.safetensors",

|

| 18 |

+

"encoder.block.1.layer.0.SelfAttention.q.weight": "model.fp16-00001-of-00002.safetensors",

|

| 19 |

+

"encoder.block.1.layer.0.SelfAttention.v.weight": "model.fp16-00001-of-00002.safetensors",

|

| 20 |

+

"encoder.block.1.layer.0.layer_norm.weight": "model.fp16-00001-of-00002.safetensors",

|

| 21 |

+

"encoder.block.1.layer.1.DenseReluDense.wi_0.weight": "model.fp16-00001-of-00002.safetensors",

|

| 22 |

+

"encoder.block.1.layer.1.DenseReluDense.wi_1.weight": "model.fp16-00001-of-00002.safetensors",

|

| 23 |

+

"encoder.block.1.layer.1.DenseReluDense.wo.weight": "model.fp16-00001-of-00002.safetensors",

|

| 24 |

+

"encoder.block.1.layer.1.layer_norm.weight": "model.fp16-00001-of-00002.safetensors",

|

| 25 |

+

"encoder.block.10.layer.0.SelfAttention.k.weight": "model.fp16-00001-of-00002.safetensors",

|

| 26 |

+

"encoder.block.10.layer.0.SelfAttention.o.weight": "model.fp16-00001-of-00002.safetensors",

|

| 27 |

+

"encoder.block.10.layer.0.SelfAttention.q.weight": "model.fp16-00001-of-00002.safetensors",

|

| 28 |

+

"encoder.block.10.layer.0.SelfAttention.v.weight": "model.fp16-00001-of-00002.safetensors",

|

| 29 |

+

"encoder.block.10.layer.0.layer_norm.weight": "model.fp16-00001-of-00002.safetensors",

|

| 30 |

+

"encoder.block.10.layer.1.DenseReluDense.wi_0.weight": "model.fp16-00001-of-00002.safetensors",

|

| 31 |

+

"encoder.block.10.layer.1.DenseReluDense.wi_1.weight": "model.fp16-00001-of-00002.safetensors",

|

| 32 |

+

"encoder.block.10.layer.1.DenseReluDense.wo.weight": "model.fp16-00001-of-00002.safetensors",

|

| 33 |

+

"encoder.block.10.layer.1.layer_norm.weight": "model.fp16-00001-of-00002.safetensors",

|

| 34 |

+

"encoder.block.11.layer.0.SelfAttention.k.weight": "model.fp16-00001-of-00002.safetensors",

|

| 35 |

+

"encoder.block.11.layer.0.SelfAttention.o.weight": "model.fp16-00001-of-00002.safetensors",

|

| 36 |

+

"encoder.block.11.layer.0.SelfAttention.q.weight": "model.fp16-00001-of-00002.safetensors",

|

| 37 |

+

"encoder.block.11.layer.0.SelfAttention.v.weight": "model.fp16-00001-of-00002.safetensors",

|

| 38 |

+

"encoder.block.11.layer.0.layer_norm.weight": "model.fp16-00001-of-00002.safetensors",

|

| 39 |

+

"encoder.block.11.layer.1.DenseReluDense.wi_0.weight": "model.fp16-00001-of-00002.safetensors",

|

| 40 |

+

"encoder.block.11.layer.1.DenseReluDense.wi_1.weight": "model.fp16-00001-of-00002.safetensors",

|

| 41 |

+

"encoder.block.11.layer.1.DenseReluDense.wo.weight": "model.fp16-00001-of-00002.safetensors",

|

| 42 |

+

"encoder.block.11.layer.1.layer_norm.weight": "model.fp16-00001-of-00002.safetensors",

|

| 43 |

+

"encoder.block.12.layer.0.SelfAttention.k.weight": "model.fp16-00001-of-00002.safetensors",

|

| 44 |

+

"encoder.block.12.layer.0.SelfAttention.o.weight": "model.fp16-00001-of-00002.safetensors",

|

| 45 |

+

"encoder.block.12.layer.0.SelfAttention.q.weight": "model.fp16-00001-of-00002.safetensors",

|

| 46 |

+

"encoder.block.12.layer.0.SelfAttention.v.weight": "model.fp16-00001-of-00002.safetensors",

|

| 47 |

+

"encoder.block.12.layer.0.layer_norm.weight": "model.fp16-00001-of-00002.safetensors",

|

| 48 |

+

"encoder.block.12.layer.1.DenseReluDense.wi_0.weight": "model.fp16-00001-of-00002.safetensors",

|

| 49 |

+

"encoder.block.12.layer.1.DenseReluDense.wi_1.weight": "model.fp16-00001-of-00002.safetensors",

|

| 50 |

+

"encoder.block.12.layer.1.DenseReluDense.wo.weight": "model.fp16-00001-of-00002.safetensors",

|

| 51 |

+

"encoder.block.12.layer.1.layer_norm.weight": "model.fp16-00001-of-00002.safetensors",

|

| 52 |

+

"encoder.block.13.layer.0.SelfAttention.k.weight": "model.fp16-00001-of-00002.safetensors",

|

| 53 |

+

"encoder.block.13.layer.0.SelfAttention.o.weight": "model.fp16-00001-of-00002.safetensors",

|

| 54 |

+

"encoder.block.13.layer.0.SelfAttention.q.weight": "model.fp16-00001-of-00002.safetensors",

|

| 55 |

+

"encoder.block.13.layer.0.SelfAttention.v.weight": "model.fp16-00001-of-00002.safetensors",

|

| 56 |

+

"encoder.block.13.layer.0.layer_norm.weight": "model.fp16-00001-of-00002.safetensors",

|

| 57 |

+

"encoder.block.13.layer.1.DenseReluDense.wi_0.weight": "model.fp16-00001-of-00002.safetensors",

|

| 58 |

+

"encoder.block.13.layer.1.DenseReluDense.wi_1.weight": "model.fp16-00001-of-00002.safetensors",

|

| 59 |

+

"encoder.block.13.layer.1.DenseReluDense.wo.weight": "model.fp16-00001-of-00002.safetensors",

|

| 60 |

+

"encoder.block.13.layer.1.layer_norm.weight": "model.fp16-00001-of-00002.safetensors",

|

| 61 |

+

"encoder.block.14.layer.0.SelfAttention.k.weight": "model.fp16-00001-of-00002.safetensors",

|

| 62 |

+

"encoder.block.14.layer.0.SelfAttention.o.weight": "model.fp16-00001-of-00002.safetensors",

|

| 63 |

+

"encoder.block.14.layer.0.SelfAttention.q.weight": "model.fp16-00001-of-00002.safetensors",

|

| 64 |

+

"encoder.block.14.layer.0.SelfAttention.v.weight": "model.fp16-00001-of-00002.safetensors",

|

| 65 |

+

"encoder.block.14.layer.0.layer_norm.weight": "model.fp16-00001-of-00002.safetensors",

|

| 66 |

+

"encoder.block.14.layer.1.DenseReluDense.wi_0.weight": "model.fp16-00001-of-00002.safetensors",

|

| 67 |

+

"encoder.block.14.layer.1.DenseReluDense.wi_1.weight": "model.fp16-00001-of-00002.safetensors",

|

| 68 |

+

"encoder.block.14.layer.1.DenseReluDense.wo.weight": "model.fp16-00001-of-00002.safetensors",

|

| 69 |

+

"encoder.block.14.layer.1.layer_norm.weight": "model.fp16-00001-of-00002.safetensors",

|

| 70 |

+

"encoder.block.15.layer.0.SelfAttention.k.weight": "model.fp16-00001-of-00002.safetensors",

|

| 71 |

+

"encoder.block.15.layer.0.SelfAttention.o.weight": "model.fp16-00001-of-00002.safetensors",

|

| 72 |

+

"encoder.block.15.layer.0.SelfAttention.q.weight": "model.fp16-00001-of-00002.safetensors",

|

| 73 |

+

"encoder.block.15.layer.0.SelfAttention.v.weight": "model.fp16-00001-of-00002.safetensors",

|

| 74 |

+

"encoder.block.15.layer.0.layer_norm.weight": "model.fp16-00001-of-00002.safetensors",

|

| 75 |

+

"encoder.block.15.layer.1.DenseReluDense.wi_0.weight": "model.fp16-00001-of-00002.safetensors",

|

| 76 |

+

"encoder.block.15.layer.1.DenseReluDense.wi_1.weight": "model.fp16-00001-of-00002.safetensors",

|

| 77 |

+

"encoder.block.15.layer.1.DenseReluDense.wo.weight": "model.fp16-00001-of-00002.safetensors",

|

| 78 |

+

"encoder.block.15.layer.1.layer_norm.weight": "model.fp16-00001-of-00002.safetensors",

|

| 79 |

+

"encoder.block.16.layer.0.SelfAttention.k.weight": "model.fp16-00001-of-00002.safetensors",

|

| 80 |

+

"encoder.block.16.layer.0.SelfAttention.o.weight": "model.fp16-00001-of-00002.safetensors",

|

| 81 |

+

"encoder.block.16.layer.0.SelfAttention.q.weight": "model.fp16-00001-of-00002.safetensors",

|

| 82 |

+

"encoder.block.16.layer.0.SelfAttention.v.weight": "model.fp16-00001-of-00002.safetensors",

|

| 83 |

+

"encoder.block.16.layer.0.layer_norm.weight": "model.fp16-00001-of-00002.safetensors",

|

| 84 |

+

"encoder.block.16.layer.1.DenseReluDense.wi_0.weight": "model.fp16-00001-of-00002.safetensors",

|

| 85 |

+

"encoder.block.16.layer.1.DenseReluDense.wi_1.weight": "model.fp16-00001-of-00002.safetensors",

|

| 86 |

+

"encoder.block.16.layer.1.DenseReluDense.wo.weight": "model.fp16-00001-of-00002.safetensors",

|

| 87 |

+

"encoder.block.16.layer.1.layer_norm.weight": "model.fp16-00001-of-00002.safetensors",

|

| 88 |

+

"encoder.block.17.layer.0.SelfAttention.k.weight": "model.fp16-00001-of-00002.safetensors",

|

| 89 |

+

"encoder.block.17.layer.0.SelfAttention.o.weight": "model.fp16-00001-of-00002.safetensors",

|

| 90 |

+

"encoder.block.17.layer.0.SelfAttention.q.weight": "model.fp16-00001-of-00002.safetensors",

|

| 91 |

+

"encoder.block.17.layer.0.SelfAttention.v.weight": "model.fp16-00001-of-00002.safetensors",

|

| 92 |

+

"encoder.block.17.layer.0.layer_norm.weight": "model.fp16-00001-of-00002.safetensors",

|

| 93 |

+

"encoder.block.17.layer.1.DenseReluDense.wi_0.weight": "model.fp16-00001-of-00002.safetensors",

|

| 94 |

+

"encoder.block.17.layer.1.DenseReluDense.wi_1.weight": "model.fp16-00001-of-00002.safetensors",

|

| 95 |

+

"encoder.block.17.layer.1.DenseReluDense.wo.weight": "model.fp16-00001-of-00002.safetensors",

|

| 96 |

+

"encoder.block.17.layer.1.layer_norm.weight": "model.fp16-00001-of-00002.safetensors",

|

| 97 |

+

"encoder.block.18.layer.0.SelfAttention.k.weight": "model.fp16-00001-of-00002.safetensors",

|

| 98 |

+

"encoder.block.18.layer.0.SelfAttention.o.weight": "model.fp16-00001-of-00002.safetensors",

|

| 99 |

+

"encoder.block.18.layer.0.SelfAttention.q.weight": "model.fp16-00001-of-00002.safetensors",

|

| 100 |

+

"encoder.block.18.layer.0.SelfAttention.v.weight": "model.fp16-00001-of-00002.safetensors",

|

| 101 |

+

"encoder.block.18.layer.0.layer_norm.weight": "model.fp16-00001-of-00002.safetensors",

|

| 102 |

+

"encoder.block.18.layer.1.DenseReluDense.wi_0.weight": "model.fp16-00001-of-00002.safetensors",

|

| 103 |

+

"encoder.block.18.layer.1.DenseReluDense.wi_1.weight": "model.fp16-00001-of-00002.safetensors",

|

| 104 |

+

"encoder.block.18.layer.1.DenseReluDense.wo.weight": "model.fp16-00001-of-00002.safetensors",

|

| 105 |

+

"encoder.block.18.layer.1.layer_norm.weight": "model.fp16-00001-of-00002.safetensors",

|

| 106 |

+

"encoder.block.19.layer.0.SelfAttention.k.weight": "model.fp16-00001-of-00002.safetensors",

|

| 107 |

+

"encoder.block.19.layer.0.SelfAttention.o.weight": "model.fp16-00001-of-00002.safetensors",

|

| 108 |

+

"encoder.block.19.layer.0.SelfAttention.q.weight": "model.fp16-00001-of-00002.safetensors",

|

| 109 |

+

"encoder.block.19.layer.0.SelfAttention.v.weight": "model.fp16-00001-of-00002.safetensors",

|

| 110 |

+

"encoder.block.19.layer.0.layer_norm.weight": "model.fp16-00001-of-00002.safetensors",

|

| 111 |

+

"encoder.block.19.layer.1.DenseReluDense.wi_0.weight": "model.fp16-00001-of-00002.safetensors",

|

| 112 |

+

"encoder.block.19.layer.1.DenseReluDense.wi_1.weight": "model.fp16-00001-of-00002.safetensors",

|

| 113 |

+

"encoder.block.19.layer.1.DenseReluDense.wo.weight": "model.fp16-00001-of-00002.safetensors",

|

| 114 |

+

"encoder.block.19.layer.1.layer_norm.weight": "model.fp16-00001-of-00002.safetensors",

|

| 115 |

+

"encoder.block.2.layer.0.SelfAttention.k.weight": "model.fp16-00001-of-00002.safetensors",

|

| 116 |

+

"encoder.block.2.layer.0.SelfAttention.o.weight": "model.fp16-00001-of-00002.safetensors",

|

| 117 |

+

"encoder.block.2.layer.0.SelfAttention.q.weight": "model.fp16-00001-of-00002.safetensors",

|

| 118 |

+

"encoder.block.2.layer.0.SelfAttention.v.weight": "model.fp16-00001-of-00002.safetensors",

|

| 119 |

+

"encoder.block.2.layer.0.layer_norm.weight": "model.fp16-00001-of-00002.safetensors",

|

| 120 |

+

"encoder.block.2.layer.1.DenseReluDense.wi_0.weight": "model.fp16-00001-of-00002.safetensors",

|

| 121 |

+

"encoder.block.2.layer.1.DenseReluDense.wi_1.weight": "model.fp16-00001-of-00002.safetensors",

|

| 122 |

+

"encoder.block.2.layer.1.DenseReluDense.wo.weight": "model.fp16-00001-of-00002.safetensors",

|

| 123 |

+

"encoder.block.2.layer.1.layer_norm.weight": "model.fp16-00001-of-00002.safetensors",

|

| 124 |

+

"encoder.block.20.layer.0.SelfAttention.k.weight": "model.fp16-00001-of-00002.safetensors",

|

| 125 |

+

"encoder.block.20.layer.0.SelfAttention.o.weight": "model.fp16-00001-of-00002.safetensors",

|

| 126 |

+

"encoder.block.20.layer.0.SelfAttention.q.weight": "model.fp16-00001-of-00002.safetensors",

|

| 127 |

+

"encoder.block.20.layer.0.SelfAttention.v.weight": "model.fp16-00001-of-00002.safetensors",

|

| 128 |

+

"encoder.block.20.layer.0.layer_norm.weight": "model.fp16-00001-of-00002.safetensors",

|

| 129 |

+

"encoder.block.20.layer.1.DenseReluDense.wi_0.weight": "model.fp16-00001-of-00002.safetensors",

|

| 130 |

+

"encoder.block.20.layer.1.DenseReluDense.wi_1.weight": "model.fp16-00001-of-00002.safetensors",

|

| 131 |

+

"encoder.block.20.layer.1.DenseReluDense.wo.weight": "model.fp16-00002-of-00002.safetensors",

|

| 132 |

+

"encoder.block.20.layer.1.layer_norm.weight": "model.fp16-00002-of-00002.safetensors",

|

| 133 |

+

"encoder.block.21.layer.0.SelfAttention.k.weight": "model.fp16-00002-of-00002.safetensors",

|

| 134 |

+

"encoder.block.21.layer.0.SelfAttention.o.weight": "model.fp16-00002-of-00002.safetensors",

|

| 135 |

+

"encoder.block.21.layer.0.SelfAttention.q.weight": "model.fp16-00002-of-00002.safetensors",

|

| 136 |

+

"encoder.block.21.layer.0.SelfAttention.v.weight": "model.fp16-00002-of-00002.safetensors",

|

| 137 |

+

"encoder.block.21.layer.0.layer_norm.weight": "model.fp16-00002-of-00002.safetensors",

|

| 138 |

+

"encoder.block.21.layer.1.DenseReluDense.wi_0.weight": "model.fp16-00002-of-00002.safetensors",

|

| 139 |

+

"encoder.block.21.layer.1.DenseReluDense.wi_1.weight": "model.fp16-00002-of-00002.safetensors",

|

| 140 |

+

"encoder.block.21.layer.1.DenseReluDense.wo.weight": "model.fp16-00002-of-00002.safetensors",

|

| 141 |

+

"encoder.block.21.layer.1.layer_norm.weight": "model.fp16-00002-of-00002.safetensors",

|

| 142 |

+

"encoder.block.22.layer.0.SelfAttention.k.weight": "model.fp16-00002-of-00002.safetensors",

|

| 143 |

+

"encoder.block.22.layer.0.SelfAttention.o.weight": "model.fp16-00002-of-00002.safetensors",

|

| 144 |

+

"encoder.block.22.layer.0.SelfAttention.q.weight": "model.fp16-00002-of-00002.safetensors",

|

| 145 |

+

"encoder.block.22.layer.0.SelfAttention.v.weight": "model.fp16-00002-of-00002.safetensors",

|

| 146 |

+

"encoder.block.22.layer.0.layer_norm.weight": "model.fp16-00002-of-00002.safetensors",

|

| 147 |

+

"encoder.block.22.layer.1.DenseReluDense.wi_0.weight": "model.fp16-00002-of-00002.safetensors",

|

| 148 |

+

"encoder.block.22.layer.1.DenseReluDense.wi_1.weight": "model.fp16-00002-of-00002.safetensors",

|

| 149 |

+

"encoder.block.22.layer.1.DenseReluDense.wo.weight": "model.fp16-00002-of-00002.safetensors",

|

| 150 |

+

"encoder.block.22.layer.1.layer_norm.weight": "model.fp16-00002-of-00002.safetensors",

|

| 151 |

+

"encoder.block.23.layer.0.SelfAttention.k.weight": "model.fp16-00002-of-00002.safetensors",

|

| 152 |

+

"encoder.block.23.layer.0.SelfAttention.o.weight": "model.fp16-00002-of-00002.safetensors",

|

| 153 |

+

"encoder.block.23.layer.0.SelfAttention.q.weight": "model.fp16-00002-of-00002.safetensors",

|

| 154 |

+

"encoder.block.23.layer.0.SelfAttention.v.weight": "model.fp16-00002-of-00002.safetensors",

|

| 155 |

+

"encoder.block.23.layer.0.layer_norm.weight": "model.fp16-00002-of-00002.safetensors",

|

| 156 |

+

"encoder.block.23.layer.1.DenseReluDense.wi_0.weight": "model.fp16-00002-of-00002.safetensors",

|

| 157 |

+

"encoder.block.23.layer.1.DenseReluDense.wi_1.weight": "model.fp16-00002-of-00002.safetensors",

|

| 158 |

+

"encoder.block.23.layer.1.DenseReluDense.wo.weight": "model.fp16-00002-of-00002.safetensors",

|

| 159 |

+

"encoder.block.23.layer.1.layer_norm.weight": "model.fp16-00002-of-00002.safetensors",

|

| 160 |

+

"encoder.block.3.layer.0.SelfAttention.k.weight": "model.fp16-00001-of-00002.safetensors",

|

| 161 |

+

"encoder.block.3.layer.0.SelfAttention.o.weight": "model.fp16-00001-of-00002.safetensors",

|

| 162 |

+

"encoder.block.3.layer.0.SelfAttention.q.weight": "model.fp16-00001-of-00002.safetensors",

|

| 163 |

+

"encoder.block.3.layer.0.SelfAttention.v.weight": "model.fp16-00001-of-00002.safetensors",

|

| 164 |

+

"encoder.block.3.layer.0.layer_norm.weight": "model.fp16-00001-of-00002.safetensors",

|

| 165 |

+

"encoder.block.3.layer.1.DenseReluDense.wi_0.weight": "model.fp16-00001-of-00002.safetensors",

|

| 166 |

+

"encoder.block.3.layer.1.DenseReluDense.wi_1.weight": "model.fp16-00001-of-00002.safetensors",

|

| 167 |

+

"encoder.block.3.layer.1.DenseReluDense.wo.weight": "model.fp16-00001-of-00002.safetensors",

|

| 168 |

+

"encoder.block.3.layer.1.layer_norm.weight": "model.fp16-00001-of-00002.safetensors",

|

| 169 |

+

"encoder.block.4.layer.0.SelfAttention.k.weight": "model.fp16-00001-of-00002.safetensors",

|

| 170 |

+

"encoder.block.4.layer.0.SelfAttention.o.weight": "model.fp16-00001-of-00002.safetensors",

|

| 171 |

+

"encoder.block.4.layer.0.SelfAttention.q.weight": "model.fp16-00001-of-00002.safetensors",

|

| 172 |

+

"encoder.block.4.layer.0.SelfAttention.v.weight": "model.fp16-00001-of-00002.safetensors",

|

| 173 |

+

"encoder.block.4.layer.0.layer_norm.weight": "model.fp16-00001-of-00002.safetensors",

|

| 174 |

+

"encoder.block.4.layer.1.DenseReluDense.wi_0.weight": "model.fp16-00001-of-00002.safetensors",

|

| 175 |

+

"encoder.block.4.layer.1.DenseReluDense.wi_1.weight": "model.fp16-00001-of-00002.safetensors",

|

| 176 |

+

"encoder.block.4.layer.1.DenseReluDense.wo.weight": "model.fp16-00001-of-00002.safetensors",

|

| 177 |

+

"encoder.block.4.layer.1.layer_norm.weight": "model.fp16-00001-of-00002.safetensors",

|

| 178 |

+

"encoder.block.5.layer.0.SelfAttention.k.weight": "model.fp16-00001-of-00002.safetensors",

|

| 179 |

+

"encoder.block.5.layer.0.SelfAttention.o.weight": "model.fp16-00001-of-00002.safetensors",

|

| 180 |

+

"encoder.block.5.layer.0.SelfAttention.q.weight": "model.fp16-00001-of-00002.safetensors",

|

| 181 |

+

"encoder.block.5.layer.0.SelfAttention.v.weight": "model.fp16-00001-of-00002.safetensors",

|

| 182 |

+

"encoder.block.5.layer.0.layer_norm.weight": "model.fp16-00001-of-00002.safetensors",

|

| 183 |

+

"encoder.block.5.layer.1.DenseReluDense.wi_0.weight": "model.fp16-00001-of-00002.safetensors",

|

| 184 |

+

"encoder.block.5.layer.1.DenseReluDense.wi_1.weight": "model.fp16-00001-of-00002.safetensors",

|

| 185 |

+

"encoder.block.5.layer.1.DenseReluDense.wo.weight": "model.fp16-00001-of-00002.safetensors",

|

| 186 |

+

"encoder.block.5.layer.1.layer_norm.weight": "model.fp16-00001-of-00002.safetensors",

|

| 187 |

+

"encoder.block.6.layer.0.SelfAttention.k.weight": "model.fp16-00001-of-00002.safetensors",

|

| 188 |

+

"encoder.block.6.layer.0.SelfAttention.o.weight": "model.fp16-00001-of-00002.safetensors",

|

| 189 |

+

"encoder.block.6.layer.0.SelfAttention.q.weight": "model.fp16-00001-of-00002.safetensors",

|

| 190 |

+

"encoder.block.6.layer.0.SelfAttention.v.weight": "model.fp16-00001-of-00002.safetensors",

|

| 191 |

+

"encoder.block.6.layer.0.layer_norm.weight": "model.fp16-00001-of-00002.safetensors",

|

| 192 |

+

"encoder.block.6.layer.1.DenseReluDense.wi_0.weight": "model.fp16-00001-of-00002.safetensors",

|

| 193 |

+

"encoder.block.6.layer.1.DenseReluDense.wi_1.weight": "model.fp16-00001-of-00002.safetensors",

|

| 194 |

+

"encoder.block.6.layer.1.DenseReluDense.wo.weight": "model.fp16-00001-of-00002.safetensors",

|

| 195 |

+

"encoder.block.6.layer.1.layer_norm.weight": "model.fp16-00001-of-00002.safetensors",

|

| 196 |

+

"encoder.block.7.layer.0.SelfAttention.k.weight": "model.fp16-00001-of-00002.safetensors",

|

| 197 |

+

"encoder.block.7.layer.0.SelfAttention.o.weight": "model.fp16-00001-of-00002.safetensors",

|

| 198 |

+

"encoder.block.7.layer.0.SelfAttention.q.weight": "model.fp16-00001-of-00002.safetensors",

|

| 199 |

+

"encoder.block.7.layer.0.SelfAttention.v.weight": "model.fp16-00001-of-00002.safetensors",

|

| 200 |

+

"encoder.block.7.layer.0.layer_norm.weight": "model.fp16-00001-of-00002.safetensors",

|

| 201 |

+

"encoder.block.7.layer.1.DenseReluDense.wi_0.weight": "model.fp16-00001-of-00002.safetensors",

|

| 202 |

+

"encoder.block.7.layer.1.DenseReluDense.wi_1.weight": "model.fp16-00001-of-00002.safetensors",

|

| 203 |

+

"encoder.block.7.layer.1.DenseReluDense.wo.weight": "model.fp16-00001-of-00002.safetensors",

|

| 204 |

+

"encoder.block.7.layer.1.layer_norm.weight": "model.fp16-00001-of-00002.safetensors",

|

| 205 |

+

"encoder.block.8.layer.0.SelfAttention.k.weight": "model.fp16-00001-of-00002.safetensors",

|

| 206 |

+

"encoder.block.8.layer.0.SelfAttention.o.weight": "model.fp16-00001-of-00002.safetensors",

|

| 207 |

+

"encoder.block.8.layer.0.SelfAttention.q.weight": "model.fp16-00001-of-00002.safetensors",

|

| 208 |

+

"encoder.block.8.layer.0.SelfAttention.v.weight": "model.fp16-00001-of-00002.safetensors",

|

| 209 |

+

"encoder.block.8.layer.0.layer_norm.weight": "model.fp16-00001-of-00002.safetensors",

|

| 210 |

+

"encoder.block.8.layer.1.DenseReluDense.wi_0.weight": "model.fp16-00001-of-00002.safetensors",

|

| 211 |

+

"encoder.block.8.layer.1.DenseReluDense.wi_1.weight": "model.fp16-00001-of-00002.safetensors",

|

| 212 |

+

"encoder.block.8.layer.1.DenseReluDense.wo.weight": "model.fp16-00001-of-00002.safetensors",

|

| 213 |

+

"encoder.block.8.layer.1.layer_norm.weight": "model.fp16-00001-of-00002.safetensors",

|

| 214 |

+

"encoder.block.9.layer.0.SelfAttention.k.weight": "model.fp16-00001-of-00002.safetensors",

|

| 215 |

+

"encoder.block.9.layer.0.SelfAttention.o.weight": "model.fp16-00001-of-00002.safetensors",

|

| 216 |

+

"encoder.block.9.layer.0.SelfAttention.q.weight": "model.fp16-00001-of-00002.safetensors",

|

| 217 |

+

"encoder.block.9.layer.0.SelfAttention.v.weight": "model.fp16-00001-of-00002.safetensors",

|

| 218 |

+

"encoder.block.9.layer.0.layer_norm.weight": "model.fp16-00001-of-00002.safetensors",

|

| 219 |

+

"encoder.block.9.layer.1.DenseReluDense.wi_0.weight": "model.fp16-00001-of-00002.safetensors",

|

| 220 |

+

"encoder.block.9.layer.1.DenseReluDense.wi_1.weight": "model.fp16-00001-of-00002.safetensors",

|

| 221 |

+

"encoder.block.9.layer.1.DenseReluDense.wo.weight": "model.fp16-00001-of-00002.safetensors",

|

| 222 |

+

"encoder.block.9.layer.1.layer_norm.weight": "model.fp16-00001-of-00002.safetensors",

|

| 223 |

+

"encoder.final_layer_norm.weight": "model.fp16-00002-of-00002.safetensors",

|

| 224 |

+

"shared.weight": "model.fp16-00001-of-00002.safetensors"

|

| 225 |

+

}

|

| 226 |

+

}

|

text_encoder/model.safetensors.index.json

ADDED

|

@@ -0,0 +1,226 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"metadata": {

|

| 3 |

+

"total_size": 19049242624

|

| 4 |

+

},

|

| 5 |

+

"weight_map": {

|

| 6 |

+

"encoder.block.0.layer.0.SelfAttention.k.weight": "model-00001-of-00002.safetensors",

|

| 7 |

+

"encoder.block.0.layer.0.SelfAttention.o.weight": "model-00001-of-00002.safetensors",

|

| 8 |

+

"encoder.block.0.layer.0.SelfAttention.q.weight": "model-00001-of-00002.safetensors",

|

| 9 |

+

"encoder.block.0.layer.0.SelfAttention.relative_attention_bias.weight": "model-00001-of-00002.safetensors",

|

| 10 |

+

"encoder.block.0.layer.0.SelfAttention.v.weight": "model-00001-of-00002.safetensors",

|

| 11 |

+

"encoder.block.0.layer.0.layer_norm.weight": "model-00001-of-00002.safetensors",

|

| 12 |

+

"encoder.block.0.layer.1.DenseReluDense.wi_0.weight": "model-00001-of-00002.safetensors",

|

| 13 |

+

"encoder.block.0.layer.1.DenseReluDense.wi_1.weight": "model-00001-of-00002.safetensors",

|

| 14 |

+

"encoder.block.0.layer.1.DenseReluDense.wo.weight": "model-00001-of-00002.safetensors",

|

| 15 |

+

"encoder.block.0.layer.1.layer_norm.weight": "model-00001-of-00002.safetensors",

|

| 16 |

+

"encoder.block.1.layer.0.SelfAttention.k.weight": "model-00001-of-00002.safetensors",

|

| 17 |

+

"encoder.block.1.layer.0.SelfAttention.o.weight": "model-00001-of-00002.safetensors",

|

| 18 |

+

"encoder.block.1.layer.0.SelfAttention.q.weight": "model-00001-of-00002.safetensors",

|

| 19 |

+

"encoder.block.1.layer.0.SelfAttention.v.weight": "model-00001-of-00002.safetensors",

|

| 20 |

+

"encoder.block.1.layer.0.layer_norm.weight": "model-00001-of-00002.safetensors",

|

| 21 |

+

"encoder.block.1.layer.1.DenseReluDense.wi_0.weight": "model-00001-of-00002.safetensors",

|

| 22 |

+

"encoder.block.1.layer.1.DenseReluDense.wi_1.weight": "model-00001-of-00002.safetensors",

|

| 23 |

+

"encoder.block.1.layer.1.DenseReluDense.wo.weight": "model-00001-of-00002.safetensors",

|

| 24 |

+

"encoder.block.1.layer.1.layer_norm.weight": "model-00001-of-00002.safetensors",

|

| 25 |

+

"encoder.block.10.layer.0.SelfAttention.k.weight": "model-00001-of-00002.safetensors",

|

| 26 |

+

"encoder.block.10.layer.0.SelfAttention.o.weight": "model-00001-of-00002.safetensors",

|

| 27 |

+

"encoder.block.10.layer.0.SelfAttention.q.weight": "model-00001-of-00002.safetensors",

|

| 28 |

+

"encoder.block.10.layer.0.SelfAttention.v.weight": "model-00001-of-00002.safetensors",

|

| 29 |

+

"encoder.block.10.layer.0.layer_norm.weight": "model-00001-of-00002.safetensors",

|

| 30 |

+

"encoder.block.10.layer.1.DenseReluDense.wi_0.weight": "model-00001-of-00002.safetensors",

|

| 31 |

+

"encoder.block.10.layer.1.DenseReluDense.wi_1.weight": "model-00001-of-00002.safetensors",

|

| 32 |

+

"encoder.block.10.layer.1.DenseReluDense.wo.weight": "model-00001-of-00002.safetensors",

|

| 33 |

+

"encoder.block.10.layer.1.layer_norm.weight": "model-00001-of-00002.safetensors",

|

| 34 |

+

"encoder.block.11.layer.0.SelfAttention.k.weight": "model-00001-of-00002.safetensors",

|

| 35 |

+

"encoder.block.11.layer.0.SelfAttention.o.weight": "model-00001-of-00002.safetensors",

|

| 36 |

+

"encoder.block.11.layer.0.SelfAttention.q.weight": "model-00001-of-00002.safetensors",

|

| 37 |

+

"encoder.block.11.layer.0.SelfAttention.v.weight": "model-00001-of-00002.safetensors",

|

| 38 |

+

"encoder.block.11.layer.0.layer_norm.weight": "model-00001-of-00002.safetensors",

|

| 39 |

+

"encoder.block.11.layer.1.DenseReluDense.wi_0.weight": "model-00001-of-00002.safetensors",

|

| 40 |

+

"encoder.block.11.layer.1.DenseReluDense.wi_1.weight": "model-00001-of-00002.safetensors",

|

| 41 |

+

"encoder.block.11.layer.1.DenseReluDense.wo.weight": "model-00001-of-00002.safetensors",

|

| 42 |

+

"encoder.block.11.layer.1.layer_norm.weight": "model-00001-of-00002.safetensors",

|

| 43 |

+

"encoder.block.12.layer.0.SelfAttention.k.weight": "model-00001-of-00002.safetensors",

|

| 44 |

+

"encoder.block.12.layer.0.SelfAttention.o.weight": "model-00002-of-00002.safetensors",

|

| 45 |

+

"encoder.block.12.layer.0.SelfAttention.q.weight": "model-00001-of-00002.safetensors",

|

| 46 |

+

"encoder.block.12.layer.0.SelfAttention.v.weight": "model-00001-of-00002.safetensors",

|

| 47 |

+

"encoder.block.12.layer.0.layer_norm.weight": "model-00002-of-00002.safetensors",

|

| 48 |

+

"encoder.block.12.layer.1.DenseReluDense.wi_0.weight": "model-00002-of-00002.safetensors",

|

| 49 |

+

"encoder.block.12.layer.1.DenseReluDense.wi_1.weight": "model-00002-of-00002.safetensors",

|

| 50 |

+

"encoder.block.12.layer.1.DenseReluDense.wo.weight": "model-00002-of-00002.safetensors",

|

| 51 |

+

"encoder.block.12.layer.1.layer_norm.weight": "model-00002-of-00002.safetensors",

|

| 52 |

+

"encoder.block.13.layer.0.SelfAttention.k.weight": "model-00002-of-00002.safetensors",

|

| 53 |

+

"encoder.block.13.layer.0.SelfAttention.o.weight": "model-00002-of-00002.safetensors",

|

| 54 |

+

"encoder.block.13.layer.0.SelfAttention.q.weight": "model-00002-of-00002.safetensors",

|

| 55 |

+

"encoder.block.13.layer.0.SelfAttention.v.weight": "model-00002-of-00002.safetensors",

|

| 56 |

+

"encoder.block.13.layer.0.layer_norm.weight": "model-00002-of-00002.safetensors",

|

| 57 |

+

"encoder.block.13.layer.1.DenseReluDense.wi_0.weight": "model-00002-of-00002.safetensors",

|

| 58 |

+

"encoder.block.13.layer.1.DenseReluDense.wi_1.weight": "model-00002-of-00002.safetensors",

|

| 59 |

+

"encoder.block.13.layer.1.DenseReluDense.wo.weight": "model-00002-of-00002.safetensors",

|

| 60 |

+

"encoder.block.13.layer.1.layer_norm.weight": "model-00002-of-00002.safetensors",

|

| 61 |

+

"encoder.block.14.layer.0.SelfAttention.k.weight": "model-00002-of-00002.safetensors",

|

| 62 |

+

"encoder.block.14.layer.0.SelfAttention.o.weight": "model-00002-of-00002.safetensors",

|

| 63 |

+

"encoder.block.14.layer.0.SelfAttention.q.weight": "model-00002-of-00002.safetensors",

|

| 64 |

+

"encoder.block.14.layer.0.SelfAttention.v.weight": "model-00002-of-00002.safetensors",

|

| 65 |

+

"encoder.block.14.layer.0.layer_norm.weight": "model-00002-of-00002.safetensors",

|

| 66 |

+

"encoder.block.14.layer.1.DenseReluDense.wi_0.weight": "model-00002-of-00002.safetensors",

|

| 67 |

+

"encoder.block.14.layer.1.DenseReluDense.wi_1.weight": "model-00002-of-00002.safetensors",

|

| 68 |

+

"encoder.block.14.layer.1.DenseReluDense.wo.weight": "model-00002-of-00002.safetensors",

|

| 69 |

+

"encoder.block.14.layer.1.layer_norm.weight": "model-00002-of-00002.safetensors",

|

| 70 |

+

"encoder.block.15.layer.0.SelfAttention.k.weight": "model-00002-of-00002.safetensors",

|

| 71 |

+

"encoder.block.15.layer.0.SelfAttention.o.weight": "model-00002-of-00002.safetensors",

|

| 72 |

+

"encoder.block.15.layer.0.SelfAttention.q.weight": "model-00002-of-00002.safetensors",

|

| 73 |

+

"encoder.block.15.layer.0.SelfAttention.v.weight": "model-00002-of-00002.safetensors",

|

| 74 |

+

"encoder.block.15.layer.0.layer_norm.weight": "model-00002-of-00002.safetensors",

|

| 75 |

+

"encoder.block.15.layer.1.DenseReluDense.wi_0.weight": "model-00002-of-00002.safetensors",

|

| 76 |

+

"encoder.block.15.layer.1.DenseReluDense.wi_1.weight": "model-00002-of-00002.safetensors",

|

| 77 |

+

"encoder.block.15.layer.1.DenseReluDense.wo.weight": "model-00002-of-00002.safetensors",

|

| 78 |

+

"encoder.block.15.layer.1.layer_norm.weight": "model-00002-of-00002.safetensors",

|

| 79 |

+

"encoder.block.16.layer.0.SelfAttention.k.weight": "model-00002-of-00002.safetensors",

|

| 80 |

+

"encoder.block.16.layer.0.SelfAttention.o.weight": "model-00002-of-00002.safetensors",

|

| 81 |

+

"encoder.block.16.layer.0.SelfAttention.q.weight": "model-00002-of-00002.safetensors",

|

| 82 |

+

"encoder.block.16.layer.0.SelfAttention.v.weight": "model-00002-of-00002.safetensors",

|

| 83 |

+

"encoder.block.16.layer.0.layer_norm.weight": "model-00002-of-00002.safetensors",

|

| 84 |

+

"encoder.block.16.layer.1.DenseReluDense.wi_0.weight": "model-00002-of-00002.safetensors",

|

| 85 |

+

"encoder.block.16.layer.1.DenseReluDense.wi_1.weight": "model-00002-of-00002.safetensors",

|

| 86 |

+

"encoder.block.16.layer.1.DenseReluDense.wo.weight": "model-00002-of-00002.safetensors",

|

| 87 |

+

"encoder.block.16.layer.1.layer_norm.weight": "model-00002-of-00002.safetensors",

|

| 88 |

+

"encoder.block.17.layer.0.SelfAttention.k.weight": "model-00002-of-00002.safetensors",

|

| 89 |

+

"encoder.block.17.layer.0.SelfAttention.o.weight": "model-00002-of-00002.safetensors",

|

| 90 |

+

"encoder.block.17.layer.0.SelfAttention.q.weight": "model-00002-of-00002.safetensors",

|

| 91 |

+