diff --git a/1 Getting Started/01_quickstart.md b/1 Getting Started/01_quickstart.md

new file mode 100644

index 0000000000000000000000000000000000000000..cc8dcb939e18e1b3b7fbfdb3bfb5a2749a8d615e

--- /dev/null

+++ b/1 Getting Started/01_quickstart.md

@@ -0,0 +1,119 @@

+

+# Quickstart

+

+Gradio is an open-source Python package that allows you to quickly **build** a demo or web application for your machine learning model, API, or any arbitary Python function. You can then **share** a link to your demo or web application in just a few seconds using Gradio's built-in sharing features. *No JavaScript, CSS, or web hosting experience needed!*

+

+

+

+It just takes a few lines of Python to create a demo like the one above, so let's get started 💫

+

+## Installation

+

+**Prerequisite**: Gradio requires [Python 3.8 or higher](https://www.python.org/downloads/)

+

+

+We recommend installing Gradio using `pip`, which is included by default in Python. Run this in your terminal or command prompt:

+

+```bash

+pip install gradio

+```

+

+

+Tip: it is best to install Gradio in a virtual environment. Detailed installation instructions for all common operating systems are provided here.

+

+## Building Your First Demo

+

+You can run Gradio in your favorite code editor, Jupyter notebook, Google Colab, or anywhere else you write Python. Let's write your first Gradio app:

+

+

+$code_hello_world_4

+

+

+Tip: We shorten the imported name from gradio to gr for better readability of code. This is a widely adopted convention that you should follow so that anyone working with your code can easily understand it.

+

+Now, run your code. If you've written the Python code in a file named, for example, `app.py`, then you would run `python app.py` from the terminal.

+

+The demo below will open in a browser on [http://localhost:7860](http://localhost:7860) if running from a file. If you are running within a notebook, the demo will appear embedded within the notebook.

+

+$demo_hello_world_4

+

+Type your name in the textbox on the left, drag the slider, and then press the Submit button. You should see a friendly greeting on the right.

+

+Tip: When developing locally, you can run your Gradio app in hot reload mode, which automatically reloads the Gradio app whenever you make changes to the file. To do this, simply type in gradio before the name of the file instead of python. In the example above, you would type: `gradio app.py` in your terminal. Learn more about hot reloading in the Hot Reloading Guide.

+

+

+**Understanding the `Interface` Class**

+

+You'll notice that in order to make your first demo, you created an instance of the `gr.Interface` class. The `Interface` class is designed to create demos for machine learning models which accept one or more inputs, and return one or more outputs.

+

+The `Interface` class has three core arguments:

+

+- `fn`: the function to wrap a user interface (UI) around

+- `inputs`: the Gradio component(s) to use for the input. The number of components should match the number of arguments in your function.

+- `outputs`: the Gradio component(s) to use for the output. The number of components should match the number of return values from your function.

+

+The `fn` argument is very flexible -- you can pass *any* Python function that you want to wrap with a UI. In the example above, we saw a relatively simple function, but the function could be anything from a music generator to a tax calculator to the prediction function of a pretrained machine learning model.

+

+The `inputs` and `outputs` arguments take one or more Gradio components. As we'll see, Gradio includes more than [30 built-in components](https://www.gradio.app/docs/gradio/components) (such as the `gr.Textbox()`, `gr.Image()`, and `gr.HTML()` components) that are designed for machine learning applications.

+

+Tip: For the `inputs` and `outputs` arguments, you can pass in the name of these components as a string (`"textbox"`) or an instance of the class (`gr.Textbox()`).

+

+If your function accepts more than one argument, as is the case above, pass a list of input components to `inputs`, with each input component corresponding to one of the arguments of the function, in order. The same holds true if your function returns more than one value: simply pass in a list of components to `outputs`. This flexibility makes the `Interface` class a very powerful way to create demos.

+

+We'll dive deeper into the `gr.Interface` on our series on [building Interfaces](https://www.gradio.app/main/guides/the-interface-class).

+

+## Sharing Your Demo

+

+What good is a beautiful demo if you can't share it? Gradio lets you easily share a machine learning demo without having to worry about the hassle of hosting on a web server. Simply set `share=True` in `launch()`, and a publicly accessible URL will be created for your demo. Let's revisit our example demo, but change the last line as follows:

+

+```python

+import gradio as gr

+

+def greet(name):

+ return "Hello " + name + "!"

+

+demo = gr.Interface(fn=greet, inputs="textbox", outputs="textbox")

+

+demo.launch(share=True) # Share your demo with just 1 extra parameter 🚀

+```

+

+When you run this code, a public URL will be generated for your demo in a matter of seconds, something like:

+

+👉 `https://a23dsf231adb.gradio.live`

+

+Now, anyone around the world can try your Gradio demo from their browser, while the machine learning model and all computation continues to run locally on your computer.

+

+To learn more about sharing your demo, read our dedicated guide on [sharing your Gradio application](https://www.gradio.app/guides/sharing-your-app).

+

+

+## Core Gradio Classes

+

+So far, we've been discussing the `Interface` class, which is a high-level class that lets to build demos quickly with Gradio. But what else does Gradio include?aaa

+

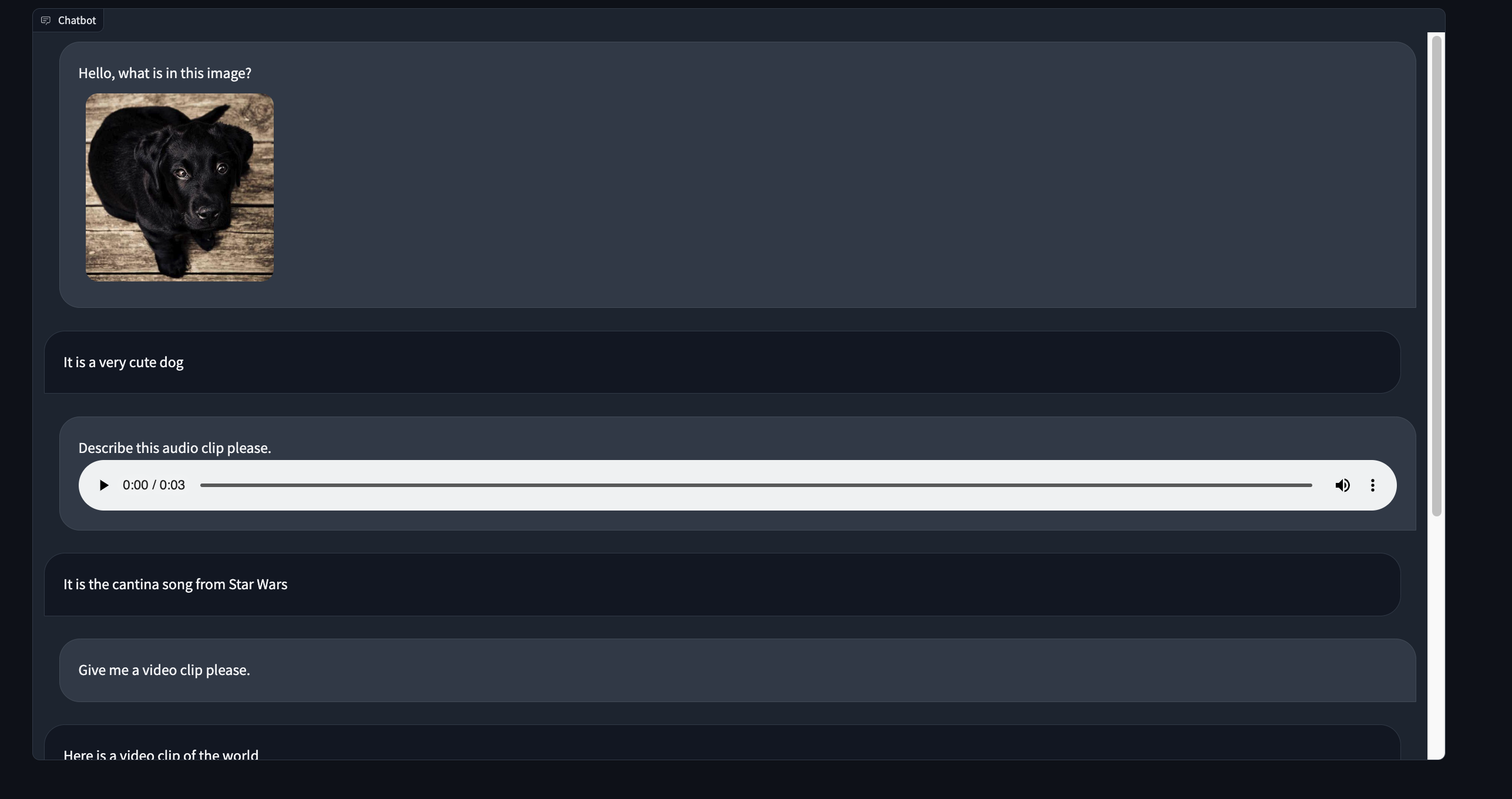

+### Chatbots with `gr.ChatInterface`

+

+Gradio includes another high-level class, `gr.ChatInterface`, which is specifically designed to create Chatbot UIs. Similar to `Interface`, you supply a function and Gradio creates a fully working Chatbot UI. If you're interested in creating a chatbot, you can jump straight to [our dedicated guide on `gr.ChatInterface`](https://www.gradio.app/guides/creating-a-chatbot-fast).

+

+### Custom Demos with `gr.Blocks`

+

+Gradio also offers a low-level approach for designing web apps with more flexible layouts and data flows with the `gr.Blocks` class. Blocks allows you to do things like control where components appear on the page, handle complex data flows (e.g. outputs can serve as inputs to other functions), and update properties/visibility of components based on user interaction — still all in Python.

+

+You can build very custom and complex applications using `gr.Blocks()`. For example, the popular image generation [Automatic1111 Web UI](https://github.com/AUTOMATIC1111/stable-diffusion-webui) is built using Gradio Blocks. We dive deeper into the `gr.Blocks` on our series on [building with Blocks](https://www.gradio.app/guides/blocks-and-event-listeners).

+

+

+### The Gradio Python & JavaScript Ecosystem

+

+That's the gist of the core `gradio` Python library, but Gradio is actually so much more! Its an entire ecosystem of Python and JavaScript libraries that let you build machine learning applications, or query them programmatically, in Python or JavaScript. Here are other related parts of the Gradio ecosystem:

+

+* [Gradio Python Client](https://www.gradio.app/guides/getting-started-with-the-python-client) (`gradio_client`): query any Gradio app programmatically in Python.

+* [Gradio JavaScript Client](https://www.gradio.app/guides/getting-started-with-the-js-client) (`@gradio/client`): query any Gradio app programmatically in JavaScript.

+* [Gradio-Lite](https://www.gradio.app/guides/gradio-lite) (`@gradio/lite`): write Gradio apps in Python that run entirely in the browser (no server needed!), thanks to Pyodide.

+* [Hugging Face Spaces](https://huggingface.co/spaces): the most popular place to host Gradio applications — for free!

+

+## What's Next?

+

+Keep learning about Gradio sequentially using the Gradio Guides, which include explanations as well as example code and embedded interactive demos. Next up: [let's dive deeper into the Interface class](https://www.gradio.app/guides/the-interface-class).

+

+Or, if you already know the basics and are looking for something specific, you can search the more [technical API documentation](https://www.gradio.app/docs/).

+

+

diff --git a/1 Getting Started/02_key-features.md b/1 Getting Started/02_key-features.md

new file mode 100644

index 0000000000000000000000000000000000000000..6c296840fdae07e81d0fbc0a59c25d1d08b55b9c

--- /dev/null

+++ b/1 Getting Started/02_key-features.md

@@ -0,0 +1,194 @@

+

+# Key Features

+

+Let's go through some of the key features of Gradio. This guide is intended to be a high-level overview of various things that you should be aware of as you build your demo. Where appropriate, we link to more detailed guides on specific topics.

+

+1. [Components](#components)

+2. [Queuing](#queuing)

+3. [Streaming outputs](#streaming-outputs)

+4. [Streaming inputs](#streaming-inputs)

+5. [Alert modals](#alert-modals)

+6. [Styling](#styling)

+7. [Progress bars](#progress-bars)

+8. [Batch functions](#batch-functions)

+

+## Components

+

+Gradio includes more than 30 pre-built components (as well as many user-built _custom components_) that can be used as inputs or outputs in your demo with a single line of code. These components correspond to common data types in machine learning and data science, e.g. the `gr.Image` component is designed to handle input or output images, the `gr.Label` component displays classification labels and probabilities, the `gr.Plot` component displays various kinds of plots, and so on.

+

+Each component includes various constructor attributes that control the properties of the component. For example, you can control the number of lines in a `gr.Textbox` using the `lines` argument (which takes a positive integer) in its constructor. Or you can control the way that a user can provide an image in the `gr.Image` component using the `sources` parameter (which takes a list like `["webcam", "upload"]`).

+

+**Static and Interactive Components**

+

+Every component has a _static_ version that is designed to *display* data, and most components also have an _interactive_ version designed to let users input or modify the data. Typically, you don't need to think about this distinction, because when you build a Gradio demo, Gradio automatically figures out whether the component should be static or interactive based on whether it is being used as an input or output. However, you can set this manually using the `interactive` argument that every component supports.

+

+**Preprocessing and Postprocessing**

+

+When a component is used as an input, Gradio automatically handles the _preprocessing_ needed to convert the data from a type sent by the user's browser (such as an uploaded image) to a form that can be accepted by your function (such as a `numpy` array).

+

+

+Similarly, when a component is used as an output, Gradio automatically handles the _postprocessing_ needed to convert the data from what is returned by your function (such as a list of image paths) to a form that can be displayed in the user's browser (a gallery of images).

+

+Consider an example demo with three input components (`gr.Textbox`, `gr.Number`, and `gr.Image`) and two outputs (`gr.Number` and `gr.Gallery`) that serve as a UI for your image-to-image generation model. Below is a diagram of what our preprocessing will send to the model and what our postprocessing will require from it.

+

+

+

+In this image, the following preprocessing steps happen to send the data from the browser to your function:

+

+* The text in the textbox is converted to a Python `str` (essentially no preprocessing)

+* The number in the number input is converted to a Python `int` (essentially no preprocessing)

+* Most importantly, ihe image supplied by the user is converted to a `numpy.array` representation of the RGB values in the image

+

+Images are converted to NumPy arrays because they are a common format for machine learning workflows. You can control the _preprocessing_ using the component's parameters when constructing the component. For example, if you instantiate the `Image` component with the following parameters, it will preprocess the image to the `PIL` format instead:

+

+```py

+img = gr.Image(type="pil")

+```

+

+Postprocessing is even simpler! Gradio automatically recognizes the format of the returned data (e.g. does the user's function return a `numpy` array or a `str` filepath for the `gr.Image` component?) and postprocesses it appropriately into a format that can be displayed by the browser.

+

+So in the image above, the following postprocessing steps happen to send the data returned from a user's function to the browser:

+

+* The `float` is displayed as a number and displayed directly to the user

+* The list of string filepaths (`list[str]`) is interpreted as a list of image filepaths and displayed as a gallery in the browser

+

+Take a look at the [Docs](https://gradio.app/docs) to see all the parameters for each Gradio component.

+

+## Queuing

+

+Every Gradio app comes with a built-in queuing system that can scale to thousands of concurrent users. You can configure the queue by using `queue()` method which is supported by the `gr.Interface`, `gr.Blocks`, and `gr.ChatInterface` classes.

+

+For example, you can control the number of requests processed at a single time by setting the `default_concurrency_limit` parameter of `queue()`, e.g.

+

+```python

+demo = gr.Interface(...).queue(default_concurrency_limit=5)

+demo.launch()

+```

+

+This limits the number of requests processed for this event listener at a single time to 5. By default, the `default_concurrency_limit` is actually set to `1`, which means that when many users are using your app, only a single user's request will be processed at a time. This is because many machine learning functions consume a significant amount of memory and so it is only suitable to have a single user using the demo at a time. However, you can change this parameter in your demo easily.

+

+See the [docs on queueing](https://gradio.app/docs/gradio/interface#interface-queue) for more details on configuring the queuing parameters.

+

+## Streaming outputs

+

+In some cases, you may want to stream a sequence of outputs rather than show a single output at once. For example, you might have an image generation model and you want to show the image that is generated at each step, leading up to the final image. Or you might have a chatbot which streams its response one token at a time instead of returning it all at once.

+

+In such cases, you can supply a **generator** function into Gradio instead of a regular function. Creating generators in Python is very simple: instead of a single `return` value, a function should `yield` a series of values instead. Usually the `yield` statement is put in some kind of loop. Here's an example of an generator that simply counts up to a given number:

+

+```python

+def my_generator(x):

+ for i in range(x):

+ yield i

+```

+

+You supply a generator into Gradio the same way as you would a regular function. For example, here's a a (fake) image generation model that generates noise for several steps before outputting an image using the `gr.Interface` class:

+

+$code_fake_diffusion

+$demo_fake_diffusion

+

+Note that we've added a `time.sleep(1)` in the iterator to create an artificial pause between steps so that you are able to observe the steps of the iterator (in a real image generation model, this probably wouldn't be necessary).

+

+## Streaming inputs

+

+Similarly, Gradio can handle streaming inputs, e.g. a live audio stream that can gets transcribed to text in real time, or an image generation model that reruns every time a user types a letter in a textbox. This is covered in more details in our guide on building [reactive Interfaces](/guides/reactive-interfaces).

+

+## Alert modals

+

+You may wish to raise alerts to the user. To do so, raise a `gr.Error("custom message")` to display an error message. You can also issue `gr.Warning("message")` and `gr.Info("message")` by having them as standalone lines in your function, which will immediately display modals while continuing the execution of your function. Queueing needs to be enabled for this to work.

+

+Note below how the `gr.Error` has to be raised, while the `gr.Warning` and `gr.Info` are single lines.

+

+```python

+def start_process(name):

+ gr.Info("Starting process")

+ if name is None:

+ gr.Warning("Name is empty")

+ ...

+ if success == False:

+ raise gr.Error("Process failed")

+```

+

+

+

+## Styling

+

+Gradio themes are the easiest way to customize the look and feel of your app. You can choose from a variety of themes, or create your own. To do so, pass the `theme=` kwarg to the `Interface` constructor. For example:

+

+```python

+demo = gr.Interface(..., theme=gr.themes.Monochrome())

+```

+

+Gradio comes with a set of prebuilt themes which you can load from `gr.themes.*`. You can extend these themes or create your own themes from scratch - see the [theming guide](https://gradio.app/guides/theming-guide) for more details.

+

+For additional styling ability, you can pass any CSS (as well as custom JavaScript) to your Gradio application. This is discussed in more detail in our [custom JS and CSS guide](/guides/custom-CSS-and-JS).

+

+

+## Progress bars

+

+Gradio supports the ability to create custom Progress Bars so that you have customizability and control over the progress update that you show to the user. In order to enable this, simply add an argument to your method that has a default value of a `gr.Progress` instance. Then you can update the progress levels by calling this instance directly with a float between 0 and 1, or using the `tqdm()` method of the `Progress` instance to track progress over an iterable, as shown below.

+

+$code_progress_simple

+$demo_progress_simple

+

+If you use the `tqdm` library, you can even report progress updates automatically from any `tqdm.tqdm` that already exists within your function by setting the default argument as `gr.Progress(track_tqdm=True)`!

+

+## Batch functions

+

+Gradio supports the ability to pass _batch_ functions. Batch functions are just

+functions which take in a list of inputs and return a list of predictions.

+

+For example, here is a batched function that takes in two lists of inputs (a list of

+words and a list of ints), and returns a list of trimmed words as output:

+

+```py

+import time

+

+def trim_words(words, lens):

+ trimmed_words = []

+ time.sleep(5)

+ for w, l in zip(words, lens):

+ trimmed_words.append(w[:int(l)])

+ return [trimmed_words]

+```

+

+The advantage of using batched functions is that if you enable queuing, the Gradio server can automatically _batch_ incoming requests and process them in parallel,

+potentially speeding up your demo. Here's what the Gradio code looks like (notice the `batch=True` and `max_batch_size=16`)

+

+With the `gr.Interface` class:

+

+```python

+demo = gr.Interface(

+ fn=trim_words,

+ inputs=["textbox", "number"],

+ outputs=["output"],

+ batch=True,

+ max_batch_size=16

+)

+

+demo.launch()

+```

+

+With the `gr.Blocks` class:

+

+```py

+import gradio as gr

+

+with gr.Blocks() as demo:

+ with gr.Row():

+ word = gr.Textbox(label="word")

+ leng = gr.Number(label="leng")

+ output = gr.Textbox(label="Output")

+ with gr.Row():

+ run = gr.Button()

+

+ event = run.click(trim_words, [word, leng], output, batch=True, max_batch_size=16)

+

+demo.launch()

+```

+

+In the example above, 16 requests could be processed in parallel (for a total inference time of 5 seconds), instead of each request being processed separately (for a total

+inference time of 80 seconds). Many Hugging Face `transformers` and `diffusers` models work very naturally with Gradio's batch mode: here's [an example demo using diffusers to

+generate images in batches](https://github.com/gradio-app/gradio/blob/main/demo/diffusers_with_batching/run.py)

+

+

+

diff --git a/2 Building Interfaces/00_the-interface-class.md b/2 Building Interfaces/00_the-interface-class.md

new file mode 100644

index 0000000000000000000000000000000000000000..a785fc53f14e5c7b385e8f681e55e44f3ac1385c

--- /dev/null

+++ b/2 Building Interfaces/00_the-interface-class.md

@@ -0,0 +1,110 @@

+

+# The `Interface` class

+

+As mentioned in the [Quickstart](/main/guides/quickstart), the `gr.Interface` class is a high-level abstraction in Gradio that allows you to quickly create a demo for any Python function simply by specifying the input types and the output types. Revisiting our first demo:

+

+$code_hello_world_4

+

+

+We see that the `Interface` class is initialized with three required parameters:

+

+- `fn`: the function to wrap a user interface (UI) around

+- `inputs`: which Gradio component(s) to use for the input. The number of components should match the number of arguments in your function.

+- `outputs`: which Gradio component(s) to use for the output. The number of components should match the number of return values from your function.

+

+In this Guide, we'll dive into `gr.Interface` and the various ways it can be customized, but before we do that, let's get a better understanding of Gradio components.

+

+## Gradio Components

+

+Gradio includes more than 30 pre-built components (as well as many [community-built _custom components_](https://www.gradio.app/custom-components/gallery)) that can be used as inputs or outputs in your demo. These components correspond to common data types in machine learning and data science, e.g. the `gr.Image` component is designed to handle input or output images, the `gr.Label` component displays classification labels and probabilities, the `gr.Plot` component displays various kinds of plots, and so on.

+

+**Static and Interactive Components**

+

+Every component has a _static_ version that is designed to *display* data, and most components also have an _interactive_ version designed to let users input or modify the data. Typically, you don't need to think about this distinction, because when you build a Gradio demo, Gradio automatically figures out whether the component should be static or interactive based on whether it is being used as an input or output. However, you can set this manually using the `interactive` argument that every component supports.

+

+**Preprocessing and Postprocessing**

+

+When a component is used as an input, Gradio automatically handles the _preprocessing_ needed to convert the data from a type sent by the user's browser (such as an uploaded image) to a form that can be accepted by your function (such as a `numpy` array).

+

+

+Similarly, when a component is used as an output, Gradio automatically handles the _postprocessing_ needed to convert the data from what is returned by your function (such as a list of image paths) to a form that can be displayed in the user's browser (a gallery of images).

+

+## Components Attributes

+

+We used the default versions of the `gr.Textbox` and `gr.Slider`, but what if you want to change how the UI components look or behave?

+

+Let's say you want to customize the slider to have values from 1 to 10, with a default of 2. And you wanted to customize the output text field — you want it to be larger and have a label.

+

+If you use the actual classes for `gr.Textbox` and `gr.Slider` instead of the string shortcuts, you have access to much more customizability through component attributes.

+

+$code_hello_world_2

+$demo_hello_world_2

+

+## Multiple Input and Output Components

+

+Suppose you had a more complex function, with multiple outputs as well. In the example below, we define a function that takes a string, boolean, and number, and returns a string and number.

+

+$code_hello_world_3

+$demo_hello_world_3

+

+Just as each component in the `inputs` list corresponds to one of the parameters of the function, in order, each component in the `outputs` list corresponds to one of the values returned by the function, in order.

+

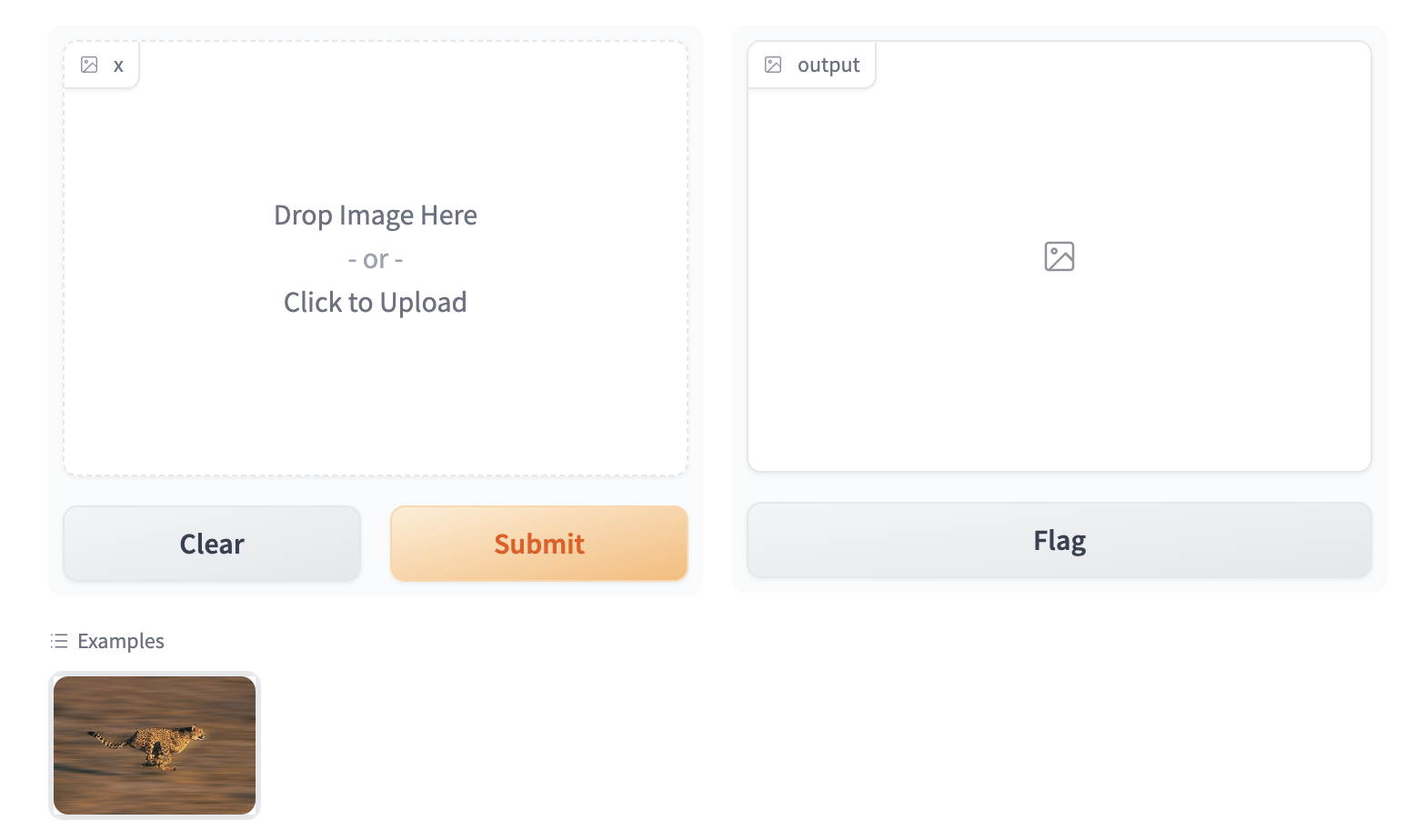

+## An Image Example

+

+Gradio supports many types of components, such as `Image`, `DataFrame`, `Video`, or `Label`. Let's try an image-to-image function to get a feel for these!

+

+$code_sepia_filter

+$demo_sepia_filter

+

+When using the `Image` component as input, your function will receive a NumPy array with the shape `(height, width, 3)`, where the last dimension represents the RGB values. We'll return an image as well in the form of a NumPy array.

+

+As mentioned above, Gradio handles the preprocessing and postprocessing to convert images to NumPy arrays and vice versa. You can also control the preprocessing performed with the `type=` keyword argument. For example, if you wanted your function to take a file path to an image instead of a NumPy array, the input `Image` component could be written as:

+

+```python

+gr.Image(type="filepath", shape=...)

+```

+

+You can read more about the built-in Gradio components and how to customize them in the [Gradio docs](https://gradio.app/docs).

+

+## Example Inputs

+

+You can provide example data that a user can easily load into `Interface`. This can be helpful to demonstrate the types of inputs the model expects, as well as to provide a way to explore your dataset in conjunction with your model. To load example data, you can provide a **nested list** to the `examples=` keyword argument of the Interface constructor. Each sublist within the outer list represents a data sample, and each element within the sublist represents an input for each input component. The format of example data for each component is specified in the [Docs](https://gradio.app/docs#components).

+

+$code_calculator

+$demo_calculator

+

+You can load a large dataset into the examples to browse and interact with the dataset through Gradio. The examples will be automatically paginated (you can configure this through the `examples_per_page` argument of `Interface`).

+

+Continue learning about examples in the [More On Examples](https://gradio.app/guides/more-on-examples) guide.

+

+## Descriptive Content

+

+In the previous example, you may have noticed the `title=` and `description=` keyword arguments in the `Interface` constructor that helps users understand your app.

+

+There are three arguments in the `Interface` constructor to specify where this content should go:

+

+- `title`: which accepts text and can display it at the very top of interface, and also becomes the page title.

+- `description`: which accepts text, markdown or HTML and places it right under the title.

+- `article`: which also accepts text, markdown or HTML and places it below the interface.

+

+

+

+Note: if you're using the `Blocks` class, you can insert text, markdown, or HTML anywhere in your application using the `gr.Markdown(...)` or `gr.HTML(...)` components.

+

+Another useful keyword argument is `label=`, which is present in every `Component`. This modifies the label text at the top of each `Component`. You can also add the `info=` keyword argument to form elements like `Textbox` or `Radio` to provide further information on their usage.

+

+```python

+gr.Number(label='Age', info='In years, must be greater than 0')

+```

+

+## Additional Inputs within an Accordion

+

+If your prediction function takes many inputs, you may want to hide some of them within a collapsed accordion to avoid cluttering the UI. The `Interface` class takes an `additional_inputs` argument which is similar to `inputs` but any input components included here are not visible by default. The user must click on the accordion to show these components. The additional inputs are passed into the prediction function, in order, after the standard inputs.

+

+You can customize the appearance of the accordion by using the optional `additional_inputs_accordion` argument, which accepts a string (in which case, it becomes the label of the accordion), or an instance of the `gr.Accordion()` class (e.g. this lets you control whether the accordion is open or closed by default).

+

+Here's an example:

+

+$code_interface_with_additional_inputs

+$demo_interface_with_additional_inputs

+

diff --git a/2 Building Interfaces/01_more-on-examples.md b/2 Building Interfaces/01_more-on-examples.md

new file mode 100644

index 0000000000000000000000000000000000000000..f3f752e61fd612a0d291906d1b78c4e2dd6cb487

--- /dev/null

+++ b/2 Building Interfaces/01_more-on-examples.md

@@ -0,0 +1,44 @@

+

+# More on Examples

+

+In the [previous Guide](/main/guides/the-interface-class), we discussed how to provide example inputs for your demo to make it easier for users to try it out. Here, we dive into more details.

+

+## Providing Examples

+

+Adding examples to an Interface is as easy as providing a list of lists to the `examples`

+keyword argument.

+Each sublist is a data sample, where each element corresponds to an input of the prediction function.

+The inputs must be ordered in the same order as the prediction function expects them.

+

+If your interface only has one input component, then you can provide your examples as a regular list instead of a list of lists.

+

+### Loading Examples from a Directory

+

+You can also specify a path to a directory containing your examples. If your Interface takes only a single file-type input, e.g. an image classifier, you can simply pass a directory filepath to the `examples=` argument, and the `Interface` will load the images in the directory as examples.

+In the case of multiple inputs, this directory must

+contain a log.csv file with the example values.

+In the context of the calculator demo, we can set `examples='/demo/calculator/examples'` and in that directory we include the following `log.csv` file:

+

+```csv

+num,operation,num2

+5,"add",3

+4,"divide",2

+5,"multiply",3

+```

+

+This can be helpful when browsing flagged data. Simply point to the flagged directory and the `Interface` will load the examples from the flagged data.

+

+### Providing Partial Examples

+

+Sometimes your app has many input components, but you would only like to provide examples for a subset of them. In order to exclude some inputs from the examples, pass `None` for all data samples corresponding to those particular components.

+

+## Caching examples

+

+You may wish to provide some cached examples of your model for users to quickly try out, in case your model takes a while to run normally.

+If `cache_examples=True`, your Gradio app will run all of the examples and save the outputs when you call the `launch()` method. This data will be saved in a directory called `gradio_cached_examples` in your working directory by default. You can also set this directory with the `GRADIO_EXAMPLES_CACHE` environment variable, which can be either an absolute path or a relative path to your working directory.

+

+Whenever a user clicks on an example, the output will automatically be populated in the app now, using data from this cached directory instead of actually running the function. This is useful so users can quickly try out your model without adding any load!

+

+Alternatively, you can set `cache_examples="lazy"`. This means that each particular example will only get cached after it is first used (by any user) in the Gradio app. This is helpful if your prediction function is long-running and you do not want to wait a long time for your Gradio app to start.

+

+Keep in mind once the cache is generated, it will not be updated automatically in future launches. If the examples or function logic change, delete the cache folder to clear the cache and rebuild it with another `launch()`.

diff --git a/2 Building Interfaces/02_flagging.md b/2 Building Interfaces/02_flagging.md

new file mode 100644

index 0000000000000000000000000000000000000000..04001846653dee5ccf45a12d9f53ad40e34a0636

--- /dev/null

+++ b/2 Building Interfaces/02_flagging.md

@@ -0,0 +1,46 @@

+

+# Flagging

+

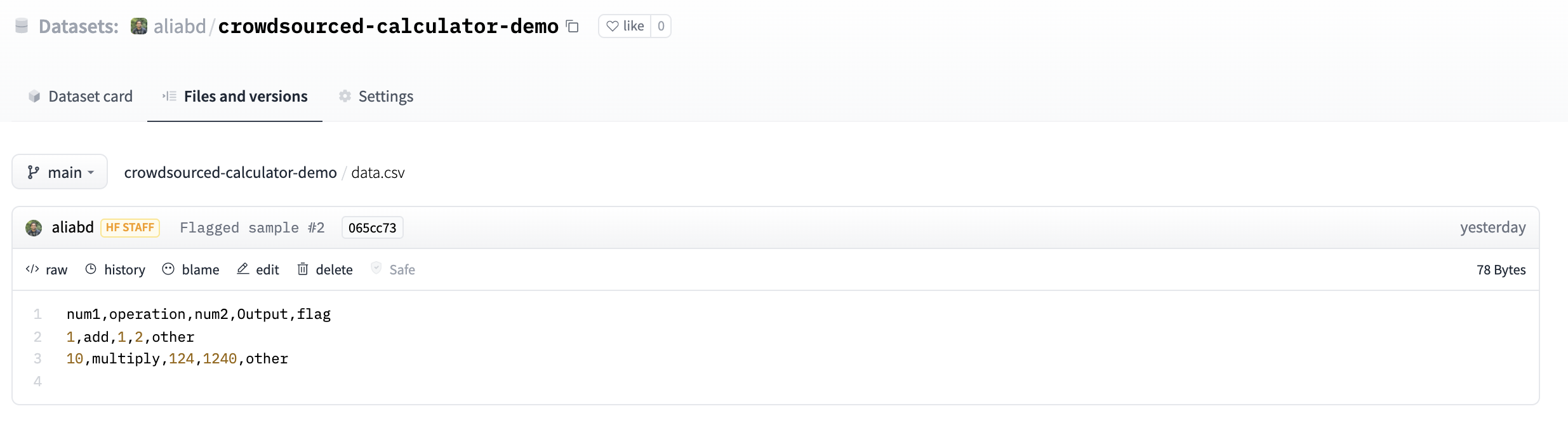

+You may have noticed the "Flag" button that appears by default in your `Interface`. When a user using your demo sees input with interesting output, such as erroneous or unexpected model behaviour, they can flag the input for you to review. Within the directory provided by the `flagging_dir=` argument to the `Interface` constructor, a CSV file will log the flagged inputs. If the interface involves file data, such as for Image and Audio components, folders will be created to store those flagged data as well.

+

+For example, with the calculator interface shown above, we would have the flagged data stored in the flagged directory shown below:

+

+```directory

++-- calculator.py

++-- flagged/

+| +-- logs.csv

+```

+

+_flagged/logs.csv_

+

+```csv

+num1,operation,num2,Output

+5,add,7,12

+6,subtract,1.5,4.5

+```

+

+With the sepia interface shown earlier, we would have the flagged data stored in the flagged directory shown below:

+

+```directory

++-- sepia.py

++-- flagged/

+| +-- logs.csv

+| +-- im/

+| | +-- 0.png

+| | +-- 1.png

+| +-- Output/

+| | +-- 0.png

+| | +-- 1.png

+```

+

+_flagged/logs.csv_

+

+```csv

+im,Output

+im/0.png,Output/0.png

+im/1.png,Output/1.png

+```

+

+If you wish for the user to provide a reason for flagging, you can pass a list of strings to the `flagging_options` argument of Interface. Users will have to select one of the strings when flagging, which will be saved as an additional column to the CSV.

+

+

diff --git a/2 Building Interfaces/03_interface-state.md b/2 Building Interfaces/03_interface-state.md

new file mode 100644

index 0000000000000000000000000000000000000000..327aecc2880dbc5cfb3aad73a59389341a62ec1a

--- /dev/null

+++ b/2 Building Interfaces/03_interface-state.md

@@ -0,0 +1,33 @@

+

+# Interface State

+

+So far, we've assumed that your demos are *stateless*: that they do not persist information beyond a single function call. What if you want to modify the behavior of your demo based on previous interactions with the demo? There are two approaches in Gradio: *global state* and *session state*.

+

+## Global State

+

+If the state is something that should be accessible to all function calls and all users, you can create a variable outside the function call and access it inside the function. For example, you may load a large model outside the function and use it inside the function so that every function call does not need to reload the model.

+

+$code_score_tracker

+

+In the code above, the `scores` array is shared between all users. If multiple users are accessing this demo, their scores will all be added to the same list, and the returned top 3 scores will be collected from this shared reference.

+

+## Session State

+

+Another type of data persistence Gradio supports is session state, where data persists across multiple submits within a page session. However, data is _not_ shared between different users of your model. To store data in a session state, you need to do three things:

+

+1. Pass in an extra parameter into your function, which represents the state of the interface.

+2. At the end of the function, return the updated value of the state as an extra return value.

+3. Add the `'state'` input and `'state'` output components when creating your `Interface`

+

+Here's a simple app to illustrate session state - this app simply stores users previous submissions and displays them back to the user:

+

+

+$code_interface_state

+$demo_interface_state

+

+

+Notice how the state persists across submits within each page, but if you load this demo in another tab (or refresh the page), the demos will not share chat history. Here, we could not store the submission history in a global variable, otherwise the submission history would then get jumbled between different users.

+

+The initial value of the `State` is `None` by default. If you pass a parameter to the `value` argument of `gr.State()`, it is used as the default value of the state instead.

+

+Note: the `Interface` class only supports a single session state variable (though it can be a list with multiple elements). For more complex use cases, you can use Blocks, [which supports multiple `State` variables](/guides/state-in-blocks/). Alternatively, if you are building a chatbot that maintains user state, consider using the `ChatInterface` abstraction, [which manages state automatically](/guides/creating-a-chatbot-fast).

diff --git a/2 Building Interfaces/04_reactive-interfaces.md b/2 Building Interfaces/04_reactive-interfaces.md

new file mode 100644

index 0000000000000000000000000000000000000000..f6d204a797283881935b9671ad57cd6e915e83b7

--- /dev/null

+++ b/2 Building Interfaces/04_reactive-interfaces.md

@@ -0,0 +1,29 @@

+

+# Reactive Interfaces

+

+Finally, we cover how to get Gradio demos to refresh automatically or continuously stream data.

+

+## Live Interfaces

+

+You can make interfaces automatically refresh by setting `live=True` in the interface. Now the interface will recalculate as soon as the user input changes.

+

+$code_calculator_live

+$demo_calculator_live

+

+Note there is no submit button, because the interface resubmits automatically on change.

+

+## Streaming Components

+

+Some components have a "streaming" mode, such as `Audio` component in microphone mode, or the `Image` component in webcam mode. Streaming means data is sent continuously to the backend and the `Interface` function is continuously being rerun.

+

+The difference between `gr.Audio(source='microphone')` and `gr.Audio(source='microphone', streaming=True)`, when both are used in `gr.Interface(live=True)`, is that the first `Component` will automatically submit data and run the `Interface` function when the user stops recording, whereas the second `Component` will continuously send data and run the `Interface` function _during_ recording.

+

+Here is example code of streaming images from the webcam.

+

+$code_stream_frames

+

+Streaming can also be done in an output component. A `gr.Audio(streaming=True)` output component can take a stream of audio data yielded piece-wise by a generator function and combines them into a single audio file.

+

+$code_stream_audio_out

+

+For a more detailed example, see our guide on performing [automatic speech recognition](/guides/real-time-speech-recognition) with Gradio.

diff --git a/2 Building Interfaces/05_four-kinds-of-interfaces.md b/2 Building Interfaces/05_four-kinds-of-interfaces.md

new file mode 100644

index 0000000000000000000000000000000000000000..132ef8a03e94ba5bc2879e13add0a40d351464a1

--- /dev/null

+++ b/2 Building Interfaces/05_four-kinds-of-interfaces.md

@@ -0,0 +1,45 @@

+

+# The 4 Kinds of Gradio Interfaces

+

+So far, we've always assumed that in order to build an Gradio demo, you need both inputs and outputs. But this isn't always the case for machine learning demos: for example, _unconditional image generation models_ don't take any input but produce an image as the output.

+

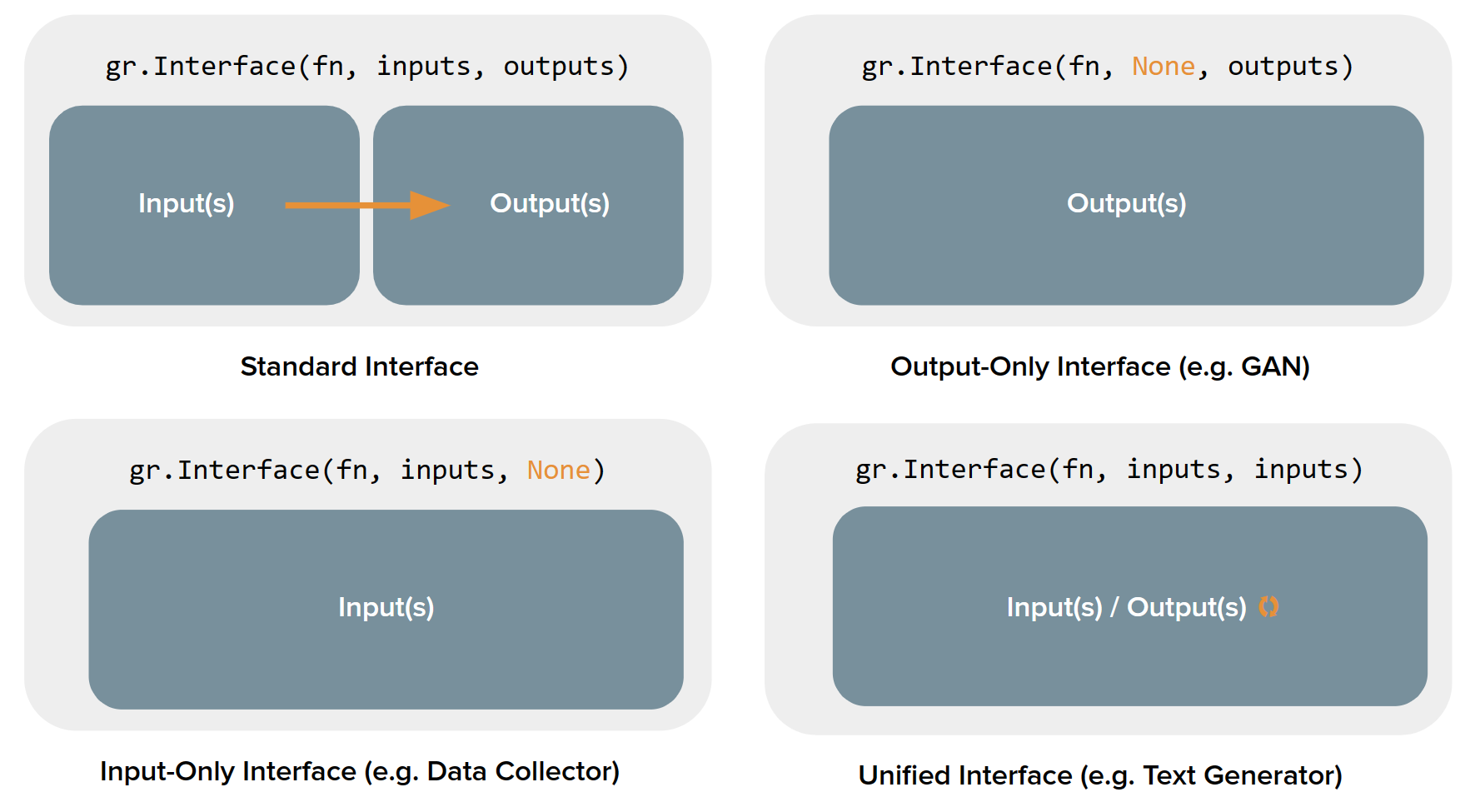

+It turns out that the `gradio.Interface` class can actually handle 4 different kinds of demos:

+

+1. **Standard demos**: which have both separate inputs and outputs (e.g. an image classifier or speech-to-text model)

+2. **Output-only demos**: which don't take any input but produce on output (e.g. an unconditional image generation model)

+3. **Input-only demos**: which don't produce any output but do take in some sort of input (e.g. a demo that saves images that you upload to a persistent external database)

+4. **Unified demos**: which have both input and output components, but the input and output components _are the same_. This means that the output produced overrides the input (e.g. a text autocomplete model)

+

+Depending on the kind of demo, the user interface (UI) looks slightly different:

+

+

+

+Let's see how to build each kind of demo using the `Interface` class, along with examples:

+

+## Standard demos

+

+To create a demo that has both the input and the output components, you simply need to set the values of the `inputs` and `outputs` parameter in `Interface()`. Here's an example demo of a simple image filter:

+

+$code_sepia_filter

+$demo_sepia_filter

+

+## Output-only demos

+

+What about demos that only contain outputs? In order to build such a demo, you simply set the value of the `inputs` parameter in `Interface()` to `None`. Here's an example demo of a mock image generation model:

+

+$code_fake_gan_no_input

+$demo_fake_gan_no_input

+

+## Input-only demos

+

+Similarly, to create a demo that only contains inputs, set the value of `outputs` parameter in `Interface()` to be `None`. Here's an example demo that saves any uploaded image to disk:

+

+$code_save_file_no_output

+$demo_save_file_no_output

+

+## Unified demos

+

+A demo that has a single component as both the input and the output. It can simply be created by setting the values of the `inputs` and `outputs` parameter as the same component. Here's an example demo of a text generation model:

+

+$code_unified_demo_text_generation

+$demo_unified_demo_text_generation

diff --git a/3 Additional Features/01_queuing.md b/3 Additional Features/01_queuing.md

new file mode 100644

index 0000000000000000000000000000000000000000..46479ce643554d957e118e397b2e9ab4344c9c30

--- /dev/null

+++ b/3 Additional Features/01_queuing.md

@@ -0,0 +1,17 @@

+

+# Queuing

+

+Every Gradio app comes with a built-in queuing system that can scale to thousands of concurrent users. You can configure the queue by using `queue()` method which is supported by the `gr.Interface`, `gr.Blocks`, and `gr.ChatInterface` classes.

+

+For example, you can control the number of requests processed at a single time by setting the `default_concurrency_limit` parameter of `queue()`, e.g.

+

+```python

+demo = gr.Interface(...).queue(default_concurrency_limit=5)

+demo.launch()

+```

+

+This limits the number of requests processed for this event listener at a single time to 5. By default, the `default_concurrency_limit` is actually set to `1`, which means that when many users are using your app, only a single user's request will be processed at a time. This is because many machine learning functions consume a significant amount of memory and so it is only suitable to have a single user using the demo at a time. However, you can change this parameter in your demo easily.

+

+See the [docs on queueing](/docs/gradio/interface#interface-queue) for more details on configuring the queuing parameters.

+

+You can see analytics on the number and status of all requests processed by the queue by visiting the `/monitoring` endpoint of your app. This endpoint will print a secret URL to your console that links to the full analytics dashboard.

\ No newline at end of file

diff --git a/3 Additional Features/02_streaming-outputs.md b/3 Additional Features/02_streaming-outputs.md

new file mode 100644

index 0000000000000000000000000000000000000000..1bae6fb449cd8d7a37b9fc0ca15c660e8e60fb4e

--- /dev/null

+++ b/3 Additional Features/02_streaming-outputs.md

@@ -0,0 +1,21 @@

+

+# Streaming outputs

+

+In some cases, you may want to stream a sequence of outputs rather than show a single output at once. For example, you might have an image generation model and you want to show the image that is generated at each step, leading up to the final image. Or you might have a chatbot which streams its response one token at a time instead of returning it all at once.

+

+In such cases, you can supply a **generator** function into Gradio instead of a regular function. Creating generators in Python is very simple: instead of a single `return` value, a function should `yield` a series of values instead. Usually the `yield` statement is put in some kind of loop. Here's an example of an generator that simply counts up to a given number:

+

+```python

+def my_generator(x):

+ for i in range(x):

+ yield i

+```

+

+You supply a generator into Gradio the same way as you would a regular function. For example, here's a a (fake) image generation model that generates noise for several steps before outputting an image using the `gr.Interface` class:

+

+$code_fake_diffusion

+$demo_fake_diffusion

+

+Note that we've added a `time.sleep(1)` in the iterator to create an artificial pause between steps so that you are able to observe the steps of the iterator (in a real image generation model, this probably wouldn't be necessary).

+

+Similarly, Gradio can handle streaming inputs, e.g. an image generation model that reruns every time a user types a letter in a textbox. This is covered in more details in our guide on building [reactive Interfaces](/guides/reactive-interfaces).

diff --git a/3 Additional Features/03_alerts.md b/3 Additional Features/03_alerts.md

new file mode 100644

index 0000000000000000000000000000000000000000..3641b9f9442781fbeddbbd3b585a6e7c227b37b9

--- /dev/null

+++ b/3 Additional Features/03_alerts.md

@@ -0,0 +1,19 @@

+

+# Alerts

+

+You may wish to display alerts to the user. To do so, raise a `gr.Error("custom message")` in your function to halt the execution of your function and display an error message to the user.

+

+Alternatively, can issue `gr.Warning("custom message")` or `gr.Info("custom message")` by having them as standalone lines in your function, which will immediately display modals while continuing the execution of your function. The only difference between `gr.Info()` and `gr.Warning()` is the color of the alert.

+

+```python

+def start_process(name):

+ gr.Info("Starting process")

+ if name is None:

+ gr.Warning("Name is empty")

+ ...

+ if success == False:

+ raise gr.Error("Process failed")

+```

+

+Tip: Note that `gr.Error()` is an exception that has to be raised, while `gr.Warning()` and `gr.Info()` are functions that are called directly.

+

diff --git a/3 Additional Features/04_styling.md b/3 Additional Features/04_styling.md

new file mode 100644

index 0000000000000000000000000000000000000000..c737ed1b538750874bb35dc70c8dd99f6ef0647c

--- /dev/null

+++ b/3 Additional Features/04_styling.md

@@ -0,0 +1,13 @@

+

+# Styling

+

+Gradio themes are the easiest way to customize the look and feel of your app. You can choose from a variety of themes, or create your own. To do so, pass the `theme=` kwarg to the `Interface` constructor. For example:

+

+```python

+demo = gr.Interface(..., theme=gr.themes.Monochrome())

+```

+

+Gradio comes with a set of prebuilt themes which you can load from `gr.themes.*`. You can extend these themes or create your own themes from scratch - see the [theming guide](https://gradio.app/guides/theming-guide) for more details.

+

+For additional styling ability, you can pass any CSS (as well as custom JavaScript) to your Gradio application. This is discussed in more detail in our [custom JS and CSS guide](/guides/custom-CSS-and-JS).

+

diff --git a/3 Additional Features/05_progress-bars.md b/3 Additional Features/05_progress-bars.md

new file mode 100644

index 0000000000000000000000000000000000000000..817366f64a4ec976024ef94aa4129ae62913033a

--- /dev/null

+++ b/3 Additional Features/05_progress-bars.md

@@ -0,0 +1,9 @@

+

+# Progress Bars

+

+Gradio supports the ability to create custom Progress Bars so that you have customizability and control over the progress update that you show to the user. In order to enable this, simply add an argument to your method that has a default value of a `gr.Progress` instance. Then you can update the progress levels by calling this instance directly with a float between 0 and 1, or using the `tqdm()` method of the `Progress` instance to track progress over an iterable, as shown below.

+

+$code_progress_simple

+$demo_progress_simple

+

+If you use the `tqdm` library, you can even report progress updates automatically from any `tqdm.tqdm` that already exists within your function by setting the default argument as `gr.Progress(track_tqdm=True)`!

diff --git a/3 Additional Features/06_batch-functions.md b/3 Additional Features/06_batch-functions.md

new file mode 100644

index 0000000000000000000000000000000000000000..9799c3c784277a9a5509b4995c30df29c7a635ee

--- /dev/null

+++ b/3 Additional Features/06_batch-functions.md

@@ -0,0 +1,60 @@

+

+# Batch functions

+

+Gradio supports the ability to pass _batch_ functions. Batch functions are just

+functions which take in a list of inputs and return a list of predictions.

+

+For example, here is a batched function that takes in two lists of inputs (a list of

+words and a list of ints), and returns a list of trimmed words as output:

+

+```py

+import time

+

+def trim_words(words, lens):

+ trimmed_words = []

+ time.sleep(5)

+ for w, l in zip(words, lens):

+ trimmed_words.append(w[:int(l)])

+ return [trimmed_words]

+```

+

+The advantage of using batched functions is that if you enable queuing, the Gradio server can automatically _batch_ incoming requests and process them in parallel,

+potentially speeding up your demo. Here's what the Gradio code looks like (notice the `batch=True` and `max_batch_size=16`)

+

+With the `gr.Interface` class:

+

+```python

+demo = gr.Interface(

+ fn=trim_words,

+ inputs=["textbox", "number"],

+ outputs=["output"],

+ batch=True,

+ max_batch_size=16

+)

+

+demo.launch()

+```

+

+With the `gr.Blocks` class:

+

+```py

+import gradio as gr

+

+with gr.Blocks() as demo:

+ with gr.Row():

+ word = gr.Textbox(label="word")

+ leng = gr.Number(label="leng")

+ output = gr.Textbox(label="Output")

+ with gr.Row():

+ run = gr.Button()

+

+ event = run.click(trim_words, [word, leng], output, batch=True, max_batch_size=16)

+

+demo.launch()

+```

+

+In the example above, 16 requests could be processed in parallel (for a total inference time of 5 seconds), instead of each request being processed separately (for a total

+inference time of 80 seconds). Many Hugging Face `transformers` and `diffusers` models work very naturally with Gradio's batch mode: here's [an example demo using diffusers to

+generate images in batches](https://github.com/gradio-app/gradio/blob/main/demo/diffusers_with_batching/run.py)

+

+

diff --git a/3 Additional Features/07_resource-cleanup.md b/3 Additional Features/07_resource-cleanup.md

new file mode 100644

index 0000000000000000000000000000000000000000..fa7b9f7555631f5d6816f04171fba2c537a9b80c

--- /dev/null

+++ b/3 Additional Features/07_resource-cleanup.md

@@ -0,0 +1,49 @@

+

+# Resource Cleanup

+

+Your Gradio application may create resources during its lifetime.

+Examples of resources are `gr.State` variables, any variables you create and explicitly hold in memory, or files you save to disk.

+Over time, these resources can use up all of your server's RAM or disk space and crash your application.

+

+Gradio provides some tools for you to clean up the resources created by your app:

+

+1. Automatic deletion of `gr.State` variables.

+2. Automatic cache cleanup with the `delete_cache` parameter.

+2. The `Blocks.unload` event.

+

+Let's take a look at each of them individually.

+

+## Automatic deletion of `gr.State`

+

+When a user closes their browser tab, Gradio will automatically delete any `gr.State` variables associated with that user session after 60 minutes. If the user connects again within those 60 minutes, no state will be deleted.

+

+You can control the deletion behavior further with the following two parameters of `gr.State`:

+

+1. `delete_callback` - An arbitrary function that will be called when the variable is deleted. This function must take the state value as input. This function is useful for deleting variables from GPU memory.

+2. `time_to_live` - The number of seconds the state should be stored for after it is created or updated. This will delete variables before the session is closed, so it's useful for clearing state for potentially long running sessions.

+

+## Automatic cache cleanup via `delete_cache`

+

+Your Gradio application will save uploaded and generated files to a special directory called the cache directory. Gradio uses a hashing scheme to ensure that duplicate files are not saved to the cache but over time the size of the cache will grow (especially if your app goes viral 😉).

+

+Gradio can periodically clean up the cache for you if you specify the `delete_cache` parameter of `gr.Blocks()`, `gr.Interface()`, or `gr.ChatInterface()`.

+This parameter is a tuple of the form `[frequency, age]` both expressed in number of seconds.

+Every `frequency` seconds, the temporary files created by this Blocks instance will be deleted if more than `age` seconds have passed since the file was created.

+For example, setting this to (86400, 86400) will delete temporary files every day if they are older than a day old.

+Additionally, the cache will be deleted entirely when the server restarts.

+

+## The `unload` event

+

+Additionally, Gradio now includes a `Blocks.unload()` event, allowing you to run arbitrary cleanup functions when users disconnect (this does not have a 60 minute delay).

+Unlike other gradio events, this event does not accept inputs or outptus.

+You can think of the `unload` event as the opposite of the `load` event.

+

+## Putting it all together

+

+The following demo uses all of these features. When a user visits the page, a special unique directory is created for that user.

+As the user interacts with the app, images are saved to disk in that special directory.

+When the user closes the page, the images created in that session are deleted via the `unload` event.

+The state and files in the cache are cleaned up automatically as well.

+

+$code_state_cleanup

+$demo_state_cleanup

\ No newline at end of file

diff --git a/3 Additional Features/08_environment-variables.md b/3 Additional Features/08_environment-variables.md

new file mode 100644

index 0000000000000000000000000000000000000000..5f7f53f303b243e6a525755c840400dbf91e369e

--- /dev/null

+++ b/3 Additional Features/08_environment-variables.md

@@ -0,0 +1,118 @@

+

+# Environment Variables

+

+Environment variables in Gradio provide a way to customize your applications and launch settings without changing the codebase. In this guide, we'll explore the key environment variables supported in Gradio and how to set them.

+

+## Key Environment Variables

+

+### 1. `GRADIO_SERVER_PORT`

+

+- **Description**: Specifies the port on which the Gradio app will run.

+- **Default**: `7860`

+- **Example**:

+ ```bash

+ export GRADIO_SERVER_PORT=8000

+ ```

+

+### 2. `GRADIO_SERVER_NAME`

+

+- **Description**: Defines the host name for the Gradio server. To make Gradio accessible from any IP address, set this to `"0.0.0.0"`

+- **Default**: `"127.0.0.1"`

+- **Example**:

+ ```bash

+ export GRADIO_SERVER_NAME="0.0.0.0"

+ ```

+

+### 3. `GRADIO_ANALYTICS_ENABLED`

+

+- **Description**: Whether Gradio should provide

+- **Default**: `"True"`

+- **Options**: `"True"`, `"False"`

+- **Example**:

+ ```sh

+ export GRADIO_ANALYTICS_ENABLED="True"

+ ```

+

+### 4. `GRADIO_DEBUG`

+

+- **Description**: Enables or disables debug mode in Gradio. If debug mode is enabled, the main thread does not terminate allowing error messages to be printed in environments such as Google Colab.

+- **Default**: `0`

+- **Example**:

+ ```sh

+ export GRADIO_DEBUG=1

+ ```

+

+### 5. `GRADIO_ALLOW_FLAGGING`

+

+- **Description**: Controls whether users can flag inputs/outputs in the Gradio interface. See [the Guide on flagging](/guides/using-flagging) for more details.

+- **Default**: `"manual"`

+- **Options**: `"never"`, `"manual"`, `"auto"`

+- **Example**:

+ ```sh

+ export GRADIO_ALLOW_FLAGGING="never"

+ ```

+

+### 6. `GRADIO_TEMP_DIR`

+

+- **Description**: Specifies the directory where temporary files created by Gradio are stored.

+- **Default**: System default temporary directory

+- **Example**:

+ ```sh

+ export GRADIO_TEMP_DIR="/path/to/temp"

+ ```

+

+### 7. `GRADIO_ROOT_PATH`

+

+- **Description**: Sets the root path for the Gradio application. Useful if running Gradio [behind a reverse proxy](/guides/running-gradio-on-your-web-server-with-nginx).

+- **Default**: `""`

+- **Example**:

+ ```sh

+ export GRADIO_ROOT_PATH="/myapp"

+ ```

+

+### 8. `GRADIO_SHARE`

+

+- **Description**: Enables or disables sharing the Gradio app.

+- **Default**: `"False"`

+- **Options**: `"True"`, `"False"`

+- **Example**:

+ ```sh

+ export GRADIO_SHARE="True"

+ ```

+

+### 9. `GRADIO_ALLOWED_PATHS`

+

+- **Description**: Sets a list of complete filepaths or parent directories that gradio is allowed to serve. Must be absolute paths. Warning: if you provide directories, any files in these directories or their subdirectories are accessible to all users of your app. Multiple items can be specified by separating items with commas.

+- **Default**: `""`

+- **Example**:

+ ```sh

+ export GRADIO_ALLOWED_PATHS="/mnt/sda1,/mnt/sda2"

+ ```

+

+### 10. `GRADIO_BLOCKED_PATHS`

+

+- **Description**: Sets a list of complete filepaths or parent directories that gradio is not allowed to serve (i.e. users of your app are not allowed to access). Must be absolute paths. Warning: takes precedence over `allowed_paths` and all other directories exposed by Gradio by default. Multiple items can be specified by separating items with commas.

+- **Default**: `""`

+- **Example**:

+ ```sh

+ export GRADIO_BLOCKED_PATHS="/users/x/gradio_app/admin,/users/x/gradio_app/keys"

+ ```

+

+

+## How to Set Environment Variables

+

+To set environment variables in your terminal, use the `export` command followed by the variable name and its value. For example:

+

+```sh

+export GRADIO_SERVER_PORT=8000

+```

+

+If you're using a `.env` file to manage your environment variables, you can add them like this:

+

+```sh

+GRADIO_SERVER_PORT=8000

+GRADIO_SERVER_NAME="localhost"

+```

+

+Then, use a tool like `dotenv` to load these variables when running your application.

+

diff --git a/3 Additional Features/09_sharing-your-app.md b/3 Additional Features/09_sharing-your-app.md

new file mode 100644

index 0000000000000000000000000000000000000000..45f4ca32d826fff5d083aab369e7fc436bc8029d

--- /dev/null

+++ b/3 Additional Features/09_sharing-your-app.md

@@ -0,0 +1,482 @@

+

+# Sharing Your App

+

+In this Guide, we dive more deeply into the various aspects of sharing a Gradio app with others. We will cover:

+

+1. [Sharing demos with the share parameter](#sharing-demos)

+2. [Hosting on HF Spaces](#hosting-on-hf-spaces)

+3. [Embedding hosted spaces](#embedding-hosted-spaces)

+4. [Using the API page](#api-page)

+5. [Accessing network requests](#accessing-the-network-request-directly)

+6. [Mounting within FastAPI](#mounting-within-another-fast-api-app)

+7. [Authentication](#authentication)

+8. [Security and file access](#security-and-file-access)

+9. [Analytics](#analytics)

+

+## Sharing Demos

+

+Gradio demos can be easily shared publicly by setting `share=True` in the `launch()` method. Like this:

+

+```python

+import gradio as gr

+

+def greet(name):

+ return "Hello " + name + "!"

+

+demo = gr.Interface(fn=greet, inputs="textbox", outputs="textbox")

+

+demo.launch(share=True) # Share your demo with just 1 extra parameter 🚀

+```

+

+This generates a public, shareable link that you can send to anybody! When you send this link, the user on the other side can try out the model in their browser. Because the processing happens on your device (as long as your device stays on), you don't have to worry about any packaging any dependencies.

+

+

+

+

+A share link usually looks something like this: **https://07ff8706ab.gradio.live**. Although the link is served through the Gradio Share Servers, these servers are only a proxy for your local server, and do not store any data sent through your app. Share links expire after 72 hours. (it is [also possible to set up your own Share Server](https://github.com/huggingface/frp/) on your own cloud server to overcome this restriction.)

+

+Tip: Keep in mind that share links are publicly accessible, meaning that anyone can use your model for prediction! Therefore, make sure not to expose any sensitive information through the functions you write, or allow any critical changes to occur on your device. Or you can [add authentication to your Gradio app](#authentication) as discussed below.

+

+Note that by default, `share=False`, which means that your server is only running locally. (This is the default, except in Google Colab notebooks, where share links are automatically created). As an alternative to using share links, you can use use [SSH port-forwarding](https://www.ssh.com/ssh/tunneling/example) to share your local server with specific users.

+

+

+## Hosting on HF Spaces

+

+If you'd like to have a permanent link to your Gradio demo on the internet, use Hugging Face Spaces. [Hugging Face Spaces](http://huggingface.co/spaces/) provides the infrastructure to permanently host your machine learning model for free!

+

+After you have [created a free Hugging Face account](https://huggingface.co/join), you have two methods to deploy your Gradio app to Hugging Face Spaces:

+

+1. From terminal: run `gradio deploy` in your app directory. The CLI will gather some basic metadata and then launch your app. To update your space, you can re-run this command or enable the Github Actions option to automatically update the Spaces on `git push`.

+

+2. From your browser: Drag and drop a folder containing your Gradio model and all related files [here](https://huggingface.co/new-space). See [this guide how to host on Hugging Face Spaces](https://huggingface.co/blog/gradio-spaces) for more information, or watch the embedded video:

+

+

+

+

+## Embedding Hosted Spaces

+

+Once you have hosted your app on Hugging Face Spaces (or on your own server), you may want to embed the demo on a different website, such as your blog or your portfolio. Embedding an interactive demo allows people to try out the machine learning model that you have built, without needing to download or install anything — right in their browser! The best part is that you can embed interactive demos even in static websites, such as GitHub pages.

+

+There are two ways to embed your Gradio demos. You can find quick links to both options directly on the Hugging Face Space page, in the "Embed this Space" dropdown option:

+

+

+

+### Embedding with Web Components

+

+Web components typically offer a better experience to users than IFrames. Web components load lazily, meaning that they won't slow down the loading time of your website, and they automatically adjust their height based on the size of the Gradio app.

+

+To embed with Web Components:

+

+1. Import the gradio JS library into into your site by adding the script below in your site (replace {GRADIO_VERSION} in the URL with the library version of Gradio you are using).

+

+```html

+

+```

+

+2. Add

+

+```html

+

+```

+

+element where you want to place the app. Set the `src=` attribute to your Space's embed URL, which you can find in the "Embed this Space" button. For example:

+

+```html

+

+```

+

+

+

+You can see examples of how web components look on the Gradio landing page.

+

+You can also customize the appearance and behavior of your web component with attributes that you pass into the `` tag:

+

+- `src`: as we've seen, the `src` attributes links to the URL of the hosted Gradio demo that you would like to embed

+- `space`: an optional shorthand if your Gradio demo is hosted on Hugging Face Space. Accepts a `username/space_name` instead of a full URL. Example: `gradio/Echocardiogram-Segmentation`. If this attribute attribute is provided, then `src` does not need to be provided.

+- `control_page_title`: a boolean designating whether the html title of the page should be set to the title of the Gradio app (by default `"false"`)

+- `initial_height`: the initial height of the web component while it is loading the Gradio app, (by default `"300px"`). Note that the final height is set based on the size of the Gradio app.

+- `container`: whether to show the border frame and information about where the Space is hosted (by default `"true"`)

+- `info`: whether to show just the information about where the Space is hosted underneath the embedded app (by default `"true"`)

+- `autoscroll`: whether to autoscroll to the output when prediction has finished (by default `"false"`)

+- `eager`: whether to load the Gradio app as soon as the page loads (by default `"false"`)

+- `theme_mode`: whether to use the `dark`, `light`, or default `system` theme mode (by default `"system"`)

+- `render`: an event that is triggered once the embedded space has finished rendering.

+

+Here's an example of how to use these attributes to create a Gradio app that does not lazy load and has an initial height of 0px.

+

+```html

+

+```

+

+Here's another example of how to use the `render` event. An event listener is used to capture the `render` event and will call the `handleLoadComplete()` function once rendering is complete.

+

+```html

+

+```

+

+_Note: While Gradio's CSS will never impact the embedding page, the embedding page can affect the style of the embedded Gradio app. Make sure that any CSS in the parent page isn't so general that it could also apply to the embedded Gradio app and cause the styling to break. Element selectors such as `header { ... }` and `footer { ... }` will be the most likely to cause issues._

+

+### Embedding with IFrames

+

+To embed with IFrames instead (if you cannot add javascript to your website, for example), add this element:

+

+```html

+

+```

+

+Again, you can find the `src=` attribute to your Space's embed URL, which you can find in the "Embed this Space" button.

+

+Note: if you use IFrames, you'll probably want to add a fixed `height` attribute and set `style="border:0;"` to remove the boreder. In addition, if your app requires permissions such as access to the webcam or the microphone, you'll need to provide that as well using the `allow` attribute.

+

+## API Page

+

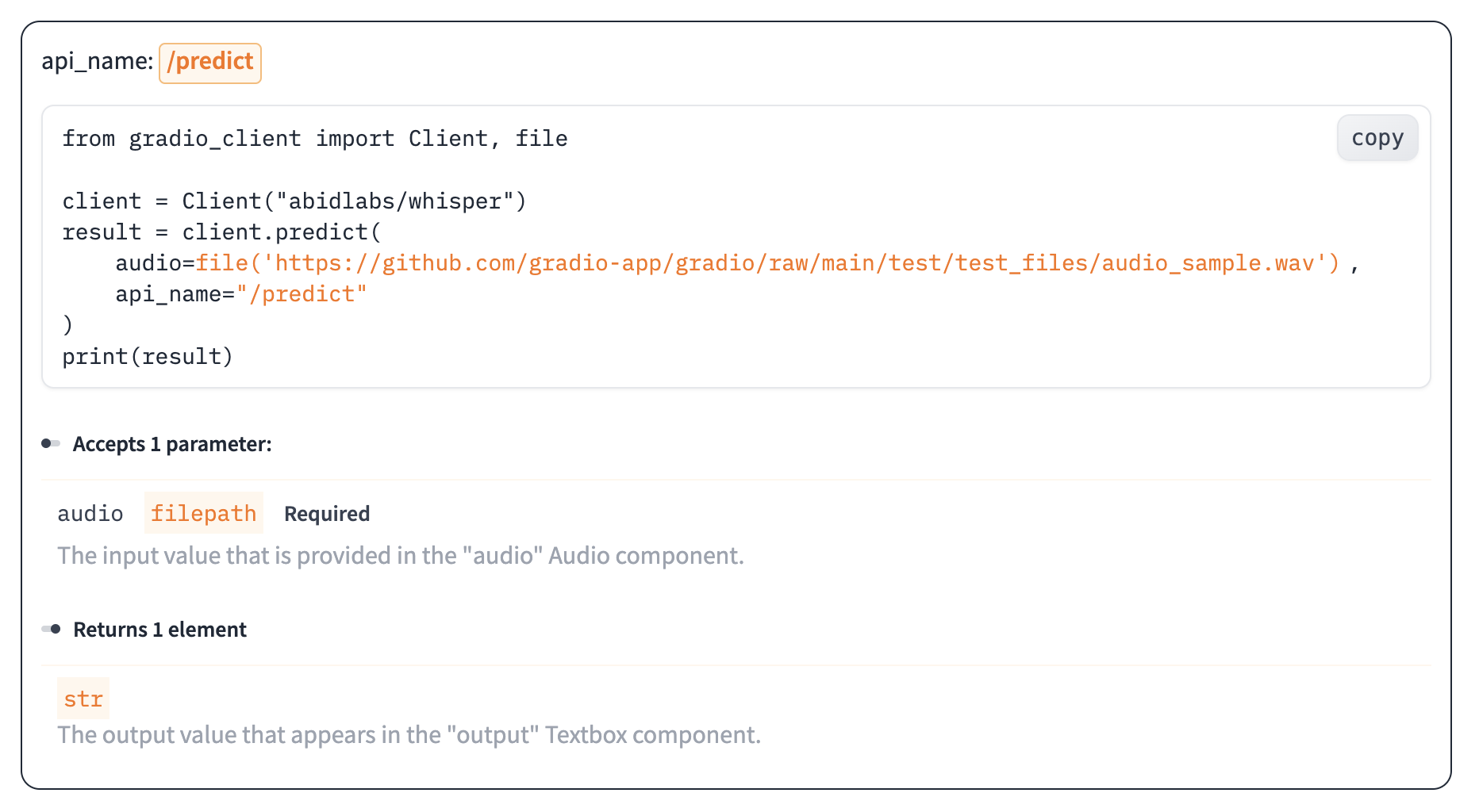

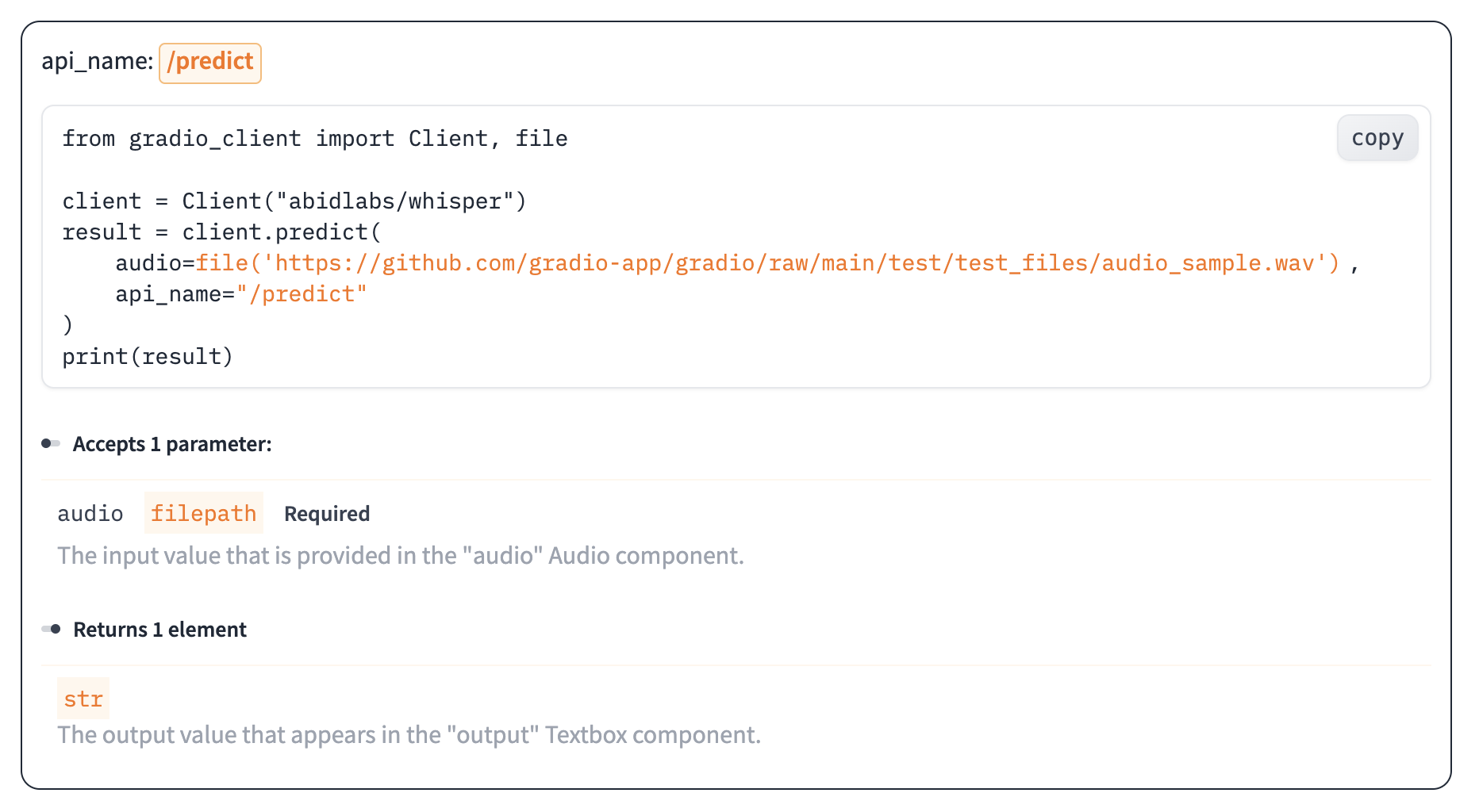

+You can use almost any Gradio app as an API! In the footer of a Gradio app [like this one](https://huggingface.co/spaces/gradio/hello_world), you'll see a "Use via API" link.

+

+

+

+This is a page that lists the endpoints that can be used to query the Gradio app, via our supported clients: either [the Python client](https://gradio.app/guides/getting-started-with-the-python-client/), or [the JavaScript client](https://gradio.app/guides/getting-started-with-the-js-client/). For each endpoint, Gradio automatically generates the parameters and their types, as well as example inputs, like this.

+

+

+

+The endpoints are automatically created when you launch a Gradio `Interface`. If you are using Gradio `Blocks`, you can also set up a Gradio API page, though we recommend that you explicitly name each event listener, such as

+

+```python

+btn.click(add, [num1, num2], output, api_name="addition")

+```

+

+This will add and document the endpoint `/api/addition/` to the automatically generated API page. Otherwise, your API endpoints will appear as "unnamed" endpoints.

+

+## Accessing the Network Request Directly

+

+When a user makes a prediction to your app, you may need the underlying network request, in order to get the request headers (e.g. for advanced authentication), log the client's IP address, getting the query parameters, or for other reasons. Gradio supports this in a similar manner to FastAPI: simply add a function parameter whose type hint is `gr.Request` and Gradio will pass in the network request as that parameter. Here is an example:

+

+```python

+import gradio as gr

+

+def echo(text, request: gr.Request):

+ if request:

+ print("Request headers dictionary:", request.headers)

+ print("IP address:", request.client.host)

+ print("Query parameters:", dict(request.query_params))

+ return text

+

+io = gr.Interface(echo, "textbox", "textbox").launch()

+```

+

+Note: if your function is called directly instead of through the UI (this happens, for

+example, when examples are cached, or when the Gradio app is called via API), then `request` will be `None`.

+You should handle this case explicitly to ensure that your app does not throw any errors. That is why

+we have the explicit check `if request`.

+

+## Mounting Within Another FastAPI App

+

+In some cases, you might have an existing FastAPI app, and you'd like to add a path for a Gradio demo.

+You can easily do this with `gradio.mount_gradio_app()`.

+

+Here's a complete example:

+

+$code_custom_path

+

+Note that this approach also allows you run your Gradio apps on custom paths (`http://localhost:8000/gradio` in the example above).

+

+

+## Authentication

+

+### Password-protected app

+

+You may wish to put an authentication page in front of your app to limit who can open your app. With the `auth=` keyword argument in the `launch()` method, you can provide a tuple with a username and password, or a list of acceptable username/password tuples; Here's an example that provides password-based authentication for a single user named "admin":

+

+```python

+demo.launch(auth=("admin", "pass1234"))

+```

+

+For more complex authentication handling, you can even pass a function that takes a username and password as arguments, and returns `True` to allow access, `False` otherwise.

+

+Here's an example of a function that accepts any login where the username and password are the same:

+

+```python

+def same_auth(username, password):

+ return username == password

+demo.launch(auth=same_auth)

+```

+

+If you have multiple users, you may wish to customize the content that is shown depending on the user that is logged in. You can retrieve the logged in user by [accessing the network request directly](#accessing-the-network-request-directly) as discussed above, and then reading the `.username` attribute of the request. Here's an example:

+

+

+```python

+import gradio as gr

+

+def update_message(request: gr.Request):

+ return f"Welcome, {request.username}"

+

+with gr.Blocks() as demo:

+ m = gr.Markdown()

+ demo.load(update_message, None, m)

+

+demo.launch(auth=[("Abubakar", "Abubakar"), ("Ali", "Ali")])

+```

+

+Note: For authentication to work properly, third party cookies must be enabled in your browser. This is not the case by default for Safari or for Chrome Incognito Mode.

+

+If users visit the `/logout` page of your Gradio app, they will automatically be logged out and session cookies deleted. This allows you to add logout functionality to your Gradio app as well. Let's update the previous example to include a log out button:

+

+```python

+import gradio as gr

+

+def update_message(request: gr.Request):

+ return f"Welcome, {request.username}"

+

+with gr.Blocks() as demo:

+ m = gr.Markdown()

+ logout_button = gr.Button("Logout", link="/logout")

+ demo.load(update_message, None, m)

+

+demo.launch(auth=[("Pete", "Pete"), ("Dawood", "Dawood")])

+```

+

+Note: Gradio's built-in authentication provides a straightforward and basic layer of access control but does not offer robust security features for applications that require stringent access controls (e.g. multi-factor authentication, rate limiting, or automatic lockout policies).

+

+### OAuth (Login via Hugging Face)

+

+Gradio natively supports OAuth login via Hugging Face. In other words, you can easily add a _"Sign in with Hugging Face"_ button to your demo, which allows you to get a user's HF username as well as other information from their HF profile. Check out [this Space](https://huggingface.co/spaces/Wauplin/gradio-oauth-demo) for a live demo.

+

+To enable OAuth, you must set `hf_oauth: true` as a Space metadata in your README.md file. This will register your Space

+as an OAuth application on Hugging Face. Next, you can use `gr.LoginButton` to add a login button to

+your Gradio app. Once a user is logged in with their HF account, you can retrieve their profile by adding a parameter of type

+`gr.OAuthProfile` to any Gradio function. The user profile will be automatically injected as a parameter value. If you want

+to perform actions on behalf of the user (e.g. list user's private repos, create repo, etc.), you can retrieve the user

+token by adding a parameter of type `gr.OAuthToken`. You must define which scopes you will use in your Space metadata

+(see [documentation](https://huggingface.co/docs/hub/spaces-oauth#scopes) for more details).

+

+Here is a short example:

+

+```py

+import gradio as gr

+from huggingface_hub import whoami

+

+def hello(profile: gr.OAuthProfile | None) -> str:

+ if profile is None:

+ return "I don't know you."