---

language: en

tags:

- science

- multi-disciplinary

license: apache-2.0

---

# ScholarBERT_1 Model

This is the **ScholarBERT_1** variant of the ScholarBERT model family.

The model is pretrained on a large collection of scientific research articles (**2.2B tokens**).

This is a **cased** (case-sensitive) model. By default, the tokenizer does not convert inputs to lowercase.

The model is based on the same architecture as [BERT-large](https://huggingface.co/bert-large-cased) and has a total of 340M parameters.
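Because the model shares the BERT-large architecture, it can presumably be used through the standard `transformers` fill-mask pipeline. The following is a minimal sketch, not an official usage recipe: the Hub repository id `globuslabs/ScholarBERT_1` and the `[MASK]` token are assumptions not stated in this card, and `load_fill_mask` and `top_predictions` are hypothetical helper names introduced for illustration.

```python
from typing import Callable, List, Tuple

# Assumed Hub repository id for this checkpoint; replace it if the model lives elsewhere.
MODEL_ID = "globuslabs/ScholarBERT_1"


def load_fill_mask(model_id: str = MODEL_ID):
    """Build a fill-mask pipeline (downloads the weights on first use)."""
    # Imported lazily so that the helper below has no hard dependency on transformers.
    from transformers import pipeline

    return pipeline("fill-mask", model=model_id)


def top_predictions(fill_mask: Callable, text: str, k: int = 5) -> List[Tuple[str, float]]:
    """Return (token, score) pairs for the single masked position in `text`."""
    results = fill_mask(text, top_k=k)
    return [(r["token_str"], float(r["score"])) for r in results]


# Example (requires network access and the `transformers` package):
# fill_mask = load_fill_mask()
# print(top_predictions(fill_mask, "The enzyme catalyzes the [MASK] of ATP."))
```

Since the model is cased, inputs should be passed with their original capitalization rather than lowercased beforehand.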

# Model Architecture

| Hyperparameter | Value |
|-----------------|:-------:|
| Layers | 24 |
| Hidden Size | 1024 |
| Attention Heads | 16 |
| Total Parameters | 340M |
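As a rough sanity check, the hyperparameters above can be turned into an approximate parameter count. The sketch below assumes several values this card does not state: the vocabulary size (28,996, borrowed from `bert-large-cased` as a placeholder), 512 position embeddings, 2 token types, and a feed-forward width of 4× the hidden size, as in standard BERT-large. The function name `bert_param_count` is hypothetical.

```python
def bert_param_count(
    layers: int = 24,
    hidden: int = 1024,
    vocab_size: int = 28996,       # assumption: bert-large-cased vocabulary size
    max_positions: int = 512,      # assumption: standard BERT value
    type_vocab_size: int = 2,      # assumption: standard BERT value
    ffn_multiplier: int = 4,       # assumption: intermediate size = 4 * hidden
) -> int:
    """Count the weights of a BERT encoder with a pooler (no task head)."""
    layer_norm = 2 * hidden  # scale + bias

    # Token, position, and segment embeddings, followed by a LayerNorm.
    embeddings = (vocab_size + max_positions + type_vocab_size) * hidden + layer_norm

    # Self-attention: query, key, value, and output projections, each with a bias.
    attention = 4 * (hidden * hidden + hidden) + layer_norm

    # Feed-forward block: up-projection, down-projection, and a LayerNorm.
    inner = ffn_multiplier * hidden
    feed_forward = (hidden * inner + inner) + (inner * hidden + hidden) + layer_norm

    # Pooler: one dense layer applied to the first token's hidden state.
    pooler = hidden * hidden + hidden

    return embeddings + layers * (attention + feed_forward) + pooler


print(f"{bert_param_count():,} parameters")
```

Under these assumptions the count comes to roughly 334M, in line with the approximately 340M figure commonly quoted for BERT-large. The attention head count does not change the total, since the heads partition the same projection matrices.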

# Training Dataset

The vocabulary and the model are pretrained on **100% of the PRD** scientific literature dataset.

The PRD dataset is provided by Public.Resource.Org, Inc. (“Public Resource”), a nonprofit organization based in California. This dataset was constructed from a corpus of journal article files, from which we successfully extracted text from 75,496,055 articles in 178,928 journals.

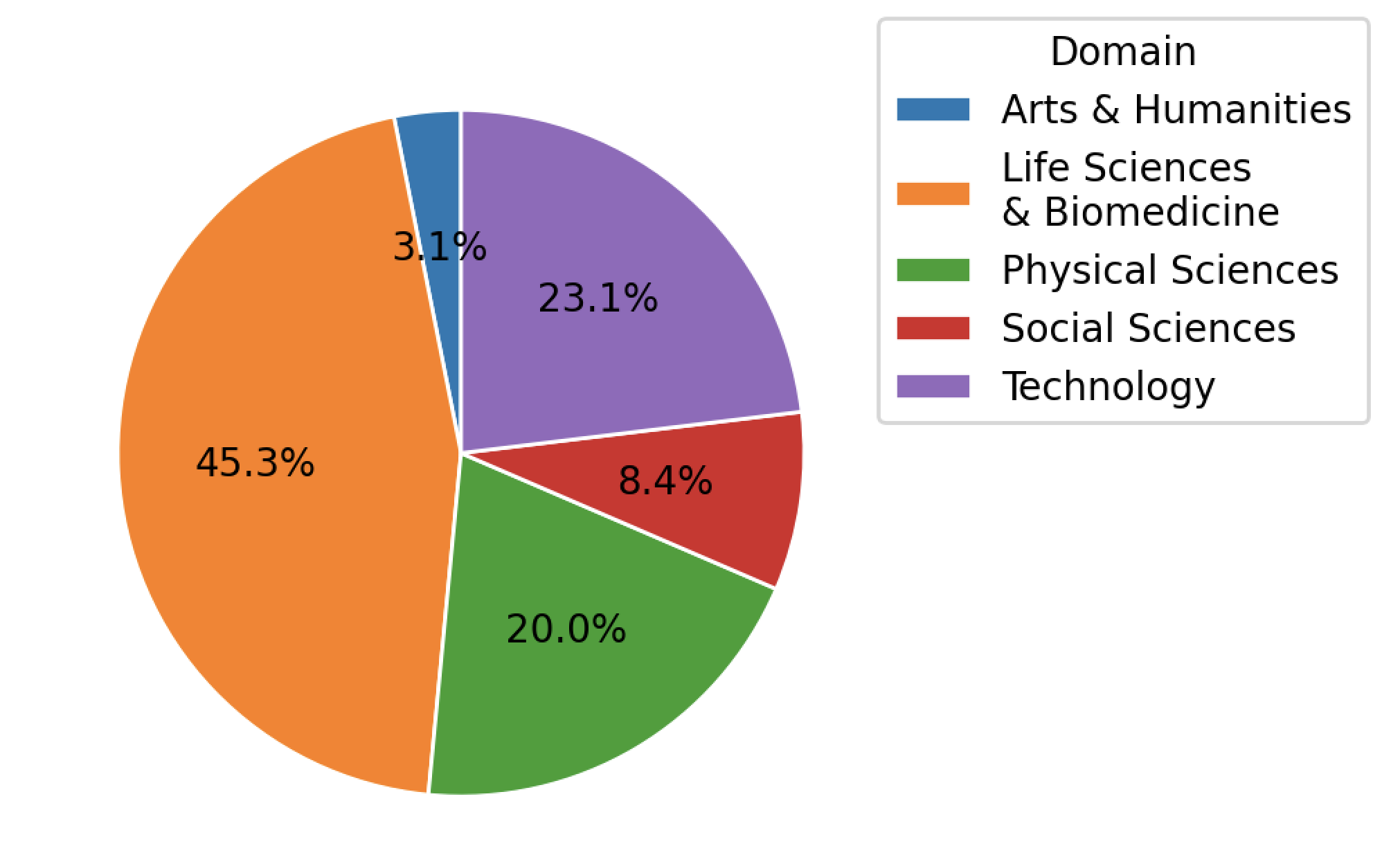

The articles span across Arts & Humanities, Life Sciences & Biomedicine, Physical Sciences,

Social Sciences, and Technology. The distribution of articles is shown below.

# BibTeX entry and citation info

If using this model, please cite this paper:

[To be added]