Update README.md

README.md

CHANGED

|

@@ -24,11 +24,14 @@ metrics:

|

|

| 24 |

pipeline_tag: text-generation

|

| 25 |

---

|

| 26 |

|

| 27 |

-

Vimarckoso is a

|

| 28 |

|

| 29 |

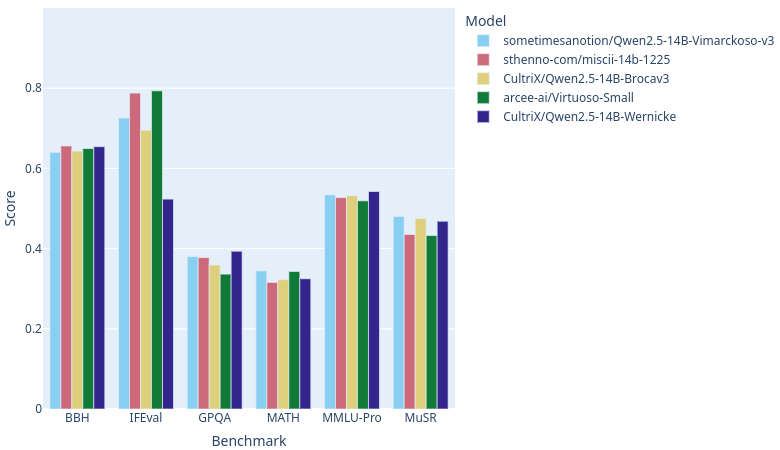

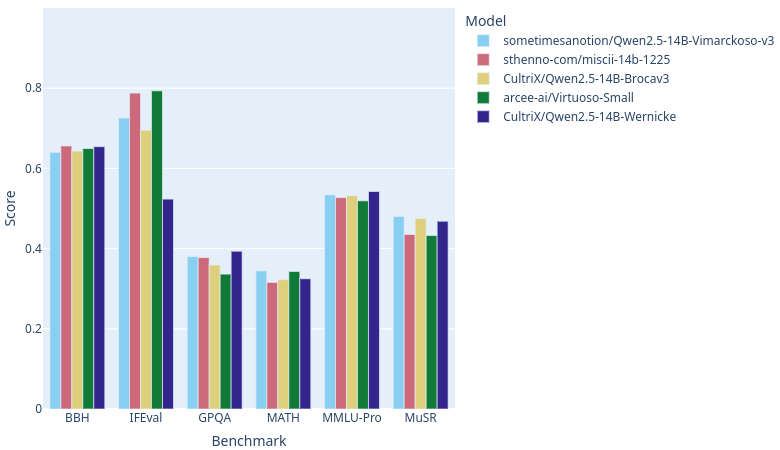

As of this writing, with [open-llm-leaderboard](https://huggingface.co/open-llm-leaderboard) catching up on rankings, Vimarckoso v3 should join Arcee AI's [Virtuoso-Small](https://huggingface.co/arcee-ai/Virtuoso-Small), Sthenno's [miscii-14b-1225](https://huggingface.co/sthenno-com/miscii-14b-1225) and CultriX's [Qwen2.5-14B-Brocav3](https://huggingface.co/CultriX/Qwen2.5-14B-Brocav3) at the top of the 14B-parameter LLM category on this site. As the recipe below will show, their models contribute strongly to Vimarckoso - CultriX's through a strong influence on Lamarck v0.3. Congratulations to everyone whose work went into this!

|

| 30 |

|

| 31 |

|

| 32 |

---

|

| 33 |

|

| 34 |

### Configuration

|

|

|

|

| 24 |

pipeline_tag: text-generation

|

| 25 |

---

|

| 26 |

|

| 27 |

+

Vimarckoso is a reasoning-focused part of the [Lamarck](https://huggingface.co/sometimesanotion/Lamarck-14B-v0.4-Qwenvergence) project. It began with a recipe based on [CultriX/Qwen2.5-14B-Wernicke](https://huggingface.co/CultriX/Qwen2.5-14B-Wernicke), and then I set out to fix the initial version's instruction following without any great loss to reasoning. The results surpassed my expectations.

|

| 28 |

|

| 29 |

As of this writing, with [open-llm-leaderboard](https://huggingface.co/open-llm-leaderboard) catching up on rankings, Vimarckoso v3 should join Arcee AI's [Virtuoso-Small](https://huggingface.co/arcee-ai/Virtuoso-Small), Sthenno's [miscii-14b-1225](https://huggingface.co/sthenno-com/miscii-14b-1225) and CultriX's [Qwen2.5-14B-Brocav3](https://huggingface.co/CultriX/Qwen2.5-14B-Brocav3) at the top of the 14B-parameter LLM category on this site. As the recipe below will show, their models contribute strongly to Vimarckoso - CultriX's through a strong influence on Lamarck v0.3. Congratulations to everyone whose work went into this!

|

| 30 |

|

| 31 |

|

| 32 |

+

|

| 33 |

+

Wernicke and Vimarckoso both inherit very strong reasoning, and hence high GPQA and MuSR scores, from [EVA-UNIT-01/EVA-Qwen2.5-14B-v0.2](https://huggingface.co/EVA-UNIT-01/EVA-Qwen2.5-14B-v0.2). Prose quality gets a boost from the models blended into [Qwenvergence-14B-v6-Prose](https://huggingface.co/Qwenvergence-14B-v6-Prose), and instruction following is healed after the merges by LoRAs based on [huihui-ai/Qwen2.5-14B-Instruct-abliterated-v2](https://huggingface.co/huihui-ai/Qwen2.5-14B-Instruct-abliterated-v2).

|

| 34 |

+

|

| 35 |

---

|

| 36 |

|

| 37 |

### Configuration

|