
Initial Commit

- .DS_Store +0 -0

- README.md +4 -3

- app.py +79 -0

- data/label_map.pbtxt +8 -0

- requirements.txt +6 -0

- test_samples/.DS_Store +0 -0

- test_samples/gramophone_test.jpeg +0 -0

- test_samples/sample_gramophone.jpeg +0 -0

- test_samples/veena_test.jpeg +0 -0

.DS_Store

ADDED

Binary file (10.2 kB).

README.md

CHANGED

@@ -1,10 +1,11 @@
 ---
 title: 23A052W
 emoji: 🦀
-colorFrom:
-colorTo:
+colorFrom: indigo
+colorTo: red
+python_version: 3.8
 sdk: gradio
-sdk_version: 4.
+sdk_version: 4.0.2
 app_file: app.py
 pinned: false
 license: apache-2.0

app.py

ADDED

@@ -0,0 +1,79 @@
+import gradio as gr
+from huggingface_hub import snapshot_download
+import tensorflow as tf
+from PIL import Image
+import numpy as np
+from object_detection.utils import label_map_util
+from object_detection.utils import visualization_utils as viz_utils
+
+# Path to the label map
+PATH_TO_LABELS = 'data/label_map.pbtxt'
+category_index = label_map_util.create_category_index_from_labelmap(PATH_TO_LABELS, use_display_name=True)
+
+def pil_image_as_numpy_array(pilimg):
+    img_array = tf.keras.utils.img_to_array(pilimg)
+    img_array = np.expand_dims(img_array, axis=0)
+    return img_array
+
+def load_model(repo_id):
+    download_dir = snapshot_download(repo_id)
+    saved_model_dir = os.path.join(download_dir, "saved_model")
+    detection_model = tf.saved_model.load(saved_model_dir)
+    return detection_model
+
+def predict(pilimg):
+    image_np = pil_image_as_numpy_array(pilimg)
+    return predict_combined_models(image_np, detection_model1, detection_model2)
+
+def predict_combined_models(image_np, model1, model2):
+    # Process with first model
+    results1 = model1(image_np)
+    result1 = {key:value.numpy() for key,value in results1.items()}
+
+    # Process with second model
+    results2 = model2(image_np)
+    result2 = {key:value.numpy() for key,value in results2.items()}
+
+    # Visualization for model 1
+    image_np_with_detections = image_np.copy()
+    viz_utils.visualize_boxes_and_labels_on_image_array(
+        image_np_with_detections[0],
+        result1['detection_boxes'][0],
+        (result1['detection_classes'][0]).astype(int),
+        result1['detection_scores'][0],
+        category_index,
+        use_normalized_coordinates=True,
+        max_boxes_to_draw=200,
+        min_score_thresh=.60,
+        agnostic_mode=False,
+        line_thickness=2)
+
+    # Visualization for model 2 (can adjust styles to differentiate)
+    viz_utils.visualize_boxes_and_labels_on_image_array(
+        image_np_with_detections[0],
+        result2['detection_boxes'][0],
+        (result2['detection_classes'][0]).astype(int),
+        result2['detection_scores'][0],
+        category_index,
+        use_normalized_coordinates=True,
+        max_boxes_to_draw=200,
+        min_score_thresh=.60,
+        agnostic_mode=False,
+        line_thickness=2)
+
+    # Combine and return final image
+    result_pil_img = tf.keras.utils.array_to_img(image_np_with_detections[0])
+    return result_pil_img
+
+# Load your models
+REPO_ID1 = "dtyago/23a052w-iti107-assn2_tfodmodel"
+REPO_ID2 = "dtyago/23a052w-iti107-assn2_tfodmodel_run1"
+detection_model1 = load_model(REPO_ID1)
+detection_model2 = load_model(REPO_ID2)
+
+# Gradio interface
+gr.Interface(
+    fn=predict,
+    inputs=gr.Image(type="pil"),
+    outputs=gr.Image(type="pil")
+).launch(share=True)
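As committed, load_model in app.py calls os.path.join, but the file never imports os, so the module-level call load_model(REPO_ID1) would fail with NameError as soon as the Space starts. The fix is simply to add `import os` next to the other imports. The sketch below shows a corrected load_model under that assumption; the helper name saved_model_path is hypothetical, and the lazy imports inside load_model exist only so the snippet can be loaded without TensorFlow installed.

```python
import os

# Sketch of a corrected load_model for app.py. The substantive change is the
# module-level `import os` above. The hub and TensorFlow imports are done
# lazily here only so this snippet can be imported without those packages;
# in app.py they can stay at the top of the file.


def saved_model_path(download_dir):
    """Return the SavedModel directory inside a downloaded repo snapshot.

    Hypothetical helper, split out so the path logic can be checked alone.
    """
    return os.path.join(download_dir, "saved_model")


def load_model(repo_id):
    """Download a model repo from the Hugging Face Hub and load its SavedModel."""
    from huggingface_hub import snapshot_download
    import tensorflow as tf

    download_dir = snapshot_download(repo_id)
    return tf.saved_model.load(saved_model_path(download_dir))
```

This assumes, as app.py does, that each model repo stores its exported model in a top-level saved_model directory.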

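predict_combined_models draws both models' boxes onto the same image with identical styles, so the returned picture does not say which model produced which box. One possible complement is a text summary of both result dicts. The helpers below are a hypothetical sketch, not part of the commit; they assume the result layout that app.py already indexes (a leading batch axis of size 1, normalized [ymin, xmin, ymax, xmax] boxes, float class ids) and reuse its 0.60 score threshold.

```python
# Hypothetical helpers (not part of app.py): turn TF OD API result dicts into
# readable, per-model detection lists. Assumes batched outputs with a leading
# axis of size 1, boxes as normalized [ymin, xmin, ymax, xmax], and float
# class ids, matching how app.py indexes result1 and result2.


def summarize_detections(result, category_index, image_height, image_width,
                         model_name, min_score=0.60):
    """Return detections scoring above min_score, with pixel boxes and names."""
    boxes = result["detection_boxes"][0]
    classes = result["detection_classes"][0]
    scores = result["detection_scores"][0]
    detections = []
    for box, cls, score in zip(boxes, classes, scores):
        score = float(score)
        if score <= min_score:
            continue  # keep only scores above the threshold app.py uses
        class_id = int(cls)
        # Ids missing from the label map get a placeholder name
        name = category_index.get(class_id, {}).get("name", "N/A")
        ymin, xmin, ymax, xmax = (float(v) for v in box)
        # Scale normalized coordinates to (left, top, right, bottom) pixels
        pixel_box = (round(xmin * image_width), round(ymin * image_height),
                     round(xmax * image_width), round(ymax * image_height))
        detections.append({"model": model_name, "class": name,
                           "score": score, "box": pixel_box})
    return detections


def combine_detections(result1, result2, category_index, image_height,
                       image_width, min_score=0.60):
    """Merge both models' detections, tagged by source, highest score first."""
    combined = (
        summarize_detections(result1, category_index, image_height,
                             image_width, "model1", min_score)
        + summarize_detections(result2, category_index, image_height,
                               image_width, "model2", min_score)
    )
    return sorted(combined, key=lambda d: d["score"], reverse=True)
```

Inside predict_combined_models, the image height and width could be taken from image_np.shape[1] and image_np.shape[2], since pil_image_as_numpy_array returns an array of shape (1, H, W, 3).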

data/label_map.pbtxt

ADDED

|

@@ -0,0 +1,8 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

item {

|

| 2 |

+

id: 2

|

| 3 |

+

name: 'Gramophone'

|

| 4 |

+

}

|

| 5 |

+

item {

|

| 6 |

+

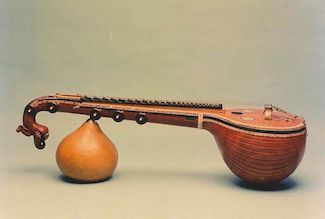

id: 3

|

| 7 |

+

name: 'Veena'

|

| 8 |

+

}
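app.py turns this file into category_index with label_map_util.create_category_index_from_labelmap(..., use_display_name=True). To illustrate the resulting structure, here is a deliberately simplified stand-in parser; it is not the TF Object Detection API implementation and only handles flat item blocks with id, name and an optional display_name. Note that the label map assigns ids 2 and 3, so a detection with class id 1 would have no entry in category_index.

```python
import re

# Simplified stand-in for label_map_util.create_category_index_from_labelmap,
# for illustration only: it handles flat `item { ... }` blocks with id, name,
# and optional display_name fields, and nothing else of the pbtxt format.
ITEM_RE = re.compile(r"item\s*\{(.*?)\}", re.DOTALL)
FIELD_RE = re.compile(r"(\w+)\s*:\s*(?:'([^']*)'|\"([^\"]*)\"|(\d+))")


def parse_label_map(text, use_display_name=True):
    """Return {id: {"id": id, "name": name}} for each item in the label map."""
    category_index = {}
    for body in ITEM_RE.findall(text):
        fields = {}
        for key, single, double, number in FIELD_RE.findall(body):
            # Numeric fields become ints; quoted fields keep their text
            fields[key] = int(number) if number else (single or double)
        name = fields["name"]
        if use_display_name and "display_name" in fields:
            name = fields["display_name"]
        category_index[fields["id"]] = {"id": fields["id"], "name": name}
    return category_index


LABEL_MAP = """
item {
  id: 2
  name: 'Gramophone'
}
item {
  id: 3
  name: 'Veena'
}
"""

print(parse_label_map(LABEL_MAP))
# → {2: {'id': 2, 'name': 'Gramophone'}, 3: {'id': 3, 'name': 'Veena'}}
```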


requirements.txt

ADDED

@@ -0,0 +1,6 @@
+#tf2-tensorflow-object-detection-api
+tf-models-research-object-detection
+matplotlib
+wget
+Pillow==9.5
+huggingface_hub


test_samples/.DS_Store

ADDED

Binary file (6.15 kB).

test_samples/gramophone_test.jpeg

ADDED


test_samples/sample_gramophone.jpeg

ADDED


test_samples/veena_test.jpeg

ADDED
