David Day

committed on

Upload code.

This view is limited to 50 files because it contains too many changes.

- README.md +1 -1

- app.py +529 -48

- constants.py +13 -0

- controller.py +298 -0

- conversation.py +396 -0

- examples/example1.jpeg +0 -0

- examples/example2.jpeg +0 -0

- llava/__init__.py +1 -0

- llava/constants.py +12 -0

- llava/conversation.py +381 -0

- llava/eval/eval_gpt_review.py +113 -0

- llava/eval/eval_gpt_review_bench.py +121 -0

- llava/eval/eval_gpt_review_visual.py +118 -0

- llava/eval/eval_science_qa.py +99 -0

- llava/eval/eval_science_qa_gpt4.py +104 -0

- llava/eval/eval_science_qa_gpt4_requery.py +149 -0

- llava/eval/generate_webpage_data_from_table.py +111 -0

- llava/eval/model_qa.py +85 -0

- llava/eval/model_vqa.py +112 -0

- llava/eval/model_vqa_science.py +141 -0

- llava/eval/qa_baseline_gpt35.py +74 -0

- llava/eval/run_llava.py +97 -0

- llava/eval/summarize_gpt_review.py +50 -0

- llava/eval/webpage/figures/alpaca.png +0 -0

- llava/eval/webpage/figures/bard.jpg +0 -0

- llava/eval/webpage/figures/chatgpt.svg +1 -0

- llava/eval/webpage/figures/llama.jpg +0 -0

- llava/eval/webpage/figures/swords_FILL0_wght300_GRAD0_opsz48.svg +1 -0

- llava/eval/webpage/figures/vicuna.jpeg +0 -0

- llava/eval/webpage/index.html +162 -0

- llava/eval/webpage/script.js +245 -0

- llava/eval/webpage/styles.css +105 -0

- llava/mm_utils.py +99 -0

- llava/model/__init__.py +2 -0

- llava/model/apply_delta.py +48 -0

- llava/model/builder.py +151 -0

- llava/model/consolidate.py +29 -0

- llava/model/language_model/llava_llama.py +140 -0

- llava/model/language_model/llava_mpt.py +113 -0

- llava/model/language_model/mpt/adapt_tokenizer.py +41 -0

- llava/model/language_model/mpt/attention.py +300 -0

- llava/model/language_model/mpt/blocks.py +41 -0

- llava/model/language_model/mpt/configuration_mpt.py +118 -0

- llava/model/language_model/mpt/custom_embedding.py +11 -0

- llava/model/language_model/mpt/flash_attn_triton.py +484 -0

- llava/model/language_model/mpt/hf_prefixlm_converter.py +415 -0

- llava/model/language_model/mpt/meta_init_context.py +94 -0

- llava/model/language_model/mpt/modeling_mpt.py +331 -0

- llava/model/language_model/mpt/norm.py +56 -0

- llava/model/language_model/mpt/param_init_fns.py +181 -0

README.md

CHANGED

|

@@ -4,7 +4,7 @@ emoji: 💬

|

|

| 4 |

colorFrom: yellow

|

| 5 |

colorTo: purple

|

| 6 |

sdk: gradio

|

| 7 |

-

sdk_version:

|

| 8 |

app_file: app.py

|

| 9 |

pinned: false

|

| 10 |

license: apache-2.0

|

|

|

|

| 4 |

colorFrom: yellow

|

| 5 |

colorTo: purple

|

| 6 |

sdk: gradio

|

| 7 |

+

sdk_version: 3.35.2

|

| 8 |

app_file: app.py

|

| 9 |

pinned: false

|

| 10 |

license: apache-2.0

|

app.py

CHANGED

|

@@ -1,63 +1,544 @@

|

| 1 |

import gradio as gr

|

| 2 |

-

|

| 3 |

|

| 4 |

-

|

| 5 |

-

|

| 6 |

-

|

| 7 |

-

|

| 8 |

|

| 9 |

|

| 10 |

-

|

| 11 |

-

|

| 12 |

-

|

| 13 |

-

system_message,

|

| 14 |

-

max_tokens,

|

| 15 |

-

temperature,

|

| 16 |

-

top_p,

|

| 17 |

-

):

|

| 18 |

-

messages = [{"role": "system", "content": system_message}]

|

| 19 |

|

| 20 |

-

|

| 21 |

-

|

| 22 |

-

|

| 23 |

-

|

| 24 |

-

messages.append({"role": "assistant", "content": val[1]})

|

| 25 |

|

| 26 |

-

messages.append({"role": "user", "content": message})

|

| 27 |

|

| 28 |

-

|

| 29 |

|

| 30 |

-

for message in client.chat_completion(

|

| 31 |

-

messages,

|

| 32 |

-

max_tokens=max_tokens,

|

| 33 |

-

stream=True,

|

| 34 |

-

temperature=temperature,

|

| 35 |

-

top_p=top_p,

|

| 36 |

-

):

|

| 37 |

-

token = message.choices[0].delta.content

|

| 38 |

|

| 39 |

-

|

| 40 |

-

|

| 41 |

|

| 42 |

"""

|

| 43 |

-

|

| 44 |

"""

|

| 45 |

-

|

| 46 |

-

|

| 47 |

-

|

| 48 |

-

|

| 49 |

-

gr.

|

| 50 |

-

|

| 51 |

-

|

| 52 |

-

|

| 53 |

-

|

| 54 |

-

|

| 55 |

-

|

| 56 |

-

|

| 57 |

-

|

| 58 |

-

|

| 59 |

-

|

| 60 |

|

| 61 |

|

| 62 |

if __name__ == "__main__":

|

| 63 |

-

|

| 1 |

+

import argparse

|

| 2 |

+

import datetime

|

| 3 |

+

import hashlib

|

| 4 |

+

import json

|

| 5 |

+

import os

|

| 6 |

+

import subprocess

|

| 7 |

+

import sys

|

| 8 |

+

import time

|

| 9 |

+

|

| 10 |

import gradio as gr

|

| 11 |

+

import requests

|

| 12 |

|

| 13 |

+

from constants import LOGDIR

|

| 14 |

+

from conversation import (default_conversation, conv_templates,

|

| 15 |

+

SeparatorStyle)

|

| 16 |

+

from utils import (build_logger, server_error_msg,

|

| 17 |

+

violates_moderation, moderation_msg)

|

| 18 |

+

|

| 19 |

+

|

| 20 |

+

logger = build_logger("gradio_web_server", "gradio_web_server.log")

|

| 21 |

|

| 22 |

+

headers = {"User-Agent": "LLaVA Client"}

|

| 23 |

|

| 24 |

+

no_change_btn = gr.Button()

|

| 25 |

+

enable_btn = gr.Button(interactive=True)

|

| 26 |

+

disable_btn = gr.Button(interactive=False)

|

| 27 |

|

| 28 |

+

priority = {

|

| 29 |

+

"vicuna-13b": "aaaaaaa",

|

| 30 |

+

"koala-13b": "aaaaaab",

|

| 31 |

+

}

|

| 32 |

|

| 33 |

|

| 34 |

+

def get_conv_log_filename():

|

| 35 |

+

t = datetime.datetime.now()

|

| 36 |

+

name = os.path.join(LOGDIR, f"{t.year}-{t.month:02d}-{t.day:02d}-conv.json")

|

| 37 |

+

return name

|

| 38 |

|

| 39 |

|

| 40 |

+

def get_model_list():

|

| 41 |

+

ret = requests.post(args.controller_url + "/refresh_all_workers")

|

| 42 |

+

assert ret.status_code == 200

|

| 43 |

+

ret = requests.post(args.controller_url + "/list_models")

|

| 44 |

+

models = ret.json()["models"]

|

| 45 |

+

models.sort(key=lambda x: priority.get(x, x))

|

| 46 |

+

logger.info(f"Models: {models}")

|

| 47 |

+

return models

|

| 48 |

|

| 49 |

+

|

| 50 |

+

get_window_url_params = """

|

| 51 |

+

function() {

|

| 52 |

+

const params = new URLSearchParams(window.location.search);

|

| 53 |

+

url_params = Object.fromEntries(params);

|

| 54 |

+

console.log(url_params);

|

| 55 |

+

return url_params;

|

| 56 |

+

}

|

| 57 |

"""

|

| 58 |

+

|

| 59 |

+

|

| 60 |

+

def load_demo(url_params, request: gr.Request):

|

| 61 |

+

logger.info(f"load_demo. ip: {request.client.host}. params: {url_params}")

|

| 62 |

+

|

| 63 |

+

dropdown_update = gr.Dropdown(visible=True)

|

| 64 |

+

if "model" in url_params:

|

| 65 |

+

model = url_params["model"]

|

| 66 |

+

if model in models:

|

| 67 |

+

dropdown_update = gr.Dropdown(value=model, visible=True)

|

| 68 |

+

|

| 69 |

+

state = default_conversation.copy()

|

| 70 |

+

return state, dropdown_update

|

| 71 |

+

|

| 72 |

+

|

| 73 |

+

def load_demo_refresh_model_list(request: gr.Request):

|

| 74 |

+

logger.info(f"load_demo. ip: {request.client.host}")

|

| 75 |

+

models = get_model_list()

|

| 76 |

+

state = default_conversation.copy()

|

| 77 |

+

dropdown_update = gr.Dropdown(

|

| 78 |

+

choices=models,

|

| 79 |

+

value=models[0] if len(models) > 0 else ""

|

| 80 |

+

)

|

| 81 |

+

return state, dropdown_update

|

| 82 |

+

|

| 83 |

+

|

| 84 |

+

def vote_last_response(state, vote_type, model_selector, request: gr.Request):

|

| 85 |

+

with open(get_conv_log_filename(), "a") as fout:

|

| 86 |

+

data = {

|

| 87 |

+

"tstamp": round(time.time(), 4),

|

| 88 |

+

"type": vote_type,

|

| 89 |

+

"model": model_selector,

|

| 90 |

+

"state": state.dict(),

|

| 91 |

+

"ip": request.client.host,

|

| 92 |

+

}

|

| 93 |

+

fout.write(json.dumps(data) + "\n")

|

| 94 |

+

|

| 95 |

+

|

| 96 |

+

def upvote_last_response(state, model_selector, request: gr.Request):

|

| 97 |

+

logger.info(f"upvote. ip: {request.client.host}")

|

| 98 |

+

vote_last_response(state, "upvote", model_selector, request)

|

| 99 |

+

return ("",) + (disable_btn,) * 3

|

| 100 |

+

|

| 101 |

+

|

| 102 |

+

def downvote_last_response(state, model_selector, request: gr.Request):

|

| 103 |

+

logger.info(f"downvote. ip: {request.client.host}")

|

| 104 |

+

vote_last_response(state, "downvote", model_selector, request)

|

| 105 |

+

return ("",) + (disable_btn,) * 3

|

| 106 |

+

|

| 107 |

+

|

| 108 |

+

def flag_last_response(state, model_selector, request: gr.Request):

|

| 109 |

+

logger.info(f"flag. ip: {request.client.host}")

|

| 110 |

+

vote_last_response(state, "flag", model_selector, request)

|

| 111 |

+

return ("",) + (disable_btn,) * 3

|

| 112 |

+

|

| 113 |

+

|

| 114 |

+

def regenerate(state, image_process_mode, request: gr.Request):

|

| 115 |

+

logger.info(f"regenerate. ip: {request.client.host}")

|

| 116 |

+

state.messages[-1][-1] = None

|

| 117 |

+

prev_human_msg = state.messages[-2]

|

| 118 |

+

if type(prev_human_msg[1]) in (tuple, list):

|

| 119 |

+

prev_human_msg[1] = (*prev_human_msg[1][:2], image_process_mode)

|

| 120 |

+

state.skip_next = False

|

| 121 |

+

return (state, state.to_gradio_chatbot(), "", None) + (disable_btn,) * 5

|

| 122 |

+

|

| 123 |

+

|

| 124 |

+

def clear_history(request: gr.Request):

|

| 125 |

+

logger.info(f"clear_history. ip: {request.client.host}")

|

| 126 |

+

state = default_conversation.copy()

|

| 127 |

+

return (state, state.to_gradio_chatbot(), "", None) + (disable_btn,) * 5

|

| 128 |

+

|

| 129 |

+

|

| 130 |

+

def add_text(state, text, image, image_process_mode, request: gr.Request):

|

| 131 |

+

logger.info(f"add_text. ip: {request.client.host}. len: {len(text)}")

|

| 132 |

+

if len(text) <= 0 and image is None:

|

| 133 |

+

state.skip_next = True

|

| 134 |

+

return (state, state.to_gradio_chatbot(), "", None) + (no_change_btn,) * 5

|

| 135 |

+

if args.moderate:

|

| 136 |

+

flagged = violates_moderation(text)

|

| 137 |

+

if flagged:

|

| 138 |

+

state.skip_next = True

|

| 139 |

+

return (state, state.to_gradio_chatbot(), moderation_msg, None) + (

|

| 140 |

+

no_change_btn,) * 5

|

| 141 |

+

|

| 142 |

+

text = text[:1536] # Hard cut-off

|

| 143 |

+

if image is not None:

|

| 144 |

+

text = text[:1200] # Hard cut-off for images

|

| 145 |

+

if '<image>' not in text:

|

| 146 |

+

# text = '<Image><image></Image>' + text

|

| 147 |

+

text = text + '\n<image>'

|

| 148 |

+

text = (text, image, image_process_mode)

|

| 149 |

+

state = default_conversation.copy()

|

| 150 |

+

state.append_message(state.roles[0], text)

|

| 151 |

+

state.append_message(state.roles[1], None)

|

| 152 |

+

state.skip_next = False

|

| 153 |

+

return (state, state.to_gradio_chatbot(), "", None) + (disable_btn,) * 5

|

| 154 |

+

|

| 155 |

+

|

| 156 |

+

def http_bot(state, model_selector, temperature, top_p, max_new_tokens, request: gr.Request):

|

| 157 |

+

logger.info(f"http_bot. ip: {request.client.host}")

|

| 158 |

+

start_tstamp = time.time()

|

| 159 |

+

model_name = model_selector

|

| 160 |

+

|

| 161 |

+

if state.skip_next:

|

| 162 |

+

# This generate call is skipped due to invalid inputs

|

| 163 |

+

yield (state, state.to_gradio_chatbot()) + (no_change_btn,) * 5

|

| 164 |

+

return

|

| 165 |

+

|

| 166 |

+

if len(state.messages) == state.offset + 2:

|

| 167 |

+

# First round of conversation

|

| 168 |

+

if "llava" in model_name.lower():

|

| 169 |

+

if 'llama-2' in model_name.lower():

|

| 170 |

+

template_name = "llava_llama_2"

|

| 171 |

+

elif "mistral" in model_name.lower() or "mixtral" in model_name.lower():

|

| 172 |

+

if 'orca' in model_name.lower():

|

| 173 |

+

template_name = "mistral_orca"

|

| 174 |

+

elif 'hermes' in model_name.lower():

|

| 175 |

+

template_name = "chatml_direct"

|

| 176 |

+

else:

|

| 177 |

+

template_name = "mistral_instruct"

|

| 178 |

+

elif 'llava-v1.6-34b' in model_name.lower():

|

| 179 |

+

template_name = "chatml_direct"

|

| 180 |

+

elif "v1" in model_name.lower():

|

| 181 |

+

if 'mmtag' in model_name.lower():

|

| 182 |

+

template_name = "v1_mmtag"

|

| 183 |

+

elif 'plain' in model_name.lower() and 'finetune' not in model_name.lower():

|

| 184 |

+

template_name = "v1_mmtag"

|

| 185 |

+

else:

|

| 186 |

+

template_name = "llava_v1"

|

| 187 |

+

elif "mpt" in model_name.lower():

|

| 188 |

+

template_name = "mpt"

|

| 189 |

+

else:

|

| 190 |

+

if 'mmtag' in model_name.lower():

|

| 191 |

+

template_name = "v0_mmtag"

|

| 192 |

+

elif 'plain' in model_name.lower() and 'finetune' not in model_name.lower():

|

| 193 |

+

template_name = "v0_mmtag"

|

| 194 |

+

else:

|

| 195 |

+

template_name = "llava_v0"

|

| 196 |

+

elif "mpt" in model_name:

|

| 197 |

+

template_name = "mpt_text"

|

| 198 |

+

elif "llama-2" in model_name:

|

| 199 |

+

template_name = "llama_2"

|

| 200 |

+

else:

|

| 201 |

+

template_name = "vicuna_v1"

|

| 202 |

+

new_state = conv_templates[template_name].copy()

|

| 203 |

+

new_state.append_message(new_state.roles[0], state.messages[-2][1])

|

| 204 |

+

new_state.append_message(new_state.roles[1], None)

|

| 205 |

+

state = new_state

|

| 206 |

+

|

| 207 |

+

# Query worker address

|

| 208 |

+

controller_url = args.controller_url

|

| 209 |

+

ret = requests.post(controller_url + "/get_worker_address",

|

| 210 |

+

json={"model": model_name})

|

| 211 |

+

worker_addr = ret.json()["address"]

|

| 212 |

+

logger.info(f"model_name: {model_name}, worker_addr: {worker_addr}")

|

| 213 |

+

|

| 214 |

+

# No available worker

|

| 215 |

+

if worker_addr == "":

|

| 216 |

+

state.messages[-1][-1] = server_error_msg

|

| 217 |

+

yield (state, state.to_gradio_chatbot(), disable_btn, disable_btn, disable_btn, enable_btn, enable_btn)

|

| 218 |

+

return

|

| 219 |

+

|

| 220 |

+

# Construct prompt

|

| 221 |

+

prompt = state.get_prompt()

|

| 222 |

+

|

| 223 |

+

all_images = state.get_images(return_pil=True)

|

| 224 |

+

all_image_hash = [hashlib.md5(image.tobytes()).hexdigest() for image in all_images]

|

| 225 |

+

for image, hash in zip(all_images, all_image_hash):

|

| 226 |

+

t = datetime.datetime.now()

|

| 227 |

+

filename = os.path.join(LOGDIR, "serve_images", f"{t.year}-{t.month:02d}-{t.day:02d}", f"{hash}.jpg")

|

| 228 |

+

if not os.path.isfile(filename):

|

| 229 |

+

os.makedirs(os.path.dirname(filename), exist_ok=True)

|

| 230 |

+

image.save(filename)

|

| 231 |

+

|

| 232 |

+

# Make requests

|

| 233 |

+

pload = {

|

| 234 |

+

"model": model_name,

|

| 235 |

+

"prompt": prompt,

|

| 236 |

+

"temperature": float(temperature),

|

| 237 |

+

"top_p": float(top_p),

|

| 238 |

+

"max_new_tokens": min(int(max_new_tokens), 1536),

|

| 239 |

+

"stop": state.sep if state.sep_style in [SeparatorStyle.SINGLE, SeparatorStyle.MPT] else state.sep2,

|

| 240 |

+

"images": f'List of {len(state.get_images())} images: {all_image_hash}',

|

| 241 |

+

}

|

| 242 |

+

logger.info(f"==== request ====\n{pload}")

|

| 243 |

+

|

| 244 |

+

pload['images'] = state.get_images()

|

| 245 |

+

|

| 246 |

+

state.messages[-1][-1] = "▌"

|

| 247 |

+

yield (state, state.to_gradio_chatbot()) + (disable_btn,) * 5

|

| 248 |

+

|

| 249 |

+

try:

|

| 250 |

+

# Stream output

|

| 251 |

+

response = requests.post(worker_addr + "/worker_generate_stream",

|

| 252 |

+

headers=headers, json=pload, stream=True, timeout=10)

|

| 253 |

+

for chunk in response.iter_lines(decode_unicode=False, delimiter=b"\0"):

|

| 254 |

+

if chunk:

|

| 255 |

+

data = json.loads(chunk.decode())

|

| 256 |

+

if data["error_code"] == 0:

|

| 257 |

+

output = data["text"][len(prompt):].strip()

|

| 258 |

+

state.messages[-1][-1] = output + "▌"

|

| 259 |

+

yield (state, state.to_gradio_chatbot()) + (disable_btn,) * 5

|

| 260 |

+

else:

|

| 261 |

+

output = data["text"] + f" (error_code: {data['error_code']})"

|

| 262 |

+

state.messages[-1][-1] = output

|

| 263 |

+

yield (state, state.to_gradio_chatbot()) + (disable_btn, disable_btn, disable_btn, enable_btn, enable_btn)

|

| 264 |

+

return

|

| 265 |

+

time.sleep(0.03)

|

| 266 |

+

except requests.exceptions.RequestException as e:

|

| 267 |

+

state.messages[-1][-1] = server_error_msg

|

| 268 |

+

yield (state, state.to_gradio_chatbot()) + (disable_btn, disable_btn, disable_btn, enable_btn, enable_btn)

|

| 269 |

+

return

|

| 270 |

+

|

| 271 |

+

state.messages[-1][-1] = state.messages[-1][-1][:-1]

|

| 272 |

+

yield (state, state.to_gradio_chatbot()) + (enable_btn,) * 5

|

| 273 |

+

|

| 274 |

+

finish_tstamp = time.time()

|

| 275 |

+

logger.info(f"{output}")

|

| 276 |

+

|

| 277 |

+

with open(get_conv_log_filename(), "a") as fout:

|

| 278 |

+

data = {

|

| 279 |

+

"tstamp": round(finish_tstamp, 4),

|

| 280 |

+

"type": "chat",

|

| 281 |

+

"model": model_name,

|

| 282 |

+

"start": round(start_tstamp, 4),

|

| 283 |

+

"finish": round(finish_tstamp, 4),

|

| 284 |

+

"state": state.dict(),

|

| 285 |

+

"images": all_image_hash,

|

| 286 |

+

"ip": request.client.host,

|

| 287 |

+

}

|

| 288 |

+

fout.write(json.dumps(data) + "\n")

|

| 289 |

+

|

| 290 |

+

title_markdown = ("""

|

| 291 |

+

# Dr-LLaVA: Visual Instruction Tuning with Symbolic Clinical Grounding

|

| 292 |

+

[[Project Page](https://XXXXX)] [[Code](https://github.com/AlaaLab/Dr-LLaVA)] | 📚 [[Dr-LLaVA](https://arxiv.org/abs/2405.19567)]

|

| 293 |

+

""")

|

| 294 |

+

|

| 295 |

+

tos_markdown = ("""

|

| 296 |

+

User agrees to the following terms of use:

|

| 297 |

+

1. The service is a research preview intended for non-commercial use only.

|

| 298 |

+

2. The service is provided "as is" without warranty of any kind.

|

| 299 |

+

""")

|

| 300 |

+

|

| 301 |

+

|

| 302 |

+

learn_more_markdown = ("""

|

| 303 |

+

### License

|

| 304 |

+

The service is a research preview intended for non-commercial use only, subject to the model [License](https://github.com/facebookresearch/llama/blob/main/MODEL_CARD.md) of LLaMA, [Terms of Use](https://openai.com/policies/terms-of-use) of the data generated by OpenAI, and [Privacy Practices](https://chrome.google.com/webstore/detail/sharegpt-share-your-chatg/daiacboceoaocpibfodeljbdfacokfjb) of ShareGPT. Please contact us if you find any potential violation.

|

| 305 |

+

""")

|

| 306 |

+

|

| 307 |

+

block_css = """

|

| 308 |

+

|

| 309 |

+

#buttons button {

|

| 310 |

+

min-width: min(120px,100%);

|

| 311 |

+

}

|

| 312 |

+

|

| 313 |

"""

|

| 314 |

+

|

| 315 |

+

def build_demo(embed_mode, cur_dir=None, concurrency_count=10):

|

| 316 |

+

textbox = gr.Textbox(show_label=False, placeholder="Enter text and press ENTER", container=False)

|

| 317 |

+

with gr.Blocks(title="LLaVA", theme=gr.themes.Default(), css=block_css) as demo:

|

| 318 |

+

state = gr.State()

|

| 319 |

+

|

| 320 |

+

if not embed_mode:

|

| 321 |

+

gr.Markdown(title_markdown)

|

| 322 |

+

|

| 323 |

+

with gr.Row():

|

| 324 |

+

with gr.Column(scale=2):

|

| 325 |

+

# add a description

|

| 326 |

+

gr.Markdown("""<p style='text-align: center'> Shenghuan Sun, Gregory Goldgof, Alex Schubert, Zhiqing Sun, Atul Butte, Ahmed Alaa <br>

|

| 327 |

+

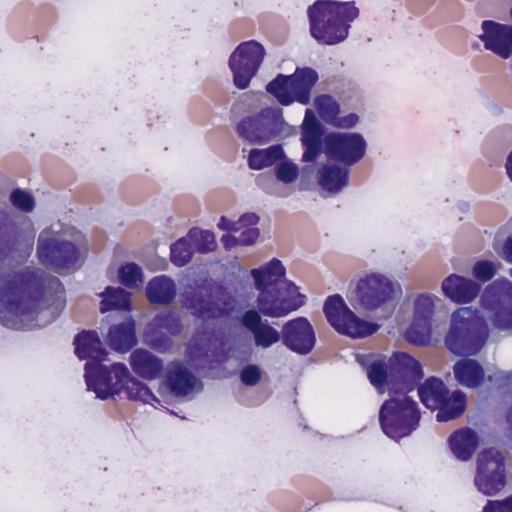

This is the demo for Dr-LLaVA. It currently supports only H&E-stained bone marrow aspirate images.</p>

|

| 328 |

+

|

| 329 |

+

<b>Tips for using this demo:</b>

|

| 330 |

+

<ul>

|

| 331 |

+

<li>Drop a single image from a bone marrow aspirate whole slide image taken at 40x.</li>

|

| 332 |

+

</ul>

|

| 333 |

+

""")

|

| 334 |

+

# Replace 'path_to_image' with the path to your image file

|

| 335 |

+

gr.Image(value="https://i.postimg.cc/tJzyq5Dh/Dr-LLa-VA-Fig-1.png",

|

| 336 |

+

width=600, interactive=False, type="pil")

|

| 337 |

+

with gr.Column(scale=3):

|

| 338 |

+

with gr.Row(elem_id="model_selector_row"):

|

| 339 |

+

model_selector = gr.Dropdown(

|

| 340 |

+

choices=models,

|

| 341 |

+

value=models[0] if len(models) > 0 else "",

|

| 342 |

+

interactive=True,

|

| 343 |

+

show_label=False,

|

| 344 |

+

container=False)

|

| 345 |

+

|

| 346 |

+

imagebox = gr.Image(type="pil")

|

| 347 |

+

image_process_mode = gr.Radio(

|

| 348 |

+

["Crop", "Resize", "Pad", "Default"],

|

| 349 |

+

value="Default",

|

| 350 |

+

label="Preprocess for non-square image", visible=False)

|

| 351 |

+

|

| 352 |

+

if cur_dir is None:

|

| 353 |

+

cur_dir = os.path.dirname(os.path.abspath(__file__))

|

| 354 |

+

gr.Examples(examples=[

|

| 355 |

+

[f"{cur_dir}/examples/example1.jpeg", "Can you assess if these pathology images are suitable for identifying cancer upon inspection?"],

|

| 356 |

+

[f"{cur_dir}/examples/example2.jpeg", "Are you able to recognize the probable illness in the image patch?"],

|

| 357 |

+

], inputs=[imagebox, textbox])

|

| 358 |

+

|

| 359 |

+

with gr.Accordion("Parameters", open=False) as parameter_row:

|

| 360 |

+

temperature = gr.Slider(minimum=0.0, maximum=1.0, value=0.2, step=0.1, interactive=True, label="Temperature",)

|

| 361 |

+

top_p = gr.Slider(minimum=0.0, maximum=1.0, value=0.7, step=0.1, interactive=True, label="Top P",)

|

| 362 |

+

max_output_tokens = gr.Slider(minimum=0, maximum=1024, value=512, step=64, interactive=True, label="Max output tokens",)

|

| 363 |

+

|

| 364 |

+

with gr.Column(scale=6):

|

| 365 |

+

chatbot = gr.Chatbot(

|

| 366 |

+

elem_id="chatbot",

|

| 367 |

+

label="LLaVA Chatbot",

|

| 368 |

+

height=470,

|

| 369 |

+

layout="panel",

|

| 370 |

+

)

|

| 371 |

+

with gr.Row():

|

| 372 |

+

with gr.Column(scale=8):

|

| 373 |

+

textbox.render()

|

| 374 |

+

with gr.Column(scale=1, min_width=50):

|

| 375 |

+

submit_btn = gr.Button(value="Send", variant="primary")

|

| 376 |

+

with gr.Row(elem_id="buttons") as button_row:

|

| 377 |

+

upvote_btn = gr.Button(value="👍 Upvote", interactive=False)

|

| 378 |

+

downvote_btn = gr.Button(value="👎 Downvote", interactive=False)

|

| 379 |

+

flag_btn = gr.Button(value="⚠️ Flag", interactive=False)

|

| 380 |

+

#stop_btn = gr.Button(value="⏹️ Stop Generation", interactive=False)

|

| 381 |

+

regenerate_btn = gr.Button(value="🔄 Regenerate", interactive=False)

|

| 382 |

+

clear_btn = gr.Button(value="🗑️ Clear", interactive=False)

|

| 383 |

+

|

| 384 |

+

if not embed_mode:

|

| 385 |

+

gr.Markdown(tos_markdown)

|

| 386 |

+

gr.Markdown(learn_more_markdown)

|

| 387 |

+

url_params = gr.JSON(visible=False)

|

| 388 |

+

|

| 389 |

+

# Register listeners

|

| 390 |

+

btn_list = [upvote_btn, downvote_btn, flag_btn, regenerate_btn, clear_btn]

|

| 391 |

+

upvote_btn.click(

|

| 392 |

+

upvote_last_response,

|

| 393 |

+

[state, model_selector],

|

| 394 |

+

[textbox, upvote_btn, downvote_btn, flag_btn]

|

| 395 |

+

)

|

| 396 |

+

downvote_btn.click(

|

| 397 |

+

downvote_last_response,

|

| 398 |

+

[state, model_selector],

|

| 399 |

+

[textbox, upvote_btn, downvote_btn, flag_btn]

|

| 400 |

+

)

|

| 401 |

+

flag_btn.click(

|

| 402 |

+

flag_last_response,

|

| 403 |

+

[state, model_selector],

|

| 404 |

+

[textbox, upvote_btn, downvote_btn, flag_btn]

|

| 405 |

+

)

|

| 406 |

+

|

| 407 |

+

regenerate_btn.click(

|

| 408 |

+

regenerate,

|

| 409 |

+

[state, image_process_mode],

|

| 410 |

+

[state, chatbot, textbox, imagebox] + btn_list

|

| 411 |

+

).then(

|

| 412 |

+

http_bot,

|

| 413 |

+

[state, model_selector, temperature, top_p, max_output_tokens],

|

| 414 |

+

[state, chatbot] + btn_list,

|

| 415 |

+

concurrency_limit=concurrency_count

|

| 416 |

+

)

|

| 417 |

+

|

| 418 |

+

clear_btn.click(

|

| 419 |

+

clear_history,

|

| 420 |

+

None,

|

| 421 |

+

[state, chatbot, textbox, imagebox] + btn_list,

|

| 422 |

+

queue=False

|

| 423 |

+

)

|

| 424 |

+

|

| 425 |

+

textbox.submit(

|

| 426 |

+

add_text,

|

| 427 |

+

[state, textbox, imagebox, image_process_mode],

|

| 428 |

+

[state, chatbot, textbox, imagebox] + btn_list,

|

| 429 |

+

queue=False

|

| 430 |

+

).then(

|

| 431 |

+

http_bot,

|

| 432 |

+

[state, model_selector, temperature, top_p, max_output_tokens],

|

| 433 |

+

[state, chatbot] + btn_list,

|

| 434 |

+

concurrency_limit=concurrency_count

|

| 435 |

+

)

|

| 436 |

+

|

| 437 |

+

submit_btn.click(

|

| 438 |

+

add_text,

|

| 439 |

+

[state, textbox, imagebox, image_process_mode],

|

| 440 |

+

[state, chatbot, textbox, imagebox] + btn_list

|

| 441 |

+

).then(

|

| 442 |

+

http_bot,

|

| 443 |

+

[state, model_selector, temperature, top_p, max_output_tokens],

|

| 444 |

+

[state, chatbot] + btn_list,

|

| 445 |

+

concurrency_limit=concurrency_count

|

| 446 |

+

)

|

| 447 |

+

|

| 448 |

+

if args.model_list_mode == "once":

|

| 449 |

+

demo.load(

|

| 450 |

+

load_demo,

|

| 451 |

+

[url_params],

|

| 452 |

+

[state, model_selector],

|

| 453 |

+

js=get_window_url_params

|

| 454 |

+

)

|

| 455 |

+

elif args.model_list_mode == "reload":

|

| 456 |

+

demo.load(

|

| 457 |

+

load_demo_refresh_model_list,

|

| 458 |

+

None,

|

| 459 |

+

[state, model_selector],

|

| 460 |

+

queue=False

|

| 461 |

+

)

|

| 462 |

+

else:

|

| 463 |

+

raise ValueError(f"Unknown model list mode: {args.model_list_mode}")

|

| 464 |

+

|

| 465 |

+

return demo

|

| 466 |

+

|

| 467 |

+

def start_controller():

|

| 468 |

+

logger.info("Starting the controller")

|

| 469 |

+

controller_command = [

|

| 470 |

+

"python",

|

| 471 |

+

"-m",

|

| 472 |

+

"controller",

|

| 473 |

+

"--host",

|

| 474 |

+

"0.0.0.0",

|

| 475 |

+

"--port",

|

| 476 |

+

"10000",

|

| 477 |

+

]

|

| 478 |

+

return subprocess.Popen(controller_command)

|

| 479 |

+

|

| 480 |

+

def start_worker():

|

| 481 |

+

logger.info("Starting the model worker")

|

| 482 |

+

worker_command = [

|

| 483 |

+

"python",

|

| 484 |

+

"-m",

|

| 485 |

+

"model_worker",

|

| 486 |

+

"--host",

|

| 487 |

+

"0.0.0.0",

|

| 488 |

+

"--controller",

|

| 489 |

+

"http://localhost:10000",

|

| 490 |

+

"--load-bf16",

|

| 491 |

+

"--model-name",

|

| 492 |

+

"llava-rlhf-13b-v1.5-336",

|

| 493 |

+

"--model-path",

|

| 494 |

+

"daviddaytw/Dr-LLaVA-sft",

|

| 495 |

+

"--lora-path",

|

| 496 |

+

"daviddaytw/Dr-LLaVA-lora-adapter",

|

| 497 |

+

]

|

| 498 |

+

return subprocess.Popen(worker_command)

|

| 499 |

+

|

| 500 |

+

def get_args():

|

| 501 |

+

parser = argparse.ArgumentParser()

|

| 502 |

+

parser.add_argument("--host", type=str, default="0.0.0.0")

|

| 503 |

+

parser.add_argument("--port", type=int)

|

| 504 |

+

parser.add_argument("--controller-url", type=str, default="http://localhost:10000")

|

| 505 |

+

parser.add_argument("--concurrency-count", type=int, default=16)

|

| 506 |

+

parser.add_argument(

|

| 507 |

+

"--model-list-mode", type=str, default="reload", choices=["once", "reload"]

|

| 508 |

+

)

|

| 509 |

+

parser.add_argument("--share", action="store_true")

|

| 510 |

+

parser.add_argument("--moderate", action="store_true")

|

| 511 |

+

parser.add_argument("--embed", action="store_true")

|

| 512 |

+

args = parser.parse_args()

|

| 513 |

+

|

| 514 |

+

return args

|

| 515 |

+

|

| 516 |

+

|

| 517 |

+

def start_demo(args):

|

| 518 |

+

demo = build_demo(args.embed)

|

| 519 |

+

demo.queue(

|

| 520 |

+

concurrency_count=args.concurrency_count, status_update_rate=10, api_open=False

|

| 521 |

+

).launch(server_name=args.host, server_port=args.port, share=args.share)

|

| 522 |

|

| 523 |

|

| 524 |

if __name__ == "__main__":

|

| 525 |

+

args = get_args()

|

| 526 |

+

logger.info(f"args: {args}")

|

| 527 |

+

|

| 528 |

+

controller_proc = start_controller()

|

| 529 |

+

worker_proc = start_worker()

|

| 530 |

+

|

| 531 |

+

# Wait for worker and controller to start

|

| 532 |

+

time.sleep(10)

|

| 533 |

+

|

| 534 |

+

exit_status = 0

|

| 535 |

+

try:

|

| 536 |

+

start_demo(args)

|

| 537 |

+

except Exception as e:

|

| 538 |

+

print(e)

|

| 539 |

+

exit_status = 1

|

| 540 |

+

finally:

|

| 541 |

+

worker_proc.kill()

|

| 542 |

+

controller_proc.kill()

|

| 543 |

+

|

| 544 |

+

sys.exit(exit_status)

|
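The `__main__` block above waits a fixed `time.sleep(10)` before launching the demo, which may be too short if the worker is slow to start and too long if it is already up. One possible alternative, sketched below, is to poll until a port accepts connections. The helpers `port_is_open` and `wait_until` are hypothetical names introduced here for illustration; they are not part of the uploaded code.

```python
import socket
import time


def port_is_open(host: str, port: int, timeout: float = 1.0) -> bool:
    """Return True if a TCP connection to (host, port) succeeds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Connection refused, timed out, or host unreachable.
        return False


def wait_until(predicate, timeout: float = 60.0, interval: float = 0.5) -> bool:
    """Poll `predicate` until it returns True or `timeout` seconds have passed."""
    deadline = time.monotonic() + timeout
    while time.monotonic() < deadline:
        if predicate():
            return True
        time.sleep(interval)
    # One final check so that a zero timeout still evaluates the predicate once.
    return bool(predicate())
```

Under these assumptions, the fixed sleep could be replaced by something like `wait_until(lambda: port_is_open("localhost", 10000))`, since the controller is started on port 10000. An open port only shows that the process is listening; it does not guarantee that the worker has finished loading the model and registered with the controller, so a status check against the controller may still be needed.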

constants.py

ADDED

|

@@ -0,0 +1,13 @@

|

| 1 |

+

CONTROLLER_HEART_BEAT_EXPIRATION = 30

|

| 2 |

+

WORKER_HEART_BEAT_INTERVAL = 15

|

| 3 |

+

|

| 4 |

+

LOGDIR = "."

|

| 5 |

+

|

| 6 |

+

# Model Constants

|

| 7 |

+

IGNORE_INDEX = -100

|

| 8 |

+

IMAGE_TOKEN_INDEX = -200

|

| 9 |

+

DEFAULT_IMAGE_TOKEN = "<image>"

|

| 10 |

+

DEFAULT_IMAGE_PATCH_TOKEN = "<im_patch>"

|

| 11 |

+

DEFAULT_IM_START_TOKEN = "<im_start>"

|

| 12 |

+

DEFAULT_IM_END_TOKEN = "<im_end>"

|

| 13 |

+

IMAGE_PLACEHOLDER = "<image-placeholder>"

|
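The two heartbeat constants in `constants.py` are related: the expiration window (30 seconds) is twice the heartbeat interval (15 seconds), so a worker that reports on schedule can apparently miss one heartbeat before it is considered expired. A minimal sketch of the expiry check, assuming heartbeat times are stored as `time.time()` timestamps; the function `expired_workers` is a hypothetical helper for illustration, and unlike the controller it ignores the per-worker `check_heart_beat` flag.

```python
CONTROLLER_HEART_BEAT_EXPIRATION = 30  # seconds, same value as constants.py
WORKER_HEART_BEAT_INTERVAL = 15  # seconds, same value as constants.py


def expired_workers(last_heart_beats: dict, now: float) -> list:
    """Return the names of workers whose last heartbeat is older than the expiration window."""
    cutoff = now - CONTROLLER_HEART_BEAT_EXPIRATION
    # A heartbeat exactly at the cutoff is still treated as alive (strict comparison).
    return [name for name, seen in last_heart_beats.items() if seen < cutoff]
```

For example, with `now = 100.0`, a worker last seen at `90.0` is kept, while one last seen at `69.0` is reported as expired.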

controller.py

ADDED

|

@@ -0,0 +1,298 @@

|

| 1 |

+

"""

|

| 2 |

+

A controller manages distributed workers.

|

| 3 |

+

It sends worker addresses to clients.

|

| 4 |

+

"""

|

| 5 |

+

import argparse

|

| 6 |

+

import asyncio

|

| 7 |

+

import dataclasses

|

| 8 |

+

from enum import Enum, auto

|

| 9 |

+

import json

|

| 10 |

+

import logging

|

| 11 |

+

import time

|

| 12 |

+

from typing import List, Union

|

| 13 |

+

import threading

|

| 14 |

+

|

| 15 |

+

from fastapi import FastAPI, Request

|

| 16 |

+

from fastapi.responses import StreamingResponse

|

| 17 |

+

import numpy as np

|

| 18 |

+

import requests

|

| 19 |

+

import uvicorn

|

| 20 |

+

|

| 21 |

+

from constants import CONTROLLER_HEART_BEAT_EXPIRATION

|

| 22 |

+

from utils import build_logger, server_error_msg

|

| 23 |

+

|

| 24 |

+

|

| 25 |

+

logger = build_logger("controller", "controller.log")

|

| 26 |

+

|

| 27 |

+

|

| 28 |

+

class DispatchMethod(Enum):

|

| 29 |

+

LOTTERY = auto()

|

| 30 |

+

SHORTEST_QUEUE = auto()

|

| 31 |

+

|

| 32 |

+

@classmethod

|

| 33 |

+

def from_str(cls, name):

|

| 34 |

+

if name == "lottery":

|

| 35 |

+

return cls.LOTTERY

|

| 36 |

+

elif name == "shortest_queue":

|

| 37 |

+

return cls.SHORTEST_QUEUE

|

| 38 |

+

else:

|

| 39 |

+

raise ValueError(f"Invalid dispatch method: {name}")

|

| 40 |

+

|

| 41 |

+

|

| 42 |

+

@dataclasses.dataclass

|

| 43 |

+

class WorkerInfo:

|

| 44 |

+

model_names: List[str]

|

| 45 |

+

speed: int

|

| 46 |

+

queue_length: int

|

| 47 |

+

check_heart_beat: bool

|

| 48 |

+

last_heart_beat: float

|

| 49 |

+

|

| 50 |

+

|

| 51 |

+

def heart_beat_controller(controller):

|

| 52 |

+

while True:

|

| 53 |

+

time.sleep(CONTROLLER_HEART_BEAT_EXPIRATION)

|

| 54 |

+

controller.remove_stable_workers_by_expiration()

|

| 55 |

+

|

| 56 |

+

|

| 57 |

+

class Controller:

|

| 58 |

+

def __init__(self, dispatch_method: str):

|

| 59 |

+

# Dict[str -> WorkerInfo]

|

| 60 |

+

self.worker_info = {}

|

| 61 |

+

self.dispatch_method = DispatchMethod.from_str(dispatch_method)

|

| 62 |

+

|

| 63 |

+

self.heart_beat_thread = threading.Thread(

|

| 64 |

+

target=heart_beat_controller, args=(self,), daemon=True)

|

| 65 |

+

self.heart_beat_thread.start()

|

| 66 |

+

|

| 67 |

+

logger.info("Init controller")

|

| 68 |

+

|

| 69 |

+

def register_worker(self, worker_name: str, check_heart_beat: bool,

|

| 70 |

+

worker_status: dict):

|

| 71 |

+

if worker_name not in self.worker_info:

|

| 72 |

+

logger.info(f"Register a new worker: {worker_name}")

|

| 73 |

+

else:

|

| 74 |

+

logger.info(f"Register an existing worker: {worker_name}")

|

| 75 |

+

|

| 76 |

+

if not worker_status:

|

| 77 |

+

worker_status = self.get_worker_status(worker_name)

|

| 78 |

+

if not worker_status:

|

| 79 |

+

return False

|

| 80 |

+

|

| 81 |

+

self.worker_info[worker_name] = WorkerInfo(

|

| 82 |

+

worker_status["model_names"], worker_status["speed"], worker_status["queue_length"],

|

| 83 |

+

check_heart_beat, time.time())

|

| 84 |

+

|

| 85 |

+

logger.info(f"Register done: {worker_name}, {worker_status}")

|

| 86 |

+

return True

|

| 87 |

+

|

| 88 |

+

def get_worker_status(self, worker_name: str):

|

| 89 |

+

try:

|

| 90 |

+

r = requests.post(worker_name + "/worker_get_status", timeout=5)

|

| 91 |

+

except requests.exceptions.RequestException as e:

|

| 92 |

+

logger.error(f"Get status fails: {worker_name}, {e}")

|

| 93 |

+

return None

|

| 94 |

+

|

| 95 |

+

if r.status_code != 200:

|

| 96 |

+

logger.error(f"Get status fails: {worker_name}, {r}")

|

| 97 |

+

return None

|

| 98 |

+

|

| 99 |

+

return r.json()

|

| 100 |

+

|

| 101 |

+

def remove_worker(self, worker_name: str):

|

| 102 |

+

del self.worker_info[worker_name]

|

| 103 |

+

|

| 104 |

+

def refresh_all_workers(self):

|

| 105 |

+

old_info = dict(self.worker_info)

|

| 106 |

+

self.worker_info = {}

|

| 107 |

+

|

| 108 |

+

for w_name, w_info in old_info.items():

|

| 109 |

+

if not self.register_worker(w_name, w_info.check_heart_beat, None):

|

| 110 |

+

logger.info(f"Remove stale worker: {w_name}")

|

| 111 |

+

|

| 112 |

+

def list_models(self):

|

| 113 |

+

model_names = set()

|

| 114 |

+

|

| 115 |

+

for w_name, w_info in self.worker_info.items():

|

| 116 |

+

model_names.update(w_info.model_names)

|

| 117 |

+

|

| 118 |

+

return list(model_names)

|

| 119 |

+

|

| 120 |

+

def get_worker_address(self, model_name: str):

|

| 121 |

+

if self.dispatch_method == DispatchMethod.LOTTERY:

|

| 122 |

+

worker_names = []

|

| 123 |

+

worker_speeds = []

|

| 124 |

+

for w_name, w_info in self.worker_info.items():

|

| 125 |

+

if model_name in w_info.model_names:

|

| 126 |

+

worker_names.append(w_name)

|

| 127 |

+

worker_speeds.append(w_info.speed)

|

| 128 |

+

worker_speeds = np.array(worker_speeds, dtype=np.float32)

|

| 129 |

+

norm = np.sum(worker_speeds)

|

| 130 |

+

if norm < 1e-4:

|

| 131 |

+

return ""

|

| 132 |

+

worker_speeds = worker_speeds / norm

|

| 133 |

+

if True: # Directly return address

|

| 134 |

+

pt = np.random.choice(np.arange(len(worker_names)),

|

| 135 |

+

p=worker_speeds)

|

| 136 |

+

worker_name = worker_names[pt]

|

| 137 |

+

return worker_name

|

| 138 |

+

|

| 139 |

+

# Check status before returning

|

| 140 |

+

while True:

|

| 141 |

+

pt = np.random.choice(np.arange(len(worker_names)),

|

| 142 |

+

p=worker_speeds)

|

| 143 |

+

worker_name = worker_names[pt]

|

| 144 |

+

|

| 145 |

+

if self.get_worker_status(worker_name):

|

| 146 |

+

break

|

| 147 |

+

else:

|

| 148 |

+

self.remove_worker(worker_name)

|

| 149 |

+

worker_speeds[pt] = 0

|

| 150 |

+

norm = np.sum(worker_speeds)

|

| 151 |

+

if norm < 1e-4:

|

| 152 |

+

return ""

|

| 153 |

+

worker_speeds = worker_speeds / norm

|

| 154 |

+

continue

|

| 155 |

+

return worker_name

|

| 156 |

+

elif self.dispatch_method == DispatchMethod.SHORTEST_QUEUE:

|

| 157 |

+

worker_names = []

|

| 158 |

+

worker_qlen = []

|

| 159 |

+

for w_name, w_info in self.worker_info.items():

|

| 160 |

+

if model_name in w_info.model_names:

|

| 161 |

+

worker_names.append(w_name)

|

| 162 |

+

worker_qlen.append(w_info.queue_length / w_info.speed)

|

| 163 |

+

if len(worker_names) == 0:

|

| 164 |

+

return ""

|

| 165 |

+

min_index = np.argmin(worker_qlen)

|

| 166 |

+

w_name = worker_names[min_index]

|

| 167 |

+

self.worker_info[w_name].queue_length += 1

|

| 168 |

+

logger.info(f"names: {worker_names}, queue_lens: {worker_qlen}, ret: {w_name}")

|

| 169 |

+

return w_name

|

| 170 |

+

else:

|

| 171 |

+

raise ValueError(f"Invalid dispatch method: {self.dispatch_method}")

|

| 172 |

+

|

| 173 |

+

def receive_heart_beat(self, worker_name: str, queue_length: int):

|

| 174 |

+

if worker_name not in self.worker_info:

|

| 175 |

+

logger.info(f"Receive unknown heart beat. {worker_name}")

|

| 176 |

+

return False

|

| 177 |

+

|

| 178 |

+

self.worker_info[worker_name].queue_length = queue_length

|

| 179 |

+

self.worker_info[worker_name].last_heart_beat = time.time()

|

| 180 |

+

logger.info(f"Receive heart beat. {worker_name}")

|

| 181 |

+

return True

|

| 182 |

+

|

| 183 |

+

def remove_stable_workers_by_expiration(self):

|

| 184 |

+

expire = time.time() - CONTROLLER_HEART_BEAT_EXPIRATION

|

| 185 |

+

to_delete = []

|

| 186 |

+

for worker_name, w_info in self.worker_info.items():

|

| 187 |

+

if w_info.check_heart_beat and w_info.last_heart_beat < expire:

|

| 188 |

+

to_delete.append(worker_name)

|

| 189 |

+

|

| 190 |

+

for worker_name in to_delete:

|

| 191 |

+

self.remove_worker(worker_name)

|

| 192 |

+

|

| 193 |

+

def worker_api_generate_stream(self, params):

|

| 194 |

+

worker_addr = self.get_worker_address(params["model"])

|

| 195 |

+

if not worker_addr:

|

| 196 |

+

logger.info(f"no worker: {params['model']}")

|

| 197 |

+

ret = {

|

| 198 |

+

"text": server_error_msg,

|

| 199 |

+

"error_code": 2,

|

| 200 |

+

}

|

| 201 |

+

yield json.dumps(ret).encode() + b"\0"
return  # no worker is available, so there is nothing to stream from

|

| 202 |

+

|

| 203 |

+

try:

|

| 204 |

+

response = requests.post(worker_addr + "/worker_generate_stream",

|

| 205 |

+

json=params, stream=True, timeout=5)

|

| 206 |

+

for chunk in response.iter_lines(decode_unicode=False, delimiter=b"\0"):

|

| 207 |

+

if chunk:

|

| 208 |

+

yield chunk + b"\0"

|

| 209 |

+

except requests.exceptions.RequestException as e:

|

| 210 |

+

logger.info(f"worker timeout: {worker_addr}")

|

| 211 |

+

ret = {

|

| 212 |

+

"text": server_error_msg,

|

| 213 |

+

"error_code": 3,

|

| 214 |

+

}

|

| 215 |

+

yield json.dumps(ret).encode() + b"\0"

|

| 216 |

+

|

| 217 |

+

|

| 218 |

+

# Let the controller act as a worker to achieve hierarchical

|

| 219 |

+

# management. This can be used to connect isolated sub networks.

|

| 220 |

+

def worker_api_get_status(self):

|

| 221 |

+

model_names = set()

|

| 222 |

+

speed = 0

|

| 223 |

+

queue_length = 0

|

| 224 |

+

|

| 225 |

+

for w_name in self.worker_info:

|

| 226 |

+

worker_status = self.get_worker_status(w_name)

|

| 227 |

+