ABOUT_TEXT = """# Context

The growing number of code models released by the community necessitates a comprehensive evaluation to reliably benchmark their capabilities. Similar to the [🤗 Open LLM Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard), we selected two common benchmarks for evaluating Code LLMs on multiple programming languages:

- **[HumanEval](https://huggingface.co/datasets/openai_humaneval)** - benchmark for measuring functional correctness for synthesizing programs from docstrings. It consists of 164 Python programming problems.

- **[MultiPL-E](https://huggingface.co/datasets/nuprl/MultiPL-E)** - Translation of HumanEval to 18 programming languages.

- **Throughput Measurement** - In addition to these benchmarks, we also measure model throughput on a batch size of 1 and 50 to compare their inference speed.

### Benchamrks & Prompts

- HumanEval-Python reports the pass@1 on HumanEval; the rest is from MultiPL-E benchmark.

- For all languages, we use the original benchamrk prompts for all models except HumanEval-Python, where we separate base from instruction models. We use the original code completion prompts for HumanEval for all base models, but for Instruction models, we use the Instruction version of HumanEval in [HumanEvalSynthesize](https://huggingface.co/datasets/bigcode/humanevalpack) delimited by the tokens/text recommended by the authors of each model.

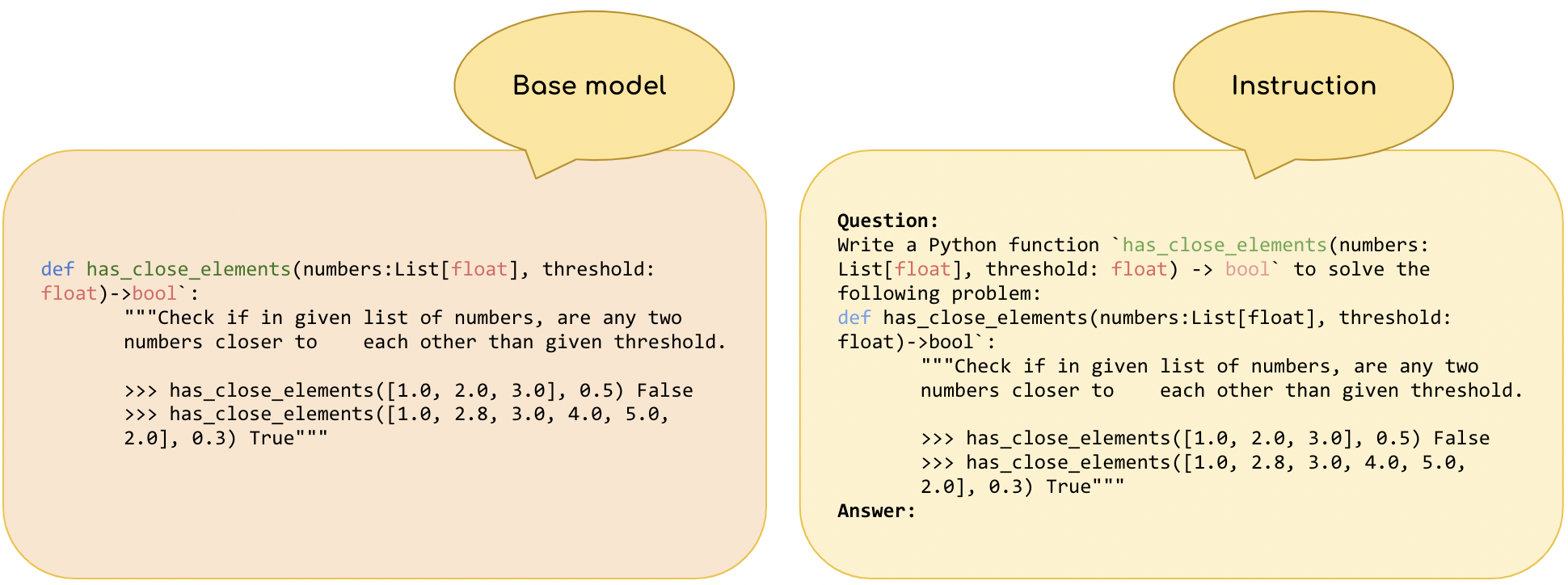

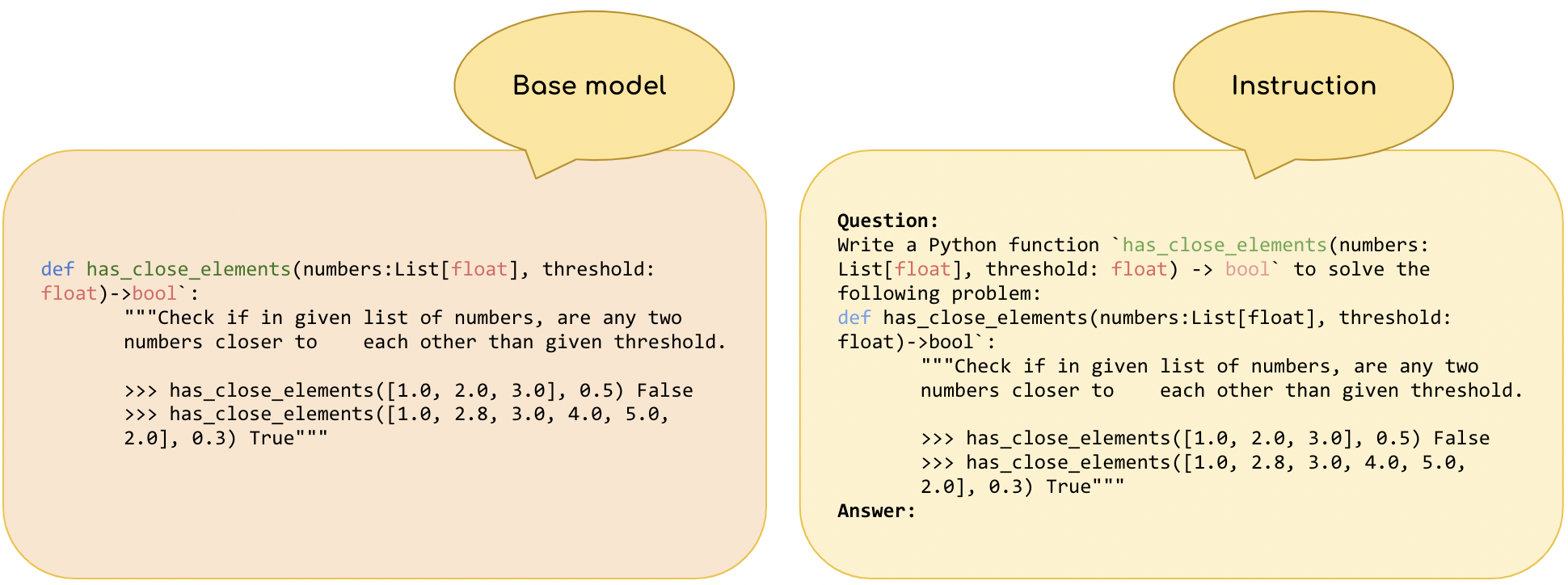

Figure below shows the example of OctoCoder vs Base HumanEval prompt, you can find the other prompts [here](https://github.com/bigcode-project/bigcode-evaluation-harness/blob/1d5e773a65a764ce091dd3eded78005e9144935e/lm_eval/tasks/humanevalpack.py#L211).

- An exception to this is the Phind models. They seem to follow to base prompts better than the instruction versions. Therefore, following the authors' recommendation we use base HumanEval prompts.

- Also note that for WizardCoder-Python-34B-V1.0 & WizardCoder-Python-13B-V1.0 (CodeLLaMa based), we use the HumanEval-Python instruction prompt that the original authors used with their postprocessing (instead of HumanEvalSynthesize), code is available [here](https://github.com/bigcode-project/bigcode-evaluation-harness/pull/133)).

### Evaluation Parameters

- All models were evaluated with the [bigcode-evaluation-harness](https://github.com/bigcode-project/bigcode-evaluation-harness/tree/main) with top-p=0.95, temperature=0.2, max_length_generation 512, and n_samples=50.

### Throughput and Memory Usage

- Throughputs and peak memory usage are measured using [Optimum-Benchmark](https://github.com/huggingface/optimum-benchmark/tree/main) which powers [Open LLM-Perf Leaderboard](https://huggingface.co/spaces/optimum/llm-perf-leaderboard). (0 throughput corresponds to OOM).

### Scoring and Rankings

- Average score is the average pass@1 over all languages. For Win Rate, we find model rank for each language and compute `num_models - (rank -1)`, then average this result over all languages.

### Miscellaneous

- #Languages column represents the number of programming languages included during the pretraining. UNK means the number of languages is unknown.

"""

SUBMISSION_TEXT = """

- An exception to this is the Phind models. They seem to follow to base prompts better than the instruction versions. Therefore, following the authors' recommendation we use base HumanEval prompts.

- Also note that for WizardCoder-Python-34B-V1.0 & WizardCoder-Python-13B-V1.0 (CodeLLaMa based), we use the HumanEval-Python instruction prompt that the original authors used with their postprocessing (instead of HumanEvalSynthesize), code is available [here](https://github.com/bigcode-project/bigcode-evaluation-harness/pull/133)).

### Evaluation Parameters

- All models were evaluated with the [bigcode-evaluation-harness](https://github.com/bigcode-project/bigcode-evaluation-harness/tree/main) with top-p=0.95, temperature=0.2, max_length_generation 512, and n_samples=50.

### Throughput and Memory Usage

- Throughputs and peak memory usage are measured using [Optimum-Benchmark](https://github.com/huggingface/optimum-benchmark/tree/main) which powers [Open LLM-Perf Leaderboard](https://huggingface.co/spaces/optimum/llm-perf-leaderboard). (0 throughput corresponds to OOM).

### Scoring and Rankings

- Average score is the average pass@1 over all languages. For Win Rate, we find model rank for each language and compute `num_models - (rank -1)`, then average this result over all languages.

### Miscellaneous

- #Languages column represents the number of programming languages included during the pretraining. UNK means the number of languages is unknown.

"""

SUBMISSION_TEXT = """

How to submit models/results to the leaderboard?

We welcome the community to submit evaluation results of new models. We also provide an experiental feature for submitting models that our team will evaluate on the 🤗 cluster.

## Submitting Models (experimental feature)

Inspired from the Open LLM Leaderboard, we welcome code models submission from the community that will be automatically evaluated. Please note that this is still an experimental feature.

Below are some guidlines to follow before submitting your model:

#### 1) Make sure you can load your model and tokenizer using AutoClasses:

```python

from transformers import AutoConfig, AutoModel, AutoTokenizer

config = AutoConfig.from_pretrained("your model name", revision=revision)

model = AutoModel.from_pretrained("your model name", revision=revision)

tokenizer = AutoTokenizer.from_pretrained("your model name", revision=revision)

```

If this step fails, follow the error messages to debug your model before submitting it. It's likely your model has been improperly uploaded.

Note: make sure your model is public!

Note: if your model needs `use_remote_code=True`, we do not support this option yet.

#### 2) Convert your model weights to [safetensors](https://huggingface.co/docs/safetensors/index)

It's a new format for storing weights which is safer and faster to load and use. It will also allow us to add the number of parameters of your model to the `Extended Viewer`!

#### 3) Make sure your model has an open license!

This is a leaderboard for Open LLMs, and we'd love for as many people as possible to know they can use your model 🤗

#### 4) Fill up your model card

When we add extra information about models to the leaderboard, it will be automatically taken from the model card.

"""

SUBMISSION_TEXT_2 = """

## Sumbitting Results

You also have the option for running evaluation yourself and submitting results. These results will be added as non-verified, the authors are however required to upload their generations in case other members want to check.

### 1 - Running Evaluation

We wrote a detailed guide for running the evaluation on your model. You can find the it in [bigcode-evaluation-harness/leaderboard](https://github.com/bigcode-project/bigcode-evaluation-harness/tree/main/leaderboard). This will generate a json file summarizing the results, in addition to the raw generations and metric files.

### 2- Submitting Results 🚀

To submit your results create a **Pull Request** in the community tab to add them under the [folder](https://huggingface.co/spaces/bigcode/multilingual-code-evals/tree/main/community_results) `community_results` in this repository:

- Create a folder called `ORG_MODELNAME_USERNAME` for example `bigcode_starcoder_loubnabnl`

- Put your json file with grouped scores from the guide, in addition generations folder and metrics folder in it.

The title of the PR should be `[Community Submission] Model: org/model, Username: your_username`, replace org and model with those corresponding to the model you evaluated.

""" - An exception to this is the Phind models. They seem to follow to base prompts better than the instruction versions. Therefore, following the authors' recommendation we use base HumanEval prompts.

- Also note that for WizardCoder-Python-34B-V1.0 & WizardCoder-Python-13B-V1.0 (CodeLLaMa based), we use the HumanEval-Python instruction prompt that the original authors used with their postprocessing (instead of HumanEvalSynthesize), code is available [here](https://github.com/bigcode-project/bigcode-evaluation-harness/pull/133)).

### Evaluation Parameters

- All models were evaluated with the [bigcode-evaluation-harness](https://github.com/bigcode-project/bigcode-evaluation-harness/tree/main) with top-p=0.95, temperature=0.2, max_length_generation 512, and n_samples=50.

### Throughput and Memory Usage

- Throughputs and peak memory usage are measured using [Optimum-Benchmark](https://github.com/huggingface/optimum-benchmark/tree/main) which powers [Open LLM-Perf Leaderboard](https://huggingface.co/spaces/optimum/llm-perf-leaderboard). (0 throughput corresponds to OOM).

### Scoring and Rankings

- Average score is the average pass@1 over all languages. For Win Rate, we find model rank for each language and compute `num_models - (rank -1)`, then average this result over all languages.

### Miscellaneous

- #Languages column represents the number of programming languages included during the pretraining. UNK means the number of languages is unknown.

"""

SUBMISSION_TEXT = """

- An exception to this is the Phind models. They seem to follow to base prompts better than the instruction versions. Therefore, following the authors' recommendation we use base HumanEval prompts.

- Also note that for WizardCoder-Python-34B-V1.0 & WizardCoder-Python-13B-V1.0 (CodeLLaMa based), we use the HumanEval-Python instruction prompt that the original authors used with their postprocessing (instead of HumanEvalSynthesize), code is available [here](https://github.com/bigcode-project/bigcode-evaluation-harness/pull/133)).

### Evaluation Parameters

- All models were evaluated with the [bigcode-evaluation-harness](https://github.com/bigcode-project/bigcode-evaluation-harness/tree/main) with top-p=0.95, temperature=0.2, max_length_generation 512, and n_samples=50.

### Throughput and Memory Usage

- Throughputs and peak memory usage are measured using [Optimum-Benchmark](https://github.com/huggingface/optimum-benchmark/tree/main) which powers [Open LLM-Perf Leaderboard](https://huggingface.co/spaces/optimum/llm-perf-leaderboard). (0 throughput corresponds to OOM).

### Scoring and Rankings

- Average score is the average pass@1 over all languages. For Win Rate, we find model rank for each language and compute `num_models - (rank -1)`, then average this result over all languages.

### Miscellaneous

- #Languages column represents the number of programming languages included during the pretraining. UNK means the number of languages is unknown.

"""

SUBMISSION_TEXT = """