Upload 163 files

This view is limited to 50 files because it contains too many changes.

- README.md +6 -6

- __pycache__/multipage.cpython-37.pyc +0 -0

- app_pages/__pycache__/about.cpython-37.pyc +0 -0

- app_pages/__pycache__/home.cpython-37.pyc +0 -0

- app_pages/__pycache__/ocr_comparator.cpython-37.pyc +0 -0

- app_pages/about.py +37 -0

- app_pages/home.py +19 -0

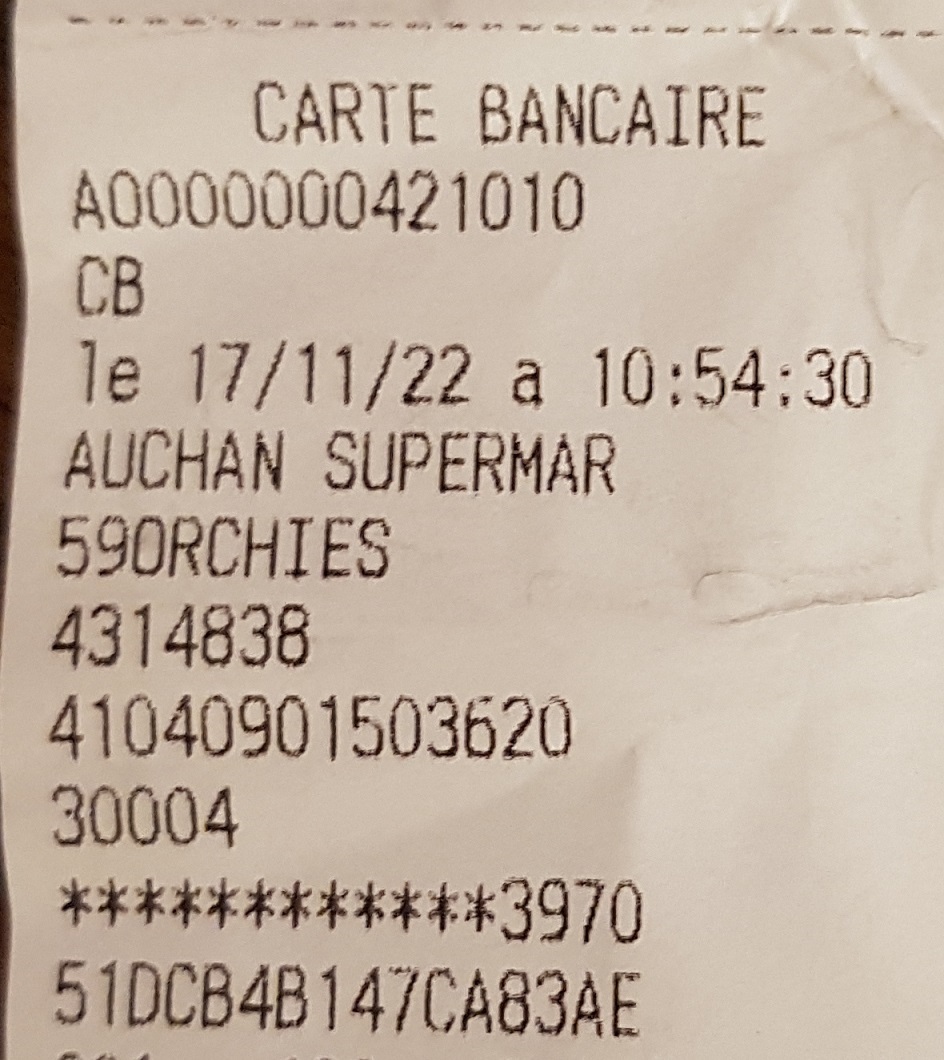

- app_pages/img_demo_1.jpg +0 -0

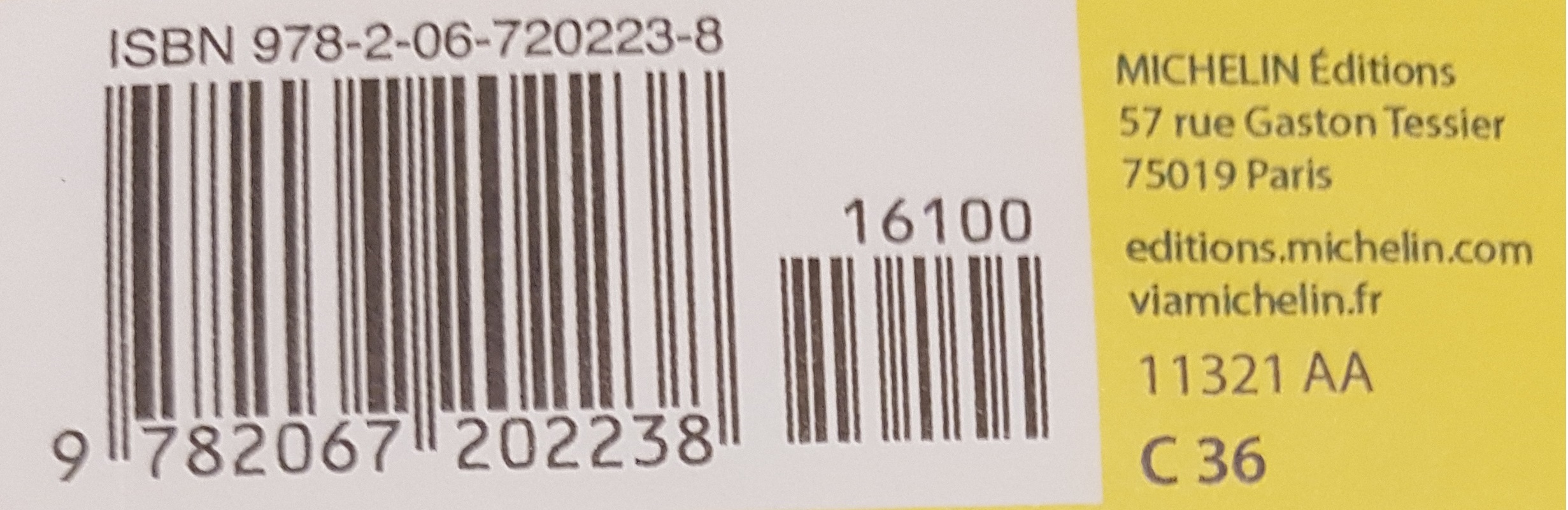

- app_pages/img_demo_2.jpg +0 -0

- app_pages/ocr.png +0 -0

- app_pages/ocr_comparator.py +1447 -0

- configs/_base_/default_runtime.py +17 -0

- configs/_base_/det_datasets/ctw1500.py +18 -0

- configs/_base_/det_datasets/icdar2015.py +18 -0

- configs/_base_/det_datasets/icdar2017.py +18 -0

- configs/_base_/det_datasets/synthtext.py +18 -0

- configs/_base_/det_datasets/toy_data.py +41 -0

- configs/_base_/det_models/dbnet_r18_fpnc.py +21 -0

- configs/_base_/det_models/dbnet_r50dcnv2_fpnc.py +23 -0

- configs/_base_/det_models/dbnetpp_r50dcnv2_fpnc.py +28 -0

- configs/_base_/det_models/drrg_r50_fpn_unet.py +21 -0

- configs/_base_/det_models/fcenet_r50_fpn.py +33 -0

- configs/_base_/det_models/fcenet_r50dcnv2_fpn.py +35 -0

- configs/_base_/det_models/ocr_mask_rcnn_r50_fpn_ohem.py +126 -0

- configs/_base_/det_models/ocr_mask_rcnn_r50_fpn_ohem_poly.py +126 -0

- configs/_base_/det_models/panet_r18_fpem_ffm.py +43 -0

- configs/_base_/det_models/panet_r50_fpem_ffm.py +21 -0

- configs/_base_/det_models/psenet_r50_fpnf.py +51 -0

- configs/_base_/det_models/textsnake_r50_fpn_unet.py +22 -0

- configs/_base_/det_pipelines/dbnet_pipeline.py +88 -0

- configs/_base_/det_pipelines/drrg_pipeline.py +60 -0

- configs/_base_/det_pipelines/fcenet_pipeline.py +118 -0

- configs/_base_/det_pipelines/maskrcnn_pipeline.py +57 -0

- configs/_base_/det_pipelines/panet_pipeline.py +156 -0

- configs/_base_/det_pipelines/psenet_pipeline.py +70 -0

- configs/_base_/det_pipelines/textsnake_pipeline.py +65 -0

- configs/_base_/recog_datasets/MJ_train.py +21 -0

- configs/_base_/recog_datasets/ST_MJ_alphanumeric_train.py +31 -0

- configs/_base_/recog_datasets/ST_MJ_train.py +29 -0

- configs/_base_/recog_datasets/ST_SA_MJ_real_train.py +81 -0

- configs/_base_/recog_datasets/ST_SA_MJ_train.py +48 -0

- configs/_base_/recog_datasets/ST_charbox_train.py +23 -0

- configs/_base_/recog_datasets/academic_test.py +57 -0

- configs/_base_/recog_datasets/seg_toy_data.py +34 -0

- configs/_base_/recog_datasets/toy_data.py +54 -0

- configs/_base_/recog_models/abinet.py +70 -0

- configs/_base_/recog_models/crnn.py +12 -0

- configs/_base_/recog_models/crnn_tps.py +18 -0

- configs/_base_/recog_models/master.py +61 -0

- configs/_base_/recog_models/nrtr_modality_transform.py +11 -0

README.md

CHANGED

|

@@ -1,11 +1,11 @@

|

|

| 1 |

---

|

| 2 |

title: Streamlit OCR

|

| 3 |

-

emoji:

|

| 4 |

-

colorFrom:

|

| 5 |

-

colorTo:

|

| 6 |

-

sdk:

|

| 7 |

-

sdk_version:

|

| 8 |

-

app_file:

|

| 9 |

pinned: false

|

| 10 |

license: apache-2.0

|

| 11 |

---

|

|

|

|

| 1 |

---

|

| 2 |

title: Streamlit OCR

|

| 3 |

+

emoji: ⚡

|

| 4 |

+

colorFrom: purple

|

| 5 |

+

colorTo: green

|

| 6 |

+

sdk: gradio

|

| 7 |

+

sdk_version: 4.36.1

|

| 8 |

+

app_file: ocr_streamlit.py

|

| 9 |

pinned: false

|

| 10 |

license: apache-2.0

|

| 11 |

---

|

__pycache__/multipage.cpython-37.pyc

ADDED

|

Binary file (2.65 kB). View file

|

|

|

app_pages/__pycache__/about.cpython-37.pyc

ADDED

|

Binary file (2.02 kB). View file

|

|

|

app_pages/__pycache__/home.cpython-37.pyc

ADDED

|

Binary file (889 Bytes). View file

|

|

|

app_pages/__pycache__/ocr_comparator.cpython-37.pyc

ADDED

|

Binary file (48.1 kB). View file

|

|

|

app_pages/about.py

ADDED

|

@@ -0,0 +1,37 @@

| 1 |

+

import streamlit as st

|

| 2 |

+

|

| 3 |

+

def app():

|

| 4 |

+

st.title("OCR solutions comparator")

|

| 5 |

+

|

| 6 |

+

st.write("")

|

| 7 |

+

st.write("")

|

| 8 |

+

st.write("")

|

| 9 |

+

|

| 10 |

+

st.markdown("##### This app allows you to compare, from a given picture, the results of different solutions:")

|

| 11 |

+

st.markdown("##### *EasyOcr, PaddleOCR, MMOCR, Tesseract*")

|

| 12 |

+

st.write("")

|

| 13 |

+

st.write("")

|

| 14 |

+

|

| 15 |

+

st.markdown(''' The 1st step is to choose the language for the text recognition (not all solutions \

|

| 16 |

+

support the same languages), and then choose the picture to consider. It is possible to upload a file, \

|

| 17 |

+

to take a picture, or to use a demo file. \

|

| 18 |

+

It is then possible to change the default values for the text area detection process, \

|

| 19 |

+

before launching the detection task for each solution.''')

|

| 20 |

+

st.write("")

|

| 21 |

+

|

| 22 |

+

st.markdown(''' The different results are then presented. The 2nd step is to choose one of these \

|

| 23 |

+

detection results, in order to carry out the text recognition process there. It is also possible to change \

|

| 24 |

+

the default settings for each solution.''')

|

| 25 |

+

st.write("")

|

| 26 |

+

|

| 27 |

+

st.markdown("###### The recognition results appear in 2 formats:")

|

| 28 |

+

st.markdown(''' - a visual format reproduces the initial image, replacing the detected areas with \

|

| 29 |

+

the recognized text. The background is more or less strongly colored in green according to the \

|

| 30 |

+

confidence level of the recognition.

|

| 31 |

+

A slider allows you to change the font size, another \

|

| 32 |

+

allows you to modify the confidence threshold above which the text color changes: if it is at \

|

| 33 |

+

70% for example, then all the texts with a confidence level higher than or equal to 70% will appear \

|

| 34 |

+

in white, in black otherwise.''')

|

| 35 |

+

|

| 36 |

+

st.markdown(" - a detailed format presents the results in a table, for each text box detected. \

|

| 37 |

+

It is possible to download these results as a local CSV file.")

|
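
The text-color rule described in the About page can be illustrated with a small sketch. This is not code from the commit: the function name `text_color`, the sample results, and the assumption that confidences and the threshold are percentages in the range 0 to 100 are all hypothetical.

```python
def text_color(confidence: float, threshold: float = 70.0) -> str:
    """Return the overlay text color for one recognized text box.

    Assumption: confidence and threshold are percentages in [0, 100].
    Text at or above the threshold is drawn in white, otherwise in black.
    """
    return "white" if confidence >= threshold else "black"


# Hypothetical recognition results: (text, confidence in %)
results = [("INVOICE", 93.5), ("T0TAL", 41.0), ("2024", 70.0)]
colors = {text: text_color(conf) for text, conf in results}
print(colors)
```

The comparison is inclusive, so a box whose confidence equals the threshold is drawn in white, which matches the "higher than or equal to" wording of the page.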

app_pages/home.py

ADDED

|

@@ -0,0 +1,19 @@

| 1 |

+

import streamlit as st

|

| 2 |

+

|

| 3 |

+

def app():

|

| 4 |

+

st.image('ocr.png')

|

| 5 |

+

|

| 6 |

+

st.write("")

|

| 7 |

+

|

| 8 |

+

st.markdown('''#### OCR, or Optical Character Recognition, is a computer vision task, \

|

| 9 |

+

which includes the detection of text areas, and the recognition of characters.''')

|

| 10 |

+

st.write("")

|

| 11 |

+

st.write("")

|

| 12 |

+

|

| 13 |

+

st.markdown("##### This app allows you to compare, from a given image, the results of different solutions:")

|

| 14 |

+

st.markdown("##### *EasyOcr, PaddleOCR, MMOCR, Tesseract*")

|

| 15 |

+

st.write("")

|

| 16 |

+

st.write("")

|

| 17 |

+

st.markdown("👈 Select the **About** page from the sidebar for information on how the app works")

|

| 18 |

+

|

| 19 |

+

st.markdown("👈 or directly select the **App** page")

|

app_pages/img_demo_1.jpg

ADDED

|

app_pages/img_demo_2.jpg

ADDED

|

app_pages/ocr.png

ADDED

|

app_pages/ocr_comparator.py

ADDED

|

@@ -0,0 +1,1447 @@

| 1 |

+

"""This Streamlit app allows you to compare, from a given image, the results of different solutions:

|

| 2 |

+

EasyOcr, PaddleOCR, MMOCR, Tesseract

|

| 3 |

+

"""

|

| 4 |

+

|

| 5 |

+

#import mim

|

| 6 |

+

#

|

| 7 |

+

#mim.install(['mmengine>=0.7.1,<1.1.0'])

|

| 8 |

+

#mim.install(['mmcv>=2.0.0rc4,<2.1.0'])

|

| 9 |

+

#mim.install(['mmdet>=3.0.rc5,<3.2.0'])

|

| 10 |

+

#mim.install(['mmocr'])

|

| 11 |

+

|

| 12 |

+

import streamlit as st

|

| 13 |

+

import plotly.express as px

|

| 14 |

+

import numpy as np

|

| 15 |

+

import math

|

| 16 |

+

import pandas as pd

|

| 17 |

+

from time import sleep

|

| 18 |

+

|

| 19 |

+

import cv2

|

| 20 |

+

from PIL import Image, ImageColor

|

| 21 |

+

import PIL

|

| 22 |

+

import easyocr

|

| 23 |

+

from paddleocr import PaddleOCR

|

| 24 |

+

#from mmocr.utils.ocr import MMOCR

|

| 25 |

+

import pytesseract

|

| 26 |

+

from pytesseract import Output

|

| 27 |

+

import os

|

| 28 |

+

from mycolorpy import colorlist as mcp

|

| 29 |

+

|

| 30 |

+

|

| 31 |

+

###################################################################################################

|

| 32 |

+

## MAIN

|

| 33 |

+

###################################################################################################

|

| 34 |

+

def app():

|

| 35 |

+

|

| 36 |

+

###################################################################################################

|

| 37 |

+

## FUNCTIONS

|

| 38 |

+

###################################################################################################

|

| 39 |

+

|

| 40 |

+

@st.cache

|

| 41 |

+

def convert_df(in_df):

|

| 42 |

+

"""Convert data frame function, used by download button

|

| 43 |

+

|

| 44 |

+

Args:

|

| 45 |

+

in_df (data frame): data frame to convert

|

| 46 |

+

|

| 47 |

+

Returns:

|

| 48 |

+

bytes: the data frame serialized as UTF-8 encoded CSV

|

| 49 |

+

"""

|

| 50 |

+

# IMPORTANT: Cache the conversion to prevent computation on every rerun

|

| 51 |

+

return in_df.to_csv().encode('utf-8')

|

| 52 |

+

|

| 53 |

+

###

|

| 54 |

+

def easyocr_coord_convert(in_list_coord):

|

| 55 |

+

"""Convert easyocr coordinates to standard format used by other functions

|

| 56 |

+

|

| 57 |

+

Args:

|

| 58 |

+

in_list_coord (list of numbers): format [x_min, x_max, y_min, y_max]

|

| 59 |

+

|

| 60 |

+

Returns:

|

| 61 |

+

list of lists: format [ [x_min, y_min], [x_max, y_min], [x_max, y_max], [x_min, y_max] ]

|

| 62 |

+

"""

|

| 63 |

+

|

| 64 |

+

coord = in_list_coord

|

| 65 |

+

return [[coord[0], coord[2]], [coord[1], coord[2]], [coord[1], coord[3]], [coord[0], coord[3]]]

|
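
As a quick check of the conversion above, here is a standalone sketch that restates the same mapping with named variables. The name `bbox_to_corners` and the sample box are hypothetical and chosen only for illustration; the input layout `[x_min, x_max, y_min, y_max]` is the one given in the docstring.

```python
from typing import List, Sequence


def bbox_to_corners(coord: Sequence[float]) -> List[List[float]]:
    """Turn [x_min, x_max, y_min, y_max] into four [x, y] corner points.

    Corners are listed as top-left, top-right, bottom-right, bottom-left,
    assuming image coordinates where y grows downwards.
    """
    x_min, x_max, y_min, y_max = coord
    return [[x_min, y_min], [x_max, y_min], [x_max, y_max], [x_min, y_max]]


# Hypothetical box: x from 10 to 50, y from 20 to 40
corners = bbox_to_corners([10, 50, 20, 40])
print(corners)  # [[10, 20], [50, 20], [50, 40], [10, 40]]
```

Unpacking into named variables makes the axis order explicit, which is easy to get wrong because the input groups both x values before both y values.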

| 66 |

+

|

| 67 |

+

###

|

| 68 |

+

@st.cache(show_spinner=False)

|

| 69 |

+

def initializations():

|

| 70 |

+

"""Initializations for the app

|

| 71 |

+

|

| 72 |

+

Returns:

|

| 73 |

+

list of strings : list of OCR solutions names

|

| 74 |

+

(['EasyOCR', 'PPOCR', 'Tesseract'])

|

| 75 |

+

dict : names and indices of the OCR solutions

|

| 76 |

+

({'EasyOCR': 0, 'PPOCR': 1, 'Tesseract': 2})

|

| 77 |

+

list of dicts : list of languages supported by each OCR solution

|

| 78 |

+

list of int : columns for recognition details results

|

| 79 |

+

dict : confidence color scale

|

| 80 |

+

plotly figure : confidence color scale figure

|

| 81 |

+

"""

|

| 82 |

+

# the readers considered

|

| 83 |

+

#out_reader_type_list = ['EasyOCR', 'PPOCR', 'MMOCR', 'Tesseract']

|

| 84 |

+

#out_reader_type_dict = {'EasyOCR': 0, 'PPOCR': 1, 'MMOCR': 2, 'Tesseract': 3}

|

| 85 |

+

out_reader_type_list = ['EasyOCR', 'PPOCR', 'Tesseract']

|

| 86 |

+

out_reader_type_dict = {'EasyOCR': 0, 'PPOCR': 1, 'Tesseract': 2}

|

| 87 |

+

|

| 88 |

+

# Columns for recognition details results

|

| 89 |

+

out_cols_size = [2] + [2,1]*(len(out_reader_type_list)-1) # Except Tesseract

|

| 90 |

+

|

| 91 |

+

# Dicts of languages supported by each reader

|

| 92 |

+

out_dict_lang_easyocr = {'Abaza': 'abq', 'Adyghe': 'ady', 'Afrikaans': 'af', 'Angika': 'ang', \

|

| 93 |

+

'Arabic': 'ar', 'Assamese': 'as', 'Avar': 'ava', 'Azerbaijani': 'az', 'Belarusian': 'be', \

|

| 94 |

+

'Bulgarian': 'bg', 'Bihari': 'bh', 'Bhojpuri': 'bho', 'Bengali': 'bn', 'Bosnian': 'bs', \

|

| 95 |

+

'Simplified Chinese': 'ch_sim', 'Traditional Chinese': 'ch_tra', 'Chechen': 'che', \

|

| 96 |

+

'Czech': 'cs', 'Welsh': 'cy', 'Danish': 'da', 'Dargwa': 'dar', 'German': 'de', \

|

| 97 |

+

'English': 'en', 'Spanish': 'es', 'Estonian': 'et', 'Persian (Farsi)': 'fa', 'French': 'fr', \

|

| 98 |

+

'Irish': 'ga', 'Goan Konkani': 'gom', 'Hindi': 'hi', 'Croatian': 'hr', 'Hungarian': 'hu', \

|

| 99 |

+

'Indonesian': 'id', 'Ingush': 'inh', 'Icelandic': 'is', 'Italian': 'it', 'Japanese': 'ja', \

|

| 100 |

+

'Kabardian': 'kbd', 'Kannada': 'kn', 'Korean': 'ko', 'Kurdish': 'ku', 'Latin': 'la', \

|

| 101 |

+

'Lak': 'lbe', 'Lezghian': 'lez', 'Lithuanian': 'lt', 'Latvian': 'lv', 'Magahi': 'mah', \

|

| 102 |

+

'Maithili': 'mai', 'Maori': 'mi', 'Mongolian': 'mn', 'Marathi': 'mr', 'Malay': 'ms', \

|

| 103 |

+

'Maltese': 'mt', 'Nepali': 'ne', 'Newari': 'new', 'Dutch': 'nl', 'Norwegian': 'no', \

|

| 104 |

+

'Occitan': 'oc', 'Pali': 'pi', 'Polish': 'pl', 'Portuguese': 'pt', 'Romanian': 'ro', \

|

| 105 |

+

'Russian': 'ru', 'Serbian (cyrillic)': 'rs_cyrillic', 'Serbian (latin)': 'rs_latin', \

|

| 106 |

+

'Nagpuri': 'sck', 'Slovak': 'sk', 'Slovenian': 'sl', 'Albanian': 'sq', 'Swedish': 'sv', \

|

| 107 |

+

'Swahili': 'sw', 'Tamil': 'ta', 'Tabassaran': 'tab', 'Telugu': 'te', 'Thai': 'th', \

|

| 108 |

+

'Tajik': 'tjk', 'Tagalog': 'tl', 'Turkish': 'tr', 'Uyghur': 'ug', 'Ukranian': 'uk', \

|

| 109 |

+

'Urdu': 'ur', 'Uzbek': 'uz', 'Vietnamese': 'vi'}

|

| 110 |

+

|

| 111 |

+

out_dict_lang_ppocr = {'Abaza': 'abq', 'Adyghe': 'ady', 'Afrikaans': 'af', 'Albanian': 'sq', \

|

| 112 |

+

'Angika': 'ang', 'Arabic': 'ar', 'Avar': 'ava', 'Azerbaijani': 'az', 'Belarusian': 'be', \

|

| 113 |

+

'Bhojpuri': 'bho','Bihari': 'bh','Bosnian': 'bs','Bulgarian': 'bg','Chinese & English': 'ch', \

|

| 114 |

+

'Chinese Traditional': 'chinese_cht', 'Croatian': 'hr', 'Czech': 'cs', 'Danish': 'da', \

|

| 115 |

+

'Dargwa': 'dar', 'Dutch': 'nl', 'English': 'en', 'Estonian': 'et', 'French': 'fr', \

|

| 116 |

+

'German': 'german','Goan Konkani': 'gom','Hindi': 'hi','Hungarian': 'hu','Icelandic': 'is', \

|

| 117 |

+

'Indonesian': 'id', 'Ingush': 'inh', 'Irish': 'ga', 'Italian': 'it', 'Japan': 'japan', \

|

| 118 |

+

'Kabardian': 'kbd', 'Korean': 'korean', 'Kurdish': 'ku', 'Lak': 'lbe', 'Latvian': 'lv', \

|

| 119 |

+

'Lezghian': 'lez', 'Lithuanian': 'lt', 'Magahi': 'mah', 'Maithili': 'mai', 'Malay': 'ms', \

|

| 120 |

+

'Maltese': 'mt', 'Maori': 'mi', 'Marathi': 'mr', 'Mongolian': 'mn', 'Nagpur': 'sck', \

|

| 121 |

+

'Nepali': 'ne', 'Newari': 'new', 'Norwegian': 'no', 'Occitan': 'oc', 'Persian': 'fa', \

|

| 122 |

+

'Polish': 'pl', 'Portuguese': 'pt', 'Romanian': 'ro', 'Russia': 'ru', 'Saudi Arabia': 'sa', \

|

| 123 |

+

'Serbian(cyrillic)': 'rs_cyrillic', 'Serbian(latin)': 'rs_latin', 'Slovak': 'sk', \

|

| 124 |

+

'Slovenian': 'sl', 'Spanish': 'es', 'Swahili': 'sw', 'Swedish': 'sv', 'Tabassaran': 'tab', \

|

| 125 |

+

'Tagalog': 'tl', 'Tamil': 'ta', 'Telugu': 'te', 'Turkish': 'tr', 'Ukranian': 'uk', \

|

| 126 |

+

'Urdu': 'ur', 'Uyghur': 'ug', 'Uzbek': 'uz', 'Vietnamese': 'vi', 'Welsh': 'cy'}

|

| 127 |

+

|

| 128 |

+

#out_dict_lang_mmocr = {'English & Chinese': 'en'}

|

| 129 |

+

|

| 130 |

+

out_dict_lang_tesseract = {'Afrikaans': 'afr','Albanian': 'sqi','Amharic': 'amh', \

|

| 131 |

+

'Arabic': 'ara', 'Armenian': 'hye','Assamese': 'asm','Azerbaijani - Cyrilic': 'aze_cyrl', \

|

| 132 |

+

'Azerbaijani': 'aze', 'Basque': 'eus','Belarusian': 'bel','Bengali': 'ben','Bosnian': 'bos', \

|

| 133 |

+

'Breton': 'bre', 'Bulgarian': 'bul','Burmese': 'mya','Catalan; Valencian': 'cat', \

|

| 134 |

+

'Cebuano': 'ceb', 'Central Khmer': 'khm','Cherokee': 'chr','Chinese - Simplified': 'chi_sim', \

|

| 135 |

+

'Chinese - Traditional': 'chi_tra','Corsican': 'cos','Croatian': 'hrv','Czech': 'ces', \

|

| 136 |

+

'Danish':'dan','Dutch; Flemish':'nld','Dzongkha':'dzo','English, Middle (1100-1500)':'enm', \

|

| 137 |

+

'English': 'eng','Esperanto': 'epo','Estonian': 'est','Faroese': 'fao', \

|

| 138 |

+

'Filipino (old - Tagalog)': 'fil','Finnish': 'fin','French, Middle (ca.1400-1600)': 'frm', \

|

| 139 |

+

'French': 'fra','Galician': 'glg','Georgian - Old': 'kat_old','Georgian': 'kat', \

|

| 140 |

+

'German - Fraktur': 'frk','German': 'deu','Greek, Modern (1453-)': 'ell','Gujarati': 'guj', \

|

| 141 |

+

'Haitian; Haitian Creole': 'hat','Hebrew': 'heb','Hindi': 'hin','Hungarian': 'hun', \

|

| 142 |

+

'Icelandic': 'isl','Indonesian': 'ind','Inuktitut': 'iku','Irish': 'gle', \

|

| 143 |

+

'Italian - Old': 'ita_old','Italian': 'ita','Japanese': 'jpn','Javanese': 'jav', \

|

| 144 |

+

'Kannada': 'kan','Kazakh': 'kaz','Kirghiz; Kyrgyz': 'kir','Korean (vertical)': 'kor_vert', \

|

| 145 |

+

'Korean': 'kor','Kurdish (Arabic Script)': 'kur_ara','Lao': 'lao','Latin': 'lat', \

|

| 146 |

+

'Latvian':'lav','Lithuanian':'lit','Luxembourgish':'ltz','Macedonian':'mkd','Malay':'msa', \

|

| 147 |

+

'Malayalam': 'mal','Maltese': 'mlt','Maori': 'mri','Marathi': 'mar','Mongolian': 'mon', \

|

| 148 |

+

'Nepali': 'nep','Norwegian': 'nor','Occitan (post 1500)': 'oci', \

|

| 149 |

+

'Orientation and script detection module':'osd','Oriya':'ori','Panjabi; Punjabi':'pan', \

|

| 150 |

+

'Persian':'fas','Polish':'pol','Portuguese':'por','Pushto; Pashto':'pus','Quechua':'que', \

|

| 151 |

+

'Romanian; Moldavian; Moldovan': 'ron','Russian': 'rus','Sanskrit': 'san', \

|

| 152 |

+

'Scottish Gaelic': 'gla','Serbian - Latin': 'srp_latn','Serbian': 'srp','Sindhi': 'snd', \

|

| 153 |

+

'Sinhala; Sinhalese': 'sin','Slovak': 'slk','Slovenian': 'slv', \

|

| 154 |

+

'Spanish; Castilian - Old': 'spa_old','Spanish; Castilian': 'spa','Sundanese': 'sun', \

|

| 155 |

+

'Swahili': 'swa','Swedish': 'swe','Syriac': 'syr','Tajik': 'tgk','Tamil': 'tam', \

|

| 156 |

+

'Tatar':'tat','Telugu':'tel','Thai':'tha','Tibetan':'bod','Tigrinya':'tir','Tonga':'ton', \

|

| 157 |

+

'Turkish': 'tur','Uighur; Uyghur': 'uig','Ukrainian': 'ukr','Urdu': 'urd', \

|

| 158 |

+

'Uzbek - Cyrilic': 'uzb_cyrl','Uzbek': 'uzb','Vietnamese': 'vie','Welsh': 'cym', \

|

| 159 |

+

'Western Frisian': 'fry','Yiddish': 'yid','Yoruba': 'yor'}

|

| 160 |

+

|

| 161 |

+

out_list_dict_lang = [out_dict_lang_easyocr, out_dict_lang_ppocr, \

|

| 162 |

+

#out_dict_lang_mmocr, \

|

| 163 |

+

out_dict_lang_tesseract]

    # Initialization of detection form
    if 'columns_size' not in st.session_state:
        st.session_state.columns_size = [2] + [1 for x in out_reader_type_list[1:]]
    if 'column_width' not in st.session_state:
        st.session_state.column_width = [400] + [300 for x in out_reader_type_list[1:]]
    if 'columns_color' not in st.session_state:
        st.session_state.columns_color = ["rgb(228,26,28)"] + \
                                         ["rgb(79, 43, 255)" for x in out_reader_type_list[1:]]
    if 'list_coordinates' not in st.session_state:
        st.session_state.list_coordinates = []

    # Confidence color scale
    out_list_confid = list(np.arange(0,101,1))
    out_list_grad = mcp.gen_color_normalized(cmap="Greens",data_arr=np.array(out_list_confid))
    out_dict_back_colors = {out_list_confid[i]: out_list_grad[i] \
                            for i in range(len(out_list_confid))}

    list_y = [1 for i in out_list_confid]
    df_confid = pd.DataFrame({'% confidence scale': out_list_confid, 'y': list_y})

    out_fig = px.scatter(df_confid, x='% confidence scale', y='y', \
                         hover_data={'% confidence scale': True, 'y': False},
                         color=out_dict_back_colors.values(), range_y=[0.9,1.1], range_x=[0,100],
                         color_discrete_map="identity",height=50,symbol='y',symbol_sequence=['square'])
    out_fig.update_xaxes(showticklabels=False)
    out_fig.update_yaxes(showticklabels=False, range=[0.1, 1.1], visible=False)
    out_fig.update_traces(marker_size=50)
    out_fig.update_layout(paper_bgcolor="white", margin=dict(b=0,r=0,t=0,l=0), xaxis_side="top", \
                          showlegend=False)

    return out_reader_type_list, out_reader_type_dict, out_list_dict_lang, \
           out_cols_size, out_dict_back_colors, out_fig
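
The confidence color scale above maps each integer percentage from 0 to 100 to one color. The sketch below shows the same idea without mapcolors or matplotlib. It assumes a plain linear interpolation between two illustrative endpoint colors, so it is not the actual "Greens" colormap, and `confidence_to_hex` is a hypothetical helper name that does not appear in the original file.

```python
def confidence_to_hex(confidence, low=(247, 252, 245), high=(0, 68, 27)):
    """Linearly interpolate between two RGB colors for a confidence in [0, 100]."""
    # Clamp so that out-of-range scores still map to a valid color.
    ratio = min(max(confidence, 0), 100) / 100
    channels = [round(lo + (hi - lo) * ratio) for lo, hi in zip(low, high)]
    return "#{:02x}{:02x}{:02x}".format(*channels)

# One color per integer confidence value, in the spirit of out_dict_back_colors.
back_colors = {c: confidence_to_hex(c) for c in range(101)}
```

A lookup such as `back_colors[87]` then gives the background color for a word recognized with 87 % confidence.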

###
@st.experimental_memo(show_spinner=False)
def init_easyocr(in_params):
    """Initialization of easyOCR reader

    Args:
        in_params (list): list with the language

    Returns:
        easyocr reader: the easyocr reader instance
    """
    out_ocr = easyocr.Reader(in_params)
    return out_ocr

###
@st.cache(show_spinner=False)
def init_ppocr(in_params):
    """Initialization of PPOCR reader

    Args:
        in_params (list): language code, followed by a dict of PaddleOCR keyword arguments

    Returns:
        ppocr reader: the ppocr reader instance
    """
    out_ocr = PaddleOCR(lang=in_params[0], **in_params[1])
    return out_ocr

###
#@st.experimental_memo(show_spinner=False)
#def init_mmocr(in_params):
#    """Initialization of MMOCR reader
#
#    Args:
#        in_params (dict): dict with parameters
#
#    Returns:
#        mmocr reader: the mmocr reader instance
#    """
#    out_ocr = MMOCR(recog=None, **in_params[1])
#    return out_ocr

###
def init_readers(in_list_params):
    """Initialize the readers and return them as a list

    Args:
        in_list_params (list): list of the parameters for each reader

    Returns:
        list: list of the reader instances
    """
    # Instantiations of the readers :
    # - EasyOCR
    with st.spinner("EasyOCR reader initialization in progress ..."):
        reader_easyocr = init_easyocr([in_list_params[0][0]])

    # - PPOCR
    # Paddleocr
    with st.spinner("PPOCR reader initialization in progress ..."):
        reader_ppocr = init_ppocr(in_list_params[1])

    # - MMOCR
    #with st.spinner("MMOCR reader initialization in progress ..."):
    #    reader_mmocr = init_mmocr(in_list_params[2])

    out_list_readers = [reader_easyocr, reader_ppocr] #, reader_mmocr]

    return out_list_readers

###
def load_image(in_image_file):
    """Load input file and open it

    Args:
        in_image_file (string or Streamlit UploadedFile): image to consider

    Returns:
        string : locally saved image path ("tmp_" + input file name)
        PIL.Image : input file opened with Pillow
        matrix : input file opened with Opencv
    """

    #if isinstance(in_image_file, str):
    #    out_image_path = "img."+in_image_file.split('.')[-1]
    #else:
    #    out_image_path = "img."+in_image_file.name.split('.')[-1]

    if isinstance(in_image_file, str):
        out_image_path = "tmp_"+in_image_file
    else:
        out_image_path = "tmp_"+in_image_file.name

    # Save a local copy (Image.save returns None, so nothing is kept from the call)
    img = Image.open(in_image_file)
    img.save(out_image_path)

    # Read image
    out_image_orig = Image.open(out_image_path)
    out_image_cv2 = cv2.cvtColor(cv2.imread(out_image_path), cv2.COLOR_BGR2RGB)

    return out_image_path, out_image_orig, out_image_cv2
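
`load_image` builds the local path by prefixing the input name with `tmp_`. If the input were a path containing a directory (for example `app_pages/img_demo_1.jpg`), the prefix would end up on the directory name instead of the file name. The sketch below is one possible variant that keeps only the base name. It is not part of the original file: `temp_image_path` is a hypothetical helper, and it assumes forward-slash paths.

```python
from pathlib import PurePosixPath

def temp_image_path(in_image_file, prefix="tmp_"):
    """Return prefix + base file name for a path string or an object with a .name attribute."""
    # Uploaded-file objects expose the original file name through .name.
    name = in_image_file if isinstance(in_image_file, str) else in_image_file.name
    # Keep only the last path component so "dir/img.jpg" becomes "tmp_img.jpg".
    return prefix + PurePosixPath(name).name
```

With this helper, both `"img_demo_1.jpg"` and `"app_pages/img_demo_1.jpg"` resolve to the same local file name.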

###
@st.experimental_memo(show_spinner=False)
def easyocr_detect(_in_reader, in_image_path, in_params):
    """Detection with EasyOCR

    Args:
        _in_reader (EasyOCR reader) : the previously initialized instance
        in_image_path (string) : locally saved image path
        in_params (list) : list with the parameters for detection

    Returns:
        list : list of the boxes coordinates
        exception on error, string 'OK' otherwise
    """
    try:
        dict_param = in_params[1]
        detection_result = _in_reader.detect(in_image_path,
                                             #width_ths=0.7,
                                             #mag_ratio=1.5
                                             **dict_param
                                             )
        easyocr_coordinates = detection_result[0][0]

        # The format of the coordinate is as follows: [x_min, x_max, y_min, y_max]
        # Format boxes coordinates for draw
        out_easyocr_boxes_coordinates = list(map(easyocr_coord_convert, easyocr_coordinates))
        out_status = 'OK'
    except Exception as e:
        out_easyocr_boxes_coordinates = []
        out_status = e

    return out_easyocr_boxes_coordinates, out_status
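
`easyocr_detect` relies on `easyocr_coord_convert`, which is defined outside this section. As a rough illustration of what such a conversion could look like, the sketch below turns the `[x_min, x_max, y_min, y_max]` format named in the comment into four corner points. `box_to_corners` is a hypothetical name, and the corner order is an assumption, not necessarily what the original helper does.

```python
def box_to_corners(in_box):
    """Convert [x_min, x_max, y_min, y_max] into four [x, y] corner points."""
    x_min, x_max, y_min, y_max = in_box
    # Top-left, top-right, bottom-right, bottom-left.
    return [[x_min, y_min], [x_max, y_min], [x_max, y_max], [x_min, y_max]]

# Two example boxes in the [x_min, x_max, y_min, y_max] format.
boxes = [[10, 110, 20, 50], [5, 40, 60, 90]]
corners = list(map(box_to_corners, boxes))
```

Four-point boxes are the same shape as the `box4` list built in the commented-out MMOCR code, which makes the outputs of the different detectors easier to draw with a single routine.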

###
@st.experimental_memo(show_spinner=False)
def ppocr_detect(_in_reader, in_image_path):
    """Detection with PPOCR

    Args:
        _in_reader (PPOCR reader) : the previously initialized instance
        in_image_path (string) : locally saved image path

    Returns:
        list : list of the boxes coordinates
        exception on error, string 'OK' otherwise
    """
    # PPOCR detection method
    try:
        out_ppocr_boxes_coordinates = _in_reader.ocr(in_image_path, rec=False)
        out_status = 'OK'
    except Exception as e:
        out_ppocr_boxes_coordinates = []
        out_status = e

    return out_ppocr_boxes_coordinates, out_status

###
#@st.experimental_memo(show_spinner=False)
#def mmocr_detect(_in_reader, in_image_path):
#    """Detection with MMOCR
#
#    Args:
#        _in_reader (MMOCR reader) : the previously initialized instance
#        in_image_path (string) : locally saved image path
#
#    Returns:
#        list : list of the boxes coordinates
#        exception on error, string 'OK' otherwise
#    """
#    # MMOCR detection method
#    out_mmocr_boxes_coordinates = []
#    try:
#        det_result = _in_reader.readtext(in_image_path, details=True)
#        bboxes_list = [res['boundary_result'] for res in det_result]
#        for bboxes in bboxes_list:
#            for bbox in bboxes:
#                if len(bbox) > 9:
#                    min_x = min(bbox[0:-1:2])
#                    min_y = min(bbox[1:-1:2])
#                    max_x = max(bbox[0:-1:2])
#                    max_y = max(bbox[1:-1:2])
#                    #box = [min_x, min_y, max_x, min_y, max_x, max_y, min_x, max_y]
#                else:
#                    min_x = min(bbox[0:-1:2])
#                    min_y = min(bbox[1::2])
#                    max_x = max(bbox[0:-1:2])
#                    max_y = max(bbox[1::2])
#                box4 = [ [min_x, min_y], [max_x, min_y], [max_x, max_y], [min_x, max_y] ]
#                out_mmocr_boxes_coordinates.append(box4)
#        out_status = 'OK'
#    except Exception as e:
#        out_status = e
#
#    return out_mmocr_boxes_coordinates, out_status
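
The commented-out MMOCR branch reduces each detected polygon to an axis-aligned rectangle. The sketch below shows that reduction on its own. It assumes, based on the `[0:-1:2]` slicing in the original, that each polygon is a flat list `[x0, y0, x1, y1, ..., score]`. `polygon_to_box4` is an illustrative name and not part of the original file.

```python
def polygon_to_box4(in_polygon):
    """Return the four corners of the axis-aligned box enclosing a flat polygon."""
    # Drop the trailing confidence score, then split x and y coordinates.
    coords = in_polygon[:-1]
    xs, ys = coords[0::2], coords[1::2]
    min_x, max_x = min(xs), max(xs)
    min_y, max_y = min(ys), max(ys)
    return [[min_x, min_y], [max_x, min_y], [max_x, max_y], [min_x, max_y]]

# A slightly skewed quadrilateral followed by its confidence score.
box4 = polygon_to_box4([12, 8, 40, 10, 38, 30, 10, 28, 0.97])
```

Dropping the score once, before slicing, means a single code path handles both quadrilaterals and longer polygons.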

###
def cropped_1box(in_box, in_img):
    """Construction of a cropped image corresponding to an area of the initial image

    Args:
        in_box (list) : box with coordinates
        in_img (matrix) : image