Commit fd7a708 · Parent(s): 9eda409

Init

- README.md +3 -3
- app.py +55 -0
- images/244-186-801-1681-1.jpg +0 -0
- images/560-085-462-5494-1.jpg +0 -0
- images/761-650-065-7595-1.jpg +0 -0
- images/761-670-010-2017-1.jpg +0 -0
- images/800-434-901-2658-1.jpg +0 -0
- requirements.txt +4 -0
- weights/best.pt +3 -0

README.md · CHANGED

```diff
@@ -1,14 +1,14 @@
 ---
 title: Crop Detection
-emoji:
-colorFrom:
+emoji: 🍋🟩
+colorFrom: purple
 colorTo: red
 sdk: gradio
 sdk_version: 4.44.0
 app_file: app.py
 pinned: false
 license: agpl-3.0
-short_description:
+short_description: 'Detect product on pictures and crop it. '
 ---
 
 Check out the configuration reference at https://huggingface.co/docs/hub/spaces-config-reference
```
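The Space's settings live in the YAML front matter between the two `---` lines of this README. To illustrate that layout, here is a minimal sketch that pulls the flat `key: value` pairs out of the updated README text. `parse_front_matter` is a hypothetical helper, not part of the Space or of any Hugging Face library, and it is deliberately not a YAML parser: it ignores nesting, lists, and multi-line values, so a real tool should use a proper YAML library instead.

```python
def parse_front_matter(readme_text: str) -> dict:
    """Parse flat `key: value` pairs between the leading `---` fences.

    Hypothetical sketch: no nesting, lists, or multi-line values (not a YAML parser).
    """
    lines = readme_text.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}  # no front matter at the top of the file
    config = {}
    for line in lines[1:]:
        if line.strip() == "---":
            break  # end of the front-matter block
        key, sep, value = line.partition(":")
        if not sep:
            continue  # skip lines that are not key: value pairs
        value = value.strip()
        # Drop one pair of matching surrounding quotes, keeping inner whitespace
        if len(value) >= 2 and value[0] == value[-1] and value[0] in "'\"":
            value = value[1:-1]
        config[key.strip()] = value
    return config


README = """---
title: Crop Detection
colorFrom: purple
colorTo: red
sdk: gradio
sdk_version: 4.44.0
app_file: app.py
pinned: false
license: agpl-3.0
short_description: 'Detect product on pictures and crop it. '
---

Check out the configuration reference at https://huggingface.co/docs/hub/spaces-config-reference
"""

config = parse_front_matter(README)
print(config["sdk"], config["sdk_version"], config["app_file"])  # gradio 4.44.0 app.py
```

Note that every value comes back as a string (`pinned` is `"false"`, not `False`); a YAML parser would convert such scalars for you.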

app.py · ADDED

```python
from pathlib import Path

import gradio as gr
from ultralytics import YOLO
from PIL import Image


# Load the fine-tuned YOLOv8n model
MODEL = YOLO("weights/best.pt")
IMAGES_PATH = Path("images/")

INF_PARAMETERS = {
    "imgsz": 640,  # image size
    "conf": 0.8,  # confidence threshold
    "max_det": 1,  # maximum number of detections
}

# Example images shown under the interface (sorted for a stable order)
EXAMPLES = [str(path) for path in sorted(IMAGES_PATH.iterdir())]


# Function to detect the product and crop the image
def detect_and_crop(image: Image.Image) -> Image.Image:
    # Perform object detection
    results = MODEL.predict(image, **INF_PARAMETERS)
    result = results[0]
    boxes = result.boxes.xyxy.cpu().numpy()
    if len(boxes) == 0:
        # No detection above the confidence threshold: return the input unchanged
        # instead of failing on an unassigned variable
        return image
    # With max_det=1 there is at most one box, given as (x1, y1, x2, y2) in pixels
    x1, y1, x2, y2 = boxes[0].tolist()
    return image.crop((x1, y1, x2, y2))


# Gradio UI
title = "Crop-Detection"
description = """## 🍋🟩 Automatically crop product pictures! 🍋🟩
When contributors use the mobile app, they are asked to take pictures of the product, then to crop it.
To assist users during the process, we created a crop-detection model designed to detect the product edges.

We fine-tuned YOLOv8n on images extracted from the Open Food Facts database.
Check the [model repo page](https://huggingface.co/openfoodfacts/crop-detection) for more information.
"""

# Gradio Interface
demo = gr.Interface(
    fn=detect_and_crop,
    inputs=gr.Image(type="pil", width=300),
    outputs=gr.Image(type="pil", width=300),
    title=title,
    description=description,
    allow_flagging="never",
    examples=EXAMPLES,
)


# Launch the Gradio app
if __name__ == "__main__":
    demo.launch()
```
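`detect_and_crop` depends on two inference parameters, `conf` (0.8) and `max_det` (1), and on Ultralytics' `xyxy` box format, which lists `(x1, y1, x2, y2)` in pixels, the same order as the `(left, upper, right, lower)` box that Pillow's `Image.crop` expects. The sketch below mimics that post-processing in plain Python, without the model, to show why an image can come back with zero boxes. It is a simplified model: Ultralytics applies `conf` and `max_det` inside its non-maximum-suppression step, which is skipped here. `select_boxes` and `to_crop_box` are hypothetical helpers, and the clamp to the image bounds is a defensive assumption (Ultralytics typically clips predicted boxes already; the Space itself does not clamp).

```python
from typing import List, Sequence, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2) in pixels


def select_boxes(boxes: Sequence[Box], scores: Sequence[float],
                 conf: float = 0.8, max_det: int = 1) -> List[Box]:
    """Keep boxes scoring above `conf`, highest score first, at most `max_det` of them.

    Simplified: no non-maximum suppression is performed.
    """
    kept = [(score, box) for box, score in zip(boxes, scores) if score > conf]
    kept.sort(key=lambda pair: pair[0], reverse=True)
    return [box for _, box in kept[:max_det]]


def to_crop_box(box: Box, width: int, height: int) -> Tuple[int, int, int, int]:
    """Round an xyxy box to integers and clamp it to the image bounds.

    The result is in Pillow's (left, upper, right, lower) order.
    """
    x1, y1, x2, y2 = box
    left = min(max(round(x1), 0), width)
    upper = min(max(round(y1), 0), height)
    right = min(max(round(x2), 0), width)
    lower = min(max(round(y2), 0), height)
    return left, upper, right, lower


detections = [(10.4, 20.6, 300.2, 410.9), (-5.0, 0.0, 700.0, 500.0)]
scores = [0.75, 0.93]
selected = select_boxes(detections, scores, conf=0.8, max_det=1)
print(selected)                            # [(-5.0, 0.0, 700.0, 500.0)]
print(to_crop_box(selected[0], 640, 480))  # (0, 0, 640, 480)
```

With a threshold as high as 0.8, a photo where the detector is unsure yields an empty list, which is exactly the case a cropping function has to handle explicitly.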

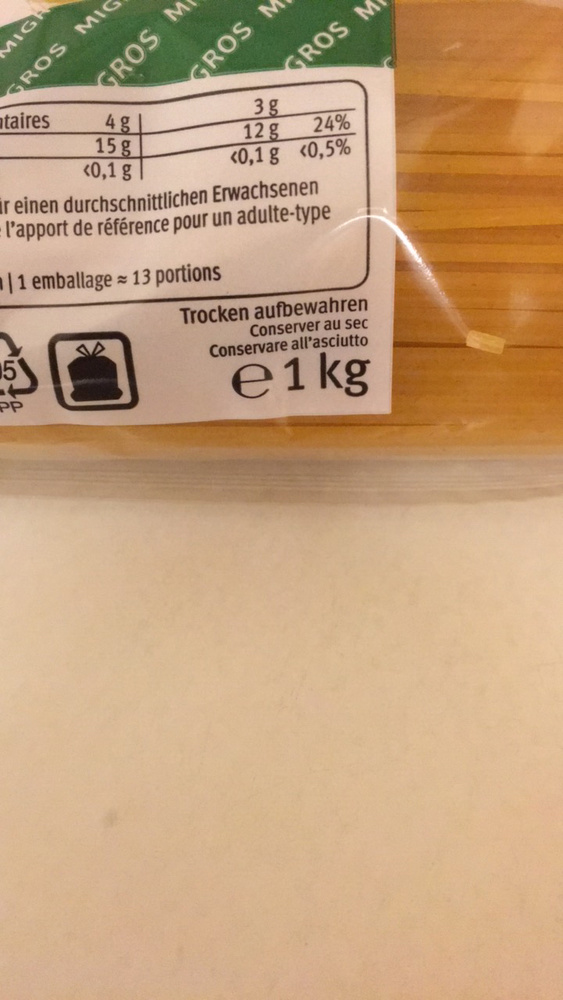

- images/244-186-801-1681-1.jpg · ADDED
- images/560-085-462-5494-1.jpg · ADDED
- images/761-650-065-7595-1.jpg · ADDED
- images/761-670-010-2017-1.jpg · ADDED
- images/800-434-901-2658-1.jpg · ADDED

requirements.txt · ADDED

```text
ultralytics==8.2.94
gradio
spaces
numpy
```

weights/best.pt · ADDED (Git LFS pointer)

```text
version https://git-lfs.github.com/spec/v1
oid sha256:e1b6eeff46da1f6c57d6835b695d074b1cb4d1194cee8f395007a48f99fd077c
size 6267491
```
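The committed `weights/best.pt` is a Git LFS pointer rather than the model itself: a small text file whose `oid` is the SHA-256 of the real file's contents and whose `size` is its length in bytes (6,267,491 bytes here, about 6.3 MB). A minimal sketch of reading such a pointer and checking a downloaded copy against it, using only the standard library; `parse_lfs_pointer` and `matches_pointer` are hypothetical helpers, not part of Git LFS or any Hugging Face library.

```python
import hashlib
from pathlib import Path
from typing import Dict


def parse_lfs_pointer(text: str) -> Dict[str, str]:
    """Split a Git LFS pointer file into its `key value` lines."""
    fields: Dict[str, str] = {}
    for line in text.splitlines():
        if line.strip():
            key, _, value = line.partition(" ")
            fields[key] = value
    return fields


def matches_pointer(blob: bytes, pointer: Dict[str, str]) -> bool:
    """Check that downloaded bytes have the size and SHA-256 recorded in the pointer."""
    algo, _, expected_hash = pointer["oid"].partition(":")
    if algo != "sha256":
        raise ValueError(f"unsupported oid algorithm: {algo}")
    return (len(blob) == int(pointer["size"])
            and hashlib.sha256(blob).hexdigest() == expected_hash)


POINTER = """version https://git-lfs.github.com/spec/v1
oid sha256:e1b6eeff46da1f6c57d6835b695d074b1cb4d1194cee8f395007a48f99fd077c
size 6267491
"""

pointer = parse_lfs_pointer(POINTER)
print(pointer["size"])  # 6267491

# Assumed local path of the resolved weights; prints False if only the pointer is present
weights = Path("weights/best.pt")
if weights.exists():
    print(matches_pointer(weights.read_bytes(), pointer))
```

The size check runs first because it is cheap; hashing a multi-megabyte file is only worth doing once the lengths agree.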