+ChatGPT 学术优化 {get_current_version()}

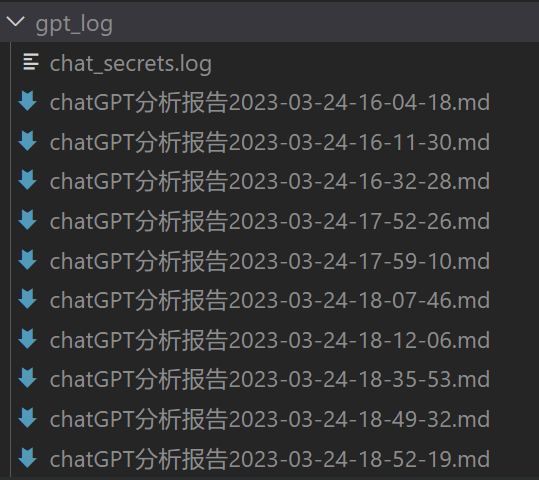

"
+description = """Open-source code and updates: [repository🚀](https://github.com/binary-husky/chatgpt_academic). Thanks to the enthusiastic [developers❤️](https://github.com/binary-husky/chatgpt_academic/graphs/contributors)"""
+
+# Query log; Python 3.9+ is recommended (the newer the better)
+import logging
+os.makedirs("gpt_log", exist_ok=True)
+try:logging.basicConfig(filename="gpt_log/chat_secrets.log", level=logging.INFO, encoding="utf-8")
+except:logging.basicConfig(filename="gpt_log/chat_secrets.log", level=logging.INFO)
+print("All query records are saved automatically to the local file ./gpt_log/chat_secrets.log. Please take care to protect your privacy!")
+
+# Basic function modules
+from core_functional import get_core_functions
+functional = get_core_functions()
+
+# Advanced function plugins
+from crazy_functional import get_crazy_functions
+crazy_fns = get_crazy_functions()
+
+# Handle conversion of markdown text formatting
+gr.Chatbot.postprocess = format_io
+
+# Adjust the look and color theme
+from theme import adjust_theme, advanced_css
+set_theme = adjust_theme()
+
+# Proxy and automatic updates
+from check_proxy import check_proxy, auto_update
+proxy_info = check_proxy(proxies)
+
+gr_L1 = lambda: gr.Row().style()
+gr_L2 = lambda scale: gr.Column(scale=scale)
+if LAYOUT == "TOP-DOWN":
+    gr_L1 = lambda: DummyWith()
+    gr_L2 = lambda scale: gr.Row()
+    CHATBOT_HEIGHT /= 2
+
+cancel_handles = []
+with gr.Blocks(title="ChatGPT 学术优化", theme=set_theme, analytics_enabled=False, css=advanced_css) as demo:
+    gr.HTML(title_html)
+    gr.HTML('''Do not enter your API_KEY or ask questions before using “Duplicate Space”; otherwise your API_KEY will very likely be captured by the space owner!

', '.....').replace('$', '.')+"`... ]"
+            observe_win.append(print_something_really_funny)
+        stat_str = ''.join([f'`{mutable[thread_index][2]}`: {obs}\n\n'
+                            if not done else f'`{mutable[thread_index][2]}`\n\n'
+                            for thread_index, done, obs in zip(range(len(worker_done)), worker_done, observe_win)])
+        chatbot[-1] = [chatbot[-1][0], f'Multi-threaded operation has started. Progress: \n\n{stat_str}' + ''.join(['.']*(cnt % 10+1))]
+        msg = "Normal"
+        yield from update_ui(chatbot=chatbot, history=[]) # refresh the UI
+    # asynchronous tasks finished
+    gpt_response_collection = []
+    for inputs_show_user, f in zip(inputs_show_user_array, futures):
+        gpt_res = f.result()
+        gpt_response_collection.extend([inputs_show_user, gpt_res])
+
+    if show_user_at_complete:
+        for inputs_show_user, f in zip(inputs_show_user_array, futures):
+            gpt_res = f.result()
+            chatbot.append([inputs_show_user, gpt_res])
+            yield from update_ui(chatbot=chatbot, history=[]) # refresh the UI
+            time.sleep(1)
+    return gpt_response_collection
+
+
+def WithRetry(f):
+    """
+    Decorator function for automatic retries.
+    """
+    def decorated(retry, res_when_fail, *args, **kwargs):
+        assert retry >= 0
+        while True:
+            try:
+                res = yield from f(*args, **kwargs)
+                return res
+            except Exception:  # do not swallow GeneratorExit / KeyboardInterrupt
+                retry -= 1
+                if retry<0:
+                    print("Maximum number of retries reached")
+                    break
+                else:
+                    print("Retrying...")
+                    continue
+        return res_when_fail
+    return decorated
+
+
+def breakdown_txt_to_satisfy_token_limit(txt, get_token_fn, limit):
+    def cut(txt_tocut, must_break_at_empty_line):  # recursive
+        if get_token_fn(txt_tocut) <= limit:
+            return [txt_tocut]
+        else:
+            lines = txt_tocut.split('\n')
+            estimated_line_cut = limit / get_token_fn(txt_tocut) * len(lines)
+            estimated_line_cut = int(estimated_line_cut)
+            cnt = 0  # keeps cnt defined when the range below is empty
+            for cnt in reversed(range(estimated_line_cut)):
+                if must_break_at_empty_line:
+                    if lines[cnt] != "":
+                        continue
+                print(cnt)
+                prev = "\n".join(lines[:cnt])
+                post = "\n".join(lines[cnt:])
+                if get_token_fn(prev) < limit:
+                    break
+            if cnt == 0:
+                print('what the fuck ?')
+                raise RuntimeError("A single line of text is extremely long!")
+            # print(len(post))
+            # build the list by recursive chaining
+            result = [prev]
+            result.extend(cut(post, must_break_at_empty_line))
+            return result
+    try:
+        return cut(txt, must_break_at_empty_line=True)
+    except RuntimeError:
+        return cut(txt, must_break_at_empty_line=False)
+
+
+def breakdown_txt_to_satisfy_token_limit_for_pdf(txt, get_token_fn, limit):
+    def cut(txt_tocut, must_break_at_empty_line):  # recursive
+        if get_token_fn(txt_tocut) <= limit:
+            return [txt_tocut]
+        else:
+            lines = txt_tocut.split('\n')
+            estimated_line_cut = limit / get_token_fn(txt_tocut) * len(lines)
+            estimated_line_cut = int(estimated_line_cut)
+            cnt = 0
+            for cnt in reversed(range(estimated_line_cut)):
+                if must_break_at_empty_line:
+                    if lines[cnt] != "":
+                        continue
+                print(cnt)
+                prev = "\n".join(lines[:cnt])
+                post = "\n".join(lines[cnt:])
+                if get_token_fn(prev) < limit:
+                    break
+            if cnt == 0:
+                # print('what the fuck ? A single line of text is extremely long!')
+                raise RuntimeError("A single line of text is extremely long!")
+            # print(len(post))
+            # build the list by recursive chaining
+            result = [prev]
+            result.extend(cut(post, must_break_at_empty_line))
+            return result
+    try:
+        return cut(txt, must_break_at_empty_line=True)
+    except RuntimeError:
+        try:
+            return cut(txt, must_break_at_empty_line=False)
+        except RuntimeError:
+            # The Chinese full stop here is intentional; it serves as a marker
+            res = cut(txt.replace('.', '。\n'), must_break_at_empty_line=False)
+            return [r.replace('。\n', '.') for r in res]
diff --git a/crazy_functions/test_project/cpp/cppipc/buffer.cpp b/crazy_functions/test_project/cpp/cppipc/buffer.cpp
new file mode 100644
index 0000000000000000000000000000000000000000..084b8153e9401f4e9dc5a6a67cfb5f48b0183ccb
--- /dev/null
+++ b/crazy_functions/test_project/cpp/cppipc/buffer.cpp
@@ -0,0 +1,87 @@
+#include "libipc/buffer.h"
+#include "libipc/utility/pimpl.h"
+
+#include
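
As a reading aid for `breakdown_txt_to_satisfy_token_limit` in the Python hunk above, the sketch below shows the same idea (keep every chunk within a token budget and prefer to break at empty lines) in a simplified greedy form. It is not the recursive routine from the diff. `count_words` and `split_at_blank_lines` are hypothetical names introduced here, and counting whitespace-separated words is only a stand-in for a real tokenizer.

```python
def count_words(text):
    # Hypothetical stand-in for a tokenizer: one "token" per whitespace-separated word.
    return len(text.split())


def split_at_blank_lines(text, get_token_fn, limit):
    """Greedily pack blank-line-separated paragraphs into chunks of at most `limit` tokens."""
    chunks = []
    current = ""
    for para in text.split("\n\n"):
        # Try to extend the current chunk with the next paragraph.
        candidate = para if not current else current + "\n\n" + para
        if get_token_fn(candidate) <= limit:
            current = candidate
            continue
        # The paragraph does not fit: close the current chunk first.
        if current:
            chunks.append(current)
        if get_token_fn(para) > limit:
            # Mirrors the RuntimeError raised in the diff when no break point exists.
            raise RuntimeError("a single paragraph exceeds the token limit")
        current = para
    if current:
        chunks.append(current)
    return chunks


sample = "alpha beta gamma\n\ndelta epsilon\n\nzeta eta theta iota"
chunks = split_at_blank_lines(sample, count_words, limit=5)
print(chunks)
```

Unlike the function in the diff, this variant never falls back to breaking inside a paragraph. A caller that needs that behavior would have to catch the `RuntimeError` and retry with a finer separator, as the `_for_pdf` variant does with sentence boundaries.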

(params)...);
+        });
+    }
+
+    template (params)...);
+        });
+    }
+
+    template (params)...);
+    }
+
+    template (params)...);
+    }
+
+    bool pop(T& item) {
+        return base_t::pop(item, [](bool) {});
+    }
+
+    template