bert-base-uncased-Winowhy

This model is a fine-tuned version of bert-base-uncased.

It achieves the following results on the evaluation set after the final (fifth) epoch:

- Loss: 0.8005

- Accuracy: 0.7118
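
The linked notebook's title suggests the model uses a multiple-choice head such as `BertForMultipleChoice`, which emits one logit per answer choice; this is an assumption, not something the card confirms. Under that assumption, the loss is the cross-entropy over the choice logits and accuracy is the share of examples whose highest-scoring choice matches the label. The reported loss is presumably an average of this quantity over the evaluation set. The sketch below illustrates both metrics in plain Python; it is not the notebook's evaluation code, and the logits and labels in the example call are made up.

```python
import math
from typing import List, Sequence, Tuple


def softmax_cross_entropy(logits: Sequence[float], label: int) -> float:
    """Cross-entropy of one example, given one logit per answer choice."""
    # Subtract the max logit before exponentiating for numerical stability.
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(x - m) for x in logits))
    return log_sum_exp - logits[label]


def evaluate(all_logits: List[Sequence[float]], labels: List[int]) -> Tuple[float, float]:
    """Return (mean cross-entropy loss, accuracy) over a set of examples."""
    losses = [softmax_cross_entropy(lg, y) for lg, y in zip(all_logits, labels)]
    # The predicted choice is the index of the largest logit (first one on ties).
    preds = [max(range(len(lg)), key=lambda i: lg[i]) for lg in all_logits]
    correct = sum(p == y for p, y in zip(preds, labels))
    return sum(losses) / len(losses), correct / len(labels)


# Hypothetical logits for three two-choice examples.
loss, acc = evaluate([[2.0, 0.0], [0.5, 1.5], [1.0, 1.0]], [0, 0, 1])
print(f"loss={loss:.4f} accuracy={acc:.4f}")
```

Note that a lower loss and a higher accuracy need not move together: accuracy only checks the argmax, while the loss also penalizes how confident the wrong predictions are.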

Model description

For more information on how it was created, check out the following link: https://github.com/DunnBC22/NLP_Projects/blob/main/Multiple%20Choice/Winowhy/Winowhy%20-%20Multiple%20Choice%20Using%20BERT.ipynb

Intended uses & limitations

This model is intended to demonstrate my ability to solve a complex problem using technology.

Training and evaluation data

Dataset Source: https://huggingface.co/datasets/tasksource/bigbench/viewer/winowhy/train

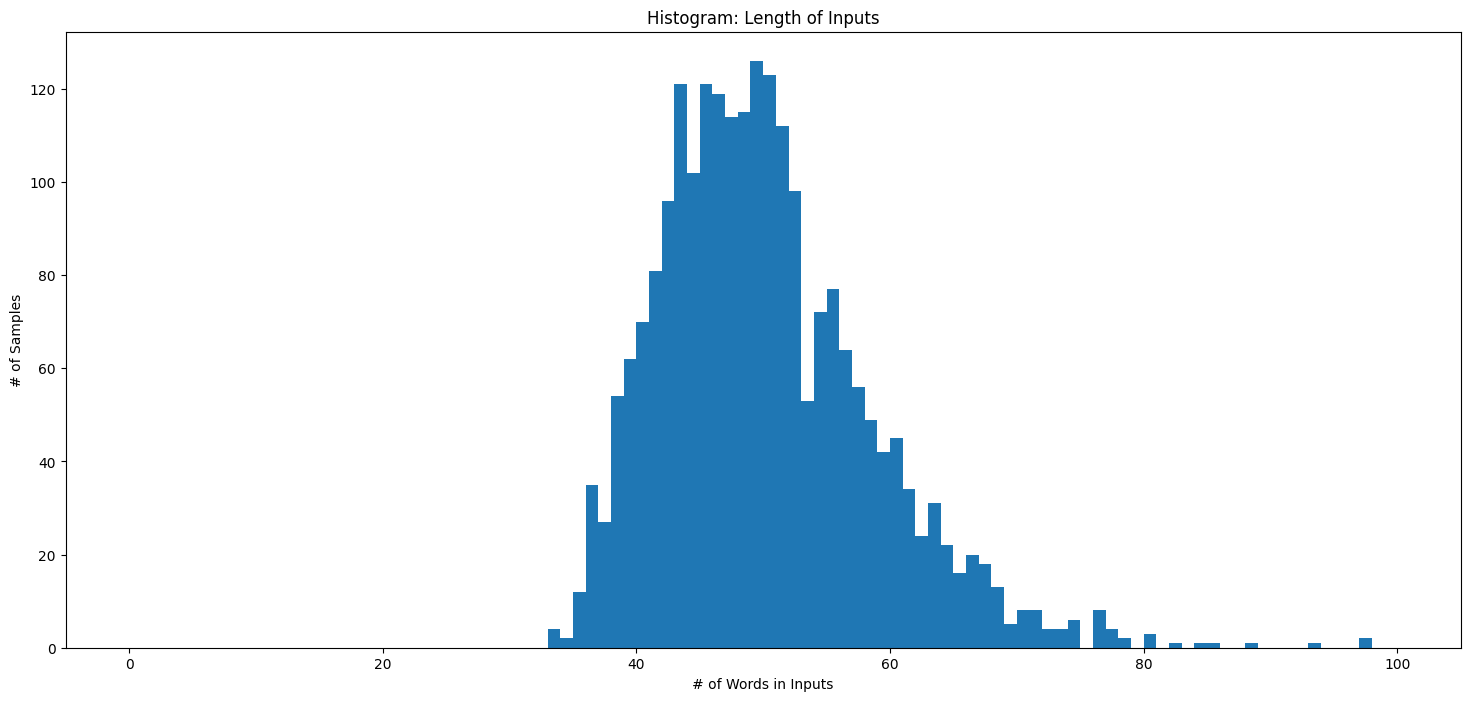

Histogram of Input Lengths
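
The histogram above comes from the linked notebook, and the card does not say which tokenizer or bin width produced it. As a minimal sketch, assuming whitespace tokenization as a stand-in for the BERT WordPiece tokenizer and a hypothetical default `bin_width` of 16, input lengths can be bucketed and printed as a text histogram like this:

```python
from collections import Counter
from typing import Dict, Iterable


def length_histogram(texts: Iterable[str], bin_width: int = 16) -> Dict[int, int]:
    """Count inputs per length bin, keyed by each bin's lower bound."""
    counts: Counter = Counter()
    for text in texts:
        # Assumption: whitespace tokens approximate the real tokenizer's counts.
        n = len(text.split())
        counts[(n // bin_width) * bin_width] += 1
    return dict(sorted(counts.items()))


def render(hist: Dict[int, int], bin_width: int = 16) -> None:
    """Print one row of '#' characters per bin."""
    for start, count in hist.items():
        print(f"{start:>4}-{start + bin_width - 1:<4} {'#' * count}")


render(length_histogram(["a short input", "a " * 20, "x"]))
```

Replacing `text.split()` with the model's tokenizer would give WordPiece counts, which are what matter when choosing a truncation length.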

Training procedure

Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 5
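
The hyperparameters above combine a linear learning-rate decay with the Adam update. A minimal sketch in plain Python, assuming no warmup (none is listed) and a total of 575 optimizer steps (5 epochs of 115 steps each, taken from the training-results table), shows how the learning rate falls from 5e-05 to 0 and how a single scalar parameter is updated. The Adam step follows the standard bias-corrected formulation; library implementations may place epsilon slightly differently, and the exact optimizer class used in the notebook is not stated here. The toy loss `w**2` is hypothetical.

```python
import math
from dataclasses import dataclass

LEARNING_RATE = 5e-05
TOTAL_STEPS = 575  # 5 epochs x 115 steps per epoch, from the results table
WARMUP_STEPS = 0   # assumption: no warmup is listed in the hyperparameters


def linear_lr(step: int, base_lr: float = LEARNING_RATE,
              total: int = TOTAL_STEPS, warmup: int = WARMUP_STEPS) -> float:
    """Learning rate at a given optimizer step under linear warmup then decay."""
    if step < warmup:
        return base_lr * step / max(1, warmup)
    return base_lr * max(0.0, (total - step) / max(1, total - warmup))


@dataclass
class AdamState:
    m: float = 0.0  # first-moment (mean) estimate
    v: float = 0.0  # second-moment (uncentered variance) estimate
    t: int = 0      # number of updates taken so far


def adam_step(param: float, grad: float, state: AdamState, lr: float,
              beta1: float = 0.9, beta2: float = 0.999, eps: float = 1e-08) -> float:
    """Apply one bias-corrected Adam update to a scalar parameter."""
    state.t += 1
    state.m = beta1 * state.m + (1 - beta1) * grad
    state.v = beta2 * state.v + (1 - beta2) * grad * grad
    m_hat = state.m / (1 - beta1 ** state.t)
    v_hat = state.v / (1 - beta2 ** state.t)
    return param - lr * m_hat / (math.sqrt(v_hat) + eps)


# Usage: minimize the hypothetical toy loss w**2 over the full schedule.
state = AdamState()
w = 1.0
for step in range(TOTAL_STEPS):
    grad = 2 * w  # derivative of w**2
    w = adam_step(w, grad, state, linear_lr(step))
print(f"final w = {w:.4f}, last lr = {linear_lr(TOTAL_STEPS - 1):.2e}")
```

Because Adam normalizes by the running gradient magnitude, each step moves a parameter by roughly the current learning rate when gradients keep a consistent sign, so the decaying schedule directly shrinks the step size toward the end of training.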

Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |
|---|---|---|---|---|
| 0.7028 | 1.0 | 115 | 0.6916 | 0.5371 |
| 0.6119 | 2.0 | 230 | 0.5572 | 0.7031 |
| 0.4959 | 3.0 | 345 | 0.5328 | 0.7118 |
| 0.4537 | 4.0 | 460 | 0.5829 | 0.7118 |
| 0.2275 | 5.0 | 575 | 0.8005 | 0.7118 |
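
In the table, accuracy plateaus at 0.7118 from epoch 3 onward while validation loss rises from 0.5328 to 0.8005 and training loss keeps falling, and the headline results match the final epoch. The sketch below, using only the values from the table, picks the epoch with the lowest validation loss and flags epochs where training loss fell but validation loss rose. `TrainingArguments` offers `load_best_model_at_end` and `metric_for_best_model` for similar checkpoint selection; whether the original run used them is not stated, and the helper names here are hypothetical.

```python
from typing import List, NamedTuple


class EpochResult(NamedTuple):
    epoch: int
    step: int
    train_loss: float
    val_loss: float
    accuracy: float


# Values copied from the training-results table above.
RESULTS: List[EpochResult] = [
    EpochResult(1, 115, 0.7028, 0.6916, 0.5371),
    EpochResult(2, 230, 0.6119, 0.5572, 0.7031),
    EpochResult(3, 345, 0.4959, 0.5328, 0.7118),
    EpochResult(4, 460, 0.4537, 0.5829, 0.7118),
    EpochResult(5, 575, 0.2275, 0.8005, 0.7118),
]


def best_by_val_loss(results: List[EpochResult]) -> EpochResult:
    """Return the epoch with the lowest validation loss."""
    return min(results, key=lambda r: r.val_loss)


def overfitting_epochs(results: List[EpochResult]) -> List[int]:
    """Epochs where training loss fell but validation loss rose vs. the previous epoch."""
    return [cur.epoch for prev, cur in zip(results, results[1:])
            if cur.train_loss < prev.train_loss and cur.val_loss > prev.val_loss]


best = best_by_val_loss(RESULTS)
print(f"best epoch: {best.epoch} (val loss {best.val_loss}, accuracy {best.accuracy})")
print(f"train loss down, val loss up at epochs: {overfitting_epochs(RESULTS)}")
```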

Framework versions

- Transformers 4.26.1

- Pytorch 2.0.1

- Datasets 2.13.1

- Tokenizers 0.13.3
