Model description

This model is part of a project on debiasing generative Stable Diffusion models.

LoRA text2image fine-tuning - NYUAD-ComNets/Middle_Eastern_Male_Profession_Model

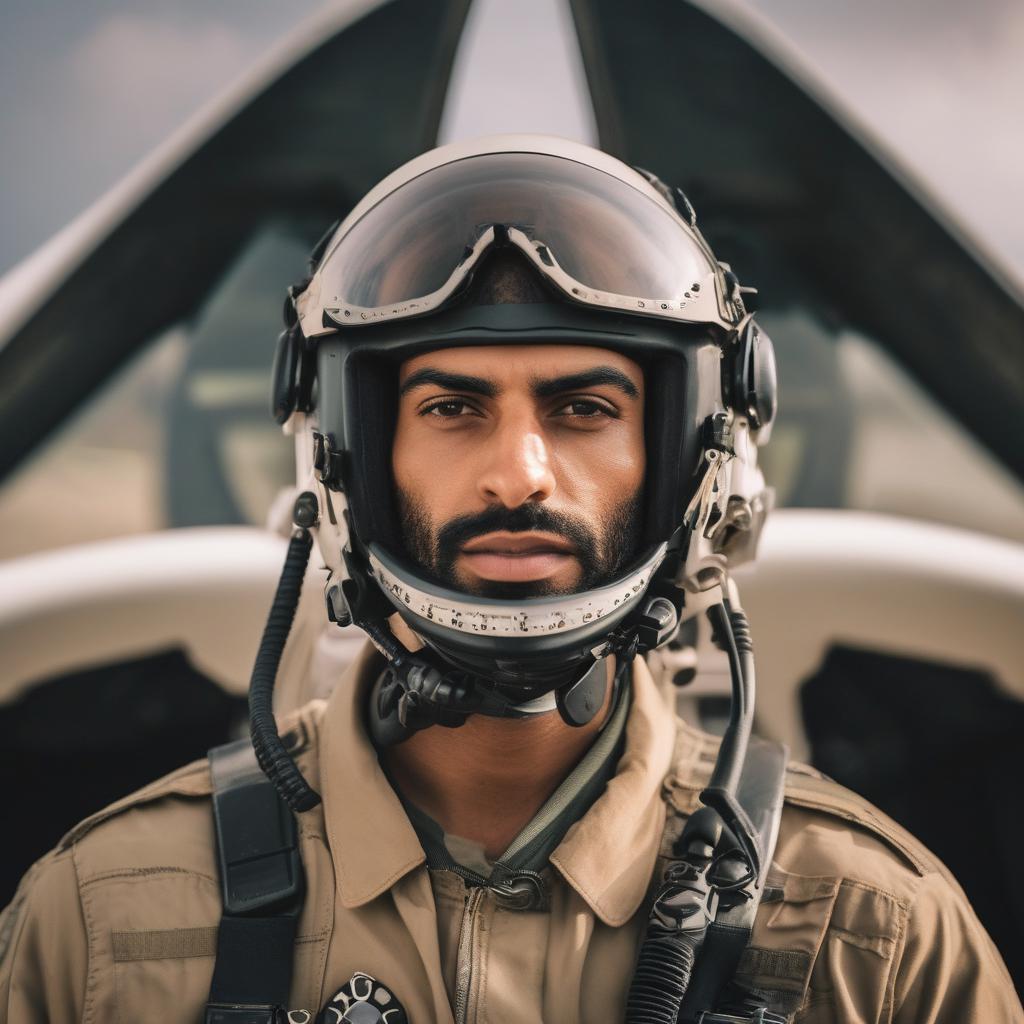

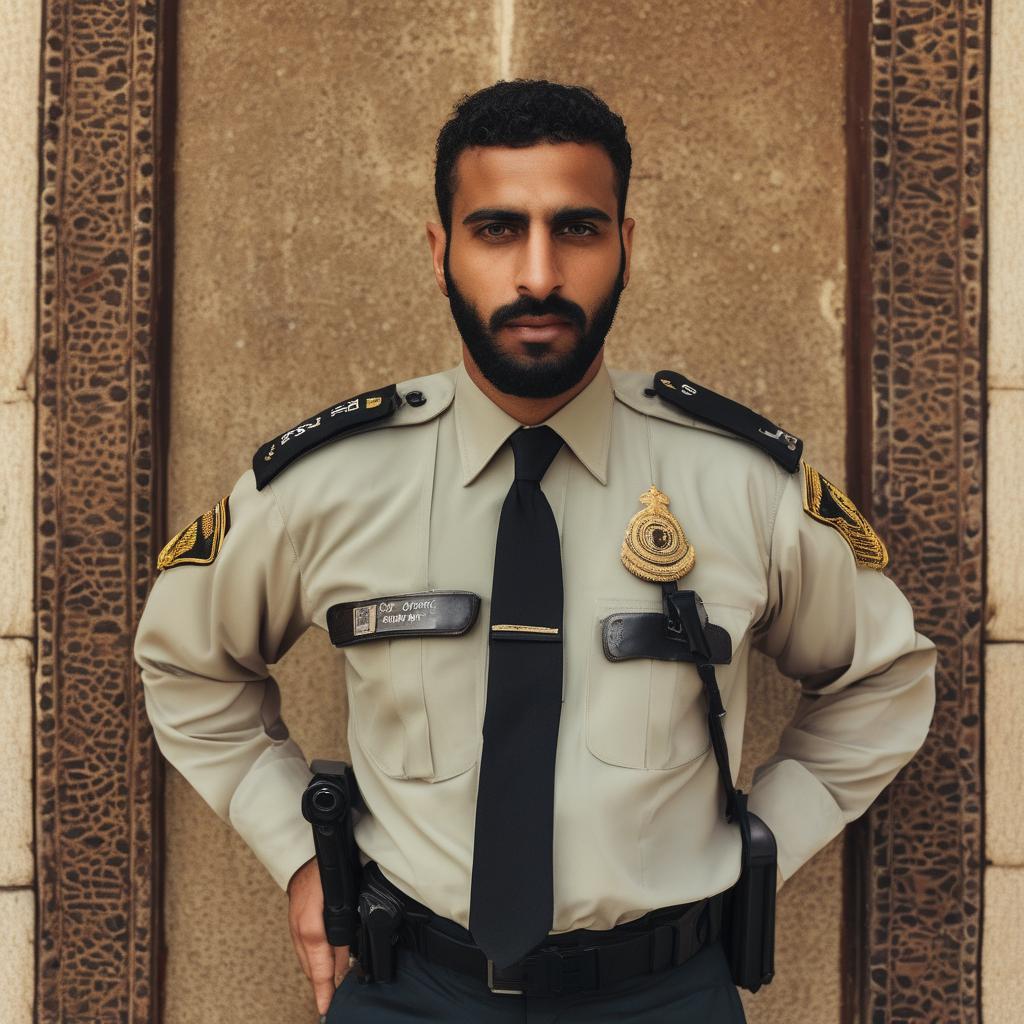

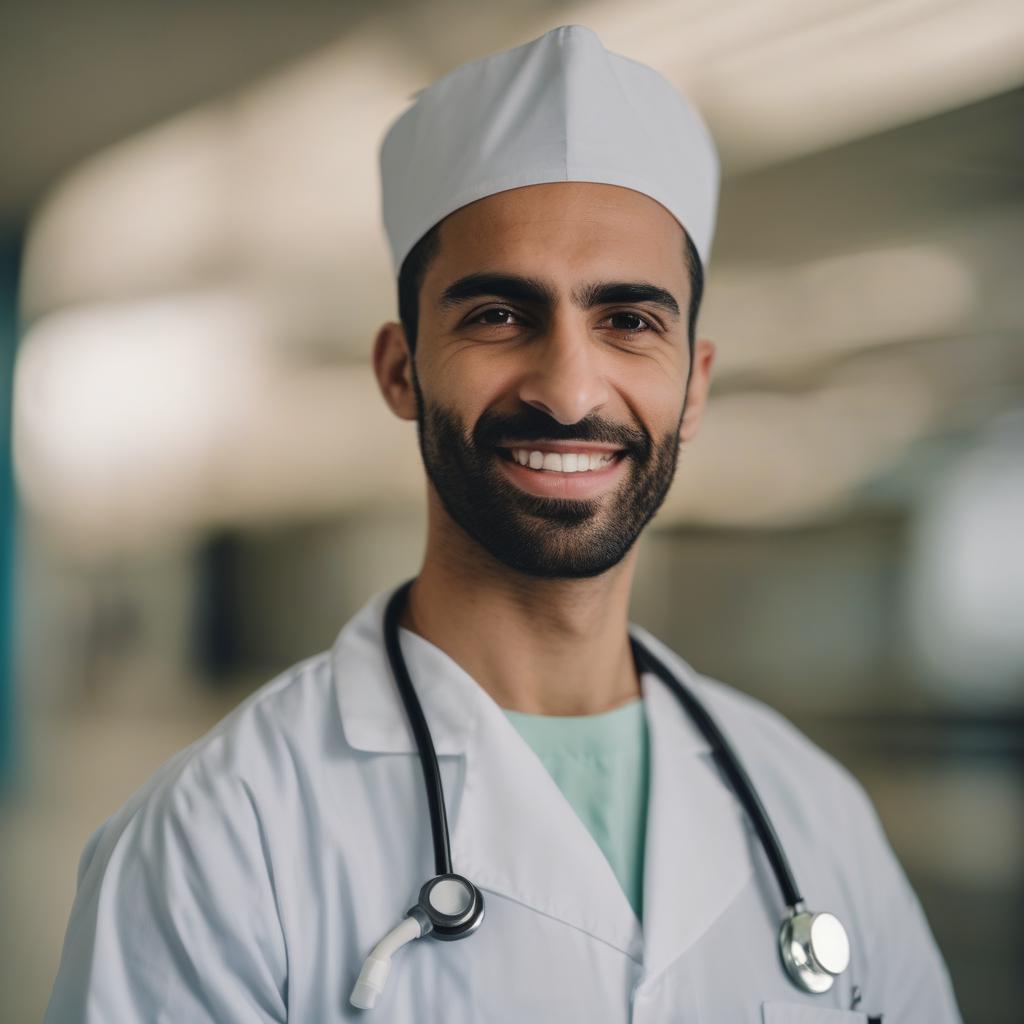

These are LoRA adaptation weights for stabilityai/stable-diffusion-xl-base-1.0. The weights were fine-tuned on the NYUAD-ComNets/Middle_Eastern_Male_Profession dataset. Example images are shown above.

prompt: a photo of a {profession}, looking at the camera, closeup headshot facing forward, ultra quality, sharp focus
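The `{profession}` placeholder is filled with one profession per generation. A minimal sketch of that substitution, assuming plain Python string formatting; the helper name `build_prompt` is hypothetical and not part of the model's code:

```python
# Prompt template quoted from this model card.
PROMPT_TEMPLATE = (
    "a photo of a {profession}, looking at the camera, "
    "closeup headshot facing forward, ultra quality, sharp focus"
)


def build_prompt(profession: str) -> str:
    """Hypothetical helper: fill the template with a single profession."""
    return PROMPT_TEMPLATE.format(profession=profession.strip())


# A few professions taken from the training list on this card.
for profession in ["doctor", "pilot", "sushi chef"]:
    print(build_prompt(profession))
```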

How to use this model:

    import requests

    API_URL = "https://api-inference.huggingface.co/models/NYUAD-ComNets/Middle_Eastern_Male_Profession_Model"
    # Replace the placeholder with your Hugging Face access token.
    headers = {"Authorization": "Bearer {hugging_face token}"}

    def query(payload):
        # Send the prompt to the hosted Inference API and return the raw response bytes.
        response = requests.post(API_URL, headers=headers, json=payload)
        return response.content

    image_bytes = query({
        "inputs": "a headshot of a person with green hair and eyeglasses",
        "parameters": {"negative_prompt": "cartoon", "seed": 766},
    })

    # You can access the image with PIL.Image, for example
    import io
    from PIL import Image

    image = Image.open(io.BytesIO(image_bytes))
    image
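The request above returns raw bytes whether or not the call succeeded, so a failed request (for instance, while the model is still loading) would only surface when PIL fails to open the bytes. A minimal sketch of a guard, assuming the endpoint signals errors with a non-200 status or a non-image `Content-Type`, and a JSON body with an `error` field; the helper name `image_bytes_or_raise` is hypothetical:

```python
import json


def image_bytes_or_raise(status_code: int, content_type: str, body: bytes) -> bytes:
    """Hypothetical guard: return the body only if it looks like an image."""
    if status_code == 200 and content_type.startswith("image/"):
        return body
    # Assumption: error responses carry JSON such as {"error": "..."}.
    try:
        message = json.loads(body.decode("utf-8")).get("error", "unknown error")
    except (ValueError, AttributeError):
        # Not JSON (or not a JSON object): fall back to a short text preview.
        message = body[:200].decode("utf-8", errors="replace")
    raise RuntimeError(f"Inference request failed ({status_code}): {message}")
```

With `requests`, this could be called as `image_bytes_or_raise(response.status_code, response.headers.get("content-type", ""), response.content)` before passing the result to `Image.open`.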

    # Alternatively, run the model locally with diffusers. This example loads the LoRA
    # adapters for all twelve demographic groups and activates one of them at random.
    import random

    import torch
    from compel import Compel, ReturnedEmbeddingsType
    from diffusers import DiffusionPipeline

    negative_prompt = "cartoon, anime, 3d, painting, b&w, low quality"

    models = [
        "NYUAD-ComNets/Asian_Female_Profession_Model", "NYUAD-ComNets/Black_Female_Profession_Model", "NYUAD-ComNets/White_Female_Profession_Model",
        "NYUAD-ComNets/Indian_Female_Profession_Model", "NYUAD-ComNets/Latino_Hispanic_Female_Profession_Model", "NYUAD-ComNets/Middle_Eastern_Female_Profession_Model",
        "NYUAD-ComNets/Asian_Male_Profession_Model", "NYUAD-ComNets/Black_Male_Profession_Model", "NYUAD-ComNets/White_Male_Profession_Model",
        "NYUAD-ComNets/Indian_Male_Profession_Model", "NYUAD-ComNets/Latino_Hispanic_Male_Profession_Model", "NYUAD-ComNets/Middle_Eastern_Male_Profession_Model",
    ]

    adapters = [
        "asian_female", "black_female", "white_female", "indian_female", "latino_female", "middle_east_female",
        "asian_male", "black_male", "white_male", "indian_male", "latino_male", "middle_east_male",
    ]

    pipeline = DiffusionPipeline.from_pretrained(
        "stabilityai/stable-diffusion-xl-base-1.0",
        variant="fp16", use_safetensors=True, torch_dtype=torch.float16,
    ).to("cuda")

    # Load each LoRA under its own adapter name, then activate one at random.
    for model_id, adapter_name in zip(models, adapters):
        pipeline.load_lora_weights(model_id, weight_name="pytorch_lora_weights.safetensors", adapter_name=adapter_name)

    pipeline.set_adapters(random.choice(adapters))

    # Compel builds embeddings for both SDXL text encoders; only the second returns a pooled output.
    compel = Compel(
        tokenizer=[pipeline.tokenizer, pipeline.tokenizer_2],
        text_encoder=[pipeline.text_encoder, pipeline.text_encoder_2],
        returned_embeddings_type=ReturnedEmbeddingsType.PENULTIMATE_HIDDEN_STATES_NON_NORMALIZED,
        requires_pooled=[False, True],
        truncate_long_prompts=False,
    )

    conditioning, pooled = compel("a photo of a doctor, looking at the camera, closeup headshot facing forward, ultra quality, sharp focus")
    negative_conditioning, negative_pooled = compel(negative_prompt)
    # Pad the positive and negative embeddings to the same sequence length.
    [conditioning, negative_conditioning] = compel.pad_conditioning_tensors_to_same_length([conditioning, negative_conditioning])

    image = pipeline(
        prompt_embeds=conditioning, negative_prompt_embeds=negative_conditioning,
        pooled_prompt_embeds=pooled, negative_pooled_prompt_embeds=negative_pooled,
        num_inference_steps=40,
    ).images[0]

    image.save('/../../x.jpg')  # replace with your own output path
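Because `random.choice(adapters)` draws uniformly, each of the twelve adapters is expected to be selected about equally often over many generations. A small sketch that checks this without loading any model; the seed and the number of draws are arbitrary values chosen for illustration:

```python
import random
from collections import Counter

adapters = [
    "asian_female", "black_female", "white_female", "indian_female",
    "latino_female", "middle_east_female",
    "asian_male", "black_male", "white_male", "indian_male",
    "latino_male", "middle_east_male",
]

rng = random.Random(0)  # fixed seed (arbitrary) so the draws are reproducible
draws = Counter(rng.choice(adapters) for _ in range(12_000))

# Each adapter should land near 12_000 / 12 = 1_000 selections.
for name in adapters:
    print(f"{name:20s} {draws[name]}")
```

In the local pipeline example, the chosen name would be passed to `pipeline.set_adapters(...)` before each generation.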


Training data

The NYUAD-ComNets/Middle_Eastern_Male_Profession dataset was used to fine-tune stabilityai/stable-diffusion-xl-base-1.0.

profession list = ['pilot', 'doctor', 'nurse', 'pharmacist', 'dietitian', 'professor', 'teacher', 'mathematics scientist', 'computer engineer', 'programmer', 'tailor', 'cleaner', 'soldier', 'security guard', 'lawyer', 'manager', 'accountant', 'secretary', 'singer', 'journalist', 'youtuber', 'tiktoker', 'fashion model', 'chef', 'sushi chef']

Configurations

LoRA for the text encoder was enabled: False.

Special VAE used for training: madebyollin/sdxl-vae-fp16-fix.

BibTeX entry and citation info

    @article{aldahoul2024ai,
      title={AI-generated faces free from racial and gender stereotypes},
      author={AlDahoul, Nouar and Rahwan, Talal and Zaki, Yasir},
      journal={arXiv preprint arXiv:2402.01002},
      year={2024}
    }

    @misc{ComNets,
      url={https://huggingface.co/NYUAD-ComNets/Middle_Eastern_Male_Profession_Model},
      title={Middle_Eastern_Male_Profession_Model},
      author={AlDahoul, Nouar and Rahwan, Talal and Zaki, Yasir}
    }
