Model Card: climate-skepticism-classifier

Model Overview

This model classifies climate change skepticism arguments, using Large Language Models (LLMs) to rebalance the training data. The base architecture is TinyBERT (huawei-noah/TinyBERT_General_4L_312D), fine-tuned with a class-weighted loss to handle the imbalanced dataset. On the validation set it reaches an overall accuracy of 0.83 (see Performance Metrics).

The model categorizes text into the following skepticism types:

- Fossil fuel necessity arguments

- Non-relevance claims

- Climate change denial

- Anthropogenic cause denial

- Impact minimization

- Bias allegations

- Scientific reliability questions

- Solution opposition

A distinguishing feature of this model is its use of LLM-based data rebalancing to address the class imbalance inherent in climate skepticism detection, with the aim of consistent performance across argument categories.

Dataset

- Source: Frugal AI Challenge Text Task Dataset

- Classes: 7 labels (listed under Class Mapping)

- Preprocessing: Tokenization using BertTokenizer with padding and truncation to a maximum sequence length of 128.
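To make the padding and truncation step concrete, the sketch below applies both to a plain list of token IDs. It is a simplified illustration, not BertTokenizer's implementation: the helper name `pad_or_truncate` is hypothetical, the pad ID of 0 is an assumption, and special tokens such as [CLS] and [SEP] are ignored.

```python
MAX_LENGTH = 128  # maximum sequence length stated above
PAD_ID = 0        # assumed padding token ID

def pad_or_truncate(token_ids, max_length=MAX_LENGTH, pad_id=PAD_ID):
    """Return (input_ids, attention_mask), both exactly max_length long."""
    kept = token_ids[:max_length]            # truncation: drop everything past the limit
    n_pad = max_length - len(kept)           # padding needed to reach the fixed length
    input_ids = kept + [pad_id] * n_pad
    attention_mask = [1] * len(kept) + [0] * n_pad  # 1 = real token, 0 = padding
    return input_ids, attention_mask

short_ids, short_mask = pad_or_truncate(list(range(1, 11)))   # 10 tokens
long_ids, long_mask = pad_or_truncate(list(range(1, 201)))    # 200 tokens
print(len(short_ids), sum(short_mask))
print(len(long_ids), sum(long_mask))
```

Every sequence comes out at the same length, which is what lets a batch be stacked into a single tensor; the attention mask tells the model which positions to ignore.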

Model Architecture

- Base Model: huawei-noah/TinyBERT_General_4L_312D

- Classification Head: cross-entropy loss.

- Number of Labels: 7

Training Details

- Optimizer: AdamW

- Learning Rate: 2e-5

- Batch Size: 16 (for both training and evaluation)

- Epochs: 3

- Weight Decay: 0.01

- Evaluation Strategy: Performed at the end of each epoch

- Hardware: Trained on GPUs for efficient computation
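For readers unfamiliar with AdamW, the sketch below performs one update on a single scalar parameter using the learning rate and weight decay listed above. The beta and epsilon values are common defaults and are assumptions, since this card does not state them; the function name `adamw_step` is illustrative.

```python
import math

LR = 2e-5            # learning rate from the list above
WEIGHT_DECAY = 0.01  # weight decay from the list above
BETA1, BETA2, EPS = 0.9, 0.999, 1e-8  # assumed defaults, not stated in this card

def adamw_step(param, grad, m, v, step):
    """One AdamW update for a scalar parameter; returns (param, m, v)."""
    # Decoupled weight decay: shrink the parameter directly, not via the gradient.
    param = param * (1.0 - LR * WEIGHT_DECAY)
    # Exponential moving averages of the gradient and the squared gradient.
    m = BETA1 * m + (1.0 - BETA1) * grad
    v = BETA2 * v + (1.0 - BETA2) * grad * grad
    # Bias correction for the zero-initialised averages (step counts from 1).
    m_hat = m / (1.0 - BETA1 ** step)
    v_hat = v / (1.0 - BETA2 ** step)
    param = param - LR * m_hat / (math.sqrt(v_hat) + EPS)
    return param, m, v

p, m, v = adamw_step(param=1.0, grad=0.5, m=0.0, v=0.0, step=1)
print(p)
```

With a learning rate this small, each step moves a weight by roughly 2e-5, which is typical for fine-tuning a pretrained transformer without disturbing what it already learned.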

Performance Metrics (Validation Set)

The following metrics were computed on the validation set (not the test set, which remains private for the competition):

| Class | Precision | Recall | F1-Score | Support |
|---|---|---|---|---|
| not_relevant | 0.88 | 0.82 | 0.85 | 130 |
| not_happening | 0.82 | 0.93 | 0.87 | 59 |
| not_human | 0.80 | 0.86 | 0.83 | 56 |
| not_bad | 0.87 | 0.84 | 0.85 | 31 |
| fossil_fuels_needed | 0.87 | 0.84 | 0.85 | 62 |
| science_unreliable | 0.78 | 0.77 | 0.77 | 64 |
| proponents_biased | 0.73 | 0.75 | 0.74 | 63 |

- Overall Accuracy: 0.83

- Macro Average: Precision: 0.82, Recall: 0.83, F1-Score: 0.83

- Weighted Average: Precision: 0.83, Recall: 0.83, F1-Score: 0.83
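The difference between the macro and weighted averages can be checked directly from the per-class rows. The sketch below recomputes both from the table values; because those values are already rounded to two decimals, the recomputed averages may differ from the reported ones by about 0.01. The names `REPORT`, `macro_average`, and `weighted_average` are illustrative.

```python
# (precision, recall, f1, support) per class, copied from the table above
REPORT = {
    "not_relevant":        (0.88, 0.82, 0.85, 130),
    "not_happening":       (0.82, 0.93, 0.87, 59),
    "not_human":           (0.80, 0.86, 0.83, 56),
    "not_bad":             (0.87, 0.84, 0.85, 31),
    "fossil_fuels_needed": (0.87, 0.84, 0.85, 62),
    "science_unreliable":  (0.78, 0.77, 0.77, 64),
    "proponents_biased":   (0.73, 0.75, 0.74, 63),
}

def macro_average(report, column):
    """Unweighted mean of one metric column: every class counts equally."""
    values = [row[column] for row in report.values()]
    return sum(values) / len(values)

def weighted_average(report, column):
    """Mean of one metric column, weighted by each class's support."""
    total = sum(row[3] for row in report.values())
    return sum(row[column] * row[3] for row in report.values()) / total

for name, col in (("precision", 0), ("recall", 1), ("f1", 2)):
    print(f"{name}: macro={macro_average(REPORT, col):.3f} "
          f"weighted={weighted_average(REPORT, col):.3f}")
```

In single-label classification the support-weighted recall equals overall accuracy, which is why both are reported as 0.83.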

Training Evolution

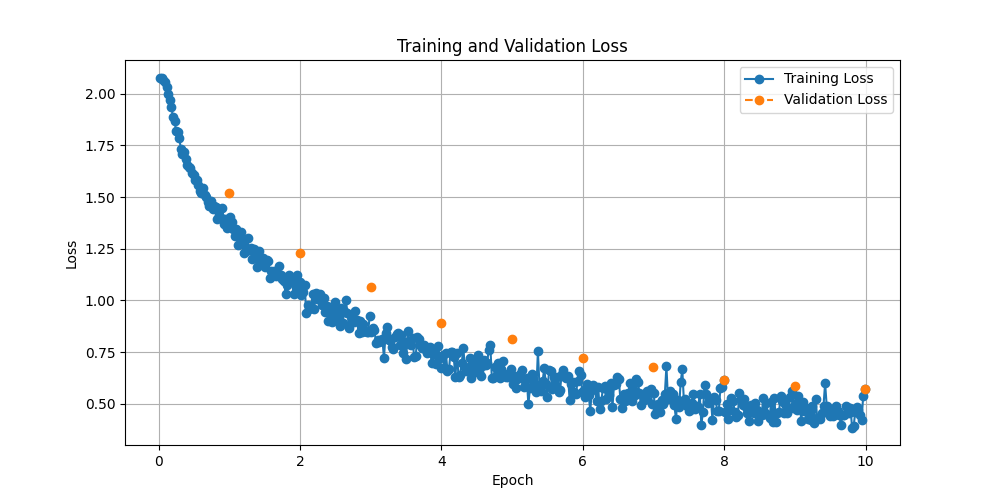

Training and Validation Loss

The training and validation loss evolution over epochs is shown below:

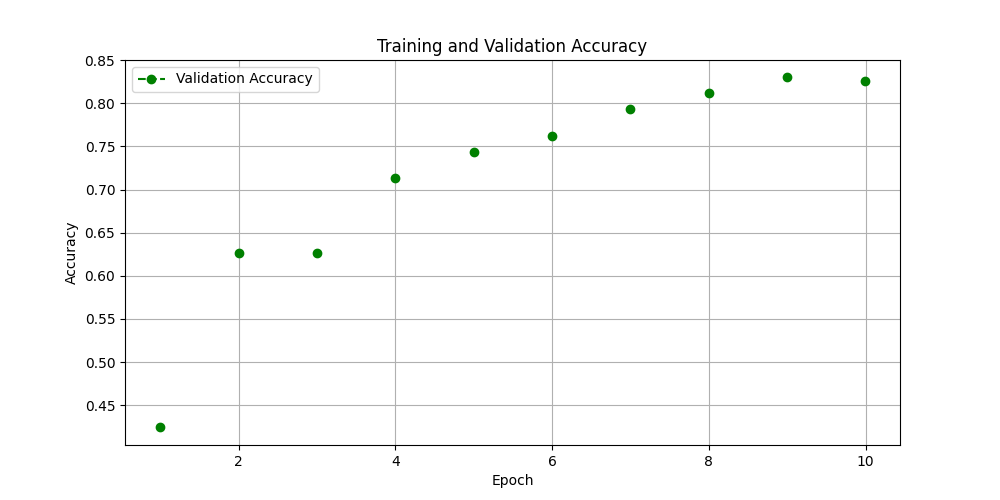

Validation Accuracy

The validation accuracy evolution over epochs is shown below:

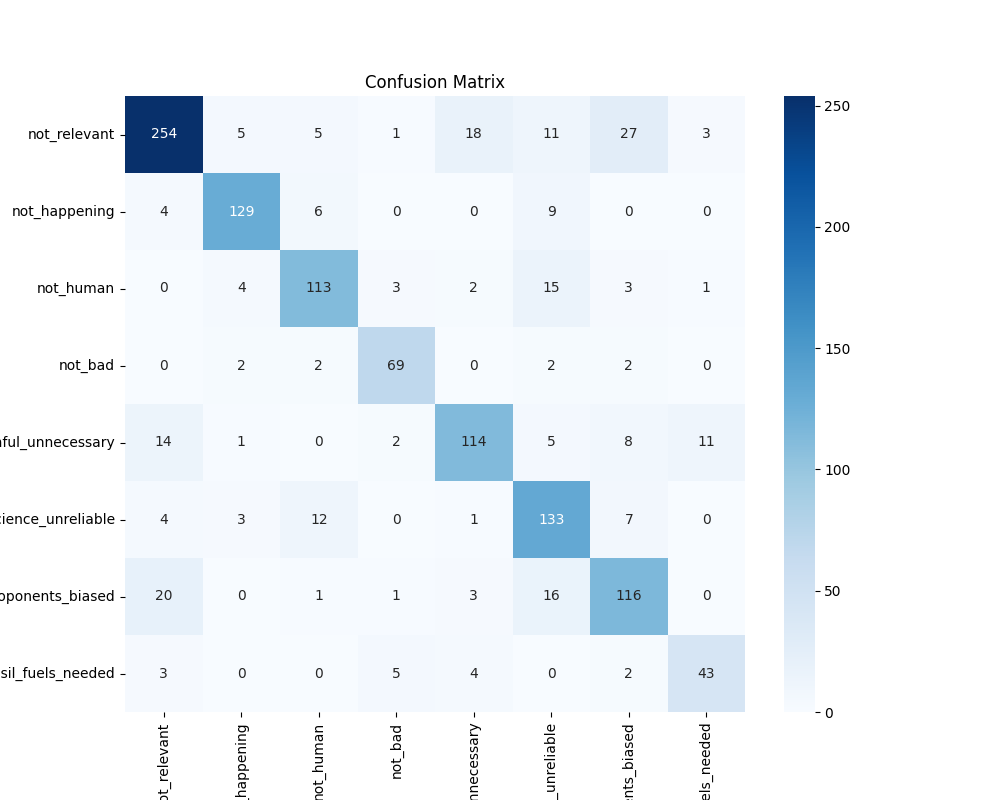

Confusion Matrix

The confusion matrix below illustrates the model's performance on the validation set, highlighting areas of strength and potential misclassifications:

Key Features

- Class Weighting: Addressed dataset imbalance by incorporating class weights during training.

- Custom Loss Function: Used weighted cross-entropy loss for better handling of underrepresented classes.

- Evaluation Metrics: Accuracy, precision, recall, and F1-score were computed to provide a comprehensive understanding of the model's performance.
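The class weighting and weighted cross-entropy described above can be sketched in plain Python. This card does not state how the weights were derived, so the inverse-frequency ("balanced") heuristic below is an assumption, and the validation supports stand in for the unreported training counts. The function names are illustrative.

```python
import math

# Illustrative per-class counts: validation supports from this card, used as a
# stand-in for the training distribution, which is not reported here.
CLASS_COUNTS = [130, 59, 56, 31, 62, 64, 63]

def balanced_class_weights(counts):
    """Inverse-frequency weights: total / (num_classes * count). Assumed heuristic."""
    total, n_classes = sum(counts), len(counts)
    return [total / (n_classes * c) for c in counts]

def weighted_cross_entropy(batch_logits, targets, weights):
    """Weighted mean of per-example negative log-likelihoods."""
    num, den = 0.0, 0.0
    for logits, target in zip(batch_logits, targets):
        peak = max(logits)  # subtract the max for a numerically stable log-sum-exp
        log_z = peak + math.log(sum(math.exp(x - peak) for x in logits))
        nll = log_z - logits[target]
        num += weights[target] * nll
        den += weights[target]
    return num / den  # normalise by the summed weights of the targets

weights = balanced_class_weights(CLASS_COUNTS)
batch = [[2.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0],   # example labelled not_relevant
         [0.0, 0.0, 0.0, 1.5, 0.0, 0.0, 0.0]]   # example labelled not_bad
loss = weighted_cross_entropy(batch, targets=[0, 3], weights=weights)
print([round(w, 2) for w in weights])
print(round(loss, 4))
```

Rare classes such as not_bad receive a larger weight, so an error on them contributes more to the loss than an error on the frequent not_relevant class. Normalising by the summed target weights mirrors the mean reduction of PyTorch's `torch.nn.CrossEntropyLoss(weight=...)`.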

Class Mapping

The mapping between model output indices and class names is as follows: 0: not_relevant, 1: not_happening, 2: not_human, 3: not_bad, 4: fossil_fuels_needed, 5: science_unreliable, 6: proponents_biased
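As a convenience, the mapping can be kept as a dictionary and used to turn raw logits into a readable label. This is a minimal sketch: the `decode` helper and the example logits are made up for illustration, and in practice the logits would come from the model, for instance `outputs.logits[0].tolist()` in the Usage section.

```python
import math

ID2LABEL = {
    0: "not_relevant",
    1: "not_happening",
    2: "not_human",
    3: "not_bad",
    4: "fossil_fuels_needed",
    5: "science_unreliable",
    6: "proponents_biased",
}
LABEL2ID = {label: idx for idx, label in ID2LABEL.items()}

def decode(logits):
    """Return (label, softmax probability) for the highest-scoring class."""
    if len(logits) != len(ID2LABEL):
        raise ValueError(f"expected {len(ID2LABEL)} logits, got {len(logits)}")
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]  # stable softmax numerators
    best = max(range(len(logits)), key=lambda i: logits[i])
    return ID2LABEL[best], exps[best] / sum(exps)

# Made-up logits for illustration only
label, prob = decode([0.1, 0.3, -1.2, 0.0, 2.5, 0.4, -0.7])
print(label, round(prob, 3))
```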

Usage

This model can be used for multi-class text classification tasks where the input text needs to be categorized into one of the seven classes listed under Class Mapping. It is particularly suited to datasets with class imbalance, thanks to its weighted loss function.

Example Usage

```python
import torch
from transformers import AutoModelForSequenceClassification, AutoTokenizer

# Load the fine-tuned model and tokenizer
model = AutoModelForSequenceClassification.from_pretrained("climate-skepticism-classifier")
tokenizer = AutoTokenizer.from_pretrained("climate-skepticism-classifier")
model.eval()  # make sure dropout is disabled for inference

# Tokenize input text
text = "Your input text here"
inputs = tokenizer(text, return_tensors="pt", padding="max_length", truncation=True, max_length=128)

# Perform inference without tracking gradients
with torch.no_grad():
    outputs = model(**inputs)

# Index of the highest-scoring class (see Class Mapping for the names)
predicted_class = outputs.logits.argmax(-1).item()
print(f"Predicted Class: {predicted_class}")
```

Limitations

- Performance may vary on extremely imbalanced datasets

- Requires significant computational resources for training

- Model performance is dependent on the quality of LLM-generated balanced data

- Inputs are truncated to 128 tokens, so the model may not perform optimally on longer texts

- May struggle with novel or evolving climate skepticism arguments

- Could be sensitive to subtle variations in argument framing

- May require periodic updates to capture emerging skepticism patterns

Citation

If you use this model, please cite:

```bibtex
@article{your_name2024climateskepticism,
  title={LLM-Rebalanced Transformer for Climate Change Skepticism Classification},
  author={Your Name},
  year={2024},
  journal={Preprint}
}
```

Acknowledgments

Special thanks to the Frugal AI Challenge organizers for providing the dataset and fostering innovation in AI research.
