metadata

pipeline_tag: text-to-image

widget:

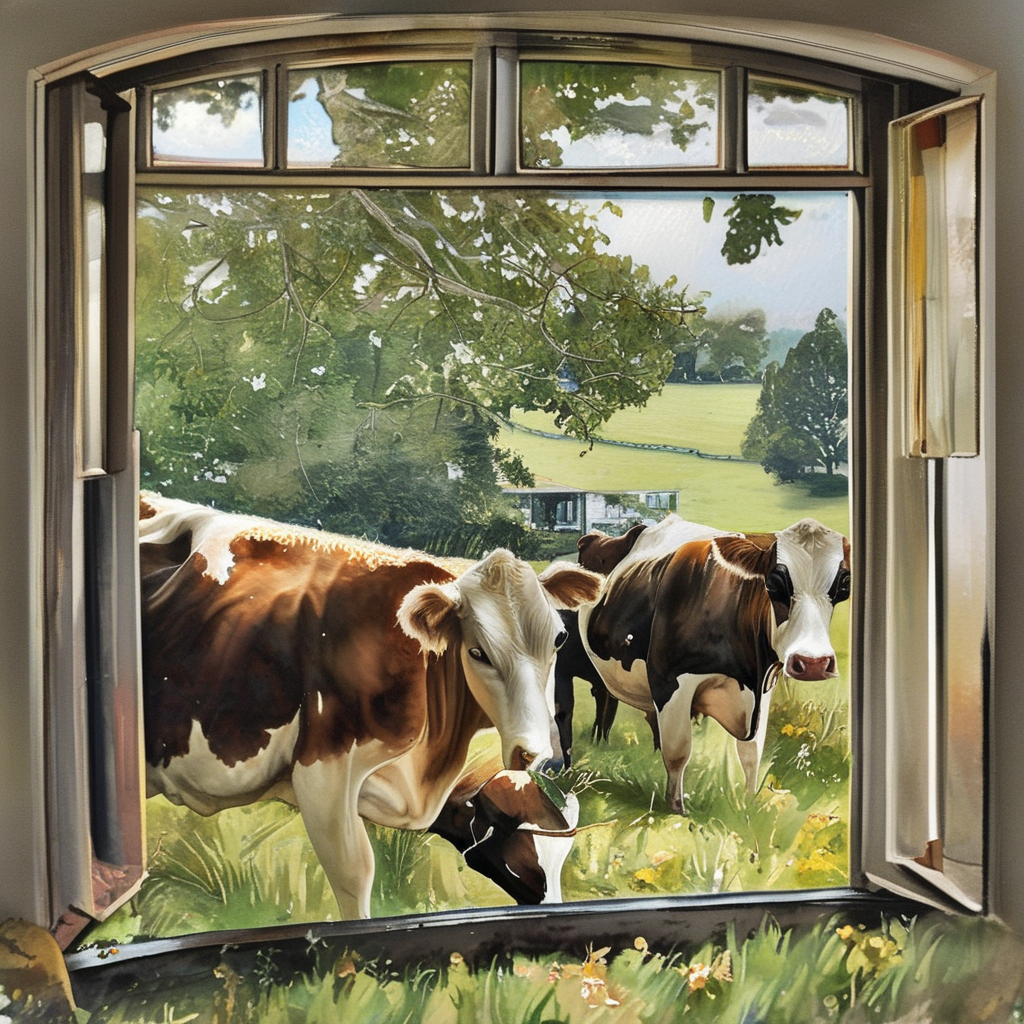

- text: Three cow grazing in a bay window

output:

url: cow.png

- text: >-

Super Closeup Portrait, action shot, Profoundly dark whitish meadow, glass

flowers, Stains, space grunge style, Jeanne d'Arc wearing White Olive

green used styled Cotton frock, Wielding thin silver sword, Sci-fi vibe,

dirty, noisy, Vintage monk style, very detailed, hd

output:

url: girl.png

- text: >-

spacious,circular underground room,{dirtied and bloodied white

tiles},amalgamation,flesh,plastic,dark fabric,core,pulsating

heart,limbs,human-like arms,twisted angelic wings,arms,covered in

skin,feathers,scales,undulate slowly,unseen current,convulsing,head

area,chaotic,mass of eyes,mouths,no human features,smaller

forms,cherubs,demons,golden wires,surround,holy light,tv static

effect,golden glow,shadows,terrifying essence,overwhelming

presence,nightmarish,landscape,sparse,cavernous,eerie,dynamic,motion,striking,awe-inspiring,nightmarish,nightmarish,nightmare,horrifying,bio-mechanical,body

horror,amalgamation

output:

url: aigle.png

license: gpl-3.0

This is the initial version of an image model trained on the Bittensor network in subnet 17. It is not yet expected to perform as well as Midjourney V6, but it does generate better images than the base SDXL model.

It was trained on the dataset from Subnet 19 (Vision).

Subnet 17 Checkpoint

- Model ID: gtsru/sn17-dek-012
- Revision: 5852d39e8413a377a3477b8278ade9af311f83a4
- UID: 42
- Perplexity: 1.1325
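To reproduce results against this exact checkpoint, it may help to pin the download to the revision listed above rather than the repository's latest commit. The following is a minimal sketch, assuming the repository is still publicly available and that `huggingface_hub` is installed; `CHECKPOINT` and `download_checkpoint` are hypothetical names introduced for illustration, and the card does not state which pipeline class the downloaded files load into.

```python
# Checkpoint coordinates copied from this card.
CHECKPOINT = {
    "repo_id": "gtsru/sn17-dek-012",
    "revision": "5852d39e8413a377a3477b8278ade9af311f83a4",
}


def download_checkpoint(local_dir=None):
    """Download the pinned commit and return the local folder path.

    Hypothetical helper: the import is done lazily so this module can be
    imported even when huggingface_hub is not installed.
    """
    from huggingface_hub import snapshot_download

    # Passing `revision` fixes the download to one commit hash, so later
    # pushes to the repository do not change what is fetched.
    return snapshot_download(local_dir=local_dir, **CHECKPOINT)
```

Calling `download_checkpoint()` with no arguments stores the files in the default Hugging Face cache; passing a `local_dir` places them in that folder instead.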

Settings for BitDiffusionV0.1

Use these settings for the best results with BitDiffusionV0.1:

- CFG scale: 8
- Steps: 40 to 60
- Sampler: DPM++ 2M SDE
- Scheduler: Karras
- Resolution: 1024x1024

For best results, also set a negative prompt (negative_prompt).
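The settings above are named the way web UIs name them, so here is one way they might be expressed for a diffusers pipeline. This is a sketch under assumptions: mapping "DPM++ 2M SDE" with the Karras schedule to `DPMSolverMultistepScheduler` with `algorithm_type="sde-dpmsolver++"` and `use_karras_sigmas=True` follows the scheduler overview in the diffusers documentation, and the names `RECOMMENDED`, `SCHEDULER_KWARGS`, `generation_kwargs`, and `apply_recommended_scheduler` are hypothetical helpers, not part of this model or of diffusers.

```python
# Recommended generation settings from this card, as keyword arguments
# for a diffusers pipeline call.
RECOMMENDED = {
    "guidance_scale": 8.0,      # CFG scale of 8
    "num_inference_steps": 50,  # any value from 40 to 60
    "width": 1024,
    "height": 1024,
}

# Assumed diffusers equivalent of "DPM++ 2M SDE" with Karras sigmas.
SCHEDULER_KWARGS = {
    "algorithm_type": "sde-dpmsolver++",
    "use_karras_sigmas": True,
}


def generation_kwargs(steps=50):
    """Return pipeline call kwargs, checking the recommended step range."""
    if not 40 <= steps <= 60:
        raise ValueError("this card recommends 40 to 60 steps")
    return {**RECOMMENDED, "num_inference_steps": steps}


def apply_recommended_scheduler(pipe):
    """Swap the pipeline's scheduler for the recommended sampler.

    The import is lazy so the helpers above work without diffusers.
    """
    from diffusers import DPMSolverMultistepScheduler

    # from_config reuses the existing scheduler settings and overrides
    # only the options given in SCHEDULER_KWARGS.
    pipe.scheduler = DPMSolverMultistepScheduler.from_config(
        pipe.scheduler.config, **SCHEDULER_KWARGS
    )
    return pipe
```

With a loaded pipeline `pipe`, usage would look like `apply_recommended_scheduler(pipe)` followed by `pipe(prompt, negative_prompt=negative_prompt, **generation_kwargs(45))`.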

Use it with 🧨 diffusers

    import torch
    from diffusers import (
        StableDiffusionXLPipeline,
        KDPM2AncestralDiscreteScheduler,
        AutoencoderKL
    )

    # Load VAE component
    vae = AutoencoderKL.from_pretrained(
        "madebyollin/sdxl-vae-fp16-fix",
        torch_dtype=torch.float16
    )

    # Configure the pipeline
    pipe = StableDiffusionXLPipeline.from_pretrained(
        "PlixAI/BitDiffusionV0.1",
        vae=vae,
        torch_dtype=torch.float16
    )
    pipe.scheduler = KDPM2AncestralDiscreteScheduler.from_config(pipe.scheduler.config)
    pipe.to('cuda')

    # Define prompts and generate image
    prompt = "black fluffy gorgeous dangerous cat animal creature, large orange eyes, big fluffy ears, piercing gaze, full moon, dark ambiance, best quality, extremely detailed"
    negative_prompt = "nsfw, bad quality, bad anatomy, worst quality, low quality, low resolutions, extra fingers, blur, blurry, ugly, wrongs proportions, watermark, image artifacts, lowres, ugly, jpeg artifacts, deformed, noisy image"

    image = pipe(
        prompt,
        negative_prompt=negative_prompt,
        width=1024,
        height=1024,
        guidance_scale=7.5,
        num_inference_steps=50
    ).images[0]

Training Subnet: https://github.com/PlixML/pixel

Data Subnet: https://github.com/namoray/vision