QuantFactory/shisa-gamma-7b-v1-GGUF

This is a quantized version of augmxnt/shisa-gamma-7b-v1, created using llama.cpp.
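llama.cpp stores quantized weights in small blocks that share a scale factor. The sketch below illustrates this with the simple 8-bit `Q8_0` scheme: blocks of 32 weights, scale = max|w| / 127, and each weight rounded to an int8. It is an assumption-laden simplification, not llama.cpp's code. This card does not list which quant types the repository ships. llama.cpp also stores each block's scale as fp16, which this sketch keeps as a Python float. The function names are hypothetical.

```python
import math

BLOCK_SIZE = 32  # Q8_0 groups weights into blocks of 32
# 32 int8 values plus one 16-bit scale per block -> 8.5 bits per weight
BITS_PER_WEIGHT = (BLOCK_SIZE * 8 + 16) / BLOCK_SIZE


def _round_half_away(v):
    """Round half away from zero (like C's roundf), unlike Python's round()."""
    return int(math.copysign(math.floor(abs(v) + 0.5), v))


def quantize_q8_0(weights):
    """Hypothetical helper: split a flat list of floats into (scale, int8 list) blocks."""
    blocks = []
    for start in range(0, len(weights), BLOCK_SIZE):
        block = weights[start:start + BLOCK_SIZE]
        amax = max(abs(w) for w in block)
        scale = amax / 127.0                   # largest magnitude maps to +/-127
        inv = 1.0 / scale if scale else 0.0    # all-zero block -> all-zero codes
        qs = [_round_half_away(w * inv) for w in block]
        blocks.append((scale, qs))
    return blocks


def dequantize_q8_0(blocks):
    """Hypothetical helper: reconstruct approximate floats from quantized blocks."""
    out = []
    for scale, qs in blocks:
        out.extend(q * scale for q in qs)
    return out
```

Because each code is a rounded multiple of its block's scale, the reconstruction error per weight is at most half that scale. Lower-bit GGUF types trade more of this error for smaller files.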

Model Description

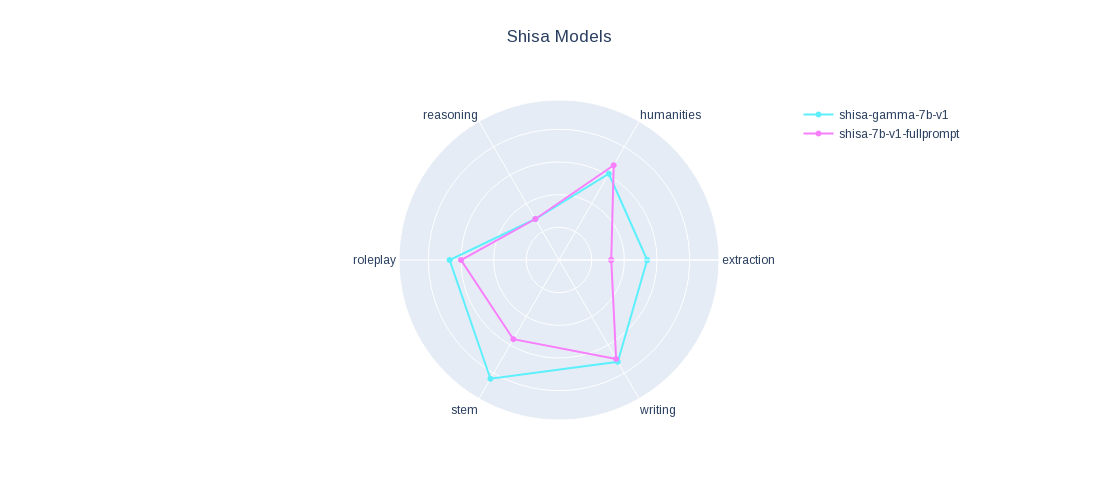

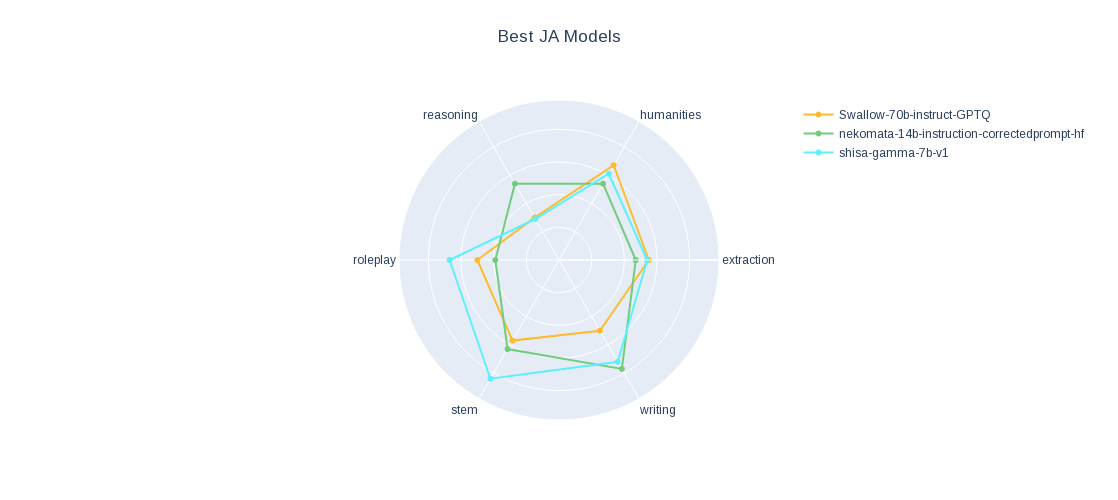

For more information, see our main Shisa 7B model.

We applied a version of our fine-tuning dataset to Japanese Stable LM Base Gamma 7B, and it performed pretty well. We're sharing it since it might be of interest.

Check out our JA MT-Bench results.

Model tree for QuantFactory/shisa-gamma-7b-v1-GGUF

- Base model: stabilityai/japanese-stablelm-base-gamma-7b
- Finetuned: augmxnt/shisa-gamma-7b-v1
- Quantized: QuantFactory/shisa-gamma-7b-v1-GGUF (this model)