---
language: sv
license: apache-2.0
tags:
- seq-2-seq asr
- whisper
datasets:
- mozilla-foundation/common_voice_11_0
library_name: torch
---

Model description

This model is a fine-tuned version of OpenAI's pre-trained Whisper small model (Source).

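The pre-trained Whisper model was trained on 680,000 hours of audio with corresponding transcripts collected from the internet. 65% of this audio was in English, and 83% of it had English transcripts; the gap between the two figures is non-English audio paired with English translations.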
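Applying the rounded percentages to the 680,000-hour total gives approximate hour counts for each share. This is only a back-of-the-envelope check, since the percentages themselves are rounded:

```latex
\begin{aligned}
0.65 \times 680\,000\ \text{h} &= 442\,000\ \text{h} \quad \text{(English audio)} \\
0.83 \times 680\,000\ \text{h} &= 564\,400\ \text{h} \quad \text{(English transcripts)} \\
564\,400\ \text{h} - 442\,000\ \text{h} &= 122\,400\ \text{h} \quad \text{(non-English audio with English transcripts)}
\end{aligned}
```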

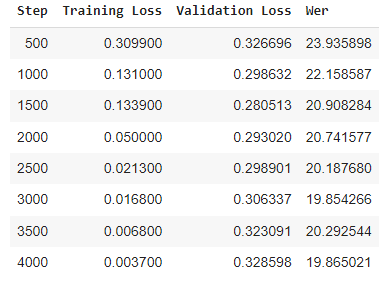

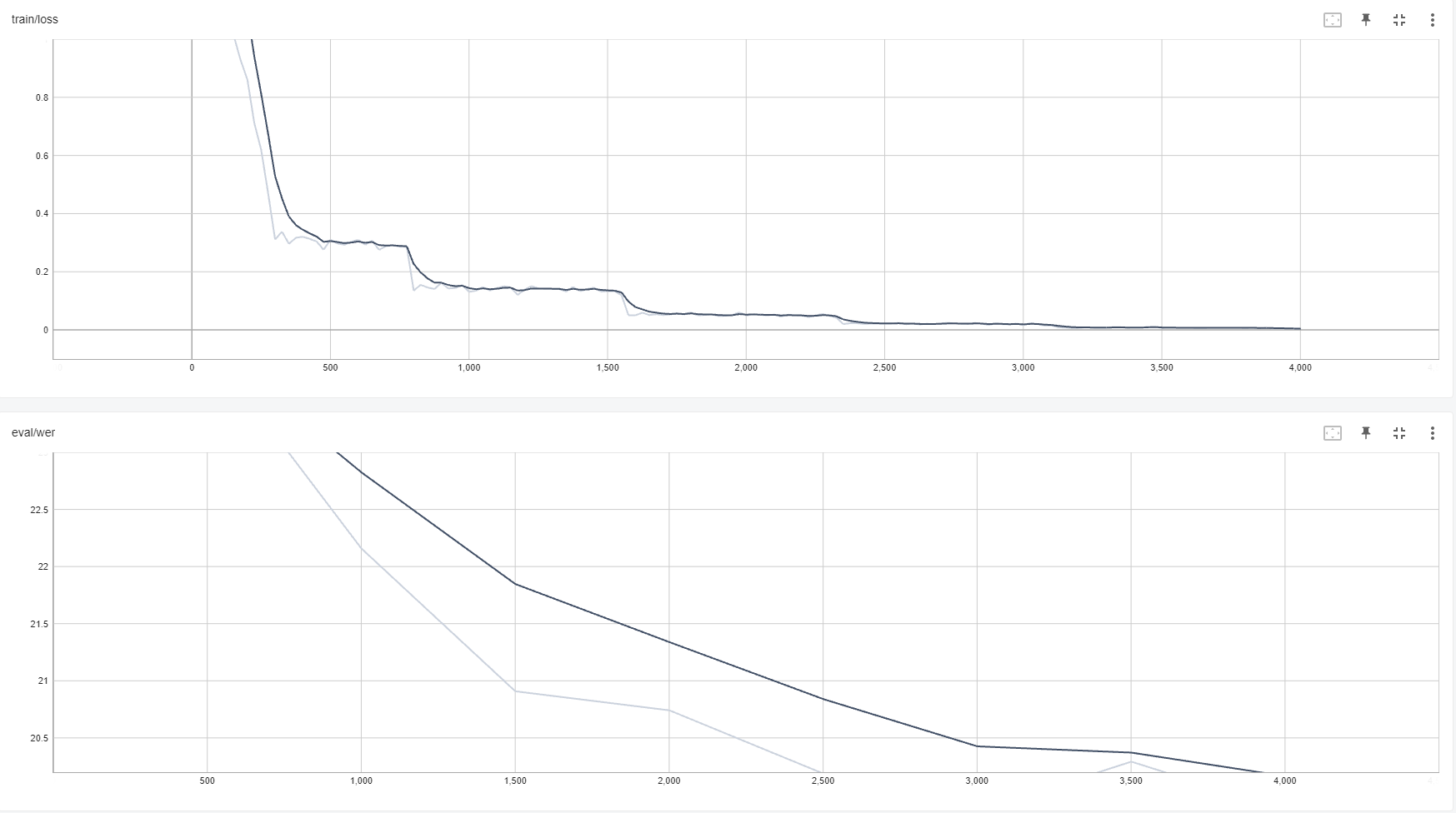

The model was then fine-tuned for a further 4,000 iterations, the first 500 of which were warm-up steps, on Swedish data from Common Voice 11.0, achieving a word error rate (WER) of 19.865.
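The two quantities in the sentence above can be illustrated with a minimal sketch. `lr_multiplier` scales the learning rate with 500 linear warm-up steps out of 4,000; what happens after warm-up is not stated here, so the sketch assumes linear decay to zero (a common choice, e.g. the default `linear` scheduler in Hugging Face `TrainingArguments`). `word_error_rate` computes WER as the word-level edit distance (substitutions + deletions + insertions) divided by the number of reference words, assuming simple whitespace tokenization with no text normalization. Both function names are hypothetical helpers, not part of this model's training code.

```python
def lr_multiplier(step: int, warmup_steps: int = 500, total_steps: int = 4000) -> float:
    """Learning-rate multiplier: linear warm-up, then (assumed) linear decay to 0."""
    if step < warmup_steps:
        # Ramp linearly from 0 up to 1 over the warm-up steps.
        return step / max(1, warmup_steps)
    # Assumption: decay linearly from 1 at the end of warm-up to 0 at total_steps.
    return max(0.0, (total_steps - step) / max(1, total_steps - warmup_steps))


def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / number of reference words."""
    # Assumption: plain whitespace tokenization, no casing/punctuation normalization.
    ref, hyp = reference.split(), hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev is the previous DP row: prev[j] is the edit distance between the
    # first i-1 reference words and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, h in enumerate(hyp, start=1):
            curr[j] = min(
                prev[j] + 1,             # deletion of a reference word
                curr[j - 1] + 1,         # insertion of a hypothesis word
                prev[j - 1] + (r != h),  # substitution (cost 0 if the words match)
            )
        prev = curr
    return prev[-1] / len(ref)


if __name__ == "__main__":
    print(lr_multiplier(250))   # 0.5, halfway through warm-up
    print(lr_multiplier(500))   # 1.0, peak learning rate
    print(lr_multiplier(4000))  # 0.0, end of training
    # One substituted word out of four reference words.
    print(word_error_rate("det är en katt", "det är en hund"))  # 0.25
```

The function returns a fraction; multiply by 100 to compare it with WER figures reported as percentages.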