url (string, length 58–61) | repository_url (string, 1 value) | labels_url (string, length 72–75) | comments_url (string, length 67–70) | events_url (string, length 65–68) | html_url (string, length 46–51) | id (int64, 599M–1.28B) | node_id (string, length 18–32) | number (int64, 1–4.56k) | title (string, length 1–276) | user (dict) | labels (list) | state (string, 2 values) | locked (bool, 1 class) | assignee (dict) | assignees (list) | milestone (dict) | comments (sequence) | created_at (int64, 1,587B–1,656B) | updated_at (int64, 1,587B–1,656B) | closed_at (int64, 1,587B–1,656B, nullable) | author_association (string, 3 values) | active_lock_reason (null) | draft (bool, 2 classes) | pull_request (dict) | body (string, length 0–228k, nullable) | reactions (dict) | timeline_url (string, length 67–70) | performed_via_github_app (null) | state_reason (string, 1 value) | is_pull_request (bool, 2 classes) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

https://api.github.com/repos/huggingface/datasets/issues/1711 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/1711/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/1711/comments | https://api.github.com/repos/huggingface/datasets/issues/1711/events | https://github.com/huggingface/datasets/pull/1711 | 782,129,083 | MDExOlB1bGxSZXF1ZXN0NTUxNzQxODA2 | 1,711 | Fix windows path scheme in cached path | {

"login": "lhoestq",

"id": 42851186,

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/lhoestq",

"html_url": "https://github.com/lhoestq",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [] | 1,610,113,556,000 | 1,610,357,000,000 | 1,610,356,999,000 | MEMBER | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/1711",

"html_url": "https://github.com/huggingface/datasets/pull/1711",

"diff_url": "https://github.com/huggingface/datasets/pull/1711.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/1711.patch",

"merged_at": 1610356999000

} | As noticed in #807 there's currently an issue with `cached_path` not raising `FileNotFoundError` on Windows for absolute paths. This is due to the way we check for a path to be local or not. The check on the scheme using `urlparse` was incomplete.

I fixed this and added tests | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/1711/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/1711/timeline | null | null | true |
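
The pull request above (#1711) attributes the bug to an incomplete scheme check based on `urlparse`. The sketch below illustrates why such a check can go wrong: a Windows absolute path has its drive letter parsed as a URL scheme. The helpers `is_remote_url` and `is_local_path` and the list of remote schemes are assumptions made for illustration only; they are not taken from the actual patch.

```python
from urllib.parse import urlparse

# Assumption: the set of schemes treated as remote is chosen for this example.
REMOTE_SCHEMES = ("http", "https", "ftp", "s3", "gs")


def is_remote_url(url_or_filename: str) -> bool:
    """Hypothetical helper: only known network schemes count as remote."""
    return urlparse(url_or_filename).scheme in REMOTE_SCHEMES


def is_local_path(url_or_filename: str) -> bool:
    """Hypothetical helper: anything that is not a known remote URL is local."""
    return not is_remote_url(url_or_filename)


windows_path = r"C:\Users\me\data\train.csv"

# The drive letter is parsed as a scheme, so a naive `scheme == ""` test
# would wrongly classify this path as non-local.
drive_scheme = urlparse(windows_path).scheme
print(repr(drive_scheme))
print(is_local_path(windows_path))
```

Checking against an explicit list of remote schemes, instead of testing for an empty scheme, is one way to keep drive letters from being mistaken for URLs; the real fix may use a different test.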

https://api.github.com/repos/huggingface/datasets/issues/1710 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/1710/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/1710/comments | https://api.github.com/repos/huggingface/datasets/issues/1710/events | https://github.com/huggingface/datasets/issues/1710 | 781,914,951 | MDU6SXNzdWU3ODE5MTQ5NTE= | 1,710 | IsADirectoryError when trying to download C4 | {

"login": "fredriko",

"id": 5771366,

"node_id": "MDQ6VXNlcjU3NzEzNjY=",

"avatar_url": "https://avatars.githubusercontent.com/u/5771366?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/fredriko",

"html_url": "https://github.com/fredriko",

"followers_url": "https://api.github.com/users/fredriko/followers",

"following_url": "https://api.github.com/users/fredriko/following{/other_user}",

"gists_url": "https://api.github.com/users/fredriko/gists{/gist_id}",

"starred_url": "https://api.github.com/users/fredriko/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/fredriko/subscriptions",

"organizations_url": "https://api.github.com/users/fredriko/orgs",

"repos_url": "https://api.github.com/users/fredriko/repos",

"events_url": "https://api.github.com/users/fredriko/events{/privacy}",

"received_events_url": "https://api.github.com/users/fredriko/received_events",

"type": "User",

"site_admin": false

} | [] | open | false | null | [] | null | [

"I haven't tested C4 on my side so there may be a few bugs in the code/adjustments to make.\r\nHere it looks like in c4.py, line 190 one of the `files_to_download` is `'/'` which is invalid.\r\nValid files are paths to local files or URLs to remote files."

] | 1,610,091,090,000 | 1,610,531,053,000 | null | NONE | null | null | null | **TLDR**:

I fail to download C4 and see a stacktrace originating in `IsADirectoryError` as an explanation for failure.

How can the problem be fixed?

**VERBOSE**:

I use Python version 3.7 and have the following dependencies listed in my project:

```

datasets==1.2.0

apache-beam==2.26.0

```

When running the following code, where `/data/huggingface/unpacked/` contains a single unzipped `wet.paths` file manually downloaded as per the instructions for C4:

```

from datasets import load_dataset

load_dataset("c4", "en", data_dir="/data/huggingface/unpacked", beam_runner='DirectRunner')

```

I get the following stacktrace:

```

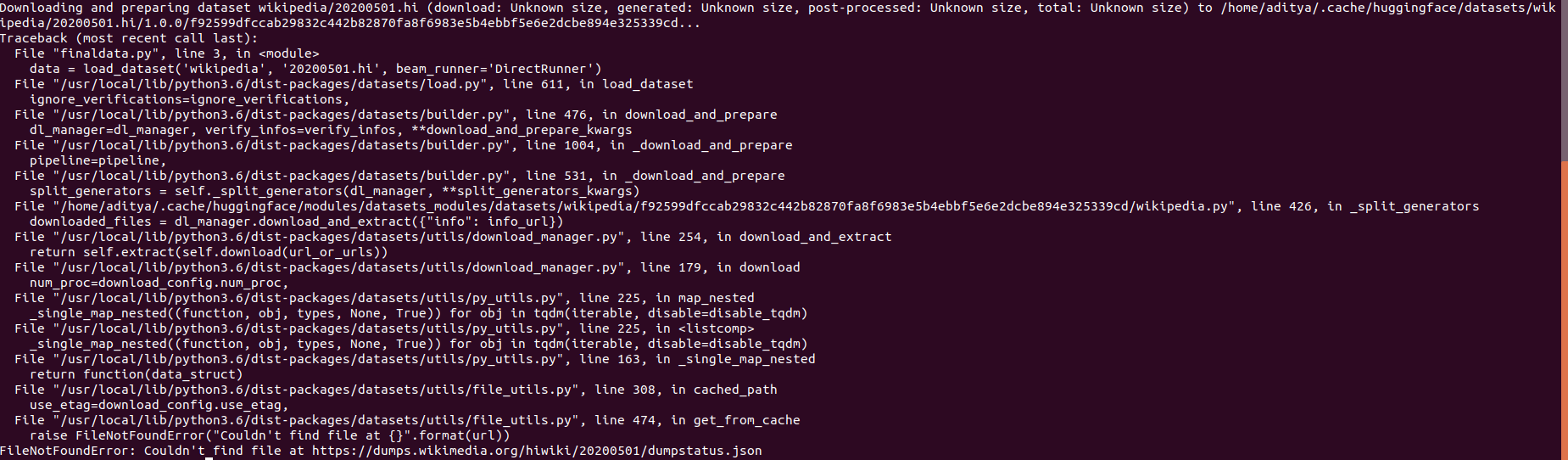

/Users/fredriko/venv/misc/bin/python /Users/fredriko/source/misc/main.py

Downloading and preparing dataset c4/en (download: Unknown size, generated: Unknown size, post-processed: Unknown size, total: Unknown size) to /Users/fredriko/.cache/huggingface/datasets/c4/en/2.3.0/8304cf264cc42bdebcb13fca4b9cb36368a96f557d36f9dc969bebbe2568b283...

Traceback (most recent call last):

File "/Users/fredriko/source/misc/main.py", line 3, in <module>

load_dataset("c4", "en", data_dir="/data/huggingface/unpacked", beam_runner='DirectRunner')

File "/Users/fredriko/venv/misc/lib/python3.7/site-packages/datasets/load.py", line 612, in load_dataset

ignore_verifications=ignore_verifications,

File "/Users/fredriko/venv/misc/lib/python3.7/site-packages/datasets/builder.py", line 527, in download_and_prepare

dl_manager=dl_manager, verify_infos=verify_infos, **download_and_prepare_kwargs

File "/Users/fredriko/venv/misc/lib/python3.7/site-packages/datasets/builder.py", line 1066, in _download_and_prepare

pipeline=pipeline,

File "/Users/fredriko/venv/misc/lib/python3.7/site-packages/datasets/builder.py", line 582, in _download_and_prepare

split_generators = self._split_generators(dl_manager, **split_generators_kwargs)

File "/Users/fredriko/.cache/huggingface/modules/datasets_modules/datasets/c4/8304cf264cc42bdebcb13fca4b9cb36368a96f557d36f9dc969bebbe2568b283/c4.py", line 190, in _split_generators

file_paths = dl_manager.download_and_extract(files_to_download)

File "/Users/fredriko/venv/misc/lib/python3.7/site-packages/datasets/utils/download_manager.py", line 258, in download_and_extract

return self.extract(self.download(url_or_urls))

File "/Users/fredriko/venv/misc/lib/python3.7/site-packages/datasets/utils/download_manager.py", line 189, in download

self._record_sizes_checksums(url_or_urls, downloaded_path_or_paths)

File "/Users/fredriko/venv/misc/lib/python3.7/site-packages/datasets/utils/download_manager.py", line 117, in _record_sizes_checksums

self._recorded_sizes_checksums[str(url)] = get_size_checksum_dict(path)

File "/Users/fredriko/venv/misc/lib/python3.7/site-packages/datasets/utils/info_utils.py", line 80, in get_size_checksum_dict

with open(path, "rb") as f:

IsADirectoryError: [Errno 21] Is a directory: '/'

Process finished with exit code 1

``` | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/1710/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/1710/timeline | null | null | false |
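
The comment on issue #1710 above says that valid entries are paths to local files or URLs to remote files, and that `'/'` is neither. A minimal sketch of a pre-download check along those lines follows. The function `find_invalid_entries` and the `REMOTE_SCHEMES` tuple are hypothetical names introduced here; they are not part of the `datasets` API or of `c4.py`.

```python
import os
from urllib.parse import urlparse

# Assumption: only these schemes are treated as remote in this example.
REMOTE_SCHEMES = ("http", "https")


def find_invalid_entries(files_to_download):
    """Hypothetical check: return entries that are neither remote URLs nor existing local files."""
    invalid = []
    for entry in files_to_download:
        if urlparse(entry).scheme in REMOTE_SCHEMES:
            continue  # remote URL, nothing to verify locally
        if not os.path.isfile(entry):
            invalid.append(entry)  # directory, missing file, or empty string
    return invalid


entries = ["https://example.com/shard-0.json.gz", "/"]
print(find_invalid_entries(entries))
```

Running a check like this before handing the list to the download manager would surface a stray directory entry with a clearer message than the `IsADirectoryError` raised later during checksum computation.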

https://api.github.com/repos/huggingface/datasets/issues/1709 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/1709/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/1709/comments | https://api.github.com/repos/huggingface/datasets/issues/1709/events | https://github.com/huggingface/datasets/issues/1709 | 781,875,640 | MDU6SXNzdWU3ODE4NzU2NDA= | 1,709 | Databases | {

"login": "JimmyJim1",

"id": 68724553,

"node_id": "MDQ6VXNlcjY4NzI0NTUz",

"avatar_url": "https://avatars.githubusercontent.com/u/68724553?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/JimmyJim1",

"html_url": "https://github.com/JimmyJim1",

"followers_url": "https://api.github.com/users/JimmyJim1/followers",

"following_url": "https://api.github.com/users/JimmyJim1/following{/other_user}",

"gists_url": "https://api.github.com/users/JimmyJim1/gists{/gist_id}",

"starred_url": "https://api.github.com/users/JimmyJim1/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/JimmyJim1/subscriptions",

"organizations_url": "https://api.github.com/users/JimmyJim1/orgs",

"repos_url": "https://api.github.com/users/JimmyJim1/repos",

"events_url": "https://api.github.com/users/JimmyJim1/events{/privacy}",

"received_events_url": "https://api.github.com/users/JimmyJim1/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [] | 1,610,086,443,000 | 1,610,096,408,000 | 1,610,096,408,000 | NONE | null | null | null | ## Adding a Dataset

- **Name:** *name of the dataset*

- **Description:** *short description of the dataset (or link to social media or blog post)*

- **Paper:** *link to the dataset paper if available*

- **Data:** *link to the Github repository or current dataset location*

- **Motivation:** *what are some good reasons to have this dataset*

Instructions to add a new dataset can be found [here](https://github.com/huggingface/datasets/blob/master/ADD_NEW_DATASET.md). | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/1709/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/1709/timeline | null | completed | false |

https://api.github.com/repos/huggingface/datasets/issues/1708 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/1708/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/1708/comments | https://api.github.com/repos/huggingface/datasets/issues/1708/events | https://github.com/huggingface/datasets/issues/1708 | 781,631,455 | MDU6SXNzdWU3ODE2MzE0NTU= | 1,708 | <html dir="ltr" lang="en" class="focus-outline-visible"><head><meta http-equiv="Content-Type" content="text/html; charset=UTF-8"> | {

"login": "Louiejay54",

"id": 77126849,

"node_id": "MDQ6VXNlcjc3MTI2ODQ5",

"avatar_url": "https://avatars.githubusercontent.com/u/77126849?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/Louiejay54",

"html_url": "https://github.com/Louiejay54",

"followers_url": "https://api.github.com/users/Louiejay54/followers",

"following_url": "https://api.github.com/users/Louiejay54/following{/other_user}",

"gists_url": "https://api.github.com/users/Louiejay54/gists{/gist_id}",

"starred_url": "https://api.github.com/users/Louiejay54/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/Louiejay54/subscriptions",

"organizations_url": "https://api.github.com/users/Louiejay54/orgs",

"repos_url": "https://api.github.com/users/Louiejay54/repos",

"events_url": "https://api.github.com/users/Louiejay54/events{/privacy}",

"received_events_url": "https://api.github.com/users/Louiejay54/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [] | 1,610,055,924,000 | 1,610,096,401,000 | 1,610,096,401,000 | NONE | null | null | null | ## Adding a Dataset

- **Name:** *name of the dataset*

- **Description:** *short description of the dataset (or link to social media or blog post)*

- **Paper:** *link to the dataset paper if available*

- **Data:** *link to the Github repository or current dataset location*

- **Motivation:** *what are some good reasons to have this dataset*

Instructions to add a new dataset can be found [here](https://github.com/huggingface/datasets/blob/master/ADD_NEW_DATASET.md). | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/1708/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/1708/timeline | null | completed | false |

https://api.github.com/repos/huggingface/datasets/issues/1707 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/1707/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/1707/comments | https://api.github.com/repos/huggingface/datasets/issues/1707/events | https://github.com/huggingface/datasets/pull/1707 | 781,507,545 | MDExOlB1bGxSZXF1ZXN0NTUxMjE5MDk2 | 1,707 | Added generated READMEs for datasets that were missing one. | {

"login": "madlag",

"id": 272253,

"node_id": "MDQ6VXNlcjI3MjI1Mw==",

"avatar_url": "https://avatars.githubusercontent.com/u/272253?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/madlag",

"html_url": "https://github.com/madlag",

"followers_url": "https://api.github.com/users/madlag/followers",

"following_url": "https://api.github.com/users/madlag/following{/other_user}",

"gists_url": "https://api.github.com/users/madlag/gists{/gist_id}",

"starred_url": "https://api.github.com/users/madlag/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/madlag/subscriptions",

"organizations_url": "https://api.github.com/users/madlag/orgs",

"repos_url": "https://api.github.com/users/madlag/repos",

"events_url": "https://api.github.com/users/madlag/events{/privacy}",

"received_events_url": "https://api.github.com/users/madlag/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"Looks like we need to trim the ones with too many configs, will look into it tomorrow!"

] | 1,610,043,006,000 | 1,610,980,353,000 | 1,610,980,353,000 | CONTRIBUTOR | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/1707",

"html_url": "https://github.com/huggingface/datasets/pull/1707",

"diff_url": "https://github.com/huggingface/datasets/pull/1707.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/1707.patch",

"merged_at": 1610980353000

} | This is it: we worked on a generator with Yacine @yjernite , and we generated dataset cards for all missing ones (161), with all the information we could gather from the datasets repository, and using dummy_data to generate examples when possible.

Code is available here for the moment: https://github.com/madlag/datasets_readme_generator .

We will move it to a Hugging Face repository and to https://huggingface.co/datasets/card-creator/ later.

| {

"url": "https://api.github.com/repos/huggingface/datasets/issues/1707/reactions",

"total_count": 2,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 2,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/1707/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/1706 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/1706/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/1706/comments | https://api.github.com/repos/huggingface/datasets/issues/1706/events | https://github.com/huggingface/datasets/issues/1706 | 781,494,476 | MDU6SXNzdWU3ODE0OTQ0NzY= | 1,706 | Error when downloading a large dataset on slow connection. | {

"login": "lucadiliello",

"id": 23355969,

"node_id": "MDQ6VXNlcjIzMzU1OTY5",

"avatar_url": "https://avatars.githubusercontent.com/u/23355969?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/lucadiliello",

"html_url": "https://github.com/lucadiliello",

"followers_url": "https://api.github.com/users/lucadiliello/followers",

"following_url": "https://api.github.com/users/lucadiliello/following{/other_user}",

"gists_url": "https://api.github.com/users/lucadiliello/gists{/gist_id}",

"starred_url": "https://api.github.com/users/lucadiliello/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lucadiliello/subscriptions",

"organizations_url": "https://api.github.com/users/lucadiliello/orgs",

"repos_url": "https://api.github.com/users/lucadiliello/repos",

"events_url": "https://api.github.com/users/lucadiliello/events{/privacy}",

"received_events_url": "https://api.github.com/users/lucadiliello/received_events",

"type": "User",

"site_admin": false

} | [] | open | false | null | [] | null | [

"Hi ! Is this an issue you have with `openwebtext` specifically or also with other datasets ?\r\n\r\nIt looks like the downloaded file is corrupted and can't be extracted using `tarfile`.\r\nCould you try loading it again with \r\n```python\r\nimport datasets\r\ndatasets.load_dataset(\"openwebtext\", download_mode=\"force_redownload\")\r\n```"

] | 1,610,041,695,000 | 1,610,534,102,000 | null | CONTRIBUTOR | null | null | null | I receive the following error after about an hour trying to download the `openwebtext` dataset.

The code used is:

```python

import datasets

datasets.load_dataset("openwebtext")

```

> Traceback (most recent call last): [4/28]

> File "<stdin>", line 1, in <module>

> File "/home/lucadiliello/anaconda3/envs/nlp/lib/python3.7/site-packages/datasets/load.py", line 610, in load_dataset

> ignore_verifications=ignore_verifications,

> File "/home/lucadiliello/anaconda3/envs/nlp/lib/python3.7/site-packages/datasets/builder.py", line 515, in download_and_prepare

> dl_manager=dl_manager, verify_infos=verify_infos, **download_and_prepare_kwargs

> File "/home/lucadiliello/anaconda3/envs/nlp/lib/python3.7/site-packages/datasets/builder.py", line 570, in _download_and_prepare

> split_generators = self._split_generators(dl_manager, **split_generators_kwargs)

> File "/home/lucadiliello/.cache/huggingface/modules/datasets_modules/datasets/openwebtext/5c636399c7155da97c982d0d70ecdce30fbca66a4eb4fc768ad91f8331edac02/openwebtext.py", line 62, in _split_generators

> dl_dir = dl_manager.download_and_extract(_URL)

> File "/home/lucadiliello/anaconda3/envs/nlp/lib/python3.7/site-packages/datasets/utils/download_manager.py", line 254, in download_and_extract

> return self.extract(self.download(url_or_urls))

> File "/home/lucadiliello/anaconda3/envs/nlp/lib/python3.7/site-packages/datasets/utils/download_manager.py", line 235, in extract

> num_proc=num_proc,

> File "/home/lucadiliello/anaconda3/envs/nlp/lib/python3.7/site-packages/datasets/utils/py_utils.py", line 225, in map_nested

> return function(data_struct)

> File "/home/lucadiliello/anaconda3/envs/nlp/lib/python3.7/site-packages/datasets/utils/file_utils.py", line 343, in cached_path

> tar_file.extractall(output_path_extracted)

> File "/home/lucadiliello/anaconda3/envs/nlp/lib/python3.7/tarfile.py", line 2000, in extractall

> numeric_owner=numeric_owner)

> File "/home/lucadiliello/anaconda3/envs/nlp/lib/python3.7/tarfile.py", line 2042, in extract

> numeric_owner=numeric_owner)

> File "/home/lucadiliello/anaconda3/envs/nlp/lib/python3.7/tarfile.py", line 2112, in _extract_member

> self.makefile(tarinfo, targetpath)

> File "/home/lucadiliello/anaconda3/envs/nlp/lib/python3.7/tarfile.py", line 2161, in makefile

> copyfileobj(source, target, tarinfo.size, ReadError, bufsize)

> File "/home/lucadiliello/anaconda3/envs/nlp/lib/python3.7/tarfile.py", line 253, in copyfileobj

> buf = src.read(remainder)

> File "/home/lucadiliello/anaconda3/envs/nlp/lib/python3.7/lzma.py", line 200, in read

> return self._buffer.read(size)

> File "/home/lucadiliello/anaconda3/envs/nlp/lib/python3.7/_compression.py", line 68, in readinto

> data = self.read(len(byte_view))

> File "/home/lucadiliello/anaconda3/envs/nlp/lib/python3.7/_compression.py", line 99, in read

> raise EOFError("Compressed file ended before the "

> EOFError: Compressed file ended before the end-of-stream marker was reached

| {

"url": "https://api.github.com/repos/huggingface/datasets/issues/1706/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/1706/timeline | null | null | false |
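
The comment on issue #1706 above suggests the downloaded archive is corrupted and cannot be extracted with `tarfile`. The following sketch shows one way to detect a truncated archive before extraction, by reading every member to the end. The helper `archive_is_complete` is a hypothetical name and is not part of the `datasets` library; the demo uses a small synthetic archive rather than `openwebtext`.

```python
import io
import lzma
import os
import tarfile
import tempfile
import zlib


def archive_is_complete(path: str) -> bool:
    """Hypothetical helper: True if every member of the tar archive can be read in full."""
    try:
        with tarfile.open(path, "r:*") as tar:
            for member in tar:
                if member.isfile():
                    fileobj = tar.extractfile(member)
                    # Read in 1 MiB chunks and discard; only errors matter here.
                    while fileobj.read(1 << 20):
                        pass
        return True
    except (EOFError, tarfile.ReadError, lzma.LZMAError, zlib.error, OSError):
        return False


# Build a small .tar.xz archive with an incompressible payload.
workdir = tempfile.mkdtemp()
archive = os.path.join(workdir, "sample.tar.xz")
payload = os.urandom(200_000)
with tarfile.open(archive, "w:xz") as tar:
    info = tarfile.TarInfo("data.bin")
    info.size = len(payload)
    tar.addfile(info, io.BytesIO(payload))

complete_ok = archive_is_complete(archive)

# Simulate an interrupted download by keeping only the first half of the file.
truncated = os.path.join(workdir, "truncated.tar.xz")
with open(archive, "rb") as src, open(truncated, "wb") as dst:
    dst.write(src.read()[: os.path.getsize(archive) // 2])

truncated_ok = archive_is_complete(truncated)
print(complete_ok, truncated_ok)
```

If such a check fails, reloading with `download_mode="force_redownload"`, as suggested in the comment, fetches the archive again instead of reusing the incomplete cached copy.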

https://api.github.com/repos/huggingface/datasets/issues/1705 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/1705/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/1705/comments | https://api.github.com/repos/huggingface/datasets/issues/1705/events | https://github.com/huggingface/datasets/pull/1705 | 781,474,949 | MDExOlB1bGxSZXF1ZXN0NTUxMTkyMTc4 | 1,705 | Add information about caching and verifications in "Load a Dataset" docs | {

"login": "SBrandeis",

"id": 33657802,

"node_id": "MDQ6VXNlcjMzNjU3ODAy",

"avatar_url": "https://avatars.githubusercontent.com/u/33657802?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/SBrandeis",

"html_url": "https://github.com/SBrandeis",

"followers_url": "https://api.github.com/users/SBrandeis/followers",

"following_url": "https://api.github.com/users/SBrandeis/following{/other_user}",

"gists_url": "https://api.github.com/users/SBrandeis/gists{/gist_id}",

"starred_url": "https://api.github.com/users/SBrandeis/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/SBrandeis/subscriptions",

"organizations_url": "https://api.github.com/users/SBrandeis/orgs",

"repos_url": "https://api.github.com/users/SBrandeis/repos",

"events_url": "https://api.github.com/users/SBrandeis/events{/privacy}",

"received_events_url": "https://api.github.com/users/SBrandeis/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 1935892861,

"node_id": "MDU6TGFiZWwxOTM1ODkyODYx",

"url": "https://api.github.com/repos/huggingface/datasets/labels/documentation",

"name": "documentation",

"color": "0075ca",

"default": true,

"description": "Improvements or additions to documentation"

}

] | closed | false | null | [] | null | [] | 1,610,039,924,000 | 1,610,460,481,000 | 1,610,460,481,000 | CONTRIBUTOR | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/1705",

"html_url": "https://github.com/huggingface/datasets/pull/1705",

"diff_url": "https://github.com/huggingface/datasets/pull/1705.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/1705.patch",

"merged_at": 1610460481000

} | Related to #215.

Missing improvements from @lhoestq's #1703. | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/1705/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/1705/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/1704 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/1704/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/1704/comments | https://api.github.com/repos/huggingface/datasets/issues/1704/events | https://github.com/huggingface/datasets/pull/1704 | 781,402,757 | MDExOlB1bGxSZXF1ZXN0NTUxMTMyNDI1 | 1,704 | Update XSUM Factuality DatasetCard | {

"login": "vineeths96",

"id": 50873201,

"node_id": "MDQ6VXNlcjUwODczMjAx",

"avatar_url": "https://avatars.githubusercontent.com/u/50873201?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/vineeths96",

"html_url": "https://github.com/vineeths96",

"followers_url": "https://api.github.com/users/vineeths96/followers",

"following_url": "https://api.github.com/users/vineeths96/following{/other_user}",

"gists_url": "https://api.github.com/users/vineeths96/gists{/gist_id}",

"starred_url": "https://api.github.com/users/vineeths96/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/vineeths96/subscriptions",

"organizations_url": "https://api.github.com/users/vineeths96/orgs",

"repos_url": "https://api.github.com/users/vineeths96/repos",

"events_url": "https://api.github.com/users/vineeths96/events{/privacy}",

"received_events_url": "https://api.github.com/users/vineeths96/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [] | 1,610,033,834,000 | 1,610,458,204,000 | 1,610,458,204,000 | CONTRIBUTOR | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/1704",

"html_url": "https://github.com/huggingface/datasets/pull/1704",

"diff_url": "https://github.com/huggingface/datasets/pull/1704.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/1704.patch",

"merged_at": 1610458204000

} | Update XSUM Factuality DatasetCard | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/1704/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/1704/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/1703 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/1703/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/1703/comments | https://api.github.com/repos/huggingface/datasets/issues/1703/events | https://github.com/huggingface/datasets/pull/1703 | 781,395,146 | MDExOlB1bGxSZXF1ZXN0NTUxMTI2MjA5 | 1,703 | Improvements regarding caching and fingerprinting | {

"login": "lhoestq",

"id": 42851186,

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/lhoestq",

"html_url": "https://github.com/lhoestq",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"A few comments here for discussion:\r\n- I'm not convinced yet the end user should really have to understand the difference between \"caching\" and \"fingerprinting\", what do you think? I think fingerprinting should probably stay as an internal thing. Is there a case where we want caching without fingerprinting or vice-versa?\r\n- while I think the random fingerprint mechanism is smart, I have one question: when we disable caching or fingerprinting we also probably don't want the disk usage to grow so we should then try to keep only one cache file. Is it the case currently?\r\n- the warning should be emitted only once per session if possible (we have a mechanism to do that in transformers, you should ask Lysandre/Sylvain)\r\n\r\n",

"About your points:\r\n- Yes I agree, I just wanted to bring the discussion on this point. Until now fingerprinting hasn't been blocking for user experience. I'll probably remove the enable/disable fingerprinting function to keep things simple from the user's perspective.\r\n- Right now every time a not in-place transform (i.e. map, filter) is applied, a new cache file is created. It is the case even if caching is disabled since disabling it only means that the cache file won't be reloaded. Therefore you're right that it might end up filling the disk with files that won't be reused. I like the idea of keeping only one cache file. Currently all the cache files are kept on disk until the user clears the cache. To be able to keep only one, we need to know if a dataset that has been transformed is still loaded or not. For example\r\n```python\r\n# case 1 - keep both cache files (dataset1 and dataset2)\r\ndataset2 = dataset1.map(...)\r\n# case 2 - keep only the new cache file\r\ndataset1 = dataset1.map(...)\r\n```\r\nIn python it doesn't seem trivial to detect such changes. One thing that we can actually do on the other hand is store the cache files in a temporary directory that is cleared when the session closes. I think that's a good and simple solution for this problem.\r\n- Yes good idea ! I don't like spam either :) ",

"> * To be able to keep only one, we need to know if a dataset that has been transformed is still loaded or not. For example\r\n> \r\n> ```python\r\n> # case 1 - keep both cache files (dataset1 and dataset2)\r\n> dataset2 = dataset1.map(...)\r\n> # case 2 - keep only the new cache file\r\n> dataset1 = dataset1.map(...)\r\n> ```\r\n\r\nI see what you mean. It's a tricky question. One option would be that if caching is deactivated we have a single memory mapped file and have copy act as a copy by reference instead of a copy by value. We will then probably want a `copy()` or `deepcopy()` functionality. Maybe we should think a little bit about it though.",

"- I like the idea of using a temporary directory per session!\r\n- If the default behavior when caching is disabled is to re-use the same file, I'm a little worried about people making mistakes and having to re-download and process from scratch.\r\n- So we already have a keyword argument for `dataset1 = dataset1.map(..., in_place=True)`?",

"> * If the default behavior when caching is disabled is to re-use the same file, I'm a little worried about people making mistakes and having to re-download and process from scratch.\r\n\r\nWe should distinguish between the caching from load_dataset (base dataset cache files) and the caching after dataset transforms such as map or filter (transformed dataset cache files). When disabling caching only the second type (for map and filter) doesn't reload from cache files.\r\nTherefore nothing is re-downloaded. To re-download the dataset entirely the argument `download_mode=\"force_redownload\"` must be used in `load_dataset`.\r\nDo we have to think more about the naming to make things less confusing in your opinion ?\r\n\r\n> * So we already have a keyword argument for `dataset1 = dataset1.map(..., in_place=True)`?\r\n\r\nThere's no such `in_place` parameter in map, what do you mean exactly ?",

"I updated the PR:\r\n- I removed the enable/disable fingerprinting function\r\n- if caching is disabled arrow files are written in a temporary directory that is deleted when session closes\r\n- the warning that is shown when hashing a transform fails is only shown once\r\n- I added the `set_caching_enabled` function to the docs and explained the caching mechanism and its relation with fingerprinting\r\n\r\nI would love to have some feedback :) ",

"> > * So we already have a keyword argument for `dataset1 = dataset1.map(..., in_place=True)`?\r\n> \r\n> There's no such `in_place` parameter in map, what do you mean exactly ?\r\n\r\nSorry, that wasn't clear at all. I was responding to your previous comment about case 1 / case 2. I don't think the behavior should depend on the command, but we could have:\r\n\r\n```\r\n# case 1 - keep both cache files (dataset1 and dataset2)\r\ndataset2 = dataset1.map(...)\r\n# case 2 - keep only the new cache file\r\ndataset1 = dataset1.map(..., in_place=True)\r\n```\r\n\r\nCase 1 returns a new reference using the new cache file, case 2 returns the same reference",

"> Sorry, that wasn't clear at all. I was responding to your previous comment about case 1 / case 2. I don't think the behavior should depend on the command, but we could have:\r\n> \r\n> ```\r\n> # case 1 - keep both cache files (dataset1 and dataset2)\r\n> dataset2 = dataset1.map(...)\r\n> # case 2 - keep only the new cache file\r\n> dataset1 = dataset1.map(..., in_place=True)\r\n> ```\r\n> \r\n> Case 1 returns a new reference using the new cache file, case 2 returns the same reference\r\n\r\nOk I see !\r\n`in_place` is a parameter that is used in general to designate a transform so I would name that differently (maybe `overwrite` or something like that).\r\nNot sure if it's possible to update an already existing arrow file that is memory-mapped, let me check real quick.\r\nAlso it's possible to call `dataset2.cleanup_cache_files()` to delete the other cache files if we create a new one after the transform. Or even to get the cache file with `dataset1.cache_files` and let the user remove them by hand.\r\n\r\nEDIT: updating an arrow file in place is not part of the current API of pyarrow, so we would have to make new files.\r\n"

] | 1,610,033,189,000 | 1,611,077,531,000 | 1,611,077,530,000 | MEMBER | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/1703",

"html_url": "https://github.com/huggingface/datasets/pull/1703",

"diff_url": "https://github.com/huggingface/datasets/pull/1703.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/1703.patch",

"merged_at": 1611077530000

} | This PR adds these features:

- Enable/disable caching

If disabled, the library will no longer reload cached dataset files when applying transforms to the datasets.

It is equivalent to setting `load_from_cache` to `False` in dataset transforms.

```python

from datasets import set_caching_enabled

set_caching_enabled(False)

```

- Allow unpicklable functions in `map`

If an unpicklable function is used, then it's not possible to hash it to update the dataset fingerprint that is used to name cache files. To work around that, a random fingerprint is generated instead and a warning is logged.

```python

logger.warning(

f"Transform {transform} couldn't be hashed properly, a random hash was used instead. "

"Make sure your transforms and parameters are serializable with pickle or dill for the dataset fingerprinting and caching to work. "

"If you reuse this transform, the caching mechanism will consider it to be different from the previous calls and recompute everything."

)

```
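For illustration, here is a minimal standalone sketch of the fallback described above. It is not the library's implementation: `hash_transform` is a hypothetical helper, it uses the standard `pickle` module rather than dill, and the choice of SHA-256 and of a 16-character fingerprint is an assumption.

```python
import hashlib
import logging
import pickle
import secrets

logger = logging.getLogger(__name__)
_warned = False  # emit the warning only once per session


def hash_transform(transform):
    """Return (fingerprint, is_deterministic) for a transform (hypothetical helper)."""
    global _warned
    try:
        payload = pickle.dumps(transform)
    except (pickle.PicklingError, AttributeError, TypeError):
        # The transform can't be serialized, so fall back to a random fingerprint.
        if not _warned:
            logger.warning(
                "Transform %r couldn't be hashed properly, a random hash was used instead.",
                transform,
            )
            _warned = True
        return secrets.token_hex(8), False
    # Serializable transform: the same bytes always give the same fingerprint.
    return hashlib.sha256(payload).hexdigest()[:16], True
```

With standard `pickle`, a lambda is a convenient example of an unpicklable transform: each call to `hash_transform(lambda x: x)` yields a different random fingerprint, so nothing can be reloaded from cache for it.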

and also (open to discussion, EDIT: actually NOT included):

- Enable/disable fingerprinting

Fingerprinting makes it possible to have one deterministic fingerprint per dataset state.

A dataset fingerprint is updated after each transform.

Re-running the same transforms on a dataset in a different session results in the same fingerprint.

Disabling the fingerprinting mechanism makes all the fingerprints random.

Since the caching mechanism uses fingerprints to name the cache files, the cache file names will then be different on every run.

Therefore disabling fingerprinting will prevent the caching mechanism from reloading dataset files that have already been computed.

Disabling fingerprinting may speed up the lib for users that don't care about this feature and don't want to use caching.

```python

from datasets import set_fingerprinting_enabled

set_fingerprinting_enabled(False)

```
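To make the "one deterministic fingerprint per dataset state" idea concrete, here is a small sketch under stated assumptions. `update_fingerprint` and `cache_file_name` are hypothetical names, and hashing a JSON payload with SHA-256 is an illustrative choice, not necessarily what the library does.

```python
import hashlib
import json


def update_fingerprint(fingerprint, transform_name, transform_args):
    """Derive the next fingerprint from the previous one and the transform (hypothetical)."""
    payload = json.dumps(
        {"previous": fingerprint, "transform": transform_name, "args": transform_args},
        sort_keys=True,  # make the result independent of argument order
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()[:16]


def cache_file_name(fingerprint):
    # Assumed naming scheme, for illustration only.
    return f"cache-{fingerprint}.arrow"


# Chaining the same transforms from the same starting state always
# ends on the same fingerprint, hence on the same cache file name.
state = "initial-fingerprint"
state = update_fingerprint(state, "map", {"batched": True})
state = update_fingerprint(state, "filter", {"num_proc": 1})
print(cache_file_name(state))
```

Replacing the hash with a random value at any step would change every later file name, which is why random fingerprints prevent reloading from cache.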

Other details:

- I renamed the `fingerprint` decorator to `fingerprint_transform` since the name was clearly not explicit. This decorator is used on dataset transform functions to allow them to update fingerprints.

- I added some `ignore_kwargs` when decorating transforms with `fingerprint_transform`, so that the fingerprint update is not sensitive to kwargs like `load_from_cache` or `cache_file_name`.
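
A rough sketch of how ignoring some kwargs could work is shown here. The decorator `fingerprint_transform_sketch` and the function `fake_map` are hypothetical stand-ins written for this example; the real `fingerprint_transform` decorator may have a different signature and behavior.

```python
import functools
import hashlib
import json


def fingerprint_transform_sketch(ignore_kwargs=()):
    """Hypothetical decorator: hash the call's kwargs, skipping the ignored ones."""

    def decorator(func):
        @functools.wraps(func)
        def wrapper(fingerprint, **kwargs):
            # Drop the kwargs that must not influence the fingerprint.
            relevant = {k: v for k, v in kwargs.items() if k not in ignore_kwargs}
            payload = json.dumps([fingerprint, func.__name__, relevant], sort_keys=True)
            func(fingerprint, **kwargs)  # run the (stand-in) transform itself
            return hashlib.sha256(payload.encode("utf-8")).hexdigest()[:16]

        return wrapper

    return decorator


@fingerprint_transform_sketch(ignore_kwargs=("load_from_cache", "cache_file_name"))
def fake_map(fingerprint, **kwargs):
    """Stand-in for a dataset transform; it does nothing in this sketch."""
```

In this sketch, `fake_map("f0", batched=True, load_from_cache=True)` and `fake_map("f0", batched=True, load_from_cache=False)` return the same fingerprint, while changing `batched` changes it.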

Todo: tests for set_fingerprinting_enabled + documentation for all the above features | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/1703/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/1703/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/1702 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/1702/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/1702/comments | https://api.github.com/repos/huggingface/datasets/issues/1702/events | https://github.com/huggingface/datasets/pull/1702 | 781,383,277 | MDExOlB1bGxSZXF1ZXN0NTUxMTE2NDc0 | 1,702 | Fix importlib metadata import in py38 | {

"login": "lhoestq",

"id": 42851186,

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/lhoestq",

"html_url": "https://github.com/lhoestq",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [] | 1,610,032,230,000 | 1,610,102,835,000 | 1,610,102,835,000 | MEMBER | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/1702",

"html_url": "https://github.com/huggingface/datasets/pull/1702",

"diff_url": "https://github.com/huggingface/datasets/pull/1702.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/1702.patch",

"merged_at": 1610102834000

} | In Python 3.8 there's no need to install `importlib_metadata` since it already exists as `importlib.metadata` in the standard lib. | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/1702/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/1702/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/1701 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/1701/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/1701/comments | https://api.github.com/repos/huggingface/datasets/issues/1701/events | https://github.com/huggingface/datasets/issues/1701 | 781,345,717 | MDU6SXNzdWU3ODEzNDU3MTc= | 1,701 | Some datasets miss dataset_infos.json or dummy_data.zip | {

"login": "madlag",

"id": 272253,

"node_id": "MDQ6VXNlcjI3MjI1Mw==",

"avatar_url": "https://avatars.githubusercontent.com/u/272253?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/madlag",

"html_url": "https://github.com/madlag",

"followers_url": "https://api.github.com/users/madlag/followers",

"following_url": "https://api.github.com/users/madlag/following{/other_user}",

"gists_url": "https://api.github.com/users/madlag/gists{/gist_id}",

"starred_url": "https://api.github.com/users/madlag/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/madlag/subscriptions",

"organizations_url": "https://api.github.com/users/madlag/orgs",

"repos_url": "https://api.github.com/users/madlag/repos",

"events_url": "https://api.github.com/users/madlag/events{/privacy}",

"received_events_url": "https://api.github.com/users/madlag/received_events",

"type": "User",

"site_admin": false

} | [] | open | false | null | [] | null | [

"Thanks for reporting.\r\nWe should indeed add all the missing dummy_data.zip and also the dataset_infos.json at least for lm1b, reclor and wikihow.\r\n\r\nFor c4 I haven't tested the script and I think we'll require some optimizations regarding beam datasets before processing it.\r\n"

] | 1,610,029,033,000 | 1,610,458,846,000 | null | CONTRIBUTOR | null | null | null | While working on a dataset README generation script at https://github.com/madlag/datasets_readme_generator, I noticed that some datasets are missing a dataset_infos.json:

```

c4

lm1b

reclor

wikihow

```

And some do not have a dummy_data.zip:

```

kor_nli

math_dataset

mlqa

ms_marco

newsgroup

qa4mre

qangaroo

reddit_tifu

super_glue

trivia_qa

web_of_science

wmt14

wmt15

wmt16

wmt17

wmt18

wmt19

xtreme

```

But it seems that some of the latter do have a "dummy" directory.

| {

"url": "https://api.github.com/repos/huggingface/datasets/issues/1701/reactions",

"total_count": 1,

"+1": 1,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/1701/timeline | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/1700 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/1700/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/1700/comments | https://api.github.com/repos/huggingface/datasets/issues/1700/events | https://github.com/huggingface/datasets/pull/1700 | 781,333,589 | MDExOlB1bGxSZXF1ZXN0NTUxMDc1NTg2 | 1,700 | Update Curiosity dialogs DatasetCard | {

"login": "vineeths96",

"id": 50873201,

"node_id": "MDQ6VXNlcjUwODczMjAx",

"avatar_url": "https://avatars.githubusercontent.com/u/50873201?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/vineeths96",

"html_url": "https://github.com/vineeths96",

"followers_url": "https://api.github.com/users/vineeths96/followers",

"following_url": "https://api.github.com/users/vineeths96/following{/other_user}",

"gists_url": "https://api.github.com/users/vineeths96/gists{/gist_id}",

"starred_url": "https://api.github.com/users/vineeths96/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/vineeths96/subscriptions",

"organizations_url": "https://api.github.com/users/vineeths96/orgs",

"repos_url": "https://api.github.com/users/vineeths96/repos",

"events_url": "https://api.github.com/users/vineeths96/events{/privacy}",

"received_events_url": "https://api.github.com/users/vineeths96/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [] | 1,610,027,967,000 | 1,610,477,492,000 | 1,610,477,492,000 | CONTRIBUTOR | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/1700",

"html_url": "https://github.com/huggingface/datasets/pull/1700",

"diff_url": "https://github.com/huggingface/datasets/pull/1700.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/1700.patch",

"merged_at": 1610477492000

} | Update Curiosity dialogs DatasetCard

There are some entries in the data fields section yet to be filled. There is little information regarding those fields. | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/1700/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/1700/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/1699 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/1699/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/1699/comments | https://api.github.com/repos/huggingface/datasets/issues/1699/events | https://github.com/huggingface/datasets/pull/1699 | 781,271,558 | MDExOlB1bGxSZXF1ZXN0NTUxMDIzODE5 | 1,699 | Update DBRD dataset card and download URL | {

"login": "benjaminvdb",

"id": 8875786,

"node_id": "MDQ6VXNlcjg4NzU3ODY=",

"avatar_url": "https://avatars.githubusercontent.com/u/8875786?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/benjaminvdb",

"html_url": "https://github.com/benjaminvdb",

"followers_url": "https://api.github.com/users/benjaminvdb/followers",

"following_url": "https://api.github.com/users/benjaminvdb/following{/other_user}",

"gists_url": "https://api.github.com/users/benjaminvdb/gists{/gist_id}",

"starred_url": "https://api.github.com/users/benjaminvdb/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/benjaminvdb/subscriptions",

"organizations_url": "https://api.github.com/users/benjaminvdb/orgs",

"repos_url": "https://api.github.com/users/benjaminvdb/repos",

"events_url": "https://api.github.com/users/benjaminvdb/events{/privacy}",

"received_events_url": "https://api.github.com/users/benjaminvdb/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"not sure why the CI was not triggered though"

] | 1,610,021,803,000 | 1,610,026,899,000 | 1,610,026,859,000 | CONTRIBUTOR | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/1699",

"html_url": "https://github.com/huggingface/datasets/pull/1699",

"diff_url": "https://github.com/huggingface/datasets/pull/1699.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/1699.patch",

"merged_at": 1610026859000

} | I added the Dutch Book Review Dataset (DBRD) during the recent sprint. This pull request makes two minor changes:

1. I'm changing the download URL from Google Drive to the dataset's GitHub release package. This is now possible because of PR #1316.

2. I've updated the dataset card.

Cheers! 😄 | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/1699/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/1699/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/1698 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/1698/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/1698/comments | https://api.github.com/repos/huggingface/datasets/issues/1698/events | https://github.com/huggingface/datasets/pull/1698 | 781,152,561 | MDExOlB1bGxSZXF1ZXN0NTUwOTI0ODQ3 | 1,698 | Update Coached Conv Pref DatasetCard | {

"login": "vineeths96",

"id": 50873201,

"node_id": "MDQ6VXNlcjUwODczMjAx",

"avatar_url": "https://avatars.githubusercontent.com/u/50873201?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/vineeths96",

"html_url": "https://github.com/vineeths96",

"followers_url": "https://api.github.com/users/vineeths96/followers",

"following_url": "https://api.github.com/users/vineeths96/following{/other_user}",

"gists_url": "https://api.github.com/users/vineeths96/gists{/gist_id}",

"starred_url": "https://api.github.com/users/vineeths96/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/vineeths96/subscriptions",

"organizations_url": "https://api.github.com/users/vineeths96/orgs",

"repos_url": "https://api.github.com/users/vineeths96/repos",

"events_url": "https://api.github.com/users/vineeths96/events{/privacy}",

"received_events_url": "https://api.github.com/users/vineeths96/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"Really cool!\r\n\r\nCan you add some task tags for `dialogue-modeling` (under `sequence-modeling`) and `parsing` (under `structured-prediction`)?"

] | 1,610,010,436,000 | 1,610,125,473,000 | 1,610,125,472,000 | CONTRIBUTOR | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/1698",

"html_url": "https://github.com/huggingface/datasets/pull/1698",

"diff_url": "https://github.com/huggingface/datasets/pull/1698.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/1698.patch",

"merged_at": 1610125472000

} | Update Coached Conversation Preference DatasetCard | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/1698/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/1698/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/1697 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/1697/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/1697/comments | https://api.github.com/repos/huggingface/datasets/issues/1697/events | https://github.com/huggingface/datasets/pull/1697 | 781,126,579 | MDExOlB1bGxSZXF1ZXN0NTUwOTAzNzI5 | 1,697 | Update DialogRE DatasetCard | {

"login": "vineeths96",

"id": 50873201,

"node_id": "MDQ6VXNlcjUwODczMjAx",

"avatar_url": "https://avatars.githubusercontent.com/u/50873201?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/vineeths96",

"html_url": "https://github.com/vineeths96",

"followers_url": "https://api.github.com/users/vineeths96/followers",

"following_url": "https://api.github.com/users/vineeths96/following{/other_user}",

"gists_url": "https://api.github.com/users/vineeths96/gists{/gist_id}",

"starred_url": "https://api.github.com/users/vineeths96/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/vineeths96/subscriptions",

"organizations_url": "https://api.github.com/users/vineeths96/orgs",

"repos_url": "https://api.github.com/users/vineeths96/repos",

"events_url": "https://api.github.com/users/vineeths96/events{/privacy}",

"received_events_url": "https://api.github.com/users/vineeths96/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"Same as #1698, can you add a task tag for dialogue-modeling (under sequence-modeling) :) ?"

] | 1,610,007,753,000 | 1,610,026,468,000 | 1,610,026,468,000 | CONTRIBUTOR | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/1697",

"html_url": "https://github.com/huggingface/datasets/pull/1697",

"diff_url": "https://github.com/huggingface/datasets/pull/1697.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/1697.patch",

"merged_at": 1610026468000

} | Update the information in the dataset card for the Dialog RE dataset. | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/1697/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/1697/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/1696 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/1696/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/1696/comments | https://api.github.com/repos/huggingface/datasets/issues/1696/events | https://github.com/huggingface/datasets/issues/1696 | 781,096,918 | MDU6SXNzdWU3ODEwOTY5MTg= | 1,696 | Unable to install datasets | {

"login": "glee2429",

"id": 12635475,

"node_id": "MDQ6VXNlcjEyNjM1NDc1",

"avatar_url": "https://avatars.githubusercontent.com/u/12635475?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/glee2429",

"html_url": "https://github.com/glee2429",

"followers_url": "https://api.github.com/users/glee2429/followers",

"following_url": "https://api.github.com/users/glee2429/following{/other_user}",

"gists_url": "https://api.github.com/users/glee2429/gists{/gist_id}",

"starred_url": "https://api.github.com/users/glee2429/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/glee2429/subscriptions",

"organizations_url": "https://api.github.com/users/glee2429/orgs",

"repos_url": "https://api.github.com/users/glee2429/repos",

"events_url": "https://api.github.com/users/glee2429/events{/privacy}",

"received_events_url": "https://api.github.com/users/glee2429/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"Maybe try to create a virtual env with python 3.8 or 3.7",

"Thanks, @thomwolf! I fixed the issue by downgrading python to 3.7. ",

"Damn sorry",

"Damn sorry"

] | 1,610,004,277,000 | 1,610,065,985,000 | 1,610,057,165,000 | NONE | null | null | null | **Edit**

I believe there's a bug with the package when you're installing it with Python 3.9. I recommend sticking with previous versions. Thanks, @thomwolf for the insight!

**Short description**

I followed the instructions for installing datasets (https://huggingface.co/docs/datasets/installation.html). However, when I tried to install datasets using `pip install datasets`, I got a massive error message after getting stuck at "Installing build dependencies..."

I was wondering if this problem can be fixed by creating a virtual environment, but it didn't help. Can anyone offer some advice on how to fix this issue?

Here's the error message:

`(env) Gas-MacBook-Pro:Downloads destiny$ pip install datasets

Collecting datasets

Using cached datasets-1.2.0-py3-none-any.whl (159 kB)

Collecting numpy>=1.17

Using cached numpy-1.19.5-cp39-cp39-macosx_10_9_x86_64.whl (15.6 MB)

Collecting pyarrow>=0.17.1

Using cached pyarrow-2.0.0.tar.gz (58.9 MB)

....

_configtest.c:9:5: warning: incompatible redeclaration of library function 'ceilf' [-Wincompatible-library-redeclaration]

int ceilf (void);

^

_configtest.c:9:5: note: 'ceilf' is a builtin with type 'float (float)'

_configtest.c:10:5: warning: incompatible redeclaration of library function 'rintf' [-Wincompatible-library-redeclaration]

int rintf (void);

^

_configtest.c:10:5: note: 'rintf' is a builtin with type 'float (float)'

_configtest.c:11:5: warning: incompatible redeclaration of library function 'truncf' [-Wincompatible-library-redeclaration]

int truncf (void);

^

_configtest.c:11:5: note: 'truncf' is a builtin with type 'float (float)'

_configtest.c:12:5: warning: incompatible redeclaration of library function 'sqrtf' [-Wincompatible-library-redeclaration]

int sqrtf (void);

^

_configtest.c:12:5: note: 'sqrtf' is a builtin with type 'float (float)'

_configtest.c:13:5: warning: incompatible redeclaration of library function 'log10f' [-Wincompatible-library-redeclaration]

int log10f (void);

^

_configtest.c:13:5: note: 'log10f' is a builtin with type 'float (float)'

_configtest.c:14:5: warning: incompatible redeclaration of library function 'logf' [-Wincompatible-library-redeclaration]

int logf (void);

^

_configtest.c:14:5: note: 'logf' is a builtin with type 'float (float)'

_configtest.c:15:5: warning: incompatible redeclaration of library function 'log1pf' [-Wincompatible-library-redeclaration]

int log1pf (void);

^

_configtest.c:15:5: note: 'log1pf' is a builtin with type 'float (float)'

_configtest.c:16:5: warning: incompatible redeclaration of library function 'expf' [-Wincompatible-library-redeclaration]

int expf (void);

^

_configtest.c:16:5: note: 'expf' is a builtin with type 'float (float)'

_configtest.c:17:5: warning: incompatible redeclaration of library function 'expm1f' [-Wincompatible-library-redeclaration]

int expm1f (void);

^

_configtest.c:17:5: note: 'expm1f' is a builtin with type 'float (float)'

_configtest.c:18:5: warning: incompatible redeclaration of library function 'asinf' [-Wincompatible-library-redeclaration]

int asinf (void);

^

_configtest.c:18:5: note: 'asinf' is a builtin with type 'float (float)'

_configtest.c:19:5: warning: incompatible redeclaration of library function 'acosf' [-Wincompatible-library-redeclaration]

int acosf (void);

^

_configtest.c:19:5: note: 'acosf' is a builtin with type 'float (float)'

_configtest.c:20:5: warning: incompatible redeclaration of library function 'atanf' [-Wincompatible-library-redeclaration]

int atanf (void);

^

_configtest.c:20:5: note: 'atanf' is a builtin with type 'float (float)'

_configtest.c:21:5: warning: incompatible redeclaration of library function 'asinhf' [-Wincompatible-library-redeclaration]

int asinhf (void);

^

_configtest.c:21:5: note: 'asinhf' is a builtin with type 'float (float)'

_configtest.c:22:5: warning: incompatible redeclaration of library function 'acoshf' [-Wincompatible-library-redeclaration]

int acoshf (void);

^

_configtest.c:22:5: note: 'acoshf' is a builtin with type 'float (float)'

_configtest.c:23:5: warning: incompatible redeclaration of library function 'atanhf' [-Wincompatible-library-redeclaration]

int atanhf (void);

^

_configtest.c:23:5: note: 'atanhf' is a builtin with type 'float (float)'

_configtest.c:24:5: warning: incompatible redeclaration of library function 'hypotf' [-Wincompatible-library-redeclaration]

int hypotf (void);

^

_configtest.c:24:5: note: 'hypotf' is a builtin with type 'float (float, float)'

_configtest.c:25:5: warning: incompatible redeclaration of library function 'atan2f' [-Wincompatible-library-redeclaration]

int atan2f (void);

^

_configtest.c:25:5: note: 'atan2f' is a builtin with type 'float (float, float)'

_configtest.c:26:5: warning: incompatible redeclaration of library function 'powf' [-Wincompatible-library-redeclaration]

int powf (void);

^

_configtest.c:26:5: note: 'powf' is a builtin with type 'float (float, float)'

_configtest.c:27:5: warning: incompatible redeclaration of library function 'fmodf' [-Wincompatible-library-redeclaration]

int fmodf (void);

^

_configtest.c:27:5: note: 'fmodf' is a builtin with type 'float (float, float)'

_configtest.c:28:5: warning: incompatible redeclaration of library function 'modff' [-Wincompatible-library-redeclaration]

int modff (void);

^

_configtest.c:28:5: note: 'modff' is a builtin with type 'float (float, float *)'

_configtest.c:29:5: warning: incompatible redeclaration of library function 'frexpf' [-Wincompatible-library-redeclaration]

int frexpf (void);

^

_configtest.c:29:5: note: 'frexpf' is a builtin with type 'float (float, int *)'

_configtest.c:30:5: warning: incompatible redeclaration of library function 'ldexpf' [-Wincompatible-library-redeclaration]

int ldexpf (void);

^

_configtest.c:30:5: note: 'ldexpf' is a builtin with type 'float (float, int)'

_configtest.c:31:5: warning: incompatible redeclaration of library function 'exp2f' [-Wincompatible-library-redeclaration]

int exp2f (void);

^

_configtest.c:31:5: note: 'exp2f' is a builtin with type 'float (float)'

_configtest.c:32:5: warning: incompatible redeclaration of library function 'log2f' [-Wincompatible-library-redeclaration]

int log2f (void);

^

_configtest.c:32:5: note: 'log2f' is a builtin with type 'float (float)'

_configtest.c:33:5: warning: incompatible redeclaration of library function 'copysignf' [-Wincompatible-library-redeclaration]

int copysignf (void);

^

_configtest.c:33:5: note: 'copysignf' is a builtin with type 'float (float, float)'

_configtest.c:34:5: warning: incompatible redeclaration of library function 'nextafterf' [-Wincompatible-library-redeclaration]

int nextafterf (void);

^

_configtest.c:34:5: note: 'nextafterf' is a builtin with type 'float (float, float)'

_configtest.c:35:5: warning: incompatible redeclaration of library function 'cbrtf' [-Wincompatible-library-redeclaration]

int cbrtf (void);

^

_configtest.c:35:5: note: 'cbrtf' is a builtin with type 'float (float)'

35 warnings generated.

clang _configtest.o -o _configtest

success!

removing: _configtest.c _configtest.o _configtest.o.d _configtest

C compiler: clang -Wno-unused-result -Wsign-compare -Wunreachable-code -fno-common -dynamic -DNDEBUG -g -fwrapv -O3 -Wall -isysroot /Library/Developer/CommandLineTools/SDKs/MacOSX10.15.sdk -I/Library/Developer/CommandLineTools/SDKs/MacOSX10.15.sdk/usr/include -I/Library/Developer/CommandLineTools/SDKs/MacOSX10.15.sdk/System/Library/Frameworks/Tk.framework/Versions/8.5/Headers

compile options: '-Inumpy/core/src/common -Inumpy/core/src -Inumpy/core -Inumpy/core/src/npymath -Inumpy/core/src/multiarray -Inumpy/core/src/umath -Inumpy/core/src/npysort -I/usr/local/include -I/usr/local/opt/openssl@1.1/include -I/usr/local/opt/sqlite/include -I/Users/destiny/Downloads/env/include -I/usr/local/Cellar/python@3.9/3.9.0_1/Frameworks/Python.framework/Versions/3.9/include/python3.9 -c'

clang: _configtest.c

_configtest.c:1:5: warning: incompatible redeclaration of library function 'sinl' [-Wincompatible-library-redeclaration]

int sinl (void);

^

_configtest.c:1:5: note: 'sinl' is a builtin with type 'long double (long double)'

_configtest.c:2:5: warning: incompatible redeclaration of library function 'cosl' [-Wincompatible-library-redeclaration]

int cosl (void);

^

_configtest.c:2:5: note: 'cosl' is a builtin with type 'long double (long double)'

_configtest.c:3:5: warning: incompatible redeclaration of library function 'tanl' [-Wincompatible-library-redeclaration]

int tanl (void);

^

_configtest.c:3:5: note: 'tanl' is a builtin with type 'long double (long double)'

_configtest.c:4:5: warning: incompatible redeclaration of library function 'sinhl' [-Wincompatible-library-redeclaration]

int sinhl (void);

^

_configtest.c:4:5: note: 'sinhl' is a builtin with type 'long double (long double)'

_configtest.c:5:5: warning: incompatible redeclaration of library function 'coshl' [-Wincompatible-library-redeclaration]

int coshl (void);

^

_configtest.c:5:5: note: 'coshl' is a builtin with type 'long double (long double)'

_configtest.c:6:5: warning: incompatible redeclaration of library function 'tanhl' [-Wincompatible-library-redeclaration]

int tanhl (void);

^

_configtest.c:6:5: note: 'tanhl' is a builtin with type 'long double (long double)'

_configtest.c:7:5: warning: incompatible redeclaration of library function 'fabsl' [-Wincompatible-library-redeclaration]

int fabsl (void);

^

_configtest.c:7:5: note: 'fabsl' is a builtin with type 'long double (long double)'

_configtest.c:8:5: warning: incompatible redeclaration of library function 'floorl' [-Wincompatible-library-redeclaration]

int floorl (void);

^

_configtest.c:8:5: note: 'floorl' is a builtin with type 'long double (long double)'

_configtest.c:9:5: warning: incompatible redeclaration of library function 'ceill' [-Wincompatible-library-redeclaration]

int ceill (void);

^

_configtest.c:9:5: note: 'ceill' is a builtin with type 'long double (long double)'

_configtest.c:10:5: warning: incompatible redeclaration of library function 'rintl' [-Wincompatible-library-redeclaration]

int rintl (void);

^

_configtest.c:10:5: note: 'rintl' is a builtin with type 'long double (long double)'

_configtest.c:11:5: warning: incompatible redeclaration of library function 'truncl' [-Wincompatible-library-redeclaration]

int truncl (void);

^

_configtest.c:11:5: note: 'truncl' is a builtin with type 'long double (long double)'

_configtest.c:12:5: warning: incompatible redeclaration of library function 'sqrtl' [-Wincompatible-library-redeclaration]

int sqrtl (void);

^

_configtest.c:12:5: note: 'sqrtl' is a builtin with type 'long double (long double)'

_configtest.c:13:5: warning: incompatible redeclaration of library function 'log10l' [-Wincompatible-library-redeclaration]

int log10l (void);

^

_configtest.c:13:5: note: 'log10l' is a builtin with type 'long double (long double)'

_configtest.c:14:5: warning: incompatible redeclaration of library function 'logl' [-Wincompatible-library-redeclaration]

int logl (void);

^

_configtest.c:14:5: note: 'logl' is a builtin with type 'long double (long double)'

_configtest.c:15:5: warning: incompatible redeclaration of library function 'log1pl' [-Wincompatible-library-redeclaration]

int log1pl (void);

^

_configtest.c:15:5: note: 'log1pl' is a builtin with type 'long double (long double)'

_configtest.c:16:5: warning: incompatible redeclaration of library function 'expl' [-Wincompatible-library-redeclaration]

int expl (void);

^

_configtest.c:16:5: note: 'expl' is a builtin with type 'long double (long double)'

_configtest.c:17:5: warning: incompatible redeclaration of library function 'expm1l' [-Wincompatible-library-redeclaration]

int expm1l (void);

^

_configtest.c:17:5: note: 'expm1l' is a builtin with type 'long double (long double)'

_configtest.c:18:5: warning: incompatible redeclaration of library function 'asinl' [-Wincompatible-library-redeclaration]

int asinl (void);

^

_configtest.c:18:5: note: 'asinl' is a builtin with type 'long double (long double)'

_configtest.c:19:5: warning: incompatible redeclaration of library function 'acosl' [-Wincompatible-library-redeclaration]

int acosl (void);

^

_configtest.c:19:5: note: 'acosl' is a builtin with type 'long double (long double)'

_configtest.c:20:5: warning: incompatible redeclaration of library function 'atanl' [-Wincompatible-library-redeclaration]

int atanl (void);

^

_configtest.c:20:5: note: 'atanl' is a builtin with type 'long double (long double)'

_configtest.c:21:5: warning: incompatible redeclaration of library function 'asinhl' [-Wincompatible-library-redeclaration]

int asinhl (void);

^

_configtest.c:21:5: note: 'asinhl' is a builtin with type 'long double (long double)'

_configtest.c:22:5: warning: incompatible redeclaration of library function 'acoshl' [-Wincompatible-library-redeclaration]

int acoshl (void);

^

_configtest.c:22:5: note: 'acoshl' is a builtin with type 'long double (long double)'

_configtest.c:23:5: warning: incompatible redeclaration of library function 'atanhl' [-Wincompatible-library-redeclaration]

int atanhl (void);

^

_configtest.c:23:5: note: 'atanhl' is a builtin with type 'long double (long double)'

_configtest.c:24:5: warning: incompatible redeclaration of library function 'hypotl' [-Wincompatible-library-redeclaration]

int hypotl (void);

^

_configtest.c:24:5: note: 'hypotl' is a builtin with type 'long double (long double, long double)'

_configtest.c:25:5: warning: incompatible redeclaration of library function 'atan2l' [-Wincompatible-library-redeclaration]

int atan2l (void);

^

_configtest.c:25:5: note: 'atan2l' is a builtin with type 'long double (long double, long double)'

_configtest.c:26:5: warning: incompatible redeclaration of library function 'powl' [-Wincompatible-library-redeclaration]

int powl (void);

^

_configtest.c:26:5: note: 'powl' is a builtin with type 'long double (long double, long double)'

_configtest.c:27:5: warning: incompatible redeclaration of library function 'fmodl' [-Wincompatible-library-redeclaration]

int fmodl (void);

^

_configtest.c:27:5: note: 'fmodl' is a builtin with type 'long double (long double, long double)'

_configtest.c:28:5: warning: incompatible redeclaration of library function 'modfl' [-Wincompatible-library-redeclaration]

int modfl (void);

^

_configtest.c:28:5: note: 'modfl' is a builtin with type 'long double (long double, long double *)'

_configtest.c:29:5: warning: incompatible redeclaration of library function 'frexpl' [-Wincompatible-library-redeclaration]

int frexpl (void);

^

_configtest.c:29:5: note: 'frexpl' is a builtin with type 'long double (long double, int *)'

_configtest.c:30:5: warning: incompatible redeclaration of library function 'ldexpl' [-Wincompatible-library-redeclaration]

int ldexpl (void);

^

_configtest.c:30:5: note: 'ldexpl' is a builtin with type 'long double (long double, int)'

_configtest.c:31:5: warning: incompatible redeclaration of library function 'exp2l' [-Wincompatible-library-redeclaration]

int exp2l (void);

^

_configtest.c:31:5: note: 'exp2l' is a builtin with type 'long double (long double)'

_configtest.c:32:5: warning: incompatible redeclaration of library function 'log2l' [-Wincompatible-library-redeclaration]

int log2l (void);

^

_configtest.c:32:5: note: 'log2l' is a builtin with type 'long double (long double)'

_configtest.c:33:5: warning: incompatible redeclaration of library function 'copysignl' [-Wincompatible-library-redeclaration]

int copysignl (void);

^

_configtest.c:33:5: note: 'copysignl' is a builtin with type 'long double (long double, long double)'

_configtest.c:34:5: warning: incompatible redeclaration of library function 'nextafterl' [-Wincompatible-library-redeclaration]

int nextafterl (void);

^

_configtest.c:34:5: note: 'nextafterl' is a builtin with type 'long double (long double, long double)'

_configtest.c:35:5: warning: incompatible redeclaration of library function 'cbrtl' [-Wincompatible-library-redeclaration]

int cbrtl (void);

^

_configtest.c:35:5: note: 'cbrtl' is a builtin with type 'long double (long double)'

35 warnings generated.

clang _configtest.o -o _configtest

success!

removing: _configtest.c _configtest.o _configtest.o.d _configtest

C compiler: clang -Wno-unused-result -Wsign-compare -Wunreachable-code -fno-common -dynamic -DNDEBUG -g -fwrapv -O3 -Wall -isysroot /Library/Developer/CommandLineTools/SDKs/MacOSX10.15.sdk -I/Library/Developer/CommandLineTools/SDKs/MacOSX10.15.sdk/usr/include -I/Library/Developer/CommandLineTools/SDKs/MacOSX10.15.sdk/System/Library/Frameworks/Tk.framework/Versions/8.5/Headers

compile options: '-Inumpy/core/src/common -Inumpy/core/src -Inumpy/core -Inumpy/core/src/npymath -Inumpy/core/src/multiarray -Inumpy/core/src/umath -Inumpy/core/src/npysort -I/usr/local/include -I/usr/local/opt/openssl@1.1/include -I/usr/local/opt/sqlite/include -I/Users/destiny/Downloads/env/include -I/usr/local/Cellar/python@3.9/3.9.0_1/Frameworks/Python.framework/Versions/3.9/include/python3.9 -c'

clang: _configtest.c

success!

removing: _configtest.c _configtest.o _configtest.o.d

C compiler: clang -Wno-unused-result -Wsign-compare -Wunreachable-code -fno-common -dynamic -DNDEBUG -g -fwrapv -O3 -Wall -isysroot /Library/Developer/CommandLineTools/SDKs/MacOSX10.15.sdk -I/Library/Developer/CommandLineTools/SDKs/MacOSX10.15.sdk/usr/include -I/Library/Developer/CommandLineTools/SDKs/MacOSX10.15.sdk/System/Library/Frameworks/Tk.framework/Versions/8.5/Headers

compile options: '-Inumpy/core/src/common -Inumpy/core/src -Inumpy/core -Inumpy/core/src/npymath -Inumpy/core/src/multiarray -Inumpy/core/src/umath -Inumpy/core/src/npysort -I/usr/local/include -I/usr/local/opt/openssl@1.1/include -I/usr/local/opt/sqlite/include -I/Users/destiny/Downloads/env/include -I/usr/local/Cellar/python@3.9/3.9.0_1/Frameworks/Python.framework/Versions/3.9/include/python3.9 -c'

clang: _configtest.c

success!

removing: _configtest.c _configtest.o _configtest.o.d

C compiler: clang -Wno-unused-result -Wsign-compare -Wunreachable-code -fno-common -dynamic -DNDEBUG -g -fwrapv -O3 -Wall -isysroot /Library/Developer/CommandLineTools/SDKs/MacOSX10.15.sdk -I/Library/Developer/CommandLineTools/SDKs/MacOSX10.15.sdk/usr/include -I/Library/Developer/CommandLineTools/SDKs/MacOSX10.15.sdk/System/Library/Frameworks/Tk.framework/Versions/8.5/Headers

compile options: '-Inumpy/core/src/common -Inumpy/core/src -Inumpy/core -Inumpy/core/src/npymath -Inumpy/core/src/multiarray -Inumpy/core/src/umath -Inumpy/core/src/npysort -I/usr/local/include -I/usr/local/opt/openssl@1.1/include -I/usr/local/opt/sqlite/include -I/Users/destiny/Downloads/env/include -I/usr/local/Cellar/python@3.9/3.9.0_1/Frameworks/Python.framework/Versions/3.9/include/python3.9 -c'

clang: _configtest.c

success!

removing: _configtest.c _configtest.o _configtest.o.d

C compiler: clang -Wno-unused-result -Wsign-compare -Wunreachable-code -fno-common -dynamic -DNDEBUG -g -fwrapv -O3 -Wall -isysroot /Library/Developer/CommandLineTools/SDKs/MacOSX10.15.sdk -I/Library/Developer/CommandLineTools/SDKs/MacOSX10.15.sdk/usr/include -I/Library/Developer/CommandLineTools/SDKs/MacOSX10.15.sdk/System/Library/Frameworks/Tk.framework/Versions/8.5/Headers

compile options: '-Inumpy/core/src/common -Inumpy/core/src -Inumpy/core -Inumpy/core/src/npymath -Inumpy/core/src/multiarray -Inumpy/core/src/umath -Inumpy/core/src/npysort -I/usr/local/include -I/usr/local/opt/openssl@1.1/include -I/usr/local/opt/sqlite/include -I/Users/destiny/Downloads/env/include -I/usr/local/Cellar/python@3.9/3.9.0_1/Frameworks/Python.framework/Versions/3.9/include/python3.9 -c'

clang: _configtest.c

success!

removing: _configtest.c _configtest.o _configtest.o.d

C compiler: clang -Wno-unused-result -Wsign-compare -Wunreachable-code -fno-common -dynamic -DNDEBUG -g -fwrapv -O3 -Wall -isysroot /Library/Developer/CommandLineTools/SDKs/MacOSX10.15.sdk -I/Library/Developer/CommandLineTools/SDKs/MacOSX10.15.sdk/usr/include -I/Library/Developer/CommandLineTools/SDKs/MacOSX10.15.sdk/System/Library/Frameworks/Tk.framework/Versions/8.5/Headers

compile options: '-Inumpy/core/src/common -Inumpy/core/src -Inumpy/core -Inumpy/core/src/npymath -Inumpy/core/src/multiarray -Inumpy/core/src/umath -Inumpy/core/src/npysort -I/usr/local/include -I/usr/local/opt/openssl@1.1/include -I/usr/local/opt/sqlite/include -I/Users/destiny/Downloads/env/include -I/usr/local/Cellar/python@3.9/3.9.0_1/Frameworks/Python.framework/Versions/3.9/include/python3.9 -c'

clang: _configtest.c

_configtest.c:8:12: error: use of undeclared identifier 'HAVE_DECL_SIGNBIT'