🤖 LLM Evolutionary Merge

🤗 Model | 📂 Github | ✍️ Blog | 💡Inspired by Sakana AI

This project aims to optimize model merging by integrating LLMs into evolutionary strategies in a novel way. Instead of using the CMA-ES approach, the goal is to improve model optimization by leveraging the search capabilities of LLMs to explore the parameter space more efficiently and adjust the search scope based on high-performing solutions.
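As a rough illustration of the idea above, here is a minimal sketch of an evolutionary loop in which a proposer suggests new merge weights from the best solutions found so far. Everything in it is an assumption made for illustration: `random_proposer` is a hypothetical stand-in for the LLM call (a real proposer would presumably receive the top solutions as text in a prompt), and `toy_fitness` replaces the benchmark evaluation of a merged model. It is not the project's actual implementation.

```python
import random
from typing import Callable, List, Tuple

Candidate = List[float]
History = List[Tuple[float, Candidate]]  # (score, weights), best first
Proposer = Callable[[History, int, random.Random], Candidate]


def random_proposer(history: History, n_weights: int, rng: random.Random) -> Candidate:
    """Hypothetical stand-in for the LLM: samples near the top solutions."""
    if not history:
        # No feedback yet, so explore the whole [0, 1] range.
        return [rng.uniform(0.0, 1.0) for _ in range(n_weights)]
    top = [weights for _, weights in history]
    candidate = []
    for i in range(n_weights):
        lo = min(w[i] for w in top)
        hi = max(w[i] for w in top)
        # Search scope follows the spread of the high-performing solutions.
        margin = 0.1 + 0.5 * (hi - lo)
        value = rng.uniform(lo - margin, hi + margin)
        candidate.append(min(1.0, max(0.0, value)))
    return candidate


def evolve(
    fitness: Callable[[Candidate], float],
    proposer: Proposer,
    n_weights: int,
    generations: int = 30,
    population: int = 8,
    keep: int = 4,
    seed: int = 0,
) -> Tuple[float, Candidate]:
    """Keep the `keep` best candidates and let the proposer build on them."""
    rng = random.Random(seed)
    elite: History = []
    for _ in range(generations):
        proposals = [proposer(elite, n_weights, rng) for _ in range(population)]
        scored = [(fitness(weights), weights) for weights in proposals]
        elite = sorted(elite + scored, key=lambda pair: pair[0], reverse=True)[:keep]
    return elite[0]


def toy_fitness(weights: Candidate) -> float:
    """Toy objective: negative squared distance to an arbitrary target."""
    target = [0.9, 0.3, 0.6, 0.4]
    return -sum((w - t) ** 2 for w, t in zip(weights, target))


best_score, best_weights = evolve(toy_fitness, random_proposer, n_weights=4)
print(best_score, best_weights)
```

Swapping `random_proposer` for a function that formats `history` into a prompt and parses the model's reply would keep the rest of the loop unchanged, which is the main reason the proposer is passed in as a callable here.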

Currently, the project supports optimization only within the Parameter Space, but I plan to extend its functionality to enable merging and optimization in the Data Flow Space as well. This will further enhance model merging by optimizing the interaction between data flow and parameters.

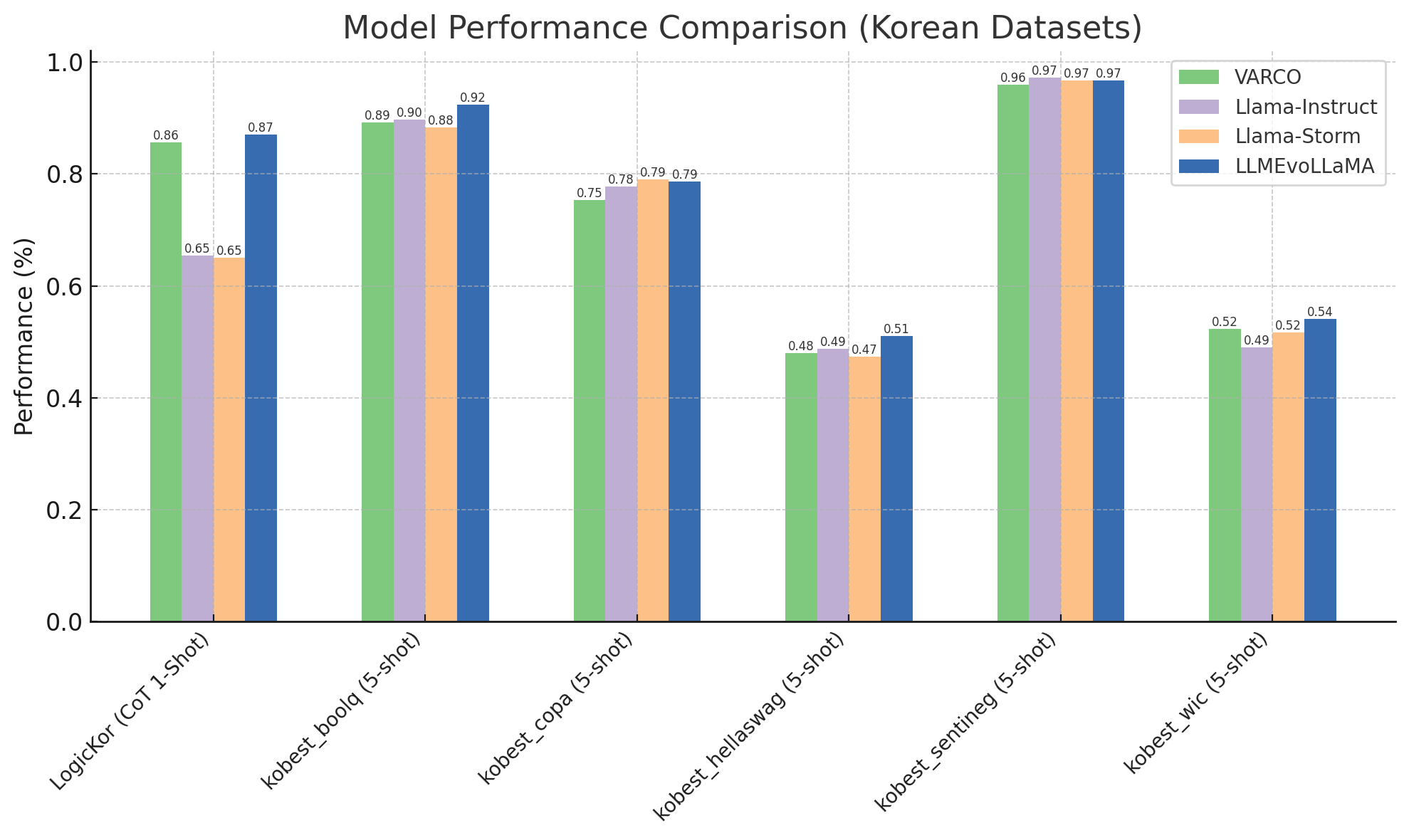

Performance

I focused on creating a high-performing Korean model solely through merging, without additional model training.

Merging Recipe

    base_model: meta-llama/Llama-3.1-8B
    dtype: bfloat16
    merge_method: task_arithmetic
    allow_negative_weights: true
    parameters:
      int8_mask: 1.0
      normalize: 1.0
    slices:
    - sources:
      - layer_range: [0, 2]
        model: NCSOFT/Llama-VARCO-8B-Instruct
        parameters:
          weight: 1
      - layer_range: [0, 2]
        model: akjindal53244/Llama-3.1-Storm-8B
        parameters:
          weight: 0.3475802891062396
      - layer_range: [0, 2]
        model: meta-llama/Llama-3.1-8B
    - sources:
      - layer_range: [2, 4]
        model: NCSOFT/Llama-VARCO-8B-Instruct
        parameters:
          weight: 0.8971381657317269
      - layer_range: [2, 4]
        model: akjindal53244/Llama-3.1-Storm-8B
        parameters:
          weight: 0.45369921781118544
      - layer_range: [2, 4]
        model: meta-llama/Llama-3.1-8B
    - sources:
      - layer_range: [4, 6]
        model: NCSOFT/Llama-VARCO-8B-Instruct
        parameters:
          weight: 0.5430828084884667
      - layer_range: [4, 6]
        model: akjindal53244/Llama-3.1-Storm-8B
        parameters:
          weight: 0.2834723715836387
      - layer_range: [4, 6]
        model: meta-llama/Llama-3.1-8B
    - sources:
      - layer_range: [6, 8]
        model: NCSOFT/Llama-VARCO-8B-Instruct
        parameters:
          weight: 0.419043948030593
      - layer_range: [6, 8]
        model: akjindal53244/Llama-3.1-Storm-8B
        parameters:
          weight: 0.3705268601566145
      - layer_range: [6, 8]
        model: meta-llama/Llama-3.1-8B
    - sources:
      - layer_range: [8, 10]
        model: NCSOFT/Llama-VARCO-8B-Instruct
        parameters:
          weight: 0.3813333860404775
      - layer_range: [8, 10]
        model: akjindal53244/Llama-3.1-Storm-8B
        parameters:
          weight: 0.7634501436288518
      - layer_range: [8, 10]
        model: meta-llama/Llama-3.1-8B
    - sources:
      - layer_range: [10, 12]
        model: NCSOFT/Llama-VARCO-8B-Instruct
        parameters:
          weight: 0.49134830660275863
      - layer_range: [10, 12]
        model: akjindal53244/Llama-3.1-Storm-8B
        parameters:
          weight: 0.7211994938499454
      - layer_range: [10, 12]
        model: meta-llama/Llama-3.1-8B
    - sources:
      - layer_range: [12, 14]
        model: NCSOFT/Llama-VARCO-8B-Instruct
        parameters:
          weight: 0.9218963071448836
      - layer_range: [12, 14]
        model: akjindal53244/Llama-3.1-Storm-8B
        parameters:
          weight: 0.5117022419864319
      - layer_range: [12, 14]
        model: meta-llama/Llama-3.1-8B
    - sources:
      - layer_range: [14, 16]
        model: NCSOFT/Llama-VARCO-8B-Instruct
        parameters:
          weight: 0.8238938467581831
      - layer_range: [14, 16]
        model: akjindal53244/Llama-3.1-Storm-8B
        parameters:
          weight: 0.851712316016478
      - layer_range: [14, 16]
        model: meta-llama/Llama-3.1-8B
    - sources:
      - layer_range: [16, 18]
        model: NCSOFT/Llama-VARCO-8B-Instruct
        parameters:
          weight: 0.3543028846914006
      - layer_range: [16, 18]
        model: akjindal53244/Llama-3.1-Storm-8B
        parameters:
          weight: 0.6864368345788241
      - layer_range: [16, 18]
        model: meta-llama/Llama-3.1-8B
    - sources:
      - layer_range: [18, 20]
        model: NCSOFT/Llama-VARCO-8B-Instruct
        parameters:
          weight: 0.9189961100847883
      - layer_range: [18, 20]
        model: akjindal53244/Llama-3.1-Storm-8B
        parameters:
          weight: 0.5800251781306379
      - layer_range: [18, 20]
        model: meta-llama/Llama-3.1-8B
    - sources:
      - layer_range: [20, 22]
        model: NCSOFT/Llama-VARCO-8B-Instruct
        parameters:
          weight: 0.9281691677008521
      - layer_range: [20, 22]
        model: akjindal53244/Llama-3.1-Storm-8B
        parameters:
          weight: 0.5356892784211416
      - layer_range: [20, 22]
        model: meta-llama/Llama-3.1-8B
    - sources:
      - layer_range: [22, 24]
        model: NCSOFT/Llama-VARCO-8B-Instruct
        parameters:
          weight: 0.839268407952539
      - layer_range: [22, 24]
        model: akjindal53244/Llama-3.1-Storm-8B
        parameters:
          weight: 0.5082186376599986
      - layer_range: [22, 24]
        model: meta-llama/Llama-3.1-8B
    - sources:
      - layer_range: [24, 26]
        model: NCSOFT/Llama-VARCO-8B-Instruct
        parameters:
          weight: 0.6241902192095534
      - layer_range: [24, 26]
        model: akjindal53244/Llama-3.1-Storm-8B
        parameters:
          weight: 0.2945221540685877
      - layer_range: [24, 26]
        model: meta-llama/Llama-3.1-8B
    - sources:
      - layer_range: [26, 28]
        model: NCSOFT/Llama-VARCO-8B-Instruct
        parameters:
          weight: 0.7030728026501202
      - layer_range: [26, 28]
        model: akjindal53244/Llama-3.1-Storm-8B
        parameters:
          weight: 0.2350478509634181
      - layer_range: [26, 28]
        model: meta-llama/Llama-3.1-8B
    - sources:
      - layer_range: [28, 30]
        model: NCSOFT/Llama-VARCO-8B-Instruct
        parameters:
          weight: 0.2590342230366074
      - layer_range: [28, 30]
        model: akjindal53244/Llama-3.1-Storm-8B
        parameters:
          weight: 0.006083182855312869
      - layer_range: [28, 30]
        model: meta-llama/Llama-3.1-8B
    - sources:
      - layer_range: [30, 32]
        model: NCSOFT/Llama-VARCO-8B-Instruct
        parameters:
          weight: 1
      - layer_range: [30, 32]
        model: akjindal53244/Llama-3.1-Storm-8B
        parameters:
          weight: 0.234650395825126
      - layer_range: [30, 32]
        model: meta-llama/Llama-3.1-8B
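To make the `task_arithmetic` merge method in the recipe more concrete, the sketch below applies it to a single toy tensor: each fine-tuned model contributes its difference from the base model (its task vector), scaled by its weight. The tensors are made-up values, and the handling of `normalize` (dividing the combined delta by the sum of the weights) is my assumption about how the merge tool interprets that option, so please check the tool's documentation before relying on it.

```python
from typing import Dict, List

Tensor = List[float]


def task_arithmetic(
    base: Tensor,
    finetuned: Dict[str, Tensor],
    weights: Dict[str, float],
    normalize: bool = True,
) -> Tensor:
    """Merge one tensor: base + sum_i w_i * (model_i - base).

    With normalize=True the weights are rescaled to sum to 1
    (an assumption about what the recipe's `normalize` option does).
    """
    total = sum(weights[name] for name in finetuned)
    scale = 1.0 / total if normalize and total != 0 else 1.0
    merged = list(base)
    for name, tensor in finetuned.items():
        w = weights[name] * scale
        for i, (value, base_value) in enumerate(zip(tensor, base)):
            merged[i] += w * (value - base_value)  # add the scaled task vector
    return merged


# Toy values standing in for one parameter tensor of each model.
base = [1.0, 2.0]
models = {"varco": [2.0, 2.0], "storm": [1.0, 4.0]}
# Weights taken from the layer_range [0, 2] slice of the recipe.
slice_weights = {"varco": 1.0, "storm": 0.3475802891062396}

print(task_arithmetic(base, models, slice_weights, normalize=False))
print(task_arithmetic(base, models, slice_weights, normalize=True))
```

The base model appears in each slice without a weight because its task vector is zero by definition, so it only serves as the reference point for the other two models.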

The models used for merging are listed below.

Base Model: meta-llama/Llama-3.1-8B

Model 1: NCSOFT/Llama-VARCO-8B-Instruct

Model 2: akjindal53244/Llama-3.1-Storm-8B

Comparing LLMEvoLLaMA with Source Models on Korean Benchmarks

LogicKor: A benchmark that evaluates various linguistic abilities in Korean, including math, writing, coding, comprehension, grammar, and reasoning skills. (https://lk.instruct.kr/)

KoBest: A benchmark consisting of five natural language understanding tasks designed to test advanced Korean language comprehension. (https://arxiv.org/abs/2204.04541)

Comparing LLMEvoLLaMA with Source Models on English Benchmarks and Total Average

| Model | truthfulqa_mc2 (0-shot acc) | arc_challenge (0-shot acc) | Korean + English Performance (avg) |
|---|---|---|---|
| VARCO | 0.53 | 0.47 | 0.68 |
| Llama-Instruct | 0.53 | 0.52 | 0.66 |
| Llama-Storm | 0.59 | 0.52 | 0.67 |
| LLMEvoLLaMA | 0.57 | 0.50 | 0.71 |

Model tree for fiveflow/LLMEvoLLaMA-3.1-8B-v0.1

Base model

meta-llama/Llama-3.1-8B