license: mit

language:

- en

library_name: transformers

pipeline_tag: image-to-text

tags:

- video-to-text

- video-captioning

- image-to-text

- image-captioning

- visual-question-answering

- blip-2

Model Card for EILEV BLIP-2-Flan-T5-xl

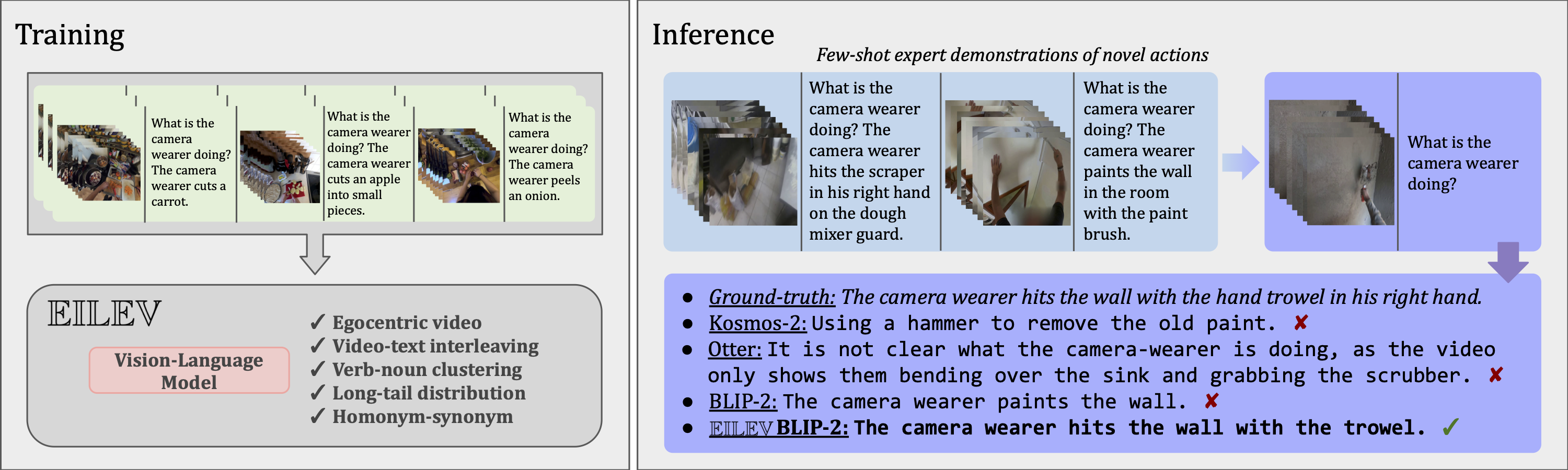

This model is Salesforce/blip2-flan-t5-xl trained with EILEV, a novel training method that elicits in-context learning in vision-language models (VLMs) for videos without requiring massive, naturalistic video datasets.

Model Details

Model Description

EILEV BLIP-2-Flan-T5-xl is a VLM optimized for egocentric video. It can perform in-context learning over videos and texts. It was trained on Ego4D.
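
To make "in-context learning over videos and texts" concrete, the sketch below shows one way an interleaved few-shot input could be laid out: a few (clip, narration) demonstrations followed by a query clip whose text the model is expected to complete. This is only an illustration. The `VideoClip` and `Text` classes, the `build_in_context_sequence` helper, the file names, and the question/answer template are all hypothetical and are not taken from the EILEV repository, which remains the reference for the actual input format.

```python
from dataclasses import dataclass
from typing import List, Tuple, Union


@dataclass
class VideoClip:
    """Hypothetical stand-in for a clip; a real pipeline would hold frames."""
    path: str


@dataclass
class Text:
    """A text segment placed between clips."""
    content: str


Segment = Union[VideoClip, Text]


def build_in_context_sequence(
    demonstrations: List[Tuple[VideoClip, str]],
    query_clip: VideoClip,
    question: str = "What is the camera wearer doing?",  # assumed template
) -> List[Segment]:
    """Interleave (clip, narration) demonstrations, then append the query."""
    sequence: List[Segment] = []
    for clip, narration in demonstrations:
        sequence.append(clip)
        sequence.append(Text(f"Question: {question} Answer: {narration}"))
    # The query ends with an open answer slot for the model to fill in.
    sequence.append(query_clip)
    sequence.append(Text(f"Question: {question} Answer:"))
    return sequence


# Two demonstrations followed by one query clip (file names are made up).
demos = [
    (VideoClip("demo_1.mp4"), "The camera wearer cuts an onion."),
    (VideoClip("demo_2.mp4"), "The camera wearer washes a pan."),
]
sequence = build_in_context_sequence(demos, VideoClip("query.mp4"))
```

In this layout the demonstrations supply the task at inference time, so the model can adapt to new actions or narration styles without any weight updates.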

Model Sources

- Repository: https://github.com/yukw777/EILEV

- Paper: https://arxiv.org/abs/2311.17041

- Demo: https://2e09-141-212-106-177.ngrok-free.app

Bias, Risks, and Limitations

EILEV BLIP-2-Flan-T5-xl uses off-the-shelf Flan-T5 as its language model, so it inherits the risks and limitations of Flan-T5:

Language models, including Flan-T5, can potentially be used for language generation in a harmful way, according to Rae et al. (2021). Flan-T5 should not be used directly in any application, without a prior assessment of safety and fairness concerns specific to the application.

EILEV BLIP-2-Flan-T5-xl has not been tested in real-world applications and should not be deployed directly in any application. Researchers should first carefully assess the safety and fairness of the model in relation to the specific context in which it will be deployed.

How to Get Started with the Model

Please check out the official repository: https://github.com/yukw777/EILEV