
These past few months, I've been busy baking a special sort of Croissant 🥐 with an awesome team!

🥐 CroissantLLM is a truly bilingual language model trained on 3 trillion tokens of French and English data. In its size category (under 2B parameters), it is the best model in French, and it also rivals the best monolingual English models!

💾 To train it, we collected, filtered and cleaned huge quantities of permissively licensed French data across various domains (legal, administrative, cultural, scientific) and text modalities (speech transcriptions, movie subtitles, encyclopedias, forums, webpages...).

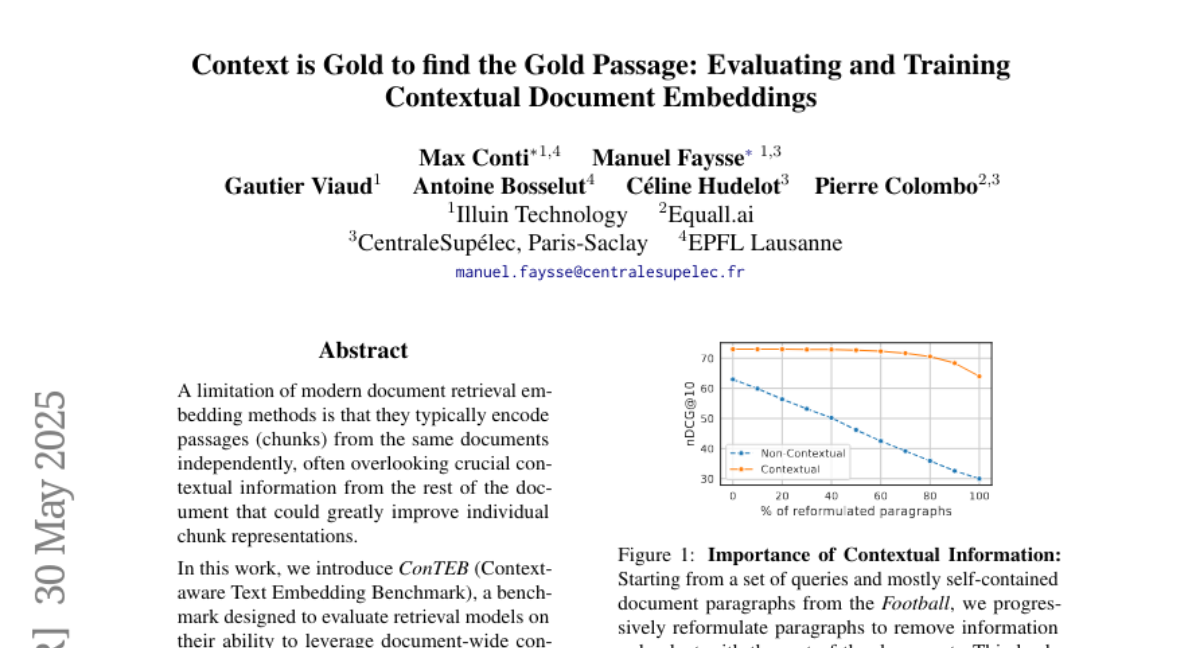

⚖️ Assessing LLM performance is not easy, especially outside of English. To this end, we crafted a novel evaluation benchmark, FrenchBench, which aims to assess the reasoning, factual knowledge, and linguistic capabilities of models in French!

🔎 The best current LLMs are hidden behind a shroud of mystery, trained with undisclosed training data mixes or strategies. We go the opposite way, releasing all of the project's artefacts (model checkpoints, data, training details, evaluation benchmarks...). We satisfy 81% of the Stanford FMTI transparency criteria, far ahead of even most open initiatives!

🧪 Beyond being a powerful industrial resource, our transparent initiative is a stepping stone for many scientific questions! How does teaching a model two languages instead of one split its monolingual ability? Does training on so much French help the model integrate French-centric knowledge and cultural biases? How does the model memorize the training data?

There are many more things to say; for those interested, I recommend checking out:

🗞️ The blogpost: https://huggingface.co/blog/manu/croissant-llm-blog

📖 The 45-page report with lots of gems: https://arxiv.org/abs/2402.00786

🤖 Models, Data, Demo: croissantllm
