license: gpl-3.0

language:

- en

- zh

inference: false

Ziya-LLaMA-13B-v1.1

- Main Page:Fengshenbang

- Github: Fengshenbang-LM

(LLaMA权重的许可证限制,我们无法直接发布完整的模型权重,用户需要参考使用说明进行合并)

姜子牙系列模型

- Ziya-LLaMA-13B-v1.1

- Ziya-LLaMA-13B-v1

- Ziya-LLaMA-7B-Reward

- Ziya-LLaMA-13B-Pretrain-v1

- Ziya-BLIP2-14B-Visual-v1

简介 Brief Introduction

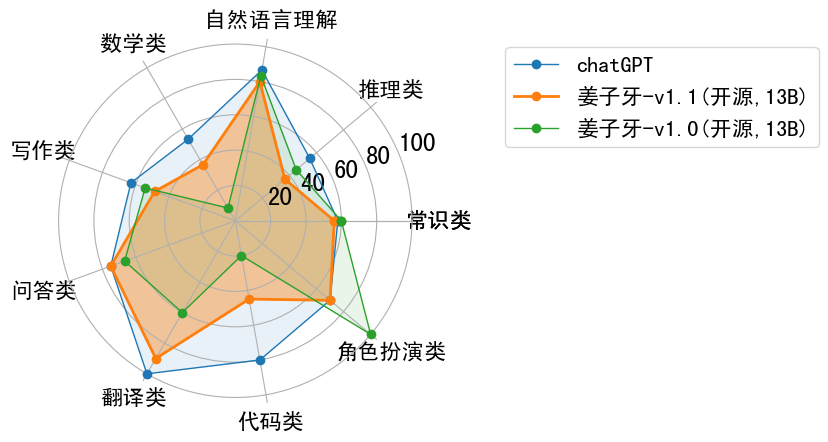

我们对Ziya-LLaMA-13B-v1模型进行继续优化,推出开源版本Ziya-LLaMA-13B-v1.1。通过调整微调数据的比例和采用更优的强化学习策略,本版本在问答准确性、数学能力以及安全性等方面得到了提升,详细能力分析如下图所示。

We have further optimized the Ziya-LLaMA-13B-v1 model and released the open-source version Ziya-LLaMA-13B-v1.1. By adjusting the proportion of fine-tuning data and adopting a better reinforcement learning strategy, this version has achieved improvements in question-answering accuracy, mathematical ability, and safety, as shown in the following figure in detail.

软件依赖

pip install torch==1.12.1 tokenizers==0.13.3 git+https://github.com/huggingface/transformers

使用 Usage

请参考Ziya-LLaMA-13B-v1的使用说明。

注意:合并后默认会生成3个.bin文件,md5值依次为59194d10b1553d66131d8717c9ef03d6、cc14eebe2408ddfe06b727b4a76e86bb、4a8495d64aa06aee96b5a1cc8cc55fa7。

Please refer to the usage for Ziya-LLaMA-13B-v1.

Note: After merging, three .bin files will be generated by default, with MD5 values of 59194d10b1553d66131d8717c9ef03d6, cc14eebe2408ddfe06b727b4a76e86bb, and 4a8495d64aa06aee96b5a1cc8cc55fa7, respectively.

微调示例 Finetune Example

Refer to ziya_finetune

推理量化示例 Inference & Quantization Example

Refer to ziya_inference

引用 Citation

如果您在您的工作中使用了我们的模型,可以引用我们的论文:

If you are using the resource for your work, please cite the our paper:

@article{fengshenbang,

author = {Jiaxing Zhang and Ruyi Gan and Junjie Wang and Yuxiang Zhang and Lin Zhang and Ping Yang and Xinyu Gao and Ziwei Wu and Xiaoqun Dong and Junqing He and Jianheng Zhuo and Qi Yang and Yongfeng Huang and Xiayu Li and Yanghan Wu and Junyu Lu and Xinyu Zhu and Weifeng Chen and Ting Han and Kunhao Pan and Rui Wang and Hao Wang and Xiaojun Wu and Zhongshen Zeng and Chongpei Chen},

title = {Fengshenbang 1.0: Being the Foundation of Chinese Cognitive Intelligence},

journal = {CoRR},

volume = {abs/2209.02970},

year = {2022}

}

You can also cite our website:

欢迎引用我们的网站:

@misc{Fengshenbang-LM,

title={Fengshenbang-LM},

author={IDEA-CCNL},

year={2021},

howpublished={\url{https://github.com/IDEA-CCNL/Fengshenbang-LM}},

}