# Aranizer | Arabic Tokenizer

Aranizer is a SentencePiece-based tokenizer for Arabic, designed for efficient and versatile tokenization. It has a vocabulary of 32,000 tokens and a fertility score of 1.803, with a reported total of 1,387,929 tokens, and is suitable for a wide range of NLP tasks.

## Features

- Tokenizer Name: Aranizer

- Type: SentencePiece tokenizer

- Vocabulary Size: 32,000

- Total Number of Tokens: 1,387,929

- Fertility Score: 1.803

- Diacritization: Supports Arabic diacritization
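To make the fertility figure above concrete, here is a minimal sketch of how such a score can be computed. It assumes fertility means the average number of tokens per whitespace-separated word; the exact definition and evaluation text behind the reported 1.803 are not stated here. The `fertility` helper and the `toy_tokenize` function are hypothetical and are not part of Aranizer.

```python
from typing import Callable, Iterable, List


def fertility(texts: Iterable[str], tokenize: Callable[[str], List[str]]) -> float:
    """Average number of tokens produced per whitespace-separated word.

    Assumption: words are delimited by whitespace, which may differ from
    the word segmentation used for the reported score.
    """
    total_tokens = 0
    total_words = 0
    for text in texts:
        total_tokens += len(tokenize(text))
        total_words += len(text.split())
    if total_words == 0:
        raise ValueError("no words found in the input texts")
    return total_tokens / total_words


# Toy stand-in tokenizer (hypothetical): cuts each word into chunks of
# at most three characters, so the sketch runs without downloading a model.
def toy_tokenize(text: str) -> List[str]:
    return [word[i:i + 3] for word in text.split() for i in range(0, len(word), 3)]


sample = ["اكتب النص العربي"]
score = fertility(sample, toy_tokenize)
print(f"Fertility: {score:.3f}")
```

To measure a real tokenizer, pass its `tokenize` method in place of `toy_tokenize`. A lower score means fewer tokens per word.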

Aranizer Collection Achieved State of the Art Arabic Tokenizer

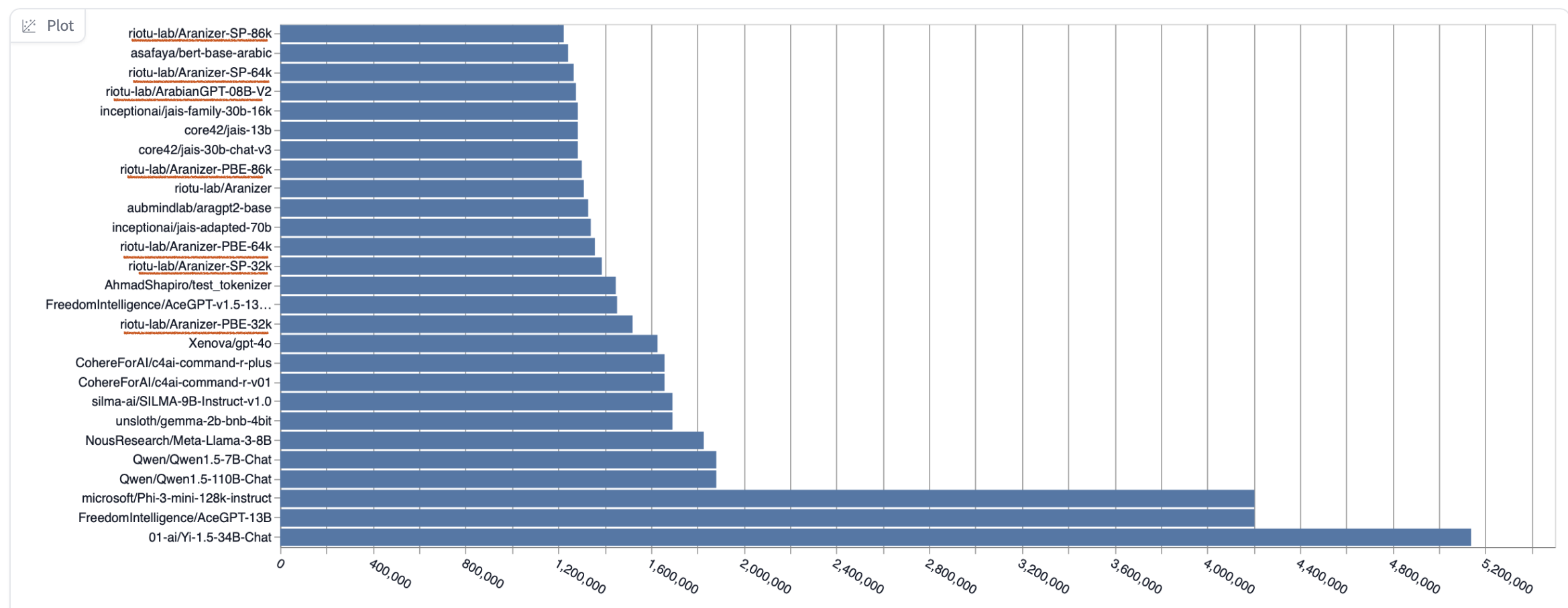

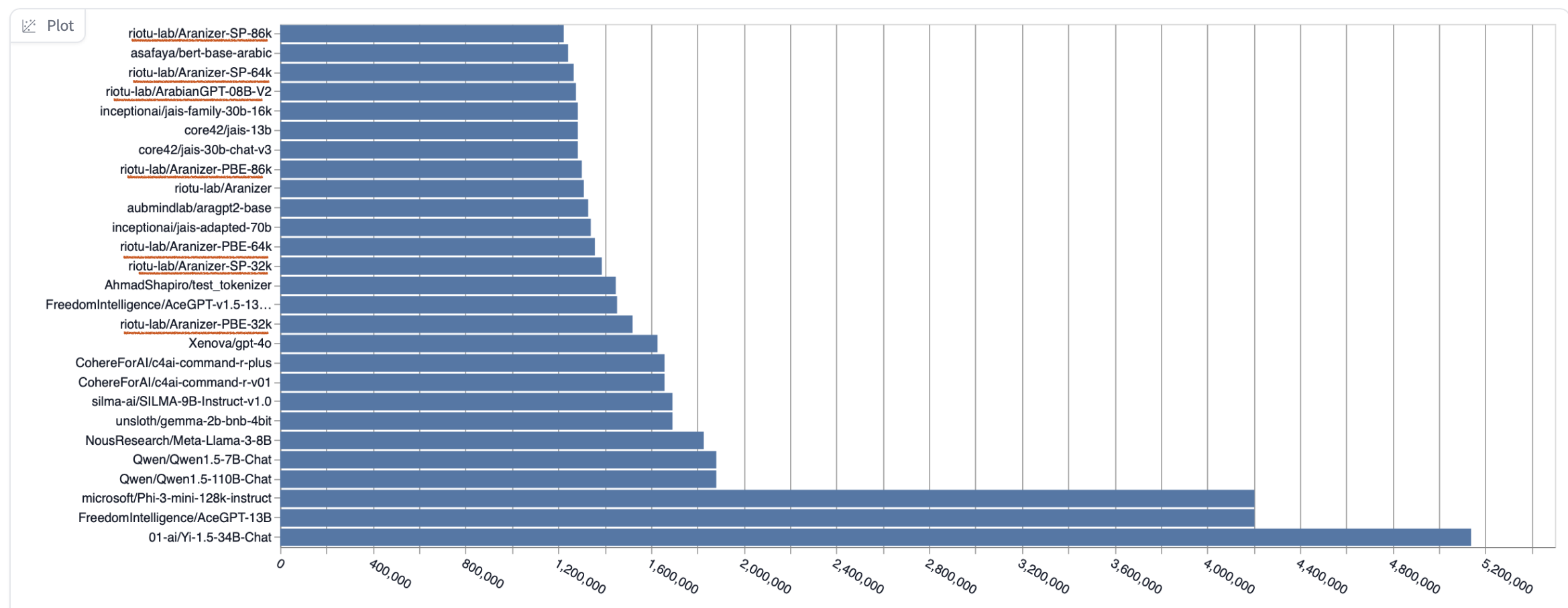

The Aranizer tokenizer has achieved state-of-the-art results on the Arabic Tokenizers Leaderboard on Hugging Face. Below is a screenshot highlighting this achievement:

## How to Use the Aranizer Tokenizer

The Aranizer tokenizer can be easily loaded using the transformers library from Hugging Face. Below is an example of how to load and use the tokenizer in your Python project:

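```python
from transformers import AutoTokenizer

# Load the tokenizer from the Hugging Face Hub
tokenizer = AutoTokenizer.from_pretrained("riotu-lab/Aranizer-SP-32k")

text = "اكتب النص العربي"

# Split the text into subword tokens, then map each token to its vocabulary ID
tokens = tokenizer.tokenize(text)
token_ids = tokenizer.convert_tokens_to_ids(tokens)

print("Tokens:", tokens)
print("Token IDs:", token_ids)
```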
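Since the tokenizer supports diacritized Arabic, it can be useful to compare how the same text tokenizes with and without diacritics. The sketch below is one way to prepare the undiacritized form. The `strip_diacritics` helper is hypothetical and not part of Aranizer or `transformers`. It assumes the marks of interest are the tashkeel characters U+064B to U+0652 plus the dagger alif U+0670.

```python
import re

# Arabic tashkeel marks (fathatan through sukun) plus the dagger alif.
# Assumption: this range covers the diacritics relevant to the comparison.
_DIACRITICS = re.compile("[\u064B-\u0652\u0670]")


def strip_diacritics(text: str) -> str:
    """Return the text with Arabic diacritic marks removed."""
    return _DIACRITICS.sub("", text)


# The word "kataba" written with a fatha on each of its three letters.
diacritized = "\u0643\u064E\u062A\u064E\u0628\u064E"
plain = strip_diacritics(diacritized)

print("Diacritized:", diacritized)
print("Plain:", plain)
```

Passing both `diacritized` and `plain` to `tokenizer.tokenize` shows how much diacritics change the token sequence for a given input.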

## Citation

```bibtex
@article{koubaa2024arabiangpt,
  title={ArabianGPT: Native Arabic GPT-based Large Language Model},
  author={Koubaa, Anis and Ammar, Adel and Ghouti, Lahouari and Necar, Omer and Sibaee, Serry},
  year={2024},
  publisher={Preprints}
}
```