
Reward Model Overview

The reward model is trained from the base model google/gemma-2b-it. A 7B version is also available: RM-Gemma-7B.

The training script is available at https://github.com/WeiXiongUST/RLHF-Reward-Modeling .

Model Details

If you have any questions about this reward model, or about reward modeling in general, feel free to email me at wx13@illinois.edu. I would be happy to chat!

Dataset preprocessing

The model is trained on a mixture of the following preference datasets: HH-RLHF, SHP, UltraFeedback, HelpSteer, Capybara, and Orca.

The total number of comparison pairs is about 250K, after applying the following data selection and cleaning strategies:

- HH-RLHF: we use all the base, rejection sampling, and online subsets, but delete the samples whose chosen == rejected, leaving 115547 pairs;

- SHP: we only use the samples with score ratio > 2 and take only one comparison per prompt, leaving 55916 pairs;

- Ultrafeedback: similar to UltraFeedback-Binarized, we use the fine-grained scores instead of the overall one to rank samples. For each prompt, we pair the best sample with a randomly chosen one from the remaining samples. Finally, we delete the selected pairs with equal scores, leaving 62793 pairs;

- HelpSteer: we use the mean of helpfulness and correctness to rank samples. We pair the best sample with a randomly chosen one from the remaining samples. Finally, we delete the selected pairs with equal scores, leaving 8206 pairs;

- Capybara: we delete the pairs whose chosen and rejected samples have the same rating, leaving 7562 pairs;

- Orca: we delete the pairs whose chosen and rejected samples have the same rating, leaving 6405 pairs.
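The "best vs. randomly chosen, then drop ties" rule used for Ultrafeedback and HelpSteer, and the chosen == rejected filter used for HH-RLHF, can be sketched as follows. This is a minimal illustration, not the actual preprocessing code: the function names and the `(text, score)` input layout are assumptions made for this example.

```python
import random


def select_pair(responses, rng):
    """Build one (chosen, rejected) pair from the scored responses to a prompt.

    `responses` is a list of (text, score) tuples (a hypothetical layout).
    Returns None when no valid pair can be formed.
    """
    if len(responses) < 2:
        return None
    # The highest-scoring response is the candidate "chosen" sample.
    best_idx = max(range(len(responses)), key=lambda i: responses[i][1])
    best_text, best_score = responses[best_idx]
    # The "rejected" sample is drawn uniformly from the remaining responses.
    rest = responses[:best_idx] + responses[best_idx + 1:]
    other_text, other_score = rng.choice(rest)
    # Pairs with equal scores carry no preference signal, so they are dropped.
    if best_score == other_score:
        return None
    return {"chosen": best_text, "rejected": other_text}


def drop_identical(pairs):
    """Remove pairs whose chosen and rejected texts are identical."""
    return [p for p in pairs if p["chosen"] != p["rejected"]]


rng = random.Random(0)  # fixed seed so the random choice is reproducible
pair = select_pair([("a", 1.0), ("b", 3.0), ("c", 2.0)], rng)
print(pair["chosen"])  # the best-scored response, "b"
```

For HelpSteer, the score passed in would be the mean of the helpfulness and correctness ratings, as described in the list above.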

Training

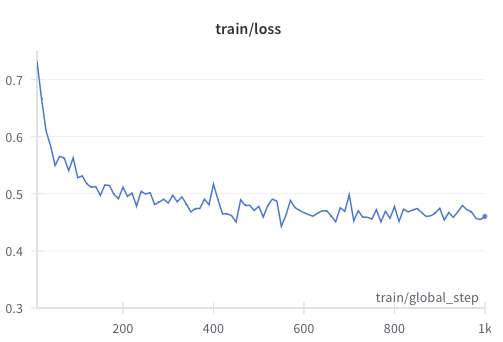

We train the model for one epoch with a learning rate of 1e-5, batch size 256, cosine learning rate decay with a warmup ratio 0.03. We present the training curve as follows.
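To make the schedule concrete, here is a minimal sketch of a cosine learning rate decay with a warmup ratio of 0.03 and a peak rate of 1e-5. It assumes linear warmup from zero and decay all the way to zero, neither of which is stated above; the function name `lr_at_step` is made up for this example.

```python
import math


def lr_at_step(step, total_steps, peak_lr=1e-5, warmup_ratio=0.03):
    """Learning rate at a given optimizer step.

    Assumptions: linear warmup from 0 to peak_lr, then cosine decay to 0.
    """
    warmup_steps = int(total_steps * warmup_ratio)
    if step < warmup_steps:
        return peak_lr * step / max(1, warmup_steps)
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    return peak_lr * 0.5 * (1.0 + math.cos(math.pi * progress))


# One epoch over roughly 250K pairs at batch size 256.
total_steps = math.ceil(250_000 / 256)
for step in (0, 15, 29, 500, total_steps):
    print(step, lr_at_step(step, total_steps))
```

With these numbers, one epoch is about 977 optimizer steps, of which the first 29 are warmup.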

Uses

```python
import torch
from transformers import AutoTokenizer, pipeline

rm_tokenizer = AutoTokenizer.from_pretrained("weqweasdas/RM-Gemma-2B")
device = 0  # accelerator.device

rm_pipe = pipeline(
    "sentiment-analysis",
    model="weqweasdas/RM-Gemma-2B",
    # device="auto",
    device=device,
    tokenizer=rm_tokenizer,
    model_kwargs={"torch_dtype": torch.bfloat16},
)

pipe_kwargs = {
    "return_all_scores": True,
    "function_to_apply": "none",  # return the raw score, with no sigmoid/softmax applied
    "batch_size": 1,
}

chat = [
    {"role": "user", "content": "Hello, how are you?"},
    {"role": "assistant", "content": "I'm doing great. How can I help you today?"},
    {"role": "user", "content": "I'd like to show off how chat templating works!"},
]

# Render the conversation with the chat template, then strip the BOS token
# (presumably because the pipeline's tokenizer adds it again).
test_texts = [
    rm_tokenizer.apply_chat_template(
        chat, tokenize=False, add_generation_prompt=False
    ).replace(rm_tokenizer.bos_token, "")
]
pipe_outputs = rm_pipe(test_texts, **pipe_kwargs)
rewards = [output[0]["score"] for output in pipe_outputs]
```

Results

We collect existing preference datasets and use them as a benchmark to evaluate the resulting reward model.

Note that for the MT-Bench dataset (lmsys/mt_bench_human_judgments), we delete the samples whose comparison result is a tie. The Alpaca data is from Here.

| Model/Test set | HH-RLHF-Helpful | SHP | Helpsteer helpful + correctness | Helpsteer All | MT Bench Human | MT Bench GPT4 | Alpaca Human | Alpaca GPT4 | Alpaca Human-crossed |
|---|---|---|---|---|---|---|---|---|---|
| UltraRM-13B | 0.71 | 0.73 | 0.72 | 0.72 | 0.78 | 0.9 | 0.65 | 0.83 | 0.62 |
| Pair-RM | 0.65 | 0.56 | 0.62 | 0.6 | 0.74 | 0.82 | 0.62 | 0.75 | 0.59 |
| RM-Gemma-2B | 0.68 | 0.73 | 0.68 | 0.72 | 0.77 | 0.87 | 0.63 | 0.78 | 0.59 |
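The metric behind these numbers is not spelled out here. Assuming each entry is pairwise accuracy, i.e. the fraction of test pairs in which the reward model scores the preferred response above the rejected one, it could be computed roughly as follows. The function name and the example rewards are illustrative only.

```python
def pairwise_accuracy(chosen_rewards, rejected_rewards):
    """Fraction of pairs where the chosen response gets the higher reward.

    Assumption: ties in reward count as errors.
    """
    if len(chosen_rewards) != len(rejected_rewards):
        raise ValueError("reward lists must have the same length")
    if not chosen_rewards:
        raise ValueError("at least one pair is required")
    correct = sum(c > r for c, r in zip(chosen_rewards, rejected_rewards))
    return correct / len(chosen_rewards)


# Made-up rewards for four comparison pairs; three are ranked correctly.
print(pairwise_accuracy([1.0, 0.2, 3.0, -1.0], [0.5, 0.4, 1.0, -2.0]))  # 0.75
```

In practice the two reward lists would come from scoring the chosen and rejected conversations of each test pair with the reward model.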

Reference

To be added. The reward model may be readily used for rejection sampling finetuning (RAFT):

```bibtex
@article{dong2023raft,
  title={Raft: Reward ranked finetuning for generative foundation model alignment},
  author={Dong, Hanze and Xiong, Wei and Goyal, Deepanshu and Pan, Rui and Diao, Shizhe and Zhang, Jipeng and Shum, Kashun and Zhang, Tong},
  journal={arXiv preprint arXiv:2304.06767},
  year={2023}
}
```
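As a rough illustration of how a reward model can drive rejection sampling finetuning, the sketch below keeps only the highest-reward candidate per prompt to form a supervised finetuning set. It is a simplified best-of-n selection, not the procedure from the cited paper; `best_of_n`, `build_sft_set`, and `toy_reward` are hypothetical names, and `toy_reward` is a stand-in for a real call to the reward model.

```python
def best_of_n(prompt, candidates, reward_fn):
    """Return the candidate with the highest reward, together with that reward."""
    scored = [(reward_fn(prompt, c), c) for c in candidates]
    best_reward, best = max(scored, key=lambda item: item[0])
    return best, best_reward


def build_sft_set(prompt_to_candidates, reward_fn):
    """Keep the best-scoring response per prompt as a finetuning example."""
    dataset = []
    for prompt, candidates in prompt_to_candidates.items():
        best, _ = best_of_n(prompt, candidates, reward_fn)
        dataset.append({"prompt": prompt, "response": best})
    return dataset


def toy_reward(prompt, response):
    # Placeholder reward (response length) so the example runs on its own;
    # replace with scores from the reward model in real use.
    return float(len(response))


samples = {"p1": ["x", "xx"], "p2": ["yyy", "y"]}
print(build_sft_set(samples, toy_reward))
```

The selected prompt-response pairs would then be used for ordinary supervised finetuning of the policy model.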