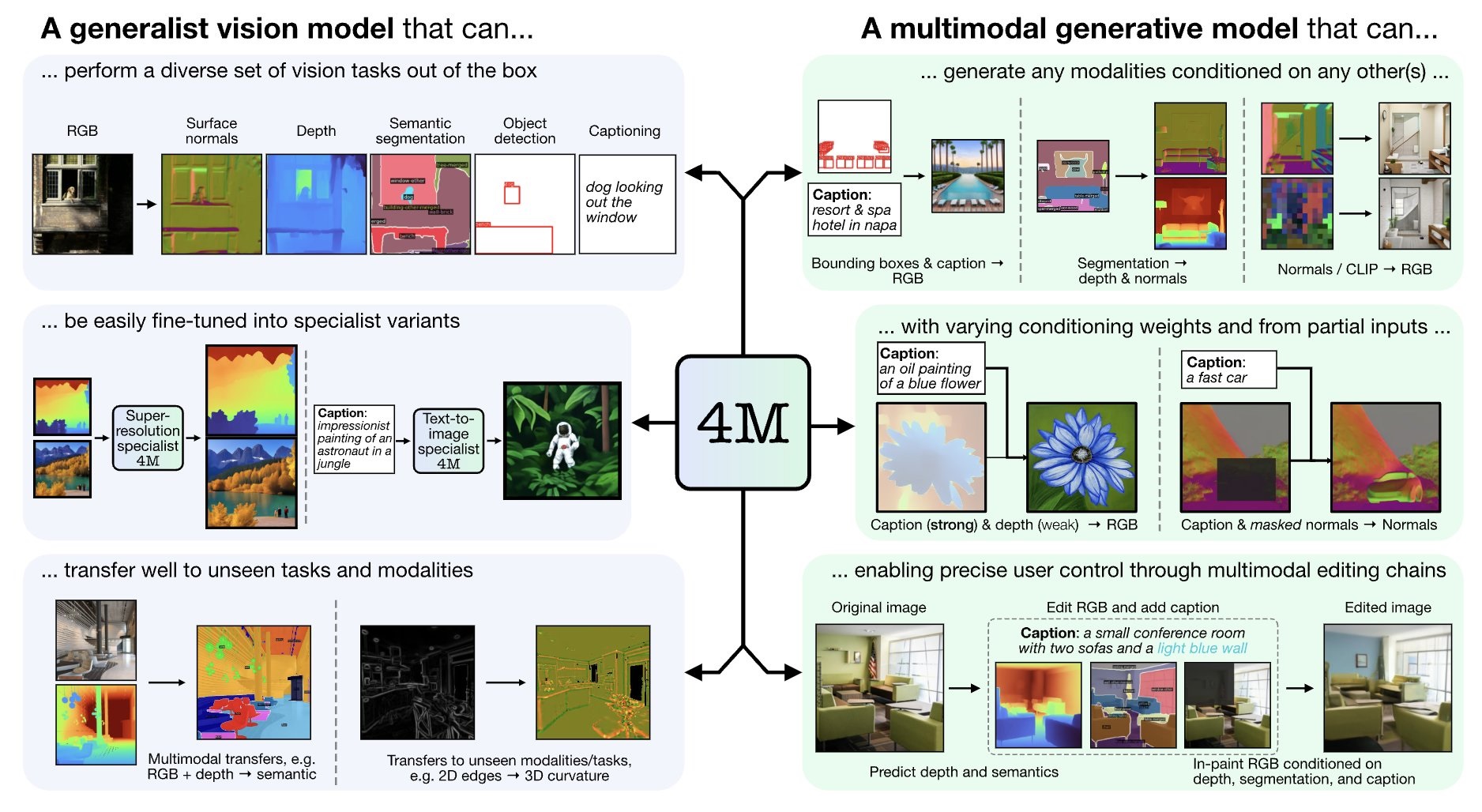

EPFL and Apple just released 4M-21: a single any-to-any model that can do anything from text-to-image generation to generating depth masks! 🙀 Let's unpack 🧶

4M is a multimodal training framework introduced by Apple and EPFL.

The resulting model takes images and text as input and outputs images and text 🤩

Models | Demo

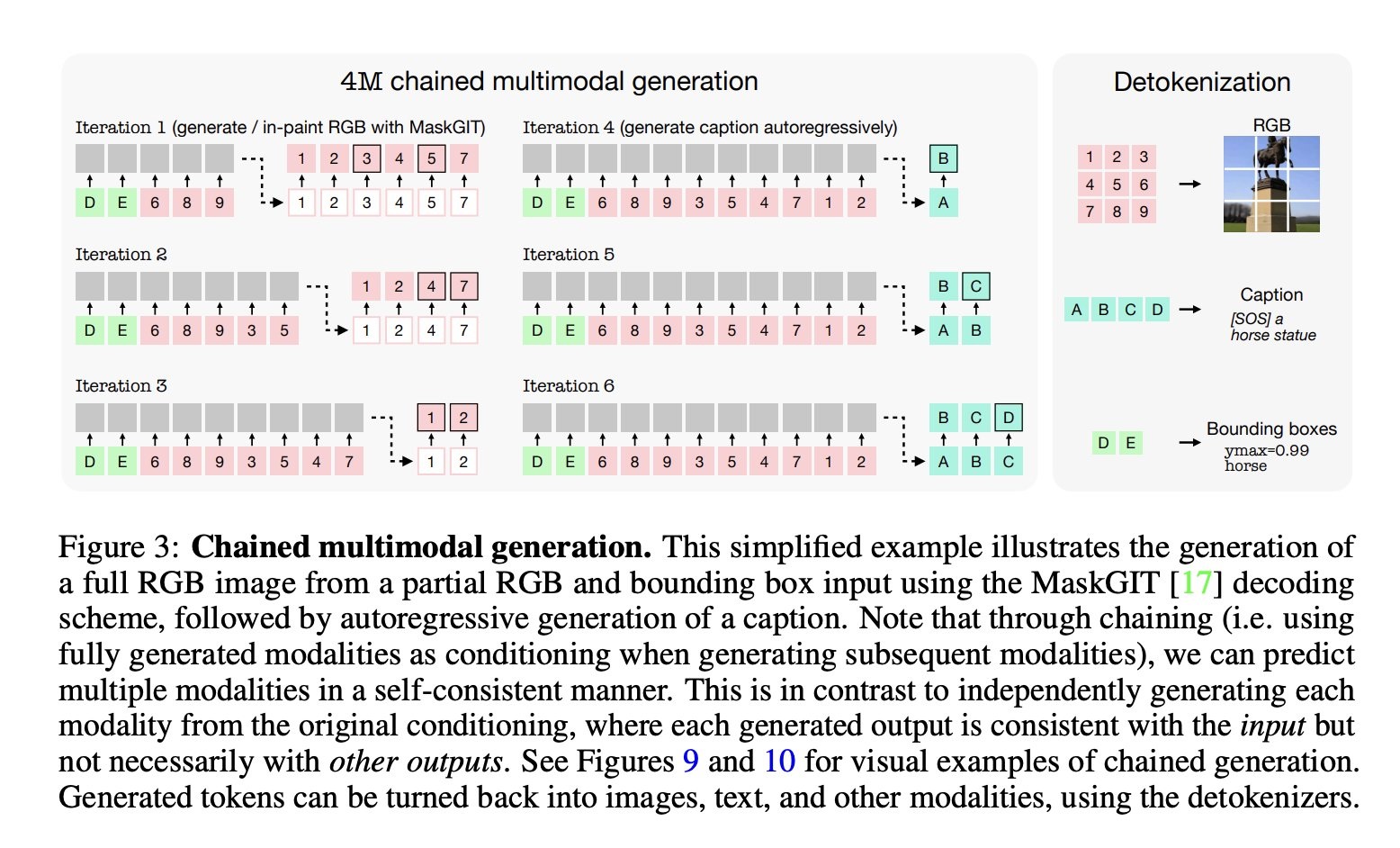

The model consists of a transformer encoder and decoder. The key to its multimodality lies in the input and output data: both inputs and outputs are tokens, and the output tokens are decoded to produce bounding boxes, the pixels of a generated image, captions and more!
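To make the "everything is tokens" idea concrete, here is a minimal toy sketch of routing a mixed sequence of output tokens to per-modality decoders. Everything in it is an assumption made for illustration: the `Token` class, the tiny caption vocabulary, the 100-bin coordinate quantization and the function names are hypothetical and do not come from the 4M codebase, and the "predicted" tokens are hard-coded rather than produced by a model.

```python
from dataclasses import dataclass

# Hypothetical toy setup: none of these names come from the 4M codebase.
CAPTION_VOCAB = ["a", "cat", "on", "sofa"]  # toy text vocabulary
NUM_BINS = 100                              # toy coordinate quantization


@dataclass(frozen=True)
class Token:
    modality: str  # e.g. "caption" or "bbox"
    index: int     # discrete token id within that modality's vocabulary


def decode_caption(tokens):
    """Map caption token ids back to words."""
    return " ".join(CAPTION_VOCAB[t.index] for t in tokens)


def decode_bbox(tokens, width, height):
    """Map four quantized coordinate tokens to pixel coordinates (x0, y0, x1, y1)."""
    x0, y0, x1, y1 = (t.index / NUM_BINS for t in tokens)
    return (round(x0 * width), round(y0 * height),
            round(x1 * width), round(y1 * height))


def decode_outputs(tokens, width, height):
    """Group a mixed output sequence by modality and run each group's decoder."""
    groups = {}
    for t in tokens:
        groups.setdefault(t.modality, []).append(t)
    result = {}
    if "caption" in groups:
        result["caption"] = decode_caption(groups["caption"])
    if "bbox" in groups:
        result["bbox"] = decode_bbox(groups["bbox"], width, height)
    return result


# Hard-coded stand-in for decoder output; a real model would predict these.
predicted = [
    Token("caption", 0), Token("caption", 1), Token("caption", 2),
    Token("caption", 0), Token("caption", 3),
    Token("bbox", 10), Token("bbox", 20), Token("bbox", 60), Token("bbox", 80),
]
outputs = decode_outputs(predicted, width=640, height=480)
print(outputs)
```

The point of the sketch is only that one transformer can serve many tasks when each modality has its own way of turning tokens back into something usable; the real model's tokenizers and decoders are considerably more involved.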

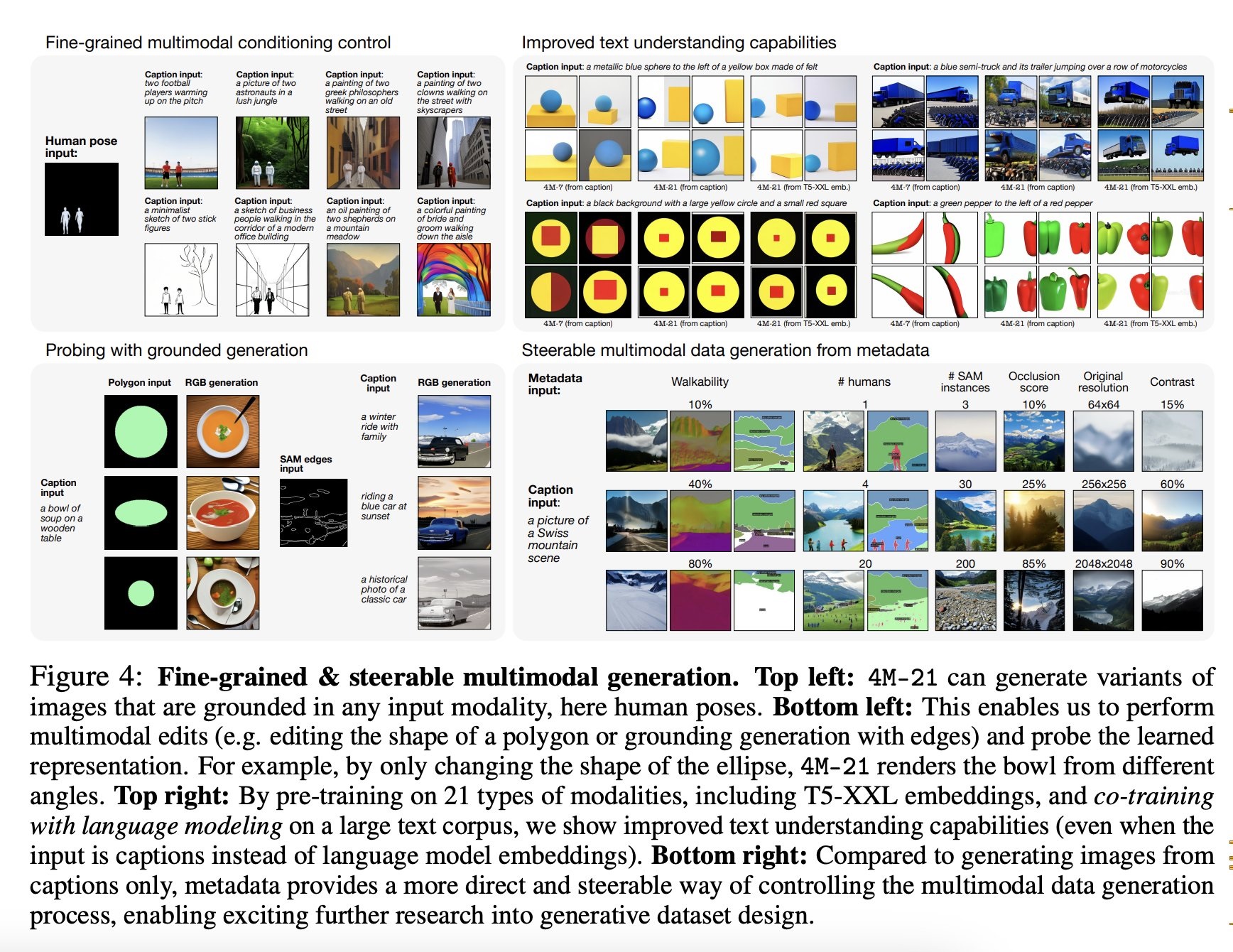

This model also learnt to generate Canny edge maps, SAM edges and other modalities that can be used for steerable text-to-image generation 🖼️

The authors only added image-to-all capabilities for the demo, but you can try to use this model for text-to-image generation as well ☺️

On the project page you can also see the model's text-to-image and steered generation capabilities, with the model's own outputs used as control masks!

Resources:

4M-21: An Any-to-Any Vision Model for Tens of Tasks and Modalities by Roman Bachmann, Oğuzhan Fatih Kar, David Mizrahi, Ali Garjani, Mingfei Gao, David Griffiths, Jiaming Hu, Afshin Dehghan, Amir Zamir (2024) GitHub

Original tweet (June 21, 2024)